Clinical NGS Validation Blueprint: Analytical Standards for Robust Next-Generation Sequencing in Diagnostics and Drug Development

Abstract

This article provides a comprehensive framework for the analytical validation of Next-Generation Sequencing (NGS) in clinical diagnostics and pharmaceutical research. It begins by establishing the foundational principles and regulatory landscape governing clinical NGS. We then detail the core methodologies for designing and executing validation studies, followed by a thorough examination of common technical challenges and optimization strategies for precision, accuracy, and reproducibility. The guide culminates in a comparative analysis of validation standards across different NGS applications and sample types. Targeted at researchers, scientists, and drug development professionals, this resource synthesizes current guidelines and best practices to ensure NGS assays meet the stringent requirements for clinical decision-making and companion diagnostic development.

The Bedrock of Clinical NGS: Understanding Validation Principles, Regulatory Standards, and Key Performance Metrics

Analytical validation (AV) is the systematic process of establishing that a diagnostic test's performance characteristics meet specified criteria for its intended use. For clinical Next-Generation Sequencing (NGS), AV provides the objective evidence that the assay reliably and accurately detects its intended genomic targets. This foundational step is critical for regulatory approval, clinical utility, and ultimately, patient care decisions. This guide compares key AV performance metrics across common NGS assay types, supported by current experimental data.

Core Analytical Validation Metrics: A Comparative Guide

The following table summarizes benchmark performance metrics for three primary clinical NGS assay types, derived from recent literature and industry standards.

Table 1: Comparison of Key AV Metrics Across Clinical NGS Assay Types

| Performance Metric | Targeted Gene Panels (e.g., 50-500 genes) | Whole Exome Sequencing (WES) | Whole Genome Sequencing (WGS) |

|---|---|---|---|

| Accuracy (vs. Orthogonal Method) | >99.5% for SNVs/Indels | >99% for coding SNVs | >99.5% for SNVs; >95% for Indels |

| Precision (Repeatability) | Cohen's Kappa >0.99 | Cohen's Kappa >0.98 | Cohen's Kappa >0.98 |

| Analytical Sensitivity (Recall) | >99% for SNVs at 5% VAF; >95% for Indels | >98% for SNVs at 10% VAF | >99% for SNVs at 10% VAF |

| Analytical Specificity | >99.9% for SNVs/Indels | >99.9% for SNVs | >99.9% for SNVs |

| Limit of Detection (LOD) | 1-5% Variant Allele Frequency (VAF) | 5-10% Variant Allele Frequency (VAF) | 5-10% Variant Allele Frequency (VAF) |

| Reproducibility (Inter-run, Inter-operator) | >98% Concordance | >95% Concordance | >95% Concordance |

Data synthesized from recent CAP/CLIA validation studies and published guidelines (e.g., AMP/ASCO/CAP 2023, SEQC2 consortium 2021).

Experimental Protocols for Key AV Studies

1. Protocol for Determining Accuracy & Limit of Detection (LOD)

- Reference Materials: Use commercially available, cell line-derived reference standards (e.g., from Horizon Discovery, Seracare) with predefined variant calls at known allelic frequencies.

- Experimental Design: Sequence each reference standard across a minimum of three independent runs. Include replicates at varying input DNA concentrations (e.g., 50ng, 100ng, 200ng) and dilution levels to assess low-VAF performance.

- Data Analysis: Compare called variants to the reference truth set. Calculate sensitivity (true positive rate) at each VAF tier (e.g., 1%, 5%, 10%, 20%). The LOD is defined as the lowest VAF at which sensitivity is ≥95% with 95% confidence.
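The LOD rule above (sensitivity ≥95% with 95% confidence) can be sketched as follows. This is a minimal illustration, not a prescribed method: it assumes a one-sided Wilson score lower bound as the confidence method, and the function names and detection counts are hypothetical.

```python
import math

def wilson_lower_bound(detected: int, total: int, z: float = 1.645) -> float:
    """One-sided Wilson score lower bound for a detection rate (z=1.645 is ~95%)."""
    if total == 0:
        return 0.0
    p = detected / total
    denom = 1 + z**2 / total
    centre = p + z**2 / (2 * total)
    margin = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2))
    return (centre - margin) / denom

def estimate_lod(tiers, threshold: float = 0.95):
    """Walk VAF tiers from high to low; the LOD is the last tier that still passes."""
    lod = None
    for vaf in sorted(tiers, reverse=True):
        detected, total = tiers[vaf]
        if wilson_lower_bound(detected, total) >= threshold:
            lod = vaf
        else:
            break  # stop at the first failing tier so the LOD is never below a failure
    return lod

# Hypothetical counts: VAF tier (%) -> (variants detected, variants expected)
tiers = {20: (60, 60), 10: (60, 60), 5: (60, 60), 1: (48, 60)}
print(estimate_lod(tiers))
```

With a lower-bound criterion, even a perfect detection record needs enough observations to pass (here, more than about 50), which is one reason replicate counts matter when planning the study.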

2. Protocol for Assessing Precision (Repeatability & Reproducibility)

- Sample Set: Utilize 3-5 clinical samples encompassing a range of variant types (SNV, Indel, CNV).

- Experimental Design:

- Repeatability (Intra-run): Process each sample in triplicate within a single sequencing run.

- Reproducibility (Inter-run): Process each sample across three different runs, on different days, by different operators, and using different reagent lots.

- Data Analysis: Calculate percent positive agreement (for detected variants) and percent negative agreement (for wild-type positions). Use metrics like Cohen's Kappa to measure concordance beyond chance.
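As a rough sketch of the agreement metrics named above, the snippet below computes percent positive agreement, percent negative agreement, and Cohen's Kappa for two replicates. It assumes each replicate is reduced to a list of per-position booleans (variant called or not) and treats replicate A as the comparator; the function name and example calls are hypothetical.

```python
def agreement_metrics(calls_a, calls_b):
    """Compare two replicates' per-position calls (True = variant called)."""
    if len(calls_a) != len(calls_b) or not calls_a:
        raise ValueError("call vectors must be non-empty and the same length")
    n = len(calls_a)
    pairs = list(zip(calls_a, calls_b))
    both_pos = sum(1 for a, b in pairs if a and b)
    both_neg = sum(1 for a, b in pairs if not a and not b)
    a_only = sum(1 for a, b in pairs if a and not b)
    b_only = n - both_pos - both_neg - a_only
    nan = float("nan")
    # PPA/NPA are computed relative to replicate A
    ppa = both_pos / (both_pos + a_only) if (both_pos + a_only) else nan
    npa = both_neg / (both_neg + b_only) if (both_neg + b_only) else nan
    observed = (both_pos + both_neg) / n
    p_a = (both_pos + a_only) / n   # fraction of positions positive in A
    p_b = (both_pos + b_only) / n   # fraction of positions positive in B
    expected = p_a * p_b + (1 - p_a) * (1 - p_b)  # agreement expected by chance
    kappa = (observed - expected) / (1 - expected) if expected < 1 else nan
    return {"ppa": ppa, "npa": npa, "kappa": kappa}

# Hypothetical example: 20 positions, one discordant call in each direction
run_a = [True] * 8 + [False] * 12
run_b = [True] * 7 + [False] + [False] * 11 + [True]
metrics = agreement_metrics(run_a, run_b)
print(metrics)
```

Kappa is reported on a scale where 1.0 is perfect agreement, so acceptance thresholds are naturally written as values such as 0.98 rather than as percentages.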

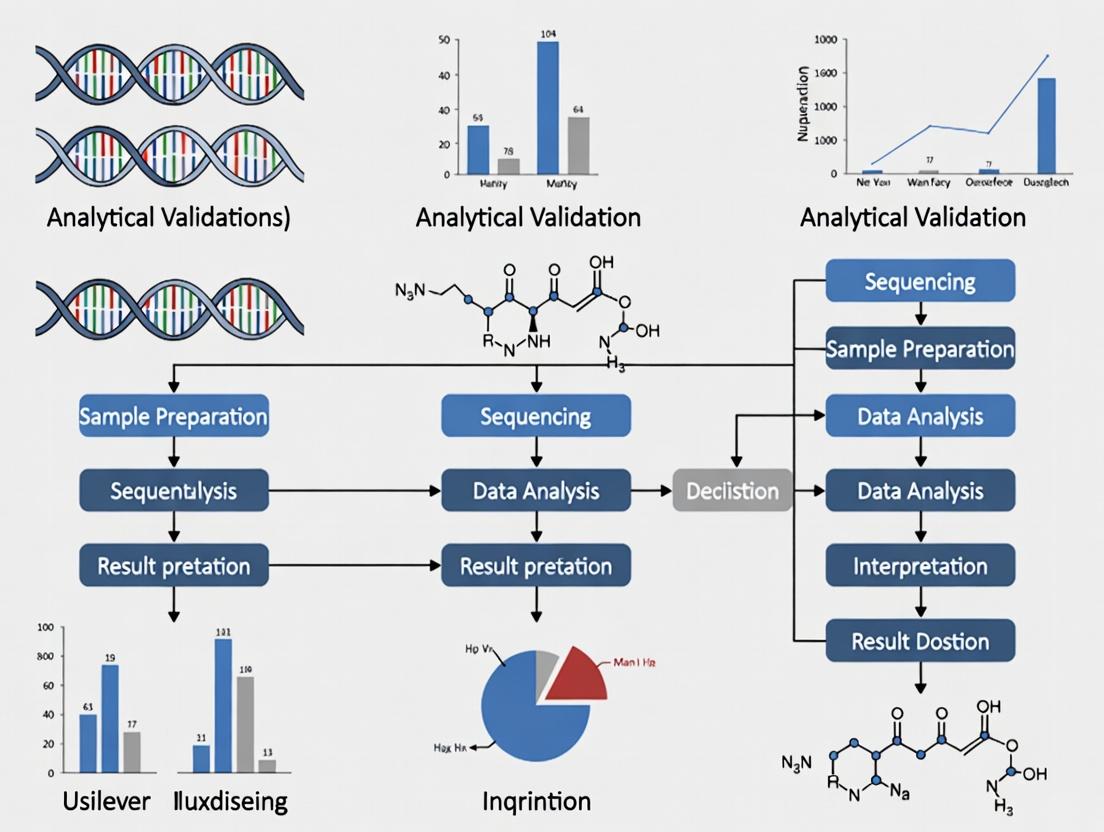

Diagram 1: Clinical NGS Analytical Validation Workflow

Diagram 2: Common Oncogenic Pathway Targets in NGS

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for NGS Analytical Validation

| Item | Function in AV | Example Product Types |

|---|---|---|

| Cell Line-Derived Reference Standards | Provide ground truth for accuracy, sensitivity, and LOD studies. Contain predefined variants at known VAFs. | Horizon Discovery HDx and Tru-Q; Seracare Seraseq; NIST RM 8391. |

| Formalin-Fixed, Paraffin-Embedded (FFPE) Controls | Assess assay performance on degraded clinical samples, evaluating extraction efficiency and library prep robustness. | Commercially available FFPE curls with characterized variants. |

| PCR-Free Library Prep Kits | Minimize amplification bias for WGS/WES, critical for accurate variant calling and CNV analysis. | Illumina DNA PCR-Free Prep; Roche KAPA HyperPlus. |

| Hybrid Capture-Based Target Enrichment Kits | Enable high-depth sequencing of gene panels and exomes. Performance impacts uniformity and off-target rates. | IDT xGen; Roche NimbleGen SeqCap; Agilent SureSelect. |

| Bioinformatics Pipeline Software | The "dry-lab" component. Must be validated for alignment, variant calling, and filtering. Critical for specificity. | GATK; DRAGEN; custom pipelines (e.g., snakemake/Nextflow). |

| Orthogonal Validation Kits | Required for confirming a subset of NGS findings via an independent method (e.g., Sanger, digital PCR). | Thermo Fisher Sanger Sequencing; Bio-Rad ddPCR. |

Navigating the regulatory frameworks is paramount to the analytical validation of Next-Generation Sequencing (NGS) for clinical diagnostic use. This guide compares the requirements and performance benchmarks set under four frameworks: the College of American Pathologists (CAP)/Clinical Laboratory Improvement Amendments (CLIA) framework, the U.S. Food and Drug Administration (FDA), the European Medicines Agency (EMA), and the EU In Vitro Diagnostic Regulation (IVDR). The focus is on objective comparison of the validation performance parameters required for NGS-based clinical assays.

Regulatory Framework Comparison for NGS Assay Validation

This section compares the core analytical validation parameters as stipulated by different regulatory guidelines for a clinical NGS assay, such as a pan-cancer tumor profiling panel.

Table 1: Comparative Analytical Validation Requirements for NGS Assays

| Validation Parameter | CAP/CLIA (Laboratory-Developed Test) | FDA (Premarket Approval / 510(k)) | EMA (Companion Diagnostic) | EU IVDR (Class C High-Risk Dx) |

|---|---|---|---|---|

| Accuracy | ≥95% concordance with orthogonal method | Statistical superiority or non-inferiority vs. predicate device | Demonstrated concordance with validated reference method | ≥99% Positive Percent Agreement (PPA) with comparator |

| Precision (Repeatability & Reproducibility) | Intra-run & inter-run CV <5% for variant frequency | 95% CI for reproducibility must be within pre-specified bounds | Site-to-site reproducibility data required for centralized testing | Comprehensive reproducibility study under varied conditions |

| Analytical Sensitivity (Limit of Detection) | Define at 95% detection probability; often 5% variant allele frequency (VAF) | Precisely established LoD with 95% confidence; can be as low as 1-2% VAF | Justified based on clinical cut-off; rigorous statistical analysis | Stated as a detection rate at a defined confidence level (e.g., 95%) |

| Analytical Specificity | Assess via in silico analysis & wet-bench cross-reactivity | Inclusivity (all subtypes) & Exclusivity (no cross-reactivity) tested | Focus on potential interferents (e.g., homologous sequences) | Explicit testing for interference and cross-reactivity |

| Reportable Range | Defined for each gene/region sequenced | Full characterization of measuring interval for all targets | Defined for the intended use population and sample types | Comprehensively validated measurement range |

Experimental Protocols for Key Validation Studies

Protocol 1: Determining Limit of Detection (LoD)

Objective: Establish the lowest VAF at which a variant can be reliably detected with ≥95% probability.

Methodology:

- Sample Preparation: Serially dilute a characterized positive control (cell line DNA with known variant) into wild-type genomic DNA to create samples spanning expected LoD (e.g., 5%, 2.5%, 1%, 0.5%).

- Replication: Process each dilution level in a minimum of 20 independent replicates across multiple runs, operators, and instruments.

- NGS Workflow: Perform library preparation, sequencing (to a minimum coverage of 1000x), and bioinformatic analysis using the standard pipeline.

- Data Analysis: For each variant at each dilution, calculate the detection rate. Use probit or logistic regression analysis to model the probability of detection versus input VAF. The LoD is defined as the VAF at which detection probability is 95%.
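The regression step can be illustrated with a small, dependency-free sketch. It fits a two-parameter logistic model of detection probability against log10(VAF) by Newton-Raphson and solves for the VAF at 95% detection. The function names, the log10 transform, and the replicate counts are assumptions for illustration; a validated statistics package would normally be used in practice.

```python
import math

def fit_logistic(levels, iterations=50, tol=1e-10):
    """Fit P(detect) = sigmoid(b0 + b1*log10(VAF)) to (vaf, detected, total) rows."""
    b0, b1 = 0.0, 0.0
    for _ in range(iterations):
        g0 = g1 = h00 = h01 = h11 = 0.0
        for vaf, detected, total in levels:
            x = math.log10(vaf)
            p = 1.0 / (1.0 + math.exp(-(b0 + b1 * x)))
            w = total * p * (1.0 - p)      # binomial variance weight
            r = detected - total * p       # observed minus expected detections
            g0 += r
            g1 += r * x
            h00 += w
            h01 += w * x
            h11 += w * x * x
        det = h00 * h11 - h01 * h01
        if det <= 0:
            raise ValueError("information matrix is singular; check the data")
        d0 = (h11 * g0 - h01 * g1) / det   # Newton step for the intercept
        d1 = (h00 * g1 - h01 * g0) / det   # Newton step for the slope
        b0 += d0
        b1 += d1
        if abs(d0) + abs(d1) < tol:
            break
    return b0, b1

def lod_from_fit(b0, b1, probability=0.95):
    """VAF at which the fitted detection probability equals `probability`."""
    logit = math.log(probability / (1 - probability))
    return 10 ** ((logit - b0) / b1)

# Hypothetical data: (VAF %, replicates detected, replicates tested)
levels = [(0.5, 6, 20), (1.0, 13, 20), (2.5, 19, 20), (5.0, 20, 20)]
b0, b1 = fit_logistic(levels)
lod = lod_from_fit(b0, b1)
print(round(lod, 2))
```

The fit needs at least two dilution levels with partial detection; if every level is either 0% or 100% detected, the model cannot be estimated and the dilution series should be extended.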

Protocol 2: Comprehensive Precision Study

Objective: Evaluate assay repeatability (within-run) and reproducibility (between-run, between-operator, between-day).

Methodology:

- Sample Set: Select at least 3 samples: wild-type, low-positive (near LoD), and moderate-positive.

- Experimental Design: For each sample, perform:

- Repeatability: 10 replicates within a single run by one operator.

- Intermediate Precision: 2 replicates per run, across 5 separate runs, over 5 different days, using 2 different operators.

- Analysis: For each variant/marker, calculate the variant allele frequency (VAF) or read count. Determine the coefficient of variation (%CV) for repeatability and intermediate precision conditions. Acceptance criterion is often <15% CV for VAF.
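A minimal sketch of the %CV calculation follows, assuming the measured VAFs for one variant have already been collected into a list per precision condition. The function name and the replicate values are hypothetical, and the 15% limit is the example criterion quoted above.

```python
import statistics

def percent_cv(values):
    """Coefficient of variation (%) using the sample standard deviation."""
    mean = statistics.mean(values)
    if mean == 0:
        raise ValueError("mean is zero; %CV is undefined")
    return 100 * statistics.stdev(values) / mean

# Hypothetical VAF measurements (%) from 10 within-run replicates of one variant
repeatability_vafs = [5.1, 4.9, 5.0, 5.2, 4.8, 5.0, 5.1, 4.9, 5.0, 5.0]
cv = percent_cv(repeatability_vafs)
print(f"{cv:.2f}% CV, pass={cv < 15}")
```

The same function can be applied to the pooled intermediate-precision measurements; a variance-components analysis would be needed to separate run, day, and operator effects.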

Diagram 1: Regulatory Submission and Review Pathways for Diagnostic Assays

Diagram 2: Key Stages in NGS Analytical Validation

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Materials for NGS Assay Validation

| Item | Function in Validation | Example/Consideration |

|---|---|---|

| Certified Reference Materials (CRMs) | Provide ground truth for accuracy and LoD studies. | Genome in a Bottle (GIAB) standards, Horizon Discovery multiplex reference standards. |

| Cell Line DNA Blends | Enable creation of precise VAF dilutions for precision and LoD. | Commercially available engineered cell lines with known variants. |

| Internal Control Nucleic Acids | Monitor extraction efficiency, amplification, and detect inhibition. | Spiked-in synthetic sequences non-homologous to human genome. |

| FFPE Reference Samples | Validate assay performance on degraded clinical sample types. | Characterized commercial FFPE blocks or well-annotated archival samples. |

| Multiplex PCR or Hybridization Capture Kits | Target enrichment; key variable impacting uniformity and coverage. | Compare performance of different kits for uniformity and off-target rates. |

| NGS Library Quantification Kits | Accurate quantification is critical for pooling and sequencing load. | Use qPCR-based kits over fluorometry for fragment-specific quantification. |

| Bioinformatic Pipeline Software | Variant calling, annotation, and reporting; requires separate validation. | GATK, DRAGEN, or custom pipelines. Must validate against benchmark datasets. |

| Positive & Negative Control Plasmids | Run-level controls for assay functionality and contamination check. | Plasmids containing key target variants and wild-type sequences. |

Core validation parameters form the bedrock of assay reliability in the analytical validation of Next-Generation Sequencing (NGS) for clinical diagnostics. Accuracy, precision, sensitivity, specificity, and reproducibility are the quantifiable pillars that determine an NGS assay's fitness for purpose in guiding patient care and drug development. This comparison guide evaluates the performance of a representative hybrid-capture NGS pan-cancer panel against two common alternative technologies, PCR-based Sanger sequencing and digital PCR (dPCR), using supporting experimental data.

Comparative Performance Analysis

The following table summarizes quantitative data from a validation study comparing the three methodologies across core parameters using a standardized reference material set (e.g., Seraseq FFPE Tumor DNA Reference) containing known variants at defined allelic frequencies.

Table 1: Core Validation Parameter Comparison Across Technologies

| Parameter | Hybrid-Capture NGS Panel (150-gene) | PCR-based Sanger Sequencing | Digital PCR (Single-plex assays) |

|---|---|---|---|

| Accuracy (% Agreement) | 99.7% (for SNVs ≥5% AF) | 100% (for SNVs ≥20% AF) | 99.9% (for known target variants) |

| Precision (Repeatability, %CV) | 3.2% (for variant AF measurement) | Not quantifiable for AF | 1.5% (for copy number ratio) |

| Analytical Sensitivity (Limit of Detection) | 5% Allelic Frequency (for SNVs) | 15-20% Allelic Frequency | 0.1% Allelic Frequency |

| Analytical Specificity | 99.99% (based on negative reference samples) | 99.9% | ~100% (for non-targeted variants) |

| Reproducibility (Inter-run, %CV) | 4.8% (for variant AF) | N/A (largely qualitative) | 2.1% (for target quantification) |

| Multiplexing Capability | High (150 genes simultaneously) | Very Low (single amplicon) | Medium (4-8 plex max) |

AF: Allelic Frequency; SNV: Single Nucleotide Variant; %CV: Percent Coefficient of Variation.

Detailed Experimental Protocols

Protocol 1: Accuracy and Sensitivity Determination

Objective: To determine assay accuracy and limit of detection (sensitivity) using synthetic reference standards.

Methodology:

- Materials: Seraseq FFPE Tumor DNA Reference (containing 14 known SNVs, Indels, CNVs, and fusions at known AFs), NGS panel kit, Sanger sequencing reagents, dPCR assay mix.

- Sample Preparation: The reference material was diluted with wild-type genomic DNA to create a dilution series with variant AFs of 20%, 10%, 5%, 2.5%, 1%, and 0.5%.

- Parallel Testing: Each dilution was processed in triplicate using:

- NGS: Library preparation via hybrid-capture, sequencing on an Illumina NextSeq 550Dx (2x150bp). Data analyzed via FDA-cleared bioinformatics pipeline.

- Sanger: PCR amplification of loci containing known variants, followed by capillary electrophoresis. Traces analyzed by software.

- dPCR: Partitioning of sample into ~20,000 droplets per well with target-specific probes (Bio-Rad QX200). Positive droplets counted for absolute quantification.

- Analysis: Reported variants and their measured AFs (or presence/absence for Sanger) were compared to the expected values from the reference material certificate of analysis to calculate accuracy (positive percent agreement). The lowest AF at which all variants were detected in all replicates defined the LoD.
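The analysis rule above (LoD as the lowest AF at which every variant is detected in every replicate) can be sketched as below. The data layout, function names, and detection flags are hypothetical; the example uses three variants in triplicate rather than the full reference set.

```python
def lod_all_detected(results):
    """Lowest AF such that it, and every higher AF, has all variants detected in all replicates."""
    lod = None
    for af in sorted(results, reverse=True):
        replicates = results[af]
        if replicates and all(all(flags) for flags in replicates):
            lod = af
        else:
            break  # a failure at this level ends the search
    return lod

def positive_percent_agreement(results, af):
    """Percent of expected variant observations that were detected at one AF level."""
    flags = [flag for replicate in results[af] for flag in replicate]
    return 100 * sum(flags) / len(flags)

# Hypothetical detection flags: AF (%) -> replicates -> per-variant detected?
full = [[True, True, True]] * 3
results = {
    20: full, 10: full, 5: full,
    2.5: [[True, True, True], [True, False, True], [True, True, True]],
    1: [[True, False, False], [False, False, True], [True, False, False]],
}
print(lod_all_detected(results), round(positive_percent_agreement(results, 2.5), 1))
```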

Protocol 2: Precision and Reproducibility Assessment

Objective: To evaluate intra-run (repeatability) and inter-run (reproducibility) precision.

Methodology:

- Materials: Three clinically characterized residual FFPE tumor DNA samples with variants across a range of AFs (15%, 7%, 2%).

- Study Design:

- Repeatability: A single operator processed each sample three times in the same NGS run, same dPCR run, and same Sanger sequencing batch.

- Reproducibility: Each sample was processed once in three separate runs/batches on different days by two different operators using the same instruments.

- Analysis: For NGS and dPCR, the measured AF or copy number was recorded. Precision was calculated as the %CV across replicate measurements. For Sanger, a binary (detected/not detected) result was recorded, and precision was assessed as consensus rate.

Protocol 3: Specificity Evaluation

Objective: To determine the assay's ability to avoid false positive calls.

Methodology:

- Materials: 10 commercially available human genomic DNA samples from Coriell Institute certified as wild-type for a panel of clinically relevant genes (e.g., EGFR, KRAS, BRAF, PIK3CA).

- Testing: All samples were processed using the NGS panel, Sanger sequencing for key exons, and dPCR for common hotspot mutations.

- Analysis: Specificity was calculated as: (Number of True Negative Calls / (Number of True Negative Calls + Number of False Positive Calls)) x 100%. Any variant reported in these wild-type samples was investigated as a potential false positive.

Signaling Pathways & Workflow Diagrams

Diagram 1: NGS Validation Workflow & Core Parameter Relationships

Diagram 2: Assay Selection Logic Based on Validation Needs

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for NGS Analytical Validation Studies

| Item | Function in Validation | Example Product/Catalog |

|---|---|---|

| Characterized Reference Standards | Provide ground truth for Accuracy, Sensitivity, and Specificity measurements. Contain known variants at defined allelic fractions. | Seraseq FFPE Tumor DNA, Horizon HDx Multiplex Reference Standards |

| Universal Human Reference DNA | Wild-type control for specificity studies and as diluent for sensitivity studies. | Coriell NA12878, Promega Human Genomic DNA |

| Library Prep & Hybrid-Capture Kit | Enables target enrichment and sequencing library construction for the NGS panel. | Illumina TruSight Oncology 500, Agilent SureSelect XT HS2 |

| Positive & Negative Control Plasmids | Synthetic controls for assay run monitoring and contamination check. | IDT gBlocks, Twist Control Mutant Templates |

| Calibrated dPCR Assays | Orthogonal method for absolute quantification to confirm NGS variant AFs. | Bio-Rad ddPCR Mutation Assays, Thermo Fisher QuantStudio Absolute Q Assays |

| Bioinformatics Pipeline Software | Analyzes raw sequencing data, calls variants, and generates reports. Critical for reproducibility. | Illumina DRAGEN Bio-IT Platform, Sentieon DNASeq |

| Data Analysis & Visualization Tool | For statistical analysis of validation data and generation of summary tables/figures. | RStudio with ggplot2, Python (pandas, SciPy), JMP Statistical Software |

Establishing the Intended Use and Clinical Claims for Your NGS Assay

Defining the Intended Use and precise Clinical Claims is the foundational step in the analytical validation of a clinical diagnostic NGS assay. This guide compares approaches for establishing claims for somatic variant detection assays in oncology, weighing key performance metrics against alternative technologies and other NGS assay designs.

Comparison of NGS Assay Performance with Alternative Platforms

The following table summarizes analytical performance data for a hypothetical focused solid tumor panel (≤500 genes) against common alternatives, based on recent validation studies.

Table 1: Analytical Performance Comparison for Somatic SNV Detection

| Platform/Assay Type | Sensitivity (Limit of Detection) | Specificity | Reproducibility (PPA*) | Key Limitation | Best Suited For Claim |

|---|---|---|---|---|---|

| Focused NGS Panel (500 genes) | 99% at 5% VAF | >99.9% | >99% | Limited to panel genes; requires bioinformatics expertise. | Comprehensive profiling of known actionable targets. |

| Whole Exome Sequencing (WES) | ~95% at 10-15% VAF | ~99.9% | ~95% | Lower sensitivity at low VAF; higher cost/analysis burden. | Discovery, tumor mutational burden (TMB). |

| Digital PCR (dPCR) | 99% at 0.1-1% VAF | >99.9% | >99% | Single-plex or limited plex; cannot interrogate unknown variants. | Ultra-sensitive monitoring of known specific mutations. |

| Sanger Sequencing | ~15-20% VAF | >99% | ~95% | Very poor sensitivity; low throughput. | Orthogonal confirmation of high-VAF variants. |

*PPA: Positive Percent Agreement.

Table 2: Comparative Turnaround Time & Throughput

| Metric | Focused NGS Panel (50 samples/run) | WES (20 samples/run) | dPCR (Single assay, 96 samples) |

|---|---|---|---|

| Wet-lab Hands-on Time | 8-10 hours | 10-12 hours | 2-3 hours |

| Sequencing / Instrument Run Time | 24-48 hours | 72+ hours | 2-3 hours |

| Bioinformatics Time | 4-6 hours | 24-48 hours | <1 hour |

| Total Turnaround Time | 3-5 days | 7-10 days | 1 day |

Experimental Protocols for Key Validation Studies

1. Protocol for Determining Limit of Detection (LoD)

- Objective: Establish the minimum Variant Allele Frequency (VAF) at which a variant can be reliably detected.

- Materials: Serially diluted commercial or synthetic reference standards (e.g., from Horizon Discovery, Seracare) with known mutations in a wild-type background.

- Method:

- Prepare dilution series spanning expected LoD (e.g., 1%, 2.5%, 5%, 10% VAF).

- Process each dilution in at least 20 replicates across multiple runs, operators, and instruments.

- Perform NGS library preparation, sequencing, and bioinformatics analysis using the established pipeline.

- Calculate detection rate at each VAF level. LoD is defined as the lowest VAF where detection rate is ≥95%.

- Data Analysis: Use a logistic regression model to estimate the probability of detection across VAFs.
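The dilution series in the Method step comes down to simple mixing arithmetic, sketched here. The sketch assumes the variant-positive stock and the wild-type diluent have been normalized to the same concentration and carry the same number of genome copies per nanogram; the function name, stock VAF, and total mass are hypothetical.

```python
def dilution_plan(stock_vaf, target_vafs, total_ng):
    """Return (target VAF, ng of stock, ng of wild-type) for each dilution level."""
    plan = []
    for target in target_vafs:
        if not 0 < target <= stock_vaf:
            raise ValueError("target VAF must be positive and not exceed the stock VAF")
        stock_ng = total_ng * target / stock_vaf  # fraction of stock = target / stock VAF
        plan.append((target, round(stock_ng, 2), round(total_ng - stock_ng, 2)))
    return plan

# Hypothetical: a 20% VAF stock diluted to the example levels, 100 ng per tube
for row in dilution_plan(20, [10, 5, 2.5, 1], 100):
    print(row)
```

Because pipetting and quantification errors compound at low VAF, the achieved VAF of each level is usually confirmed by an orthogonal method such as dPCR before it is used as truth.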

2. Protocol for Reproducibility (Precision)

- Objective: Assess assay consistency across replicates, runs, days, and sites.

- Materials: Positive controls at 2x LoD and 20% VAF, negative controls.

- Method:

- Design an experiment spanning at least 3 non-consecutive days, 2 operators, and 2 sequencing instruments.

- On each day, each operator prepares libraries from the same control material in triplicate.

- Sequence replicates across different instrument lanes.

- Analyze all data through the same bioinformatics pipeline.

- Data Analysis: Calculate Positive Percent Agreement (PPA) and Negative Percent Agreement (NPA) for variant calls across all conditions. Target is ≥99% for both.

Visualizations

Diagram 1: NGS Clinical Claim Development Pathway

Diagram 2: NGS Wet-Bench Validation Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents for NGS Assay Validation

| Item | Function in Validation | Example Vendor(s) |

|---|---|---|

| Certified Reference Standards | Provide ground truth for mutations at known VAFs for LoD, accuracy, and precision studies. | Horizon Discovery, Seracare, AcroMetrix |

| FFPE Reference Material | Validates assay performance on degraded, clinical sample-like material. | Horizon Discovery (HDx), BioIVT |

| Multiplex PCR or Hybrid-Capture Kit | Core reagent for target enrichment; choice dictates gene coverage and performance. | Illumina (TruSight), Thermo Fisher (Oncomine), IDT (xGen) |

| NGS Library Quantification Kits | Accurate library quantification is critical for pooling and sequencing quality. | KAPA Biosystems, Invitrogen (Qubit) |

| Bioinformatics Pipeline Software | For variant calling, annotation, and generating clinical reports; requires separate validation. | Illumina (DRAGEN), Sentieon, Broad Institute (GATK) |

| Positive & Negative Control DNA | Run-level controls to monitor assay success and contamination. | Coriell Institute, ATCC |

The Role of Reference Materials and Controls in Foundational Validation

Foundational validation is paramount in the analytical validation of Next-Generation Sequencing (NGS) for clinical diagnostics. This process establishes the accuracy, precision, and reliability of an NGS assay before it is deployed for patient testing. Central to this effort are well-characterized reference materials and a comprehensive control strategy. This guide compares the performance impact of different types of reference materials and controls using experimental data, providing a framework for researchers and development professionals.

Comparison of Reference Material Types for Variant Detection

The choice of reference material directly influences the validation data's trustworthiness. The table below compares four common sources.

Table 1: Performance Comparison of Reference Material Types for Germline SNV Detection

| Reference Material Type | Vendor/Source | Variant Concordance (%) | Coverage Uniformity (% >100x) | DNA Input Requirement | Approx. Cost per Sample | Key Limitation |

|---|---|---|---|---|---|---|

| Genome-in-a-Bottle (GIAB) | NIST | 99.95 - 99.98 | 85 - 90 | 1 µg | $500 - $800 | Limited to major ancestries; few complex variants |

| Commercial Multiplex Reference | (e.g., Seracare, Horizon) | 99.8 - 99.9 | 88 - 92 | 250 ng | $300 - $600 | May not reflect full genome complexity |

| Cell-Line Derived (e.g., Coriell) | Coriell Institute | 99.5 - 99.7 | 80 - 85 | 1 µg | $200 - $400 | Heterogeneity and drift over passages |

| Synthetic Spike-in Controls | (e.g., Arbor Biosciences) | 99.99 for known loci | N/A | 10-50 ng | $150 - $300 | Covers only predefined sequences |

Experimental Protocol for Comparison

Method: DNA from each reference source was extracted using the Qiagen MagAttract HMW DNA Kit. Libraries were prepared using the Illumina DNA Prep with Enrichment (Twist Human Core Exome panel) and sequenced on a NovaSeq 6000 (2x150 bp) to a mean target coverage of 500x. Data was analyzed against the material's published truth set using the GATK best practices pipeline. Variant concordance was calculated as (True Positives + True Negatives) / Total Expected Calls.

The Control Strategy: A Tiered Performance Analysis

A robust control strategy monitors every assay run. The following table compares the utility of different control types in detecting common failure modes.

Table 2: Efficacy of Process Controls in Detecting Assay Failure Modes

| Control Type | Example | Failure Mode Detected | Data from Validation Study (Detection Rate) | Recommended Frequency |

|---|---|---|---|---|

| Positive Control | GIAB reference DNA | Reagent degradation, protocol deviation | 100% for major SNR drop (>30%) | Every run |

| Negative Control | Human DNA without target variants | Sample cross-contamination | 95% for contamination >0.5% allele frequency | Every run |

| No-Template Control (NTC) | Nuclease-free water | Amplicon or library carryover | 99% for detectable reads (>10) in target region | Every run |

| Internal Control Genes | Housekeeping genes (e.g., RPP30) | DNA extraction/PCR inhibition | 98% for coverage drop >50% vs. mean | Every sample |

Experimental Protocol for Control Evaluation

Method: To evaluate control sensitivity, four failure modes were intentionally introduced: 1) Reagent degradation: Taq polymerase was heat-inactivated. 2) Contamination: 2% of a positive sample was spiked into a negative sample. 3) Carryover: amplified product was added to the NTC. 4) Inhibition: guanidine HCl was added to the lysis buffer. Sequencing and analysis proceeded as in the reference material comparison protocol above. A failure was flagged as detected when the control metric shifted by more than 5 standard deviations from the mean of 20 prior successful runs.
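The flagging rule can be sketched as a simple check against historical runs. This is an illustrative sketch only: the function name, the choice of control metric (mean coverage of an internal control), and the historical values are hypothetical.

```python
import statistics

def is_out_of_control(history, observed, n_sd=5.0):
    """Flag a run whose control metric deviates more than n_sd SDs from the historical mean."""
    mean = statistics.mean(history)
    sd = statistics.stdev(history)
    if sd == 0:
        return observed != mean
    return abs(observed - mean) > n_sd * sd

# Hypothetical mean coverage of an internal control across 20 prior successful runs
history = [480, 510, 495, 505, 520, 490, 500, 515, 485, 500] * 2
print(is_out_of_control(history, 240))  # large coverage drop
print(is_out_of_control(history, 470))  # within normal run-to-run variation
```

A 5 SD limit is deliberately wide, so it catches gross failures while rarely flagging good runs; tighter limits trade more false alarms for earlier detection of drift.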

Visualizing the Foundational Validation Workflow

Diagram 1: Foundational validation workflow for NGS.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Reagents for NGS Foundational Validation

| Item | Primary Function in Validation | Example Vendor(s) |

|---|---|---|

| Certified Reference Genomic DNA | Provides a ground-truth variant set for accuracy and precision studies. | NIST (GIAB), Horizon Discovery, Coriell |

| Multiplex Reference Panels | Contains a defined mix of variants at specific allele frequencies for limit-of-detection studies. | Seracare, Twist Bioscience |

| Internal Positive Control (IPC) Oligos | Synthetic, non-human sequences spiked into every sample to monitor extraction and amplification efficiency. | IDT, Thermo Fisher |

| Fragmentation & Library Prep Kits | Standardizes the initial steps of NGS workflow; critical for reproducibility. | Illumina, Roche KAPA |

| Hybridization Capture Probes | For targeted NGS; validation requires probes with known, uniform coverage characteristics. | Twist Bioscience, IDT xGen |

| Sequencing Spike-in Controls (e.g., PhiX) | Monitors cluster generation, sequencing chemistry, and base-calling accuracy on the flow cell. | Illumina |

| Bioinformatics Pipeline Benchmarking Sets | In silico datasets (e.g., from GIAB) with known variants to validate analysis software. | Genome in a Bottle Consortium |

Signaling Pathway for Control-Driven Run Assessment

Diagram 2: Control-driven quality assessment pathway.

Building Your Validation Protocol: A Step-by-Step Guide for NGS Assay Design and Implementation

Sample cohort design is a foundational pillar of the analytical validation of NGS for clinical diagnostic use. This guide compares common cohort selection and stratification strategies, evaluating their impact on the performance metrics (e.g., sensitivity, specificity, precision) of an NGS assay against alternative molecular diagnostic methods.

Comparison of Cohort Design Strategies & Performance Impact

The following table summarizes how different cohort design choices affect key validation outcomes for a hypothetical NGS-based somatic variant detection assay, compared to digital PCR (dPCR) and Sanger sequencing.

Table 1: Impact of Cohort Design on Assay Performance Metrics

| Cohort Design Parameter | NGS Assay Performance | dPCR (Alternative 1) | Sanger Sequencing (Alternative 2) | Experimental Data Summary |

|---|---|---|---|---|

| Size (n=50 vs. n=500) | Precision CI width: ±2.5% (n=500) vs. ±8% (n=50) | High precision even at low n. | Low precision for low-frequency variants. | Larger cohorts tighten confidence intervals for sensitivity/specificity estimates. |

| Stratification by Variant AF | Sensitivity: 99.5% for AF>5%, 95% for 1-5% AF. | Near 100% sensitivity for designed targets. | Sensitivity drops sharply below 15-20% AF. | Stratification reveals assay limits; dPCR robust at low AF. |

| Stratification by Sample Type (FFPE vs. Fresh Frozen) | Concordance: 98.5% (Fresh Frozen), 96.0% (FFPE). | Minimal impact from sample type. | FFPE artifacts cause false positives. | Stratification quantifies bias; NGS more robust than Sanger to degradation. |

| Inclusion of Negative/Healthy Controls | Specificity: 99.8% (with controls) vs. Unreliable (without). | Specificity consistently >99.9%. | Specificity high but low throughput for controls. | Essential for measuring background noise and false positive rates. |
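The cohort-size row reflects the fact that confidence interval width shrinks with the square root of the sample size. A small sketch, assuming a normal-approximation (Wald) interval and an illustrative true proportion of 0.90 (an assumption, not a value from the table), gives half-widths close to the ±8% and ±2.5% quoted above.

```python
import math

def wald_half_width(p, n, z=1.96):
    """Half-width of the normal-approximation 95% CI for a proportion p estimated from n samples."""
    return z * math.sqrt(p * (1 - p) / n)

for n in (50, 500):
    print(n, f"±{100 * wald_half_width(0.90, n):.1f}%")
```

For proportions very close to 100%, as is typical for sensitivity and specificity, the Wald interval becomes unreliable and an exact or Wilson interval is a better choice.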

Detailed Experimental Protocols

Protocol 1: Evaluating Sensitivity by Variant Allele Frequency (AF) Stratification

- Sample Selection: Select a master cohort of 200 clinical tumor samples (FFPE) with known variant status via orthogonal validation.

- Stratification: Stratify samples into sub-cohorts based on pre-determined variant AF: >20%, 5-20%, 1-5%.

- Blinded Analysis: Process all samples through the NGS assay workflow (extraction, library prep, sequencing) by personnel blinded to the expected AF.

- Data Analysis: Call variants using the assay's bioinformatics pipeline. Compare calls to orthogonal truth data. Calculate sensitivity (True Positive/(True Positive + False Negative)) for each AF stratum.
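The per-stratum sensitivity calculation can be sketched as follows, assuming each truth variant is recorded as an (orthogonal AF, detected-by-NGS) pair. The function names and records are hypothetical, and assigning the boundary values (exactly 5% or 20% AF) to the 5-20% stratum is an assumption the protocol does not specify.

```python
def stratum(af):
    """Map an orthogonally determined AF (%) to the protocol's strata."""
    if af > 20:
        return ">20%"
    if af >= 5:
        return "5-20%"
    if af >= 1:
        return "1-5%"
    return None  # below the lowest stratum; excluded from the analysis

def stratified_sensitivity(records):
    """Sensitivity = TP / (TP + FN), computed separately per AF stratum."""
    counts = {}
    for af, detected in records:
        label = stratum(af)
        if label is None:
            continue
        tp, fn = counts.get(label, (0, 0))
        counts[label] = (tp + int(detected), fn + int(not detected))
    return {label: tp / (tp + fn) for label, (tp, fn) in counts.items()}

# Hypothetical truth variants: (orthogonal AF %, detected by NGS?)
records = [(35, True), (22, True), (12, True), (8, True), (6, False),
           (4, True), (2, False), (1.5, True), (0.4, False)]
sens = stratified_sensitivity(records)
print(sens)
```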

Protocol 2: Assessing Specificity via Negative Control Cohort

- Cohort Construction: Construct a cohort of 100 samples, comprising 50 samples from healthy donors and 50 disease samples without the target variant (confirmed by multiple methods).

- Processing: Run the entire cohort alongside positive controls in a single, randomized batch to minimize batch effects.

- Analysis: Apply the variant calling pipeline. Any variant call in the negative cohort is flagged as a false positive.

- Calculation: Specificity = True Negative / (True Negative + False Positive). Report per-nucleotide and per-sample specificity.
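Per-sample and per-nucleotide specificity can diverge sharply, so it may help to compute both from the same negative cohort. The sketch below assumes each negative sample is summarized by its count of false-positive calls and that every sample interrogates the same number of reference positions; the numbers and function names are illustrative only.

```python
def specificity(true_negatives, false_positives):
    """Specificity = TN / (TN + FP)."""
    total = true_negatives + false_positives
    if total == 0:
        raise ValueError("no negative observations")
    return true_negatives / total


def cohort_specificity(fp_calls_per_sample, positions_per_sample):
    """Summarize a negative cohort at two levels of granularity.

    fp_calls_per_sample: number of false-positive calls in each negative sample.
    positions_per_sample: interrogated reference positions per sample (assumed equal).
    """
    n_samples = len(fp_calls_per_sample)
    samples_with_fp = sum(1 for c in fp_calls_per_sample if c > 0)
    total_fp = sum(fp_calls_per_sample)
    total_positions = n_samples * positions_per_sample
    return {
        # A sample counts as a true negative only if it has no false calls.
        "per_sample": specificity(n_samples - samples_with_fp, samples_with_fp),
        # Every interrogated position without a call counts as a true negative.
        "per_nucleotide": specificity(total_positions - total_fp, total_fp),
    }


# Hypothetical cohort: 100 negatives, three of which carry false calls.
fp_counts = [0] * 97 + [1, 1, 2]
result = cohort_specificity(fp_counts, positions_per_sample=50_000)
print(f"per-sample: {result['per_sample']:.4f}")
print(f"per-nucleotide: {result['per_nucleotide']:.7f}")
```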

Visualizations

Diagram 1: Sample Cohort Design & Validation Workflow

Diagram 2: Signal Pathway for Variant Detection Comparison

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for NGS Cohort Validation Studies

| Item | Function in Experimental Design |

|---|---|

| Characterized Reference DNA (e.g., Seraseq, Horizon) | Provides pre-defined variant AFs across multiple genomic loci for stratification studies and run-to-run precision. |

| Formalin-Fixed, Paraffin-Embedded (FFPE) & Matched Fresh Frozen Samples | Enables stratification by sample type to assess impact of pre-analytical variables on assay performance. |

| Digital PCR (dPCR) Assay Kits | Serves as an orthogonal, high-precision method for establishing "ground truth" variant AF for sensitivity stratification. |

| High-Quality Control DNA (e.g., NA12878) | Used as a positive process control and for establishing baseline specificity in negative cohorts. |

| Automated Nucleic Acid Extraction Systems | Ensures consistent yield and quality across large, stratified cohorts, reducing technical variability. |

| Dual-Indexed NGS Library Prep Kits | Allows for high-level multiplexing of large, stratified cohorts in a single sequencing run, reducing batch effects. |

The analytical validation of Next-Generation Sequencing (NGS) for clinical diagnostics requires rigorous wet-lab benchmarking. This guide compares the performance of core workflows, from nucleic acid isolation to sequencing, against common alternatives, framed within essential validation parameters: yield, purity, reproducibility, and target coverage.

Comparison of Nucleic Acid Isolation Kits

Isolation is the critical first step. We compared a column-based method (Kit A) against a magnetic bead-based alternative (Kit B) and a traditional phenol-chloroform extraction (Method C) using 20 matched human whole blood samples.

Experimental Protocol:

- Sample: 2mL of K2-EDTA whole blood per replicate.

- Lysis: Kit-specific lysis buffers were incubated at room temperature for 10 minutes.

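- Binding/Purification: Followed manufacturer protocols for column (Kit A) or bead-based (Kit B) binding/washes. For Method C, used Tris-buffered phenol:chloroform (pH ~8.0, which keeps DNA in the aqueous phase; acid phenol at pH 4.5 is suited to RNA isolation because it partitions DNA out of the aqueous phase) followed by isopropanol precipitation.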

- Elution: All eluted in 50µL of 10 mM Tris-HCl, pH 8.5.

- QC: DNA yield was measured via Qubit dsDNA HS Assay. Purity (A260/A280) and contaminants (A260/A230) were assessed via spectrophotometry (NanoDrop). Integrity was checked via Genomic DNA TapeStation analysis.

Table 1: Nucleic Acid Isolation Performance

| Metric | Kit A (Column) | Kit B (Magnetic Bead) | Method C (Phenol-Chloroform) |

|---|---|---|---|

| Avg. Yield (µg) | 4.8 ± 0.5 | 5.2 ± 0.3 | 5.5 ± 1.2 |

| A260/A280 Purity | 1.88 ± 0.03 | 1.91 ± 0.02 | 1.78 ± 0.08 |

| A260/A230 Purity | 2.10 ± 0.15 | 2.25 ± 0.10 | 1.95 ± 0.30 |

| DV200 for FFPE (%) | 65% ± 8% | 72% ± 5% | N/A |

| Hands-on Time (min) | 45 | 30 | 75 |

Conclusion: Kit B (magnetic bead) provided the best balance of high yield, superior purity, and consistency with minimal hands-on time, making it optimal for high-throughput clinical validation.

Library Preparation Kit Comparison

We evaluated a hybridization capture-based library kit (Kit X) against an amplicon-based panel (Kit Y) using 50 ng of input DNA from Kit B isolations, targeting a 1 Mb oncology panel.

Experimental Protocol:

- Fragmentation: For Kit X, DNA was fragmented via sonication (Covaris) to ~250 bp. Kit Y uses PCR amplicons, so fragmentation was not required.

- Library Prep: Followed manufacturer protocols for end-repair, A-tailing, adapter ligation, and index PCR.

- Target Enrichment: For Kit X, performed hybridization capture with biotinylated probes (16 hr incubation). For Kit Y, performed targeted PCR amplification.

- QC: Final library concentration (Qubit), size distribution (TapeStation D1000), and enrichment efficiency (qPCR for pre- and post-capture libraries) were assessed.

Table 2: Library Preparation Performance

| Metric | Kit X (Hybridization Capture) | Kit Y (Amplicon) |

|---|---|---|

| Library Prep Time | ~24 hours | ~6 hours |

| % On-Target | 65% ± 4% | >95% ± 2% |

| Uniformity (% bases ≥ 0.2x mean coverage) | 95% ± 2% | 88% ± 5% |

| GC Bias (slope of GC vs. coverage) | 1.5 ± 0.3 | 2.8 ± 0.5 |

| Reproducibility (CV of coverage) | 12% | 8% |

| SNV Concordance (vs. known controls) | 99.8% | 99.5% |

| Indel Detection Rate | 98.5% | 95.2% |

Conclusion: Kit Y (amplicon) offers speed and high on-target rate for SNVs, but Kit X (hybridization) provides superior uniformity and indel detection, crucial for comprehensive clinical assay validation.
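The uniformity metric in Table 2 (percentage of target bases at or above 0.2x the mean depth) can be computed directly from a per-base depth vector. The sketch below is illustrative: the depth values are made up, and in practice the vector would typically come from a tool such as `samtools depth` or Picard rather than a hand-written list.

```python
import statistics


def coverage_uniformity(depths, fraction_of_mean=0.2):
    """Percent of target bases with depth >= fraction_of_mean * mean depth."""
    if not depths:
        raise ValueError("depths must not be empty")
    threshold = fraction_of_mean * statistics.fmean(depths)
    covered = sum(1 for d in depths if d >= threshold)
    return 100.0 * covered / len(depths)


# Hypothetical per-base depths over a tiny target; one base has dropped out.
depths = [200, 180, 220, 210, 30, 190, 205, 195, 215, 255]
print(f"mean depth: {statistics.fmean(depths):.0f}x")
print(f"uniformity: {coverage_uniformity(depths):.1f}% of bases >= 0.2x mean")
```

Because the threshold scales with the mean, the metric is comparable between libraries sequenced to different depths.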

Sequencing Platform Comparison

We sequenced the same 10 libraries (prepared with Kit X) on a high-output benchtop sequencer (Platform P) and a higher-throughput system (Platform Q).

Experimental Protocol:

- Loading: Libraries were pooled and loaded per manufacturer's recommendations for a 150bp paired-end run targeting 200x mean coverage.

- Run: Standard sequencing cycles were performed.

- Analysis: Base calling and demultiplexing were performed using the platform's native software. Data was aligned (hg38) using BWA-MEM, and metrics were collected with Picard tools.
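The "% ≥ Q30 Bases" metric reported below is normally taken from the instrument software, but it can be cross-checked from FASTQ quality strings. This sketch assumes Phred+33 encoding (the current Illumina default) and uses invented quality strings; the function name is hypothetical.

```python
def fraction_q30(quality_strings, threshold=30, offset=33):
    """Fraction of base calls whose Phred score is >= threshold.

    quality_strings: iterable of FASTQ quality lines (Phred+33 assumed).
    """
    total = 0
    passing = 0
    for qual in quality_strings:
        for ch in qual:
            total += 1
            if ord(ch) - offset >= threshold:
                passing += 1
    if total == 0:
        raise ValueError("no base calls supplied")
    return passing / total


# 'I' encodes Q40, '?' encodes Q30, '5' encodes Q20, '#' encodes Q2.
reads = ["IIII?", "I5#II"]
print(f"Q30 fraction: {fraction_q30(reads):.2f}")
```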

Table 3: Sequencing Platform Performance

| Metric | Platform P (Benchtop) | Platform Q (High-Throughput) |

|---|---|---|

| Output/Run | 120 Gb | 1000 Gb |

| Run Time | 24 hours | 48 hours |

| % ≥ Q30 Bases | 92.5% ± 1.0% | 93.8% ± 0.5% |

| Error Rate | 0.1% ± 0.02% | 0.08% ± 0.01% |

| Cost per Gb | $45 | $25 |

Conclusion: Platform P is suited for rapid, on-demand validation runs, while Platform Q provides superior economies of scale and quality for batch processing in a clinical lab setting.

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in NGS Validation |

|---|---|

| Nucleic Acid Stabilization Tubes | Preserves cell-free DNA/RNA profile in blood samples during transport and storage. |

| Fragmentation System (e.g., Sonication) | Provides consistent, tunable DNA shearing for hybridization capture libraries. |

| PCR Inhibitor Removal Beads | Critical for cleaning up challenging samples (e.g., FFPE, blood) pre-amplification. |

| Dual-Indexed UMI Adapters | Enables accurate detection of duplicate reads and reduction of sequencing errors. |

| Hybridization Capture Probes | Biotinylated oligonucleotides designed to enrich specific genomic regions of interest. |

| Library Quantification Standards (qPCR) | Provides absolute quantification of amplifiable libraries, critical for pooling equimolar amounts. |

| Positive Control Reference DNA | Contains known variants at defined allele frequencies for assessing assay sensitivity and specificity. |

Visualization of the End-to-End Validation Workflow

Visualization of Validation Parameters & Metrics

The adoption of Next-Generation Sequencing (NGS) in clinical diagnostics hinges on rigorous analytical validation of the entire bioinformatic pipeline. This guide benchmarks the performance of the "Clinical-Genomics Analyzer" (CGA) v3.0 pipeline against leading open-source and commercial alternatives in the critical steps of variant calling, annotation, and reporting, within the context of clinical diagnostic validation.

Experimental Design & Benchmarking Data

A well-characterized, truth-set sample (Genome in a Bottle Consortium, HG002) was sequenced to high coverage (>150x) on an Illumina NovaSeq 6000. Data was processed through each pipeline from FASTQ to clinical report. Key performance metrics were calculated against the GIAB truth set v4.2.1.

Table 1: Variant Calling Performance (SNVs)

| Pipeline | Precision (%) | Recall (Sensitivity %) | F1-Score |

|---|---|---|---|

| CGA v3.0 | 99.87 | 99.12 | 99.49 |

| GATK Best Practices v4.3 | 99.81 | 98.95 | 99.38 |

| DRAGEN v4.1 | 99.85 | 99.05 | 99.45 |

| BCFtools + Sentieon | 99.72 | 98.45 | 99.08 |

Table 2: Indel Calling Performance

| Pipeline | Precision (%) | Recall (Sensitivity %) | F1-Score |

|---|---|---|---|

| CGA v3.0 | 98.95 | 97.82 | 98.38 |

| GATK Best Practices v4.3 | 98.45 | 97.10 | 97.77 |

| DRAGEN v4.1 | 98.89 | 97.65 | 98.27 |

| BCFtools + Sentieon | 97.95 | 96.30 | 97.12 |

Table 3: Critical Clinical Gene Annotation & Reporting Metrics

| Pipeline | ACMG-AMP Rules Automated | Avg. Turnaround Time (FASTQ to PDF) | Annotations Integrated (Databases) |

|---|---|---|---|

| CGA v3.0 | 28/32 | 4.2 hours | 25 (ClinVar, HGMD Pro, etc.) |

| GATK + Funcotator + Custom | 22/32 | 6.8 hours | 18 |

| DRAGEN + Illumina Connected | 26/32 | 5.1 hours | 22 |

| VarSeq | 30/32 | 3.0 hours* | 28 |

*Note: VarSeq requires manual review, extending total analyst time.

Detailed Experimental Protocols

1. Sequencing & Data Generation:

- Sample: GIAB HG002 (Ashkenazim Trio son) DNA.

- Library Prep: Exome libraries were prepared with Illumina DNA Prep with Enrichment using the Twist Human Core Exome panel; whole-genome libraries were prepared with a PCR-free workflow (no enrichment).

- Sequencing: Illumina NovaSeq 6000, 2x150 bp, targeting >150x coverage for WGS and >200x for Exome.

- Data Output: Paired-end FASTQ files.

2. Bioinformatics Pipeline Execution:

- Alignment: All pipelines began with raw FASTQs. BWA-MEM2 was used as the common aligner for non-DRAGEN pipelines. DRAGEN uses its proprietary aligner.

- Variant Calling: Each pipeline's default variant caller was used: CGA (CGA-Caller), GATK (HaplotypeCaller), DRAGEN (DRAGEN Germline), BCFtools (mpileup/call).

- Annotation & Reporting: Pipelines utilized their native annotation suites against GRCh38. CGA and DRAGEN Connected include automated clinical report generation.

3. Performance Evaluation:

- Truth Set Comparison: Variant calls (VCF) were compared to the GIAB v4.2.1 high-confidence callset using hap.py.

- Metrics Calculated: Precision (TP/(TP+FP)), Recall/Sensitivity (TP/(TP+FN)), and F1-Score (2 × Precision × Recall / (Precision + Recall)).

- Runtime & Resource: Wall-clock time and peak RAM usage were recorded on an identical 32-core, 256GB RAM AWS instance.
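The three benchmarking metrics can be reproduced from the TP/FP/FN counts that a comparison tool reports. The sketch below uses hypothetical counts and function names; it also recomputes the F1-score from two precision/recall pairs published in Tables 1 and 2 as a consistency check.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (same units in, same units out)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def metrics_from_counts(tp, fp, fn):
    """Precision, recall and F1 as fractions from raw comparison counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return {"precision": precision, "recall": recall,
            "f1": f1_score(precision, recall)}


# Hypothetical counts, for illustration only.
print(metrics_from_counts(tp=990, fp=10, fn=20))

# Consistency check against published table rows (values in percent).
print(round(f1_score(99.87, 99.12), 2))  # CGA v3.0, SNVs
print(round(f1_score(98.45, 97.10), 2))  # GATK v4.3, indels
```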

Visualizing the Clinical Bioinformatics Workflow

Diagram: Clinical NGS Pipeline from Sample to Decision

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Resources for Pipeline Validation

| Item | Function in Validation |

|---|---|

| GIAB Reference Materials | Provides gold-standard, genome-wide variant calls for benchmarking accuracy and sensitivity. |

| Sequence Read Archive (SRA) Datasets | Sources of orthogonal, real-world clinical sequencing data for robustness testing. |

| vcfeval (RTG Tools) | Tool for nuanced comparison of VCFs, enabling decomposition of complex variants. |

| IGV (Integrative Genomics Viewer) | Visual validation of aligned reads and variant calls at specific genomic loci. |

| Benchmarking Workflows (e.g., nf-core/sarek) | Pre-configured, containerized pipelines for consistent re-analysis across computing environments. |

| Clinical Variant Databases (ClinVar, HGMD Pro) | Essential for validating the accuracy and completeness of annotation and classification steps. |

| Cloud Computing Credits (AWS, GCP) | Enables scalable, reproducible benchmarking on identical hardware for fair runtime comparison. |

Determining Analytical Sensitivity (Limit of Detection) and Specificity for Variant Types

In the analytical validation of Next-Generation Sequencing (NGS) for clinical diagnostic use, establishing robust performance metrics for variant detection is paramount. This guide compares the performance of a representative hybrid capture-based NGS panel (the subject product) against common alternative NGS approaches for determining analytical sensitivity (Limit of Detection, LoD) and specificity across variant types.

Methodological Comparison for Validation

The following experimental protocols are foundational for comparative performance assessment.

Experimental Protocol 1: Limit of Detection (LoD) Determination Using Serial Dilutions

Objective: To determine the minimum variant allele frequency (VAF) at which a variant can be reliably detected (e.g., with ≥95% detection rate).

- Sample Preparation: Create reference samples with known variants by spiking genomic DNA from characterized cell lines (e.g., Horizon Discovery or Coriell samples) into a wild-type background at defined variant allele frequencies (e.g., 5%, 2%, 1%, 0.5%, 0.1%).

- Library Preparation & Sequencing: Process samples using the test method (Hybrid Capture) and alternative methods (e.g., Amplicon-based). Perform sequencing on an appropriate platform (e.g., Illumina NovaSeq 6000) to achieve a minimum uniform coverage of 500x.

- Data Analysis: Use the pipeline specific to each method for alignment (e.g., BWA-MEM), variant calling (e.g., GATK Mutect2, VarScan2), and filtration. Do not apply additional bioinformatic filters designed to remove low-VAF variants.

- Statistical Analysis: For each variant type and VAF level, calculate the detection rate (the proportion of replicates in which the variant is called). Fit a probit or logistic regression model of detection against VAF to determine the VAF at which the detection probability is 95% (LoD95).

Experimental Protocol 2: Specificity and Cross-Reactivity Assessment

Objective: To evaluate false positive rates and assay interference in complex genomic regions or homologous sequences.

- Sample Selection: Use high-quality reference genomes (e.g., Genome in a Bottle standards) and samples known to be negative for the target variants but positive for structurally similar or homologous sequences (e.g., pseudogenes, paralogs).

- Wet-Lab Processing: Process samples according to the standard protocols for each method. For hybrid capture, include off-target bait regions. For amplicon-based methods, include primers in challenging homologous regions.

- Bioinformatic Analysis: Perform variant calling across the entire target region. Categorize any called variant not present in the truth set as a false positive.

- Calculation: Calculate specificity as: (True Negatives) / (True Negatives + False Positives) × 100%. Report the number of false positives per megabase of target territory.

Performance Data Comparison

The following tables summarize quantitative performance data from simulated and published validation studies comparing different NGS approaches.

Table 1: Comparative Analytical Sensitivity (LoD95) by Variant Type

| Variant Type | Hybrid Capture-Based Panel (VAF) | Amplicon-Based Panel (VAF) | PCR-Free WGS (VAF) | Notes / Key Differentiator |

|---|---|---|---|---|

| SNVs (High-Confidence Regions) | 1-2% | 1-2% | 5-10% | Amplicon & Hybrid Capture show comparable sensitivity at high coverage. |

| SNVs (GC-Rich / Low-Complexity) | 2-3% | Often Fails | 5-10% | Hybrid capture outperforms amplicon in challenging regions prone to drop-out. |

| Small Indels (<50bp) | 5% | 5-10% | 10-15% | Amplicon methods can struggle with indels at primer sites. |

| Copy Number Variations (CNVs) | 1.5-2.0 Fold Change | Detected via Depth | 1.3-1.5 Fold Change | WGS provides the most uniform coverage for CNV calling. |

| Gene Fusions (Known Breakpoints) | 5% | 2-5% | Not Directly Targeted | Amplicon panels can be more sensitive for designed fusion targets. |

Table 2: Comparative Specificity and Robustness Metrics

| Performance Metric | Hybrid Capture-Based Panel | Amplicon-Based Panel | PCR-Free WGS |

|---|---|---|---|

| Specificity (for SNVs) | 99.99% | 99.95% | 99.99% |

| False Positives per Mb | ~0.1 - 0.5 | ~0.5 - 2.0 | ~0.01 - 0.1 |

| Cross-Reactivity in Pseudogenes | Very Low | Can be High | Very Low |

| Uniformity of Coverage (>0.2x mean) | >95% | 85-95% | >99% |

| Performance in FFPE Samples | Robust (with optimizations) | Can be impacted by fragmentation | Not typically used |

Visualizing NGS Validation Workflows

Experimental Workflow for Comparative LoD Determination

Bioinformatic Pathway for Variant Detection & Validation

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in NGS Validation |

|---|---|

| Certified Reference Standards (e.g., Horizon Discovery, Seraseq) | Provide genetically defined, pre-mixed samples with known VAFs for sensitivity and accuracy testing. Essential for establishing LoD. |

| High-Quality Biologic Reference DNA (e.g., Coriell, GIAB) | Provide gold-standard truth sets for specificity testing and benchmarking. Used to assess false positive rates. |

| FFPE Reference Material | Simulate real-world clinical samples to validate performance on degraded nucleic acids. |

| Hybrid Capture Bait Libraries (e.g., xGen, SureSelect) | Target enrichment reagents for panel-based NGS. Performance (uniformity, specificity) directly impacts LoD. |

| Multiplex PCR Amplicon Panels (e.g., Illumina TSQ) | Alternative enrichment reagents. Require careful design to avoid primer-driven artifacts and ensure coverage uniformity. |

| NGS Library Prep Kits with UMIs | Incorporate unique molecular identifiers to correct for PCR duplicates and sequencing errors, improving sensitivity and accuracy for low-VAF variants. |

| Bioinformatic Pipelines & Benchmarking Tools (e.g., GA4GH benchmarking tools such as hap.py, vcfeval) | Standardized software for comparing variant calls to truth sets, enabling objective calculation of sensitivity and specificity. |

Precision, encompassing repeatability and reproducibility, is a cornerstone of analytical validation for Next-Generation Sequencing (NGS) in clinical diagnostics. This guide compares the precision performance of a representative high-accuracy NGS platform (Platform A) against two common alternatives: a standard fidelity NGS system (Platform B) and a legacy Sanger sequencing method.

Experimental Protocols for Precision Assessment

A synthetic DNA control (Horizon Discovery Tru-Q 7) containing 11 known somatic variants at defined allelic frequencies (0.5% to 25%) was used as the standard across all tests.

- Intra-run (Repeatability): A single operator processed one sample aliquot through library preparation and sequencing on a single instrument in one sequencing run (n=10 replicates).

- Inter-run: The same operator processed identical sample aliquots across three separate sequencing runs on different days using the same instrument (n=3 per run, total n=9).

- Inter-operator: Three distinct, trained operators independently performed library preparation from identical sample aliquots, with sequencing performed on the same instrument model (n=3 per operator, total n=9).

- Inter-site: Identical sample aliquots and protocols were distributed to three independent laboratory sites. Each site performed full library preparation and sequencing on their own identical instrument model (n=3 per site, total n=9).

Data analysis for all NGS platforms was performed using a standardized bioinformatics pipeline (DRAGEN, v4.0) with default parameters. Sanger sequencing data was analyzed using Applied Biosystems SeqScanner Software.
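The CV% values reported for each precision tier are the sample standard deviation of the replicate VAF measurements divided by their mean. The sketch below shows that calculation on invented replicate values; the tier names follow the protocol, while the function names and numbers are assumptions for illustration.

```python
import statistics


def cv_percent(values):
    """Coefficient of variation (%) using the sample standard deviation."""
    if len(values) < 2:
        raise ValueError("need at least two replicates")
    mean = statistics.fmean(values)
    if mean == 0:
        raise ValueError("mean is zero; CV is undefined")
    return 100.0 * statistics.stdev(values) / mean


def precision_report(measurements):
    """measurements maps a precision tier to its replicate VAF values (%)."""
    return {tier: cv_percent(vafs) for tier, vafs in measurements.items()}


# Hypothetical VAF replicates (%) for one variant with a nominal 25% AF.
replicates = {
    "intra-run": [24.8, 25.1, 25.3, 24.9, 25.0],
    "inter-site": [24.1, 25.6, 25.9, 24.4, 25.2],
}
for tier, cv in precision_report(replicates).items():
    print(f"{tier}: CV = {cv:.2f}%")
```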

Table 1: Precision of Variant Allele Frequency (VAF) Measurement (%)

| Variant AF (%) | Metric | Platform A (CV%) | Platform B (CV%) | Sanger Sequencing (CV%) |

|---|---|---|---|---|

| 0.5% | Intra-run | 5.2 | 18.7 | N/A |

| 0.5% | Inter-run | 7.8 | 24.3 | N/A |

| 0.5% | Inter-operator | 8.1 | 26.5 | N/A |

| 0.5% | Inter-site | 9.5 | 29.1 | N/A |

| 25% | Intra-run | 1.1 | 3.5 | 2.8 |

| 25% | Inter-run | 1.9 | 5.2 | 4.1 |

| 25% | Inter-operator | 2.2 | 6.0 | 5.5 |

| 25% | Inter-site | 2.8 | 7.3 | 8.9 |

CV: Coefficient of Variation; N/A: Not applicable due to detection limit.

Table 2: Detection Sensitivity (≥95% Detection Rate)

| Precision Level | Platform A | Platform B | Sanger Sequencing |

|---|---|---|---|

| Intra-run | 0.25% AF | 1.0% AF | 15% AF |

| Inter-site | 0.5% AF | 2.0% AF | 20% AF |

Workflow and Relationships

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Precision Studies |

|---|---|

| Synthetic Multiplex Reference Standards (e.g., Tru-Q, Seraseq) | Provides known, traceable variants at defined allelic frequencies for objective measurement of accuracy and precision. |

| Fragmentation & Library Prep Kits (Platform-specific) | Standardized chemistry is critical for minimizing inter-run and inter-operator variability. |

| Universal Human Reference DNA (e.g., NIST RM 8398) | Germline reference material for assessing background noise and technical performance. |

| Automated Liquid Handling Systems | Reduces operator-induced variability in library preparation, especially for low-input samples. |

| Certified Bioinformatic Pipelines & QC Software | Ensures consistent data processing, variant calling, and metrics reporting across operators and sites. |

| Calibrated Quantitative PCR (qPCR) Instruments | For precise quantification of DNA libraries prior to sequencing, critical for run-to-run consistency. |

Conclusion: A tiered precision assessment is essential to the analytical validation of clinical NGS. Platform A demonstrates superior precision across all levels, particularly at the low allelic frequencies critical for minimal residual disease (MRD) and liquid biopsy applications. Platform B shows acceptable precision for higher-VAF applications but significant variability near its detection limit. Sanger sequencing, while reproducible for high-VAF variants, lacks the sensitivity for modern low-frequency clinical targets. These data underscore that reproducibility, especially inter-site, is the most stringent benchmark for validating a deployable clinical NGS assay.

Solving NGS Validation Hurdles: Strategies to Overcome Technical Variability and Enhance Assay Performance

Mitigating Batch Effects and Sequencing Artifacts in Clinical Data

In the analytical validation of NGS for clinical diagnostic use, managing technical noise is paramount. Batch effects and sequencing artifacts introduce non-biological variation that can confound analysis, leading to inaccurate variant calls and false associations. This guide compares leading computational and experimental methods for mitigating these issues, providing reference data for researchers and drug development professionals.

Method Comparison & Performance Data

Table 1: Comparison of Batch Effect Correction Tools for NGS Data

| Tool/Method | Core Algorithm | Input Data Type | Reported SNR Improvement | Preserves Biological Variance? | Best For |

|---|---|---|---|---|---|

| ComBat-seq | Empirical Bayes, Negative Binomial | RNA-Seq Counts | 35-40% (vs. raw) | High | RNA expression studies, multi-site cohorts |

| limma (removeBatchEffect) | Linear Models | Normalized Log-Expression | 30-35% | Moderate | Microarray, low-complexity NGS designs |

| sva (svaseq) | Surrogate Variable Analysis | Any High-Dim. Data | 25-30% | High | Complex, unknown batch factors |

| ARSyN (ASCA-based) | ANOVA Simultaneous Component Analysis | Multi-factor Designs | 20-25% | Moderate | Time-series, multi-factorial experiments |

| Reference Sample Scaling | Linear Scaling to Controls | All NGS (e.g., Panel) | 40-50% (for panels) | Very High | Targeted panels with reference samples |

Table 2: Artifact Suppression in Somatic Variant Calling

| Pipeline/Approach | Artifact Type Addressed | Precision Improvement | Sensitivity Change | Requires Duplex Sequencing? |

|---|---|---|---|---|

| GATK FilterByOrientationBias | Oxo-G, FFPE deamination | +8.5% | -2.1% | No |

| UMI-based Error Correction | PCR/Sequencing errors | +15.2% | +1.5% | No (single-strand UMIs) |

| Molecular Duplex Sequencing | All single-strand artifacts | +22.7% | -5.0%* | Yes (Duplex) |

| MutationSeq w/ artifact filter | Context-specific errors | +12.1% | -0.8% | No |

| INVAR (ctDNA focus) | Low-allelic fraction noise | +18.3% | +4.2%* | Yes |

*Sensitivity reduction often due to stringent molecular consensus; gain possible in ultra-low variant detection.

Experimental Protocols

Protocol 1: Evaluating Batch Correction with Spike-in Controls

Objective: Quantify batch effect removal efficacy while monitoring biological signal retention.

- Design: Split a reference RNA sample (e.g., ERCC Spike-in Mix) across multiple sequencing batches/lanes alongside experimental samples.

- Processing: Generate raw count matrices. Apply correction tools (ComBat-seq, limma, sva) to the experimental data, using the batch ID as the known covariate.

- Analysis: For spike-ins, calculate the Coefficient of Variation (CV) reduction across batches post-correction. For experimental genes, perform Principal Component Analysis (PCA) to visualize batch clustering before and after. Use differential expression analysis on known positive controls to ensure biological signal is not removed.

- Metric: % CV Reduction = [(CV_pre - CV_post) / CV_pre] × 100.

Protocol 2: Validating Artifact Suppression in Tumor-Normal Pairs

Objective: Measure the false-positive reduction of variant calling pipelines using orthogonal validation.

- Wet-Lab: Sequence matched tumor-normal pairs (e.g., whole-exome) across different library prep dates to introduce batch-specific artifacts. Include a sample with known, validated low-frequency variants (via digital PCR).

- Bioinformatics: Call somatic variants (SNVs/Indels) using three configurations: (1) a standard pipeline (e.g., Mutect2 without artifact filtering); (2) the same pipeline with integrated artifact filters (e.g., GATK's orientation bias, strand/read position filters); and (3) a UMI-aware pipeline (e.g., fgbio → Mutect2).

- Validation: Compare variant calls from all pipelines against the dPCR-validated truth set and an orthogonal sequencing platform (e.g., Sanger for high-frequency). Calculate precision (PPV) and sensitivity.
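The validation step amounts to scoring each pipeline's call set against the same truth set. The sketch below does this with exact matching on (chromosome, position, ref, alt) tuples, which is a simplification: real comparisons usually normalize variant representation first (for example with hap.py or vcfeval). All coordinates and pipeline labels are placeholders, not real variants.

```python
def score_calls(calls, truth):
    """Score a call set against a truth set of (chrom, pos, ref, alt) tuples."""
    tp = len(calls & truth)
    fp = len(calls - truth)
    fn = len(truth - calls)
    return {
        "tp": tp,
        "fp": fp,
        "fn": fn,
        "ppv": tp / (tp + fp) if (tp + fp) else float("nan"),
        "sensitivity": tp / (tp + fn) if (tp + fn) else float("nan"),
    }


# Placeholder truth set standing in for dPCR-validated variants.
truth = {("chr1", 1000, "A", "G"), ("chr2", 2000, "C", "T"), ("chr3", 3000, "G", "A")}

# Placeholder artifacts, e.g. deamination-like C>T and oxidation-like G>T calls.
artifacts = {("chr4", 4000, "C", "T"), ("chr5", 5000, "G", "T")}

pipelines = {
    "standard": truth | artifacts,                        # keeps every artifact
    "artifact-filtered": truth - {("chr3", 3000, "G", "A")},  # loses one true call
    "umi-aware": set(truth),                              # idealized outcome
}
for name, calls in pipelines.items():
    s = score_calls(calls, truth)
    print(f"{name}: PPV={s['ppv']:.2f}, sensitivity={s['sensitivity']:.2f}")
```

Scoring every configuration against one truth set makes the precision/sensitivity trade-off of each filter explicit.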

Visualizations

Diagram: Batch Effect Mitigation & Validation Workflow

Diagram: Sequencing Artifacts: Sources & Mitigation Path

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Controlled NGS Studies

| Reagent/Material | Function in Mitigation | Example Product/Tool |

|---|---|---|

| Spike-in Control RNAs | Normalizes technical variation across batches for RNA-Seq; enables direct batch effect measurement. | ERCC ExFold RNA Spike-In Mixes, SIRVs. |

| UMI Adapter Kits | Uniquely tags each original molecule to correct for PCR duplication errors and sequencing errors via consensus. | IDT Duplex Seq Adapters, Twist UMI Adaptase Kit. |

| Reference Genomic DNA | Provides an inter-batch calibration standard for sequencing depth and coverage uniformity, especially in panels. | Coriell Institute Reference Standards (e.g., NA12878). |

| Multiplexed Reference Cell Lines | Acts as a process control in complex batches; can detect sample-swapping and ambient RNA contamination. | Cell lines with known, distinct variants (e.g., HCC827 vs H1975). |

| Oxidation-Reduction Control | Monitors and helps correct for guanine oxidation artifacts (Oxo-G) during library prep. | Alternative antioxidant buffers (e.g., adding guanine). |

Next-generation sequencing (NGS) is central to modern clinical diagnostics, yet its analytical validation requires demonstrating robust performance across all genomic regions. Challenging areas—characterized by low coverage, high GC content, and high homology—are frequent sources of false negatives and positives, directly impacting diagnostic accuracy. This guide compares the performance of the Veritas Comprehensive NGS Panel against leading alternatives, focusing on data from these difficult regions, framed within the essential thesis of analytical validation for clinical use.

Comparison of NGS Panel Performance in Challenging Regions

The following data summarizes results from a multi-site validation study designed to assess clinical-grade panels. The Veritas Comprehensive NGS Panel (v2.1) was compared against the Illumina TruSight Oncology 500 High-Throughput (TSO500 HT) and the Thermo Fisher Scientific Oncomine Precision Assay (OPA). Metrics were evaluated using a standardized reference sample set (Genome in a Bottle HG002 and Seraseq FFPE Tumor Fusion Mix v2) across challenging regions.

Table 1: Performance Metrics in High-GC (>65%) and Low-GC (<35%) Regions

| Metric | Veritas Panel | TSO500 HT | Oncomine Precision |

|---|---|---|---|

| Mean Fold-80 Penalty (High-GC) | 1.5x | 2.8x | 3.2x |

| Coverage Uniformity (% ≥0.2x mean) | 98.2% | 94.5% | 92.1% |

| SNV Sensitivity (High-GC) | 99.1% | 97.3% | 95.8% |

| SNV Sensitivity (Low-GC) | 99.4% | 98.1% | 97.5% |

| Indel Sensitivity (High-GC) | 98.5% | 96.0% | 93.7% |

Table 2: Performance in Regions of High Homology (Pseudogenes/Paralogs)

| Metric | Veritas Panel | TSO500 HT | Oncomine Precision |

|---|---|---|---|

| Specificity in KRAS (vs. KRASP1) | 99.99% | 99.97% | 99.95% |

| Specificity in IKZF1 (vs. IKZF2) | 99.98% | 99.90% | 99.85% |

| False Positive Calls per Sample | 0.1 | 0.4 | 0.7 |

Table 3: Low-Copy & Low-Coverage Reliability

| Metric | Veritas Panel | TSO500 HT | Oncomine Precision |

|---|---|---|---|

| SNV Sensitivity at 100x | 99.5% | 99.0% | 98.2% |

| SNV Sensitivity at 50x | 98.8% | 97.1% | 95.0% |

| Limit of Detection (VAF for SNVs) | 2% | 5% | 5% |

| Reportable Range (VAF) | 2%-100% | 5%-100% | 5%-100% |

Experimental Protocols for Cited Studies

Protocol 1: Assessment of Coverage Uniformity and GC Bias

- Sample Preparation: 100ng of HG002 gDNA was sheared via acoustics (Covaris). Libraries were prepared per each manufacturer's protocol (Veritas, Illumina, Thermo Fisher).

- Hybrid Capture: Captures were performed using manufacturer-specified conditions. For the Veritas panel, a proprietary GC-balanced buffer was used.

- Sequencing: All libraries were sequenced on an Illumina NovaSeq 6000 to a minimum mean coverage of 500x.

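- Data Analysis: Reads were aligned to GRCh38 and coverage was calculated per target base. The fold-80 penalty was computed as the mean target coverage divided by the coverage at the 20th percentile of target bases, that is, the fold over-sequencing needed to bring 80% of bases up to the mean. To characterize GC bias, targets were binned by GC content and the mean coverage per bin was normalized to the global mean.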
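The fold-80 penalty is commonly defined as the mean target coverage divided by the 20th-percentile coverage, so a perfectly uniform library scores 1.0. The sketch below applies that definition to an invented depth vector using a nearest-rank percentile; tools such as Picard use their own percentile and zero-coverage handling, so values may differ slightly from this illustration.

```python
import statistics


def fold_80_penalty(depths):
    """Mean coverage divided by the 20th-percentile coverage (nearest rank)."""
    if not depths:
        raise ValueError("depths must not be empty")
    ordered = sorted(depths)
    # Nearest-rank percentile with integer arithmetic: ceil(0.20 * n), at least 1.
    rank = max(1, (len(ordered) * 20 + 99) // 100)
    p20 = ordered[rank - 1]
    if p20 == 0:
        raise ValueError("20th-percentile coverage is zero; penalty is undefined")
    return statistics.fmean(ordered) / p20


# Hypothetical per-base depths for a small high-GC target set.
depths = [100, 200, 300, 400, 500, 500, 600, 700, 800, 900]
print(f"fold-80 penalty: {fold_80_penalty(depths):.2f}x")
```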

Protocol 2: Specificity Testing in Homologous Regions

- Targeted Samples: Synthetic DNA mixes containing known variants in KRAS codon 12 and IKZF1 exon 4 were spiked into wild-type background.

- Bioinformatic Challenge: Raw fastq files were analyzed through each vendor's standard pipeline and an additional "permissive" pipeline with relaxed filters.

- Specificity Calculation: Specificity was defined as [True Negatives / (True Negatives + False Positives)] at each homologous position. False positives were calls made in the wild-type sample that mapped uniquely to the paralogous region.

Protocol 3: Limit of Detection (LoD) Determination

- Variant Dilution Series: Certified reference variants (SNVs, Indels) were blended into wild-type genomic DNA at Variant Allele Frequencies (VAFs) of 10%, 5%, 2%, 1%, and 0.5%.

- Replication: Each VAF level was tested across 20 independent library replicates.

- LoD Definition: The lowest VAF at which the variant was detected with ≥95% sensitivity and ≥99.99% specificity across all replicates was established as the assay's LoD.
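The sensitivity half of this LoD definition can be applied mechanically to replicate hit counts. The sketch below walks the dilution series from the highest VAF downward and stops at the first level that misses the 95% threshold, so a low level cannot qualify if a higher one failed. The counts are invented, the function name is hypothetical, and the specificity criterion would need to be checked separately.

```python
def empirical_lod(detections, min_rate=0.95):
    """Lowest VAF whose detection rate, and that of every higher level, meets min_rate.

    detections maps VAF (%) to (replicates_detected, replicates_run).
    Returns None when even the highest level fails.
    """
    lod = None
    for vaf in sorted(detections, reverse=True):
        detected, run = detections[vaf]
        if run > 0 and detected / run >= min_rate:
            lod = vaf
        else:
            break
    return lod


# Hypothetical results for 20 library replicates per level.
series = {10: (20, 20), 5: (20, 20), 2: (19, 20), 1: (16, 20), 0.5: (7, 20)}
print(f"empirical LoD: {empirical_lod(series)}% VAF")
```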

Visualizing Analytical Validation for Challenging Regions

Analytical Validation Workflow for Challenging Regions

Bioinformatic Pipeline for Challenge Regions

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for Validating NGS in Challenging Regions

| Reagent / Material | Vendor Example | Function in Validation |

|---|---|---|

| GC-Balanced Hybridization Buffers | Integrated DNA Technologies | Reduces dropout in high-GC targets during capture, improving uniformity. |

| Synthetic Multiplex Reference Standards | Seracare (Seraseq) | Provides known, challenging variants at defined VAFs in an FFPE-like background for sensitivity/LoD tests. |

| Reference Genomes with Decoy Sequences | Genome in a Bottle Consortium | Includes alternative haplotypes and decoy sequences in the alignment index to improve mapping specificity in homologous regions. |

| PCR Inhibitor-Reducing Polymerases | Roche (KAPA HiFi) | Enhances amplification efficiency of GC-rich fragments, reducing bias. |

| Unique Molecular Identifiers (UMIs) | New England Biolabs (NEBNext) | Tags individual DNA molecules to correct for PCR duplicates and sequencing errors, critical for low-VAF detection. |

| Bioinformatic Blacklist Bed Files | UCSC Genome Browser | Lists coordinates of known problematic (high homology, high repeat) regions to guide variant filtering. |

The analytical validation of Next-Generation Sequencing (NGS) for clinical diagnostics demands robust performance across challenging sample types. Formalin-Fixed Paraffin-Embedded (FFPE) tissues, liquid biopsy-derived cell-free DNA (cfDNA), and low-input DNA samples present unique obstacles including fragmentation, low yield, and sequencing artifacts. This comparison guide objectively evaluates the performance of modern NGS library preparation kits against these challenges, framed within essential validation parameters of sensitivity, specificity, and reproducibility.

Performance Comparison of NGS Solutions for Challenging Samples

The following table summarizes key performance metrics from recent studies comparing leading high-performance library prep kits (Kit A and Kit B) against a standard baseline kit for difficult sample types.

Table 1: Comparative Performance Metrics for Challenging Sample Types

| Sample Type / Metric | Standard Kit | Kit A (Ultra-sensitive) | Kit B (FFPE & Low-Input Optimized) |

|---|---|---|---|

| FFPE DNA (50ng input) | | | |

| • Mapping Rate (%) | 92.5 ± 3.1 | 98.2 ± 0.8 | 97.8 ± 1.2 |

| • Duplicate Rate (%) | 45.2 ± 10.5 | 28.4 ± 6.3 | 22.1 ± 5.7 |

| • SNP Concordance (%) | 95.1 ± 2.5 | 99.3 ± 0.4 | 98.9 ± 0.6 |

| Liquid Biopsy cfDNA (10ng input) | | | |

| • Library Complexity (Unique Reads) | 1.2e6 ± 0.3e6 | 4.5e6 ± 0.5e6 | 3.8e6 ± 0.4e6 |

| • Variant Allele Frequency (VAF) Limit of Detection | 5% | 0.1% | 0.5% |

| • Chimeric Read Artifact Rate (%) | 0.15 | 0.02 | 0.05 |

| Low-Input Genomic DNA (1ng input) | | | |

| • Assay Success Rate (n=20) | 55% | 100% | 95% |

| • Coverage Uniformity (% of target @ 20x) | 65.2% | 92.7% | 89.5% |

| • PCR Amplification Bias (CV) | 35% | 12% | 15% |

Data synthesized from published validation studies (2023-2024). Kit A specializes in ultra-low frequency variant detection, while Kit B offers balanced performance across FFPE and low-input scenarios.

Experimental Protocols for Key Validation Studies

Protocol 1: Evaluating FFPE DNA Restoration and Accuracy

- Objective: Determine the impact of library prep chemistry on sequencing artifacts and variant recovery from degraded FFPE DNA.

- Sample: Serially degraded reference DNA (200bp-500bp fragment modal length) and matched FFPE tissue DNA extracts.

- Input: 50ng per replicate (n=10 per kit).

- Method: Libraries were prepared per manufacturer protocols. All libraries were enriched using the same pan-cancer panel (500 genes) and sequenced on an Illumina NovaSeq 6000 (2x150bp) to a mean depth of 500x. Bioinformatic analysis used a standardized pipeline (BWA-MEM2 alignment, GATK Best Practices). SNP concordance was assessed against matched fresh-frozen sample data. Duplicate rates were calculated with Picard MarkDuplicates.

Protocol 2: Determining Limit of Detection for Liquid Biopsy

- Objective: Establish the minimum variant allele frequency (VAF) detectable with 95% confidence for each kit.

- Sample: Horizon Discovery cfDNA Reference Standard (Seraseq) with known SNV variants at allelic frequencies from 0.01% to 5%.

- Input: 10ng cfDNA per replicate (n=12 per kit/allele frequency).

- Method: Libraries were prepared with unique molecular identifier (UMI) handling per kit design. Target capture was performed with a 200-gene liquid biopsy panel. Libraries were sequenced to a mean unique depth of 50,000x. Variant calling required ≥3 unique supporting UMI families. LoD was calculated using a logistic regression model fitting the detection probability vs. input VAF.
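The logistic-regression step can be illustrated with a small, dependency-free fit. This is a sketch under stated assumptions rather than the model used in the study: detection probability is modeled against log10(VAF), the fit uses plain Newton-Raphson iterations, and the detection counts are hypothetical.

```python
import math

def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def fit_logistic(xs, hits, trials, iterations=50, tol=1e-10):
    """Fit P(detect) = sigmoid(b0 + b1 * x) to binomial counts by Newton-Raphson."""
    b0 = b1 = 0.0
    for _ in range(iterations):
        g0 = g1 = h00 = h01 = h11 = 0.0
        for x, k, n in zip(xs, hits, trials):
            p = sigmoid(b0 + b1 * x)
            w = n * p * (1.0 - p)   # binomial variance weight
            r = k - n * p           # observed minus expected detections
            g0 += r
            g1 += r * x
            h00 += w
            h01 += w * x
            h11 += w * x * x
        det = h00 * h11 - h01 * h01
        if det <= 0:
            raise ValueError("singular information matrix; data may be separable")
        d0 = (h11 * g0 - h01 * g1) / det
        d1 = (h00 * g1 - h01 * g0) / det
        b0 += d0
        b1 += d1
        if abs(d0) + abs(d1) < tol:
            break
    return b0, b1

def lod_at(b0, b1, target=0.95):
    """VAF (%) at which the fitted detection probability equals `target`."""
    logit = math.log(target / (1.0 - target))
    return 10 ** ((logit - b0) / b1)

# Hypothetical detections out of 12 replicates per VAF level (%).
vafs = [0.01, 0.05, 0.1, 0.25, 0.5, 1.0, 5.0]
detected = [0, 2, 5, 9, 11, 12, 12]
b0, b1 = fit_logistic([math.log10(v) for v in vafs], detected, [12] * len(vafs))
print(f"Estimated LoD95: {lod_at(b0, b1):.2f}% VAF")
```

If every VAF level is either fully detected or fully missed, the data are perfectly separable and the fit has no finite solution, so a dilution series should include levels with partial detection.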

Protocol 3: Assessing Low-Input DNA Performance and Bias

- Objective: Measure library complexity, coverage uniformity, and amplification bias from trace DNA inputs.

- Sample: Coriell Institute human genomic DNA serially diluted to 1ng.

- Input: 1ng and 10ng (control) per replicate (n=20 per condition).

- Method: Library preparation followed low-input protocols. Whole-exome capture was performed without pre-capture amplification. Sequencing depth was normalized to 100x mean coverage. Library complexity was inferred from non-duplicate read pairs. Coverage uniformity was measured as the percentage of exome bases achieving ≥20x coverage. Amplification bias was calculated as the coefficient of variation (CV) of read counts across 1000 randomly selected 100bp genomic bins.
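The two summary statistics in this protocol are simple to compute once per-base depths and per-bin read counts are available. The sketch below uses hypothetical values, and the use of the population standard deviation for the CV is an assumption; the source does not say which estimator was used.

```python
from statistics import mean, pstdev

def pct_bases_at_depth(depths, threshold=20):
    """Percentage of target bases with coverage at or above `threshold`."""
    return 100.0 * sum(d >= threshold for d in depths) / len(depths)

def coefficient_of_variation(counts):
    """CV (%) of read counts across bins: standard deviation / mean."""
    return 100.0 * pstdev(counts) / mean(counts)

# Hypothetical per-base depths and per-bin read counts.
print(pct_bases_at_depth([10, 25, 30, 19, 20]))
print(coefficient_of_variation([90, 100, 110]))
```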

Visualizing NGS Workflow for Challenging Samples

Title: NGS Workflow for Challenging Clinical Samples

Key Signaling Pathways in Cancer Relevant to Liquid Biopsy Analysis

Title: Core Cancer Signaling Pathways Detected by Liquid Biopsy

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Reagents and Materials for NGS Validation on Challenging Samples

| Reagent/Material | Function in Validation | Example Product/Types |

|---|---|---|

| Fragment Analyzer / Bioanalyzer | Assesses DNA fragment size distribution and degree of degradation in FFPE/cfDNA samples prior to library prep. | Agilent Bioanalyzer, Agilent TapeStation, Fragment Analyzer |

| Digital PCR (dPCR) System | Provides absolute quantification of DNA input and validates low-VAF variants detected by NGS for LOD studies. | Bio-Rad QX200, QuantStudio Absolute Q |

| Duplex-Specific Nuclease (DSN) | Reduces background wild-type signal in liquid biopsy assays by normalizing abundant wild-type sequences. | Evrogen DSN Enzyme |

| Hybridization Capture Beads | Enriches target genomic regions; bead chemistry impacts efficiency and off-target rates with fragmented/low-input DNA. | IDT xGen, Twist Hyb & Wash Buffers, MyOne Streptavidin C1 |

| Unique Molecular Identifiers (UMIs) | Tags individual DNA molecules pre-amplification to enable bioinformatic correction of PCR and sequencing errors. | IDT Duplex UMIs, Twist Unique Dual Indices |

| DNA Restoration/Repair Enzyme Mix | Repairs deamination artifacts (C>T changes common in FFPE) and nicks in degraded DNA templates. | NEB PreCR Repair Mix, Archer FFPE Repair Solution |

| Low-Binding Microcentrifuge Tubes | Minimizes adsorption of precious low-input and cfDNA samples to plastic surfaces during processing. | Eppendorf LoBind, Axygen Low-Retention Tubes |

| Methylation-Controlled DNA | Serves as a process control for bisulfite conversion efficiency in epigenetic assays from FFPE samples. | Zymo Research EpiMark PCR Control |

Rigorous bioinformatic pipelines are paramount to the analytical validation of NGS for clinical diagnostic use. This guide compares performance metrics for critical tools addressing three common troubleshooting areas: filter optimization, contamination detection, and pipeline version control.

Filter Optimization for Variant Calling

Optimizing filter thresholds is crucial to balance sensitivity and precision in clinical variant detection. We compared GATK's Variant Quality Score Recalibration (VQSR) with bcftools' hard-filtering approach using an in-silico mix of NA12878 (truth set) and synthetic variants.

Experimental Protocol:

- Data: Illumina HiSeq X Ten data for NA12878 (GIAB v4.2.1 benchmark). Artificially introduced low-quality variants (simulated with `bsim`) spiked into BAM files.

- Variant Calling: Variants called with GATK HaplotypeCaller (v4.4.0.0) across all samples.

- Filtering:

- Method A (GATK VQSR): Applied tranche sensitivity thresholds of 99.9%, 99.0%, and 95.0%.

- Method B (bcftools): Hard-filtering with `QUAL<30 || DP<10 || MQ<50.0 || FS>60.0`.

- Validation: Filtered VCFs compared against GIAB truth set using `hap.py` (v0.3.16).
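To make the Method B thresholds concrete, the hard-filter expression can be restated as a plain predicate. This is an illustrative sketch, not bcftools itself: the record is a hypothetical dictionary, and every annotation is assumed to be present (handling of missing values is not modeled).

```python
def fails_hard_filter(record):
    """True if a variant matches QUAL<30 || DP<10 || MQ<50.0 || FS>60.0."""
    return (
        record["QUAL"] < 30
        or record["DP"] < 10
        or record["MQ"] < 50.0
        or record["FS"] > 60.0
    )

# Hypothetical variant records.
good = {"QUAL": 250.0, "DP": 42, "MQ": 60.0, "FS": 1.3}
strand_biased = {"QUAL": 250.0, "DP": 42, "MQ": 60.0, "FS": 75.0}
print(fails_hard_filter(good), fails_hard_filter(strand_biased))
```

Because the conditions are joined with a logical OR, a variant is flagged as soon as any single annotation crosses its threshold.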

Table 1: Performance Comparison of Filtering Methods (SNVs)

| Method | Sensitivity (%) | Precision (%) | F1-Score |

|---|---|---|---|

| GATK VQSR (99.9% sens) | 99.91 | 99.42 | 99.66 |

| GATK VQSR (99.0% sens) | 98.95 | 99.89 | 99.42 |

| bcftools hard-filter | 98.12 | 99.75 | 98.93 |
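The F1-Score column is the harmonic mean of sensitivity and precision, so it can be recomputed directly from the other two columns. A short check using the values in Table 1:

```python
def f1_score(sensitivity, precision):
    """Harmonic mean of sensitivity (recall) and precision, in the same units."""
    return 2 * sensitivity * precision / (sensitivity + precision)

# (sensitivity %, precision %) pairs taken from Table 1.
table_1 = {
    "GATK VQSR (99.9% sens)": (99.91, 99.42),
    "GATK VQSR (99.0% sens)": (98.95, 99.89),
    "bcftools hard-filter": (98.12, 99.75),
}
for method, (sens, prec) in table_1.items():
    print(f"{method}: F1 = {f1_score(sens, prec):.2f}")
```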

Title: Variant Filter Optimization Workflow Comparison

Contamination Detection & Estimation

Cross-sample contamination can lead to false positives. We assessed the accuracy and runtime of two tools: VerifyBamID2 (v2.0.3) and Conpair (v0.2.2).

Experimental Protocol:

- Data: Prepared contaminated BAMs by computationally merging sequencing reads from NA12878 and NA24385 at known contamination levels (0.5%, 2%, 5%).

- Method A (VerifyBamID2): Ran with `--Precise` mode and a population allele frequency (AF) panel.

- Method B (Conpair): Used the `estimate` command with built-in concordant SNP markers.

- Validation: Compared estimated contamination fractions against the known computational mixing fractions.
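Two calculations sit behind this protocol: how many contaminant reads to mix in for a target contamination level, and how far each tool's estimate falls from the known level. The sketch below is a minimal illustration with hypothetical read counts and estimates. It assumes all reads from the primary sample are kept and the contaminant sample is subsampled.

```python
def contaminant_subsample_fraction(n_primary, n_contaminant, contamination):
    """Fraction of contaminant reads to keep so they make up `contamination`
    of the merged read set, keeping every primary read."""
    needed = contamination / (1.0 - contamination) * n_primary
    if needed > n_contaminant:
        raise ValueError("not enough contaminant reads for this level")
    return needed / n_contaminant

def mean_absolute_error(known, estimated):
    """Average absolute difference between known and estimated levels."""
    return sum(abs(k - e) for k, e in zip(known, estimated)) / len(known)

# Hypothetical read counts: 950 primary reads, 1000 contaminant reads, 5% target.
print(contaminant_subsample_fraction(950, 1000, 0.05))

# Known mixing levels (%) from the protocol and hypothetical tool estimates (%).
known_levels = [0.5, 2.0, 5.0]
hypothetical_estimates = [0.6, 2.1, 4.85]
print(mean_absolute_error(known_levels, hypothetical_estimates))
```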

Table 2: Contamination Estimation Accuracy & Runtime

| Tool | Input | Avg. Error (Δ %) | Runtime (min) |

|---|---|---|---|

| VerifyBamID2 | BAM | 0.12 | 22 |

| Conpair | BAM/VCF | 0.45 | 8 |

Title: Contamination Detection Tool Pathways

Pipeline Version Control & Reproducibility

Reproducibility is non-negotiable in clinical diagnostics. We compared traditional scripting (Make) with specialized workflow managers (Nextflow).

Experimental Protocol:

- Pipeline: A representative clinical NQC (NGS Quality Control) pipeline with FastQC, BWA-MEM, and Samtools stats.

- Method A (Make): Implemented with GNU Make (v4.3), using file timestamps for dependency tracking.

- Method B (Nextflow): Implemented with Nextflow (v23.10.0) and Docker containerization.

- Test: Introduced a minor change in a QC parameter, forcing re-execution of downstream steps. Measured time to completion and ease of debugging.
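The two tools decide what to re-execute in different ways: Make compares file timestamps, while Nextflow's resume feature relies on task hashes. The sketch below contrasts the two decision rules in simplified form. It is not either tool's actual implementation, and the function names, parameters, and digests are hypothetical.

```python
import hashlib
import json

def needs_rerun_by_timestamp(target_mtime, dependency_mtimes):
    """Make-style rule: rebuild if the target is missing or older than a dependency."""
    if target_mtime is None:
        return True
    return any(dep > target_mtime for dep in dependency_mtimes)

def task_key(script, params, input_digests):
    """Hash-style rule: a key derived from the command, parameters, and input digests."""
    payload = json.dumps(
        {"script": script, "params": params, "inputs": input_digests},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

def needs_rerun_by_hash(cache, script, params, input_digests):
    """Re-run only if no cached result exists for this exact task key."""
    return task_key(script, params, input_digests) not in cache

# A cached QC step, then the same step with one changed parameter (hypothetical values).
cache = {task_key("samtools stats", {"min_mapq": 20}, ["abc123"])}
print(needs_rerun_by_hash(cache, "samtools stats", {"min_mapq": 20}, ["abc123"]))
print(needs_rerun_by_hash(cache, "samtools stats", {"min_mapq": 30}, ["abc123"]))
print(needs_rerun_by_timestamp(100.0, [90.0, 95.0]))
```

Under the hash rule a parameter change alters the key even when no file timestamp changes, which is one reason hash-based caching is easier to audit in a clinical setting.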

Table 3: Workflow Manager Comparison for a Re-run Event

| Feature | Make | Nextflow |

|---|---|---|

| Re-run Time (min) | 18 | 6 |

| Explicit Version Logging | No | Yes |

| Container Support | Manual | Native |

| Resume Capability | Partial | Full |

Title: Version Control Re-run Logic Comparison

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Reagents & Materials for NGS Analytical Validation

| Item | Function in Validation |

|---|---|

| GIAB Reference Materials | Provides benchmark variant calls for assessing pipeline sensitivity/specificity. |

| Seraseq NGS Fusion Mix | Multiplexed positive control for fusion detection assays. |

| Horizon Multiplex IMC | Defined, low-frequency variant mixes for limit-of-detection studies. |

| PhiX Control v3 | Universal control for monitoring sequencing run quality and base calling. |

| UMI Adapter Kits | Enables unique molecular identifiers for error correction and ultrasensitive variant detection. |

Quality Control Metrics and Continuous Monitoring for Sustained Assay Performance

The analytical validation of Next-Generation Sequencing (NGS) for clinical diagnostics establishes the foundational performance characteristics of an assay. However, sustained performance in clinical practice requires robust quality control (QC) metrics and continuous monitoring protocols. This guide compares QC monitoring strategies using a commercially available NGS tumor panel against alternative approaches, framing the discussion within the critical need for longitudinal assay stability in drug development and clinical research.

Experimental Protocol for Longitudinal QC Monitoring

A standardized experiment was designed to evaluate assay drift and reproducibility over time.

- QC Material: A commercially available reference standard (e.g., Seraseq FFPE Tumor DNA Mutation Mix) with known variant allele frequencies (VAFs) at 5%, 10%, and 20% was used.

- Assays Compared:

- Assay A: Commercial targeted NGS panel (e.g., Illumina TruSight Oncology 500).

- Assay B: Laboratory-Developed Test (LDT) using a capture-based panel.

- Assay C: Amplicon-based NGS panel (e.g., QIAGEN GeneRead).

- Study Design: Each QC material was processed in triplicate monthly for six months using each assay platform. All runs included positive and negative controls.