Mastering ChIP-seq Normalization: Essential Methods for Accurate Peak Calling and Differential Analysis


Abstract

This comprehensive guide provides researchers, scientists, and drug development professionals with an in-depth exploration of ChIP-seq normalization methodologies. Covering foundational concepts to advanced applications, the article explains why normalization is critical for accurate peak detection and comparative chromatin studies. It details key methods including Reads Per Million (RPM), the median-of-ratios and TMM scaling implemented in DESeq2 and edgeR, and normalization to input controls. We address common pitfalls, troubleshooting strategies, and optimization techniques for real-world experimental designs. Finally, we present a comparative framework for method validation and selection, empowering readers to choose and implement the most appropriate normalization strategy for their specific research goals in epigenetics and therapeutic development.

Why Normalize? The Foundational Need for Data Standardization in ChIP-seq

Normalization in ChIP-seq is the process of adjusting raw read counts to account for technical biases and variability, enabling accurate biological comparisons. It is non-negotiable because differences in sequencing depth, DNA input, chromatin accessibility, and immunoprecipitation efficiency can create false positives or obscure real signal. Without normalization, differential binding analysis and quantitative comparisons across samples are invalid.

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My ChIP-seq replicates show high variability after peak calling. How do I determine if it's a normalization issue? A: High inter-replicate variability often stems from improper input control normalization. First, rule out upstream problems: check read quality with FastQC, library complexity (e.g., duplication rates), and alignment rates, and aggregate these reports with MultiQC. Then compare the global scaling factors estimated by DESeq2 or edgeR. If the factors vary by more than 2-fold, re-normalize with a robust method such as Median Ratio Normalization (MRN) applied to a count matrix of common peaks. Always visually inspect correlation plots and PCA plots of the normalized read counts.

Q2: When comparing treatments, should I use Input DNA or a reference sample for normalization? A: For most differential binding analyses, you must use both.

- Input DNA: Corrects for background noise and chromatin accessibility bias. It is used initially to generate an "enrichment" signal (e.g., by calculating a log2(ChIP/Input) ratio).

- Between-sample normalization: Corrects for differences in total reads and IP efficiency between your treatment samples. This is typically applied to the enrichment values or read counts in peaks using methods implemented in tools like csaw or DiffBind.
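To make the log2(ChIP/Input) step concrete, here is a minimal Python sketch for a single region. It assumes raw read counts for the region and total mapped reads for each library are already known; the function name and the pseudocount of 1 are illustrative choices, not taken from a specific tool.

```python
import math

def log2_enrichment(chip_count, input_count, chip_total, input_total, pseudocount=1.0):
    """log2(ChIP/Input) for one region after scaling each library to counts per million.

    The pseudocount is an illustrative choice that avoids log(0) in empty regions.
    """
    chip_cpm = (chip_count + pseudocount) * 1e6 / chip_total
    input_cpm = (input_count + pseudocount) * 1e6 / input_total
    return math.log2(chip_cpm / input_cpm)

# Hypothetical region: the ChIP library was sequenced twice as deeply as the input.
score = log2_enrichment(299, 49, chip_total=20_000_000, input_total=10_000_000)
print(round(score, 3))  # 1.585, i.e. 3-fold enrichment after depth correction
```

Without the CPM step, the same raw counts (300 vs. 50 after the pseudocount) would suggest 6-fold enrichment, overstating the signal by exactly the depth difference between the libraries.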

Table 1: Common Normalization Methods for ChIP-seq

| Method | Principle | Best For | Tool/Package |
|---|---|---|---|
| Reads Per Million (RPM/CPM) | Scales counts by total library size. | Preliminary visualization; comparing peak intensity when depths are similar. | deepTools bamCoverage, bedtools genomecov |
| Median Ratio Normalization (MRN) | Scales each sample by the median ratio of its counts to a per-region geometric mean across samples; assumes most genomic regions are not differentially bound. | Differential binding analysis with high replicate consistency. | DESeq2, edgeR (RLE) |
| Quantile Normalization | Forces the distribution of read counts to be identical across samples. | Samples with very similar binding profiles and global patterns. | limma, preprocessCore |
| Peak-based Trimmed Mean of M-values (TMM) | Computes a weighted mean of log-ratios after trimming the most extreme log-ratios and abundances; assumes most peaks are not differentially bound. | Differential binding when changes are confined to a minority of peaks; not suited to global binding changes. | edgeR, DiffBind (DBA_NORM_TMM) |
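As an illustration of the quantile normalization row, the following Python sketch replaces each value with the mean of the values that share its rank across samples. It is a simplified version for equal-length count vectors: ties are broken by original position, whereas libraries such as limma and preprocessCore handle ties more carefully.

```python
def quantile_normalize(samples):
    """Quantile-normalize equal-length count vectors (one list per sample).

    Each value is replaced by the mean, across samples, of the values with the same rank.
    Ties are broken by position (a simplification).
    """
    n = len(samples[0])
    if any(len(s) != n for s in samples):
        raise ValueError("all samples must cover the same regions")
    # Reference distribution: mean of the sorted values at each rank.
    sorted_cols = [sorted(s) for s in samples]
    reference = [sum(col[r] for col in sorted_cols) / len(samples) for r in range(n)]
    normalized = []
    for s in samples:
        order = sorted(range(n), key=lambda i: s[i])  # region indices by increasing count
        out = [0.0] * n
        for rank, idx in enumerate(order):
            out[idx] = reference[rank]
        normalized.append(out)
    return normalized

# Two hypothetical samples over four regions.
print(quantile_normalize([[5, 2, 3, 4], [4, 1, 4, 2]]))
# [[4.5, 1.5, 2.5, 4.0], [4.0, 1.5, 4.5, 2.5]]
```

After normalization both samples contain exactly the same set of values, which is why the method only suits samples expected to share a global signal distribution.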

Q3: How do I normalize ChIP-seq data for a factor with global binding changes (e.g., histone modification across conditions)? A: This is a critical challenge. Avoid methods assuming most features are unchanged (like standard MRN).

- Use a set of invariant genomic regions (e.g., housekeeping gene promoters) or spike-in controls. When no spike-in was added, spike-in-free scaling approaches such as the ChIPseqSpikeInFree package are an alternative.

- Apply a control-based method like SPIKE-IN Normalization (see protocol below) or Non-Redundant Reference (NRR) normalization in the normr package.

- Visually assess normalization success by checking the distribution of reads over genomic features expected to be stable (e.g., silent intergenic regions).

Experimental Protocols

Protocol 1: Median Ratio Normalization for Differential Peak Analysis

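- Peak Calling & Counting: Call peaks per sample (e.g., with MACS2). Generate a consensus peak set by sorting the pooled peaks and merging them with `bedtools merge`. Count reads in each peak for every sample using `featureCounts` or `DiffBind`.

- Calculate Size Factors: For a count matrix with $k_{ij}$ reads in peak $j$ of sample $i$ and $M$ samples, compute the size factor of sample $i$ as $SF_i = \operatorname{median}_j \left( k_{ij} \big/ \left( \prod_{m=1}^{M} k_{mj} \right)^{1/M} \right)$, i.e., the median over peaks of each count's ratio to that peak's geometric mean across samples. Peaks with a zero count in any sample are excluded.

- Normalize Counts: Divide the raw count for each peak in sample $i$ by $SF_i$.

- Proceed with Statistical Testing: Provide the raw count matrix to a negative binomial model (e.g., DESeq2), which applies the size factors internally to call differential peaks; use the normalized counts for visualization and quality control.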
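The size-factor formula above can be sketched in Python as follows. This is a minimal re-implementation for inspecting factors (for example, to apply the >2-fold rule of thumb from Q1); for testing itself, DESeq2 should still receive the raw matrix. The example counts are hypothetical.

```python
import math
from statistics import median

def median_ratio_size_factors(counts):
    """DESeq-style median-of-ratios size factors.

    counts: one row per consensus peak, each row holding raw counts for every sample.
    Peaks with a zero in any sample are skipped because their geometric mean is zero.
    """
    n_samples = len(counts[0])
    ratios = [[] for _ in range(n_samples)]
    for row in counts:
        if min(row) <= 0:
            continue
        log_geo_mean = sum(math.log(c) for c in row) / n_samples
        for j, c in enumerate(row):
            # ratio of this sample's count to the peak's geometric mean across samples
            ratios[j].append(math.exp(math.log(c) - log_geo_mean))
    if not ratios[0]:
        raise ValueError("no peak has non-zero counts in every sample")
    return [median(r) for r in ratios]

# Hypothetical 4-peak x 2-sample matrix; sample 2 was sequenced ~2x deeper.
peaks = [[100, 200], [50, 100], [30, 61], [0, 5]]
sf = median_ratio_size_factors(peaks)
normalized = [[c / s for c, s in zip(row, sf)] for row in peaks]
print([round(s, 3) for s in sf])  # [0.707, 1.414]
```

The two factors differ 2-fold, mirroring the depth difference; peak 4 is ignored when estimating them because of its zero count.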

Protocol 2: SPIKE-IN Normalization for Global Changes

- Spike-in Addition: Spike a constant amount of chromatin from a distinct organism (e.g., D. melanogaster chromatin into human chromatin) into each ChIP reaction before immunoprecipitation.

- Sequencing & Alignment: Sequence the pooled library. Align reads separately to the experimental genome (e.g., hg38) and the spike-in genome (e.g., dm6).

- Calculate Scaling Factor: Let $R_{exp,i}$ and $R_{spike,i}$ be the reads from sample $i$ aligned to the experimental and spike-in genomes. The scaling factor for sample $i$ is $SF_i = \dfrac{R_{exp,i} / \sum_j R_{exp,j}}{R_{spike,i} / \sum_j R_{spike,j}}$. A sample that captured proportionally more spike-in reads contains relatively less target chromatin, so it receives a smaller factor.

- Apply Normalization: Multiply the experimental sample's depth-normalized (CPM) coverage or read counts by $SF_i$ for all downstream analyses. This is equivalent to scaling raw counts in proportion to $1/R_{spike,i}$.
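A minimal Python sketch of the spike-in factor above, assuming experimental and spike-in read totals have already been counted per sample. It applies the factor to CPM values, the setting in which the formula is equivalent to scaling raw counts by 1/R_spike; all numbers are hypothetical.

```python
def spike_in_factors(r_exp, r_spike):
    """SF_i = (R_exp_i / sum R_exp) / (R_spike_i / sum R_spike), for CPM-normalized data."""
    tot_exp, tot_spike = sum(r_exp), sum(r_spike)
    return [(e / tot_exp) / (s / tot_spike) for e, s in zip(r_exp, r_spike)]

# Two samples with equal experimental depth; sample B captured twice as many
# spike-in reads, i.e. relatively less target chromatin.
r_exp = [20_000_000, 20_000_000]
r_spike = [500_000, 1_000_000]
sf = spike_in_factors(r_exp, r_spike)                         # ~[1.5, 0.75]

raw_peak = [400, 400]                                         # identical raw counts in one peak
cpm_values = [c * 1e6 / e for c, e in zip(raw_peak, r_exp)]   # depth normalization first
scaled = [x * f for x, f in zip(cpm_values, sf)]              # then the spike-in factor
print([round(x, 6) for x in scaled])                          # sample B ends at half of A
```

Because the product of CPM and $SF_i$ reduces to raw counts times a constant divided by $R_{spike,i}$, this gives the same relative signal as the per-million-spike-in and minimum-based factors used in later protocols.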

Visualizations

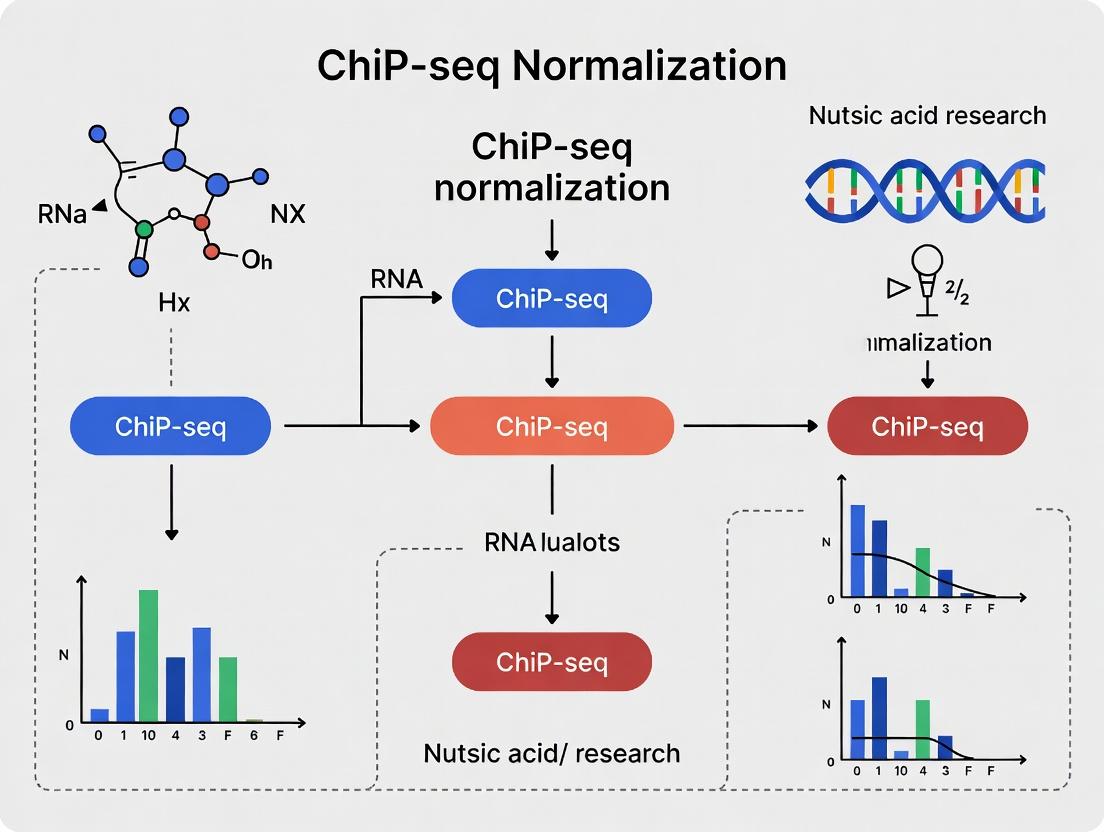

Title: ChIP-seq Normalization Decision Workflow

Title: SPIKE-IN Normalization Experimental Process

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Robust ChIP-seq Normalization

| Item | Function | Example/Supplier |
|---|---|---|
| Commercial Input DNA | Provides a standardized, high-quality background control for Input library preparation. | EpiTect Control DNA (Qiagen) |
| Cross-species Chromatin Spike-in | Enables absolute normalization for experiments with global binding changes. | D. melanogaster S2 chromatin (Active Motif, 61686) |
| Library Quantification Kit | qPCR-based quantification of adapter-ligated libraries before sequencing, for balanced pooling and sequencing depth. | KAPA Library Quantification Kit (Roche) |
| PCR Duplication Removal Enzyme | Reduces PCR bias, improving accuracy of quantitative peak intensity measures. | Zumax Bio Clean-Plex PCR Duplicate Remover |
| High-Fidelity & Low-Bias Amplification Kits | Maintains representation during library amplification, critical for count-based methods. | KAPA HiFi HotStart ReadyMix (Roche), NEBNext Ultra II Q5 (NEB) |

Technical Support Center: Troubleshooting ChIP-seq Technical Biases

This support center is designed to assist researchers in identifying and mitigating key technical biases in ChIP-seq data, framed within the critical context of developing and selecting appropriate normalization methods for downstream analysis.

Troubleshooting Guides

Issue: Inconsistent Peak Numbers Between Replicates

- Problem: Biological replicates show significantly different numbers of called peaks.

- Diagnosis Checkpoints:

- Compare library sizes (total aligned reads). A >2-fold difference suggests library size bias.

- Check the FastQC "Per sequence GC content" module for deviations from the expected GC distribution.

- Verify that mapping rates are consistent and high (>70% for standard genomes).

- Solution: Apply a normalization method that accounts for library size (e.g., Counts Per Million - CPM) as a baseline. If disparity remains, explore GC-content normalization tools.
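A small Python sketch of the baseline check and CPM scaling described above. The 2-fold threshold mirrors the diagnosis checkpoint; the function names and library sizes are hypothetical.

```python
def cpm(counts, library_size):
    """Counts per million: divide raw counts by total aligned reads, times 1e6."""
    return [c * 1e6 / library_size for c in counts]

def depth_imbalance(library_sizes, max_fold=2.0):
    """True if the deepest library exceeds the shallowest by more than `max_fold`."""
    return max(library_sizes) / min(library_sizes) > max_fold

# Hypothetical aligned-read totals, e.g. taken from `samtools flagstat`.
libraries = {"rep1": 35_000_000, "rep2": 12_000_000}
print(depth_imbalance(list(libraries.values())))  # True (about 2.9-fold)
print(cpm([120, 45], libraries["rep1"]))
```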

Issue: Poor Correlation of Signal in Non-Peak Regions

- Problem: Genome browser visualization shows consistent signal in peak regions but high variability in background (non-peak) regions between samples.

- Diagnosis Checkpoints:

- Generate a plot of read depth vs. GC-content percentage across genomic bins.

- Examine low-mappability regions (e.g., centromeres, telomeres) for spurious, variable signal.

- Solution: This strongly indicates GC bias and/or mappability bias. Implement a bias correction method such as deepTools `correctGCBias`, or use a normalization approach (e.g., SES, S3V2) that incorporates these factors.

Issue: Differential Peak Analysis Results are Skewed Towards Long or High-Input Regions

- Problem: Results from tools like DiffBind seem to call more differential peaks in genic or specific genomic compartments.

- Diagnosis Checkpoints:

- Correlate called differential peaks with input control signal.

- Check if peak length or local mappability correlates with significance.

- Solution: Ensure your differential analysis uses an appropriate normalization (e.g., TMM, RLE) that is robust to these compositional biases, rather than simple library size normalization.
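To show what TMM does differently from plain library-size scaling, here is a simplified Python sketch of the TMM idea used by edgeR: log-ratios (M) and average abundances (A) are trimmed, and the remaining log-ratios are averaged with inverse-variance weights. The simple rank-based trimming is an assumption; edgeR's `calcNormFactors` differs in details, so use it for real analyses.

```python
import math

def tmm_factor(sample, ref, m_trim=0.3, a_trim=0.05):
    """Simplified TMM normalization factor of `sample` relative to `ref`.

    sample, ref: raw counts over the same peaks. Multiply the sample's library size
    by the returned factor to obtain its effective library size.
    """
    n_s, n_r = sum(sample), sum(ref)
    feats = []
    for y_s, y_r in zip(sample, ref):
        if y_s == 0 or y_r == 0:
            continue  # log-ratio undefined for zero counts
        p_s, p_r = y_s / n_s, y_r / n_r
        m = math.log2(p_s / p_r)                  # log fold change (M)
        a = 0.5 * math.log2(p_s * p_r)            # average log abundance (A)
        var = (n_s - y_s) / (n_s * y_s) + (n_r - y_r) / (n_r * y_r)
        feats.append((m, a, 1.0 / var))          # inverse-variance weight
    n = len(feats)
    lo_m, lo_a = int(n * m_trim), int(n * a_trim)
    keep_m = sorted(range(n), key=lambda i: feats[i][0])[lo_m:n - lo_m]
    keep_a = sorted(range(n), key=lambda i: feats[i][1])[lo_a:n - lo_a]
    keep = set(keep_m) & set(keep_a)
    if not keep:
        raise ValueError("too few peaks left after trimming")
    weighted = sum(feats[i][2] * feats[i][0] for i in keep)
    total_w = sum(feats[i][2] for i in keep)
    return 2 ** (weighted / total_w)

ref = [100] * 20
sample = [100] * 18 + [5000, 5000]   # two hypothetical peaks gained strongly in `sample`
factor = tmm_factor(sample, ref)
print(round(factor, 4))              # 0.1695
print(round(sum(sample) * factor))   # 2000: effective library size
```

Plain library-size scaling would make the 18 unchanged peaks look about 5.9-fold depleted (11,800 vs. 2,000 total reads); with the TMM effective library size of 2,000 they end up with equal normalized counts in both samples.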

Frequently Asked Questions (FAQs)

Q1: What is the first normalization step I should always do for my ChIP-seq data? A: Library size normalization (e.g., CPM, RPM) is the fundamental first step. It corrects for the fact that samples sequenced to different depths cannot be directly compared. However, library-size scaling alone is often insufficient to correct for GC bias and mappability effects.

Q2: How can I diagnose GC bias in my dataset?

A: Use deepTools computeGCBias, which generates a plot comparing the observed versus expected read count as a function of genomic GC content. A significant deviation from an observed/expected ratio of 1 indicates GC bias. plotFingerprint is a complementary check of overall ChIP enrichment, not of GC bias. The protocol is below.

Q3: My organism has a complex genome with low-mappability regions. How does this affect my analysis? A: Low-mappability regions (e.g., repeat-rich areas) cause ambiguous read alignment, leading to inconsistent signal and false positives/negatives. It introduces variation that is technical, not biological. Normalization methods that incorporate mappability tracks (e.g., by weighting or masking) are essential for robust analysis in such genomes.

Q4: Are there integrated tools that handle multiple biases at once for normalization?

A: Yes, recent methods are moving in this direction. For instance, S3V2 (Normalization of sequencing data using signal from the same DNA sample) and peakHiC-style approaches for Hi-C consider multiple covariates. The choice depends on your experiment and should be validated using metrics like PCA plots of replicates post-normalization.

Summarized Quantitative Data on Common Biases

Table 1: Impact and Scale of Common Technical Biases in ChIP-seq

| Bias Type | Typical Measured Impact (Variation Introduced) | Common Diagnostic Tool | Primary Correction Goal |
|---|---|---|---|
| Library Size | Can cause >10-fold differences in raw read counts between samples. | Read alignment statistics (e.g., from samtools flagstat). | Equalize total usable signal across samples. |
| GC Bias | Read count in GC-rich/poor bins can vary by 50-200% from expected. | deepTools computeGCBias, FastQC. | Decouple signal intensity from local GC content. |
| Mappability | Signal in low-mappability (<0.5) regions can show >300% higher variability between replicates. | GenMap or GEM mappability track; samtools view of multi-mapping reads. | Reduce noise from ambiguous genomic regions. |

Experimental Protocols

Protocol 1: Diagnosing GC Bias with deepTools

Objective: To quantify and visualize GC-content bias in a ChIP-seq BAM file.

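Materials: Aligned BAM file, reference genome in 2bit format (computeGCBias reads .2bit, not FASTA; convert with UCSC `faToTwoBit` if needed), deepTools suite installed.

Steps:

- Compute and plot the GC bias: `computeGCBias -b sample.bam --effectiveGenomeSize 2913022398 -g hg38.2bit -l 200 --GCbiasFrequenciesFile output_GCbias.txt --biasPlot output_GCbias.png`. Here 2913022398 is the GRCh38 effective genome size commonly used with deepTools; check the deepTools effective genome size table for your genome and read length.

- Optionally, check overall ChIP enrichment (a separate question from GC bias): `plotFingerprint -b sample.bam --plotFile output_fingerprint.png --outRawCounts output_counts.txt`.

- Interpret the `output_GCbias.txt` file: it records observed and expected read frequencies for each GC-content value. In an unbiased sample, the observed/expected ratio is ~1 across all GC percentages.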
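The observed/expected idea behind computeGCBias can be reproduced on binned data with a short Python sketch. It assumes you already have each bin's reference sequence and read count (for example from `bedtools nuc` and `multiBamSummary bins`); it is not how deepTools computes its statistics internally. Expected reads per GC stratum are what a GC-unbiased library would give: reads distributed in proportion to the number of bins in the stratum.

```python
from collections import defaultdict

def gc_fraction(seq):
    """Fraction of G/C among unambiguous bases; None if the bin has no A/C/G/T."""
    acgt = [b for b in seq.upper() if b in "ACGT"]
    return sum(b in "GC" for b in acgt) / len(acgt) if acgt else None

def gc_bias_ratios(bin_seqs, bin_counts, n_strata=10):
    """Observed/expected read ratio per GC stratum (keys are stratum lower bounds)."""
    bins_per, reads_per = defaultdict(int), defaultdict(int)
    total_reads = total_bins = 0
    for seq, count in zip(bin_seqs, bin_counts):
        gc = gc_fraction(seq)
        if gc is None:
            continue  # skip assembly gaps (all-N bins)
        stratum = min(int(gc * n_strata), n_strata - 1)
        bins_per[stratum] += 1
        reads_per[stratum] += count
        total_bins += 1
        total_reads += count
    return {s / n_strata: reads_per[s] / (total_reads * bins_per[s] / total_bins)
            for s in sorted(bins_per)}

bins = ["AAAA", "GGGG", "ATGC", "ATGC"]   # hypothetical (toy-length) bin sequences
reads = [10, 30, 20, 20]                  # hypothetical read counts
print(gc_bias_ratios(bins, reads))        # {0.0: 0.5, 0.5: 1.0, 0.9: 1.5}
```

Here the GC-rich stratum receives 1.5 times its expected share of reads and the AT-rich stratum only half, the kind of pattern that should prompt GC correction.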

Protocol 2: Assessing Mappability Bias

Objective: To correlate read density with genomic region mappability.

Materials: BAM file, genome-wide mappability track (e.g., 50mer uniqueness track from UCSC), bedtools.

Steps:

- Convert mappability track to a BED file of low-mappability regions (e.g., uniqueness score < 1).

- Use `bedtools intersect` to count reads falling within low-mappability vs. high-mappability regions.

- Calculate the ratio of observed read density (reads per kb) in low-mappability regions to that in high-mappability regions. A ratio significantly >1 indicates enrichment of uninterpretable signal in difficult regions.
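The density ratio from the last step is simple arithmetic; this Python sketch spells it out. It assumes non-overlapping BED intervals and read counts already obtained per region set (for instance with `bedtools intersect -c`); the interval coordinates and counts are hypothetical.

```python
def total_bp(intervals):
    """Total length of half-open (start, end) BED intervals, assumed non-overlapping."""
    return sum(end - start for start, end in intervals)

def reads_per_kb(reads, bp):
    return reads / (bp / 1_000)

def mappability_density_ratio(low_reads, low_intervals, high_reads, high_intervals):
    """Read density in low-mappability regions relative to high-mappability regions.

    ~1 means reads are spread evenly; values well above 1 flag excess signal
    piling up where alignments are ambiguous.
    """
    low = reads_per_kb(low_reads, total_bp(low_intervals))
    high = reads_per_kb(high_reads, total_bp(high_intervals))
    return low / high

low_regions = [(1_000, 26_000), (90_000, 115_000)]   # 50 kb total
high_regions = [(200_000, 3_200_000)]                # 3 Mb total
print(round(mappability_density_ratio(200, low_regions, 9_000, high_regions), 3))  # 1.333
```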

Diagrams

ChIP-seq Technical Bias Diagnosis Workflow

Sources of Variation & Their Impact

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for ChIP-seq Bias Assessment

| Item | Function in Bias Analysis |
|---|---|
| High-Quality Input DNA / Control | Serves as the baseline for identifying enrichment. Crucial for methods like SES normalization, which scale the input to the ChIP signal using shared background regions to account for technical biases. |
| Spike-in Chromatin (e.g., S. cerevisiae) | An external normalization control added prior to immunoprecipitation. Corrects for biases arising from differences in ChIP efficiency and library preparation, not just sequencing depth. |
| Commercial Library Prep Kits with GC Bias Mitigation | Modern kits often contain polymerases and buffers optimized to reduce amplification bias across varying GC-content templates. |
| Uniqueness/Mappability Track (BED file) | A pre-computed file defining which genomic regions are uniquely mappable. Essential for masking or weighting regions during analysis to mitigate mappability bias. |
| Genome Blacklist File (e.g., ENCODE) | A curated list of genomic regions with consistently high, unstructured signal across experiments. Filtering these reduces false positives from technical artifacts. |

Technical Support Center: ChIP-Seq Normalization Troubleshooting

Frequently Asked Questions (FAQs)

Q1: After normalization, my ChIP-seq peaks appear weaker or have disappeared. Is this an error? A: This is a common observation, not necessarily an error. Normalization methods like Reads Per Million (RPM) or more advanced techniques (e.g., DESeq2's median-of-ratios, MAnorm) adjust signal based on total library size or reference samples. If your IP sample was sequenced more deeply than its control or than the samples it is compared with, its raw signal is inflated by depth alone, and proper normalization removes that inflation. Verify your normalization method is appropriate for your experimental design (e.g., use spike-in normalization for global histone mark changes).

Q2: When should I use spike-in normalization versus cross-sample normalization methods? A: The choice is critical and depends on your experimental design.

- Use spike-in normalization (e.g., with Drosophila or S. cerevisiae chromatin) when you expect global changes in ChIP efficiency or mark abundance between conditions. This is common in experiments involving drug treatments that alter chromatin accessibility or large-scale transcriptional shifts.

- Use cross-sample normalization methods (e.g., MAnorm, NCIS) when comparing samples where the majority of the genome is expected to have similar binding profiles, such as transcription factor binding between wild-type and a specific knockout cell line.

Q3: My biological replicates show high correlation before normalization but diverge after. What went wrong? A: This can indicate that the chosen normalization method is too aggressive or inappropriate. For example, applying a method that assumes few differential peaks (like MAnorm) to data where a large fraction of the genome is changing (e.g., different cell types) can over-correct and introduce artifacts. Re-examine your assumptions about the system. Consider using a method designed for broader dynamic ranges, such as quantile normalization on a robust subset of peaks, or validate with spike-ins.

Q4: How do I handle normalization for CUT&Tag or CUT&RUN data compared to traditional ChIP-seq? A: CUT&Tag/CUT&RUN data typically has much lower background. While RPM scaling is common, the extremely high signal-to-noise ratio means normalization is highly sensitive to a few strong peaks. Best practices include:

- Using a background region (e.g., immunoglobulin control) for subtraction.

- Implementing a scaling factor based on read counts in reference peak regions common to all samples.

- Considering moderated methods like those in the csaw or DiffBind packages, which handle low-count backgrounds better.

Troubleshooting Guides

Issue: Inconsistent Peak Calling After Normalization Symptoms: Peaks called from normalized bigWig files differ significantly in number and size from those called on raw BAM files. Diagnostic Steps:

- Check Normalization Scaling Factors: Calculate the scaling factors applied (e.g., 1,000,000 / total mapped reads for RPM). Compare the factors across samples. A sample whose factor differs >10x from the others likely has a technical issue (e.g., low sequencing depth).

- Visualize Raw vs. Normalized Signal: Use IGV to view a constitutive peak region (e.g., housekeeping gene promoter for H3K4me3). The relative height between samples should be consistent in normalized tracks, even if absolute values change.

- Verify Peak Caller Input: Ensure your peak caller (MACS2, SEACR) is configured correctly for the input provided. Some callers expect raw fragment counts, while others can handle normalized signals.

Protocol: Verification of Normalization Consistency Using deepTools

- Generate normalized bigWig files using `bamCompare` (for IP vs control) or `bamCoverage` (for depth scaling, e.g., `--normalizeUsing CPM`).

- Summarize the signal of all bigWig files over genome-wide bins with `multiBigwigSummary bins`.

- Compute and plot the correlation matrix from that summary with `plotCorrelation`.

Interpretation: High correlation (>0.9) between replicates post-normalization indicates technical consistency. Lower correlation suggests normalization did not correct for library-based artifacts.
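If you prefer to compute the replicate correlation yourself, for example from a binned count table exported from multiBigwigSummary, this Python sketch does so on log2-transformed values so that a few very strong peaks do not dominate the coefficient. The pseudocount and function names are illustrative assumptions.

```python
import math

def pearson(x, y):
    """Pearson correlation of two equal-length numeric vectors."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("a replicate has constant signal; correlation is undefined")
    return sxy / math.sqrt(sxx * syy)

def replicate_correlation(bins_a, bins_b, pseudocount=1.0):
    """Pearson r on log2(signal + pseudocount) across shared bins."""
    la = [math.log2(v + pseudocount) for v in bins_a]
    lb = [math.log2(v + pseudocount) for v in bins_b]
    return pearson(la, lb)

rep1 = [1, 3, 7, 15, 31]    # hypothetical binned signal, replicate 1
rep2 = [3, 7, 15, 31, 63]   # hypothetical binned signal, replicate 2
print(round(replicate_correlation(rep1, rep2), 6))  # 1.0
```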

Issue: Loss of Differential Binding Signal Post-Normalization Symptoms: Visual and statistical (e.g., from DiffBind) evidence of a differential peak is lost after applying a specific normalization. Diagnostic Steps:

- Benchmark with a Positive Control Region: Identify a genomic region where a change is expected based on your hypothesis (e.g., a known target gene upon drug treatment). Plot the raw read counts and normalized signals (e.g., using `plotProfile` from deepTools) across this locus for all samples.

- Evaluate Normalization Assumptions: If using a method like DESeq2 for differential analysis, it performs internal normalization, so normalizing the counts beforehand applies the correction twice. Provide raw count matrices (from `featureCounts` or `multiBamSummary`) to the differential analysis tool and let it handle normalization.

- Test Alternative Methods: Re-run the analysis with normalization methods that rest on different assumptions.

  - Protocol: Comparative Normalization with `DiffBind`:
    - Create a sample sheet for `DiffBind`.
    - Read in peaks and compute counts: `dba.count(DBA, peaks=NULL, summits=250)`.
    - Apply different normalizations sequentially:

Table 1: Impact of Normalization Method on Peak Call Statistics in a Model Drug Treatment Experiment. Experiment: H3K27ac ChIP-seq in treated vs. control cell lines (n=3 replicates). Peak calling with MACS2 (q<0.05).

| Normalization Method | Total Peaks (Control) | Total Peaks (Treated) | Differential Peaks (Up) | Differential Peaks (Down) | Inter-Replicate Correlation (Mean Pearson's r) |
|---|---|---|---|---|---|
| None (Raw Read Count) | 42,150 | 38,900 | 1,550 | 1,200 | 0.87 |
| Reads Per Million (RPM) | 41,800 | 40,100 | 850 | 790 | 0.94 |
| DESeq2 (Median-of-Ratios) | 40,990 | 39,870 | 1,220 | 1,050 | 0.96 |
| Spike-in (S. cerevisiae) | 41,200 | 39,500 | 2,150 | 1,800 | 0.98 |

Table 2: Recommended Normalization Methods by Experimental Context

| Experimental Scenario | Primary Challenge | Recommended Method | Key Rationale |
|---|---|---|---|
| Transcription Factor, similar cell types | Library size variation | Cross-sample (MAnorm, NCIS) | Assumes conserved background regions for scaling. |
| Histone marks, drastic treatments (e.g., kinase inhibitor) | Global mark abundance changes | Spike-in chromatin | Controls for variable ChIP efficiency. |
| Low-input / Low-background (CUT&Tag) | Sensitivity to outliers | Background subtraction + moderate scaling (csaw) | Reduces noise without over-fitting. |
| Time-course or multi-condition | Complex batch effects | Conditional Quantile Normalization | Aligns signal distributions across all samples. |

Experimental Protocols

Protocol: Spike-in Normalization for ChIP-seq Objective: To control for technical variation in ChIP efficiency and sequencing depth by adding a constant amount of exogenous chromatin from a different species (e.g., Drosophila melanogaster). Materials: See "Scientist's Toolkit" below. Method:

- Spike-in Addition: Prior to sonication or chromatin digestion, add 1-10% (by chromatin mass) of prepared D. melanogaster chromatin (e.g., S2 cell chromatin) to your human or mouse chromatin sample.

- Proceed with ChIP: Follow your standard ChIP protocol using an antibody that also recognizes the epitope in the spike-in chromatin (most histone mark antibodies, many TFs).

- Sequencing & Alignment: Sequence the library. Align reads separately to the experimental genome (e.g., hg38) and the spike-in genome (e.g., dm6) using your standard aligner (Bowtie2, BWA).

- Calculate Scaling Factor:

a. Count reads aligning uniquely to the spike-in genome for each sample ($R_{spike}$).
b. Compute a scaling factor for each sample: $SF = 1{,}000{,}000 / R_{spike}$ (reads per million spike-in reads). To express factors relative to one sample instead, use $SF_i = \max_j(R_{spike,j}) / R_{spike,i}$, which gives the sample with the most spike-in reads a factor of 1 and all others a factor above 1.

- Apply Scaling: Generate normalized bigWig files by scaling the experimental genome read counts by the sample's $SF$. This can be done using `bamCoverage` in deepTools with the `--scaleFactor` argument.
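As a sketch of the last two steps, this Python snippet computes the reads-per-million-spike-in factor and assembles a matching `bamCoverage` call. Passing `--normalizeUsing None` keeps bamCoverage from adding its own depth scaling on top of `--scaleFactor`; the file names, spike-in counts, and the 10 bp bin size are hypothetical.

```python
import shlex

def rrpm_factor(spike_reads):
    """Scaling factor = 1,000,000 / reads aligned to the spike-in genome."""
    return 1_000_000 / spike_reads

def bamcoverage_command(bam, bigwig, spike_reads, bin_size=10):
    """Assemble a deepTools bamCoverage call that multiplies coverage by the spike-in factor."""
    args = [
        "bamCoverage", "-b", bam, "-o", bigwig,
        "--scaleFactor", f"{rrpm_factor(spike_reads):.6f}",
        "--normalizeUsing", "None",   # no extra depth scaling on top of --scaleFactor
        "--binSize", str(bin_size),
    ]
    return shlex.join(args)

# Hypothetical spike-in counts (R_spike) per sample.
spike_counts = {"control_rep1": 400_000, "treated_rep1": 250_000}
for name, r_spike in spike_counts.items():
    print(bamcoverage_command(f"{name}.hg38.bam", f"{name}.rrpm.bw", r_spike))
```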

Protocol: Cross-Sample Normalization Using MAnorm2 Objective: To normalize peak signal across samples based on a set of common peak regions, assuming these regions represent stable binding background. Method:

- Generate a Consensus Peak Set: Call peaks on each sample individually. Take the union of all peaks across all samples to create a master set.

- Count Reads: Count reads from each BAM file falling within each peak in the master set (e.g., using `featureCounts` or `bedtools multicov`). This produces a count matrix.

- Apply MAnorm2 (R Package):

- Downstream Analysis: Use the normalized densities for differential analysis or visualization. MAnorm2 internally fits a linear model to common peaks to estimate scaling parameters.
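To illustrate estimating normalization parameters from common peaks, here is a Python sketch in the style of the original MAnorm: it fits a linear M-versus-A trend on common peaks only and subtracts it from every peak. This is not the MAnorm2 API or algorithm, and it uses ordinary least squares where a robust fit would be preferable; the counts are hypothetical.

```python
import math

def ma_values(x, y, pseudocount=1.0):
    """M (log2 ratio) and A (mean log2 signal) for paired read counts."""
    m = [math.log2((a + pseudocount) / (b + pseudocount)) for a, b in zip(x, y)]
    avg = [0.5 * math.log2((a + pseudocount) * (b + pseudocount)) for a, b in zip(x, y)]
    return m, avg

def fit_line(xs, ys):
    """Ordinary least squares fit y = intercept + slope * x."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    slope = sum((a - mx) * (b - my) for a, b in zip(xs, ys)) / sum((a - mx) ** 2 for a in xs)
    return my - slope * mx, slope

def manorm_like(x, y, common_idx):
    """Remove the M-vs-A trend estimated on common peaks from every peak's M value."""
    m, a = ma_values(x, y)
    intercept, slope = fit_line([a[i] for i in common_idx], [m[i] for i in common_idx])
    return [mi - (intercept + slope * ai) for mi, ai in zip(m, a)]

x = [19, 39, 79, 159]   # hypothetical counts, sample X
y = [9, 19, 39, 19]     # hypothetical counts, sample Y
common = [0, 1, 2]      # indices of peaks shared by both samples
print([round(v, 3) for v in manorm_like(x, y, common)])  # [0.0, 0.0, 0.0, 2.0]
```

The common peaks carry a uniform 2-fold offset (M = 1) that is removed, while the sample-specific peak keeps its residual 4-fold (M = 2) difference.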

Visualizations

Normalization in ChIP-Seq Workflow

Choosing a Normalization Method

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Normalization Context |
|---|---|
| D. melanogaster S2 Cell Chromatin | The most common source of spike-in chromatin for human/mouse experiments. Provides an exogenous, constant signal for controlling ChIP efficiency. |
| Anti-Histone Antibody (e.g., H3) | Used for a "global" internal control in some normalization strategies. Requires the mark to be invariant, which is often not valid in perturbative studies. |
| Commercial Spike-in Kits (e.g., Clean-Cut) | Pre-quantified, fragmented chromatin from a divergent species for simplified spike-in workflows. |
| Size-selection Beads (SPRI) | Critical for generating libraries of consistent insert size, which affects mappability and signal uniformity across samples. |
| Unique Dual Indexed Adapters | Enable multiplexing of many samples in one sequencing lane, reducing batch effects that complicate normalization. |
| Qubit Fluorometer / Bioanalyzer | Accurate quantification of DNA before sequencing ensures balanced library loading, improving the baseline for any downstream normalization. |

The Goal of Normalization is Fair Sample Comparison, Not Just Scaling

Troubleshooting Guides & FAQs

Q1: My ChIP-seq samples have vastly different total read counts. After simply scaling by total reads (e.g., CPM/RPM), my treatment sample still shows a massive global increase in signal. What went wrong? A: This is a classic sign that your experiment may be affected by a global difference, such as a difference in ChIP efficiency, DNA input, or antibody affinity. Simple scaling (e.g., RPM) assumes only sequencing depth differs. If the global difference is technical, normalization should remove it so that specific peaks can be compared fairly; if it may be biological (e.g., a real change in mark abundance), only an external reference such as a spike-in can distinguish the two. You need a method that estimates a scaling factor from a reference presumed to be invariant, such as background bins (e.g., SES), spike-in controls, or nonlinear methods like MA normalization.
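For the background-bin option, the following Python sketch implements the core of signal extraction scaling (SES) for a ChIP/control pair: bins are ordered by ChIP count, the point where the cumulative control fraction most exceeds the cumulative ChIP fraction marks the end of the background, and the factor makes the two libraries' read sums in those bins match. deepTools exposes SES through `bamCompare --scaleFactorsMethod SES` with its own sampling details; the bin counts here are hypothetical.

```python
def ses_scale_factor(chip_bins, control_bins):
    """Signal extraction scaling (SES) sketch for one ChIP/control pair.

    Returns the factor by which control counts should be multiplied so that
    control and ChIP contain the same number of reads in the background bins.
    """
    order = sorted(range(len(chip_bins)), key=lambda i: chip_bins[i])  # weakest ChIP bins first
    tot_chip, tot_ctrl = sum(chip_bins), sum(control_bins)
    cum_chip = cum_ctrl = 0
    best_gap, best_chip, best_ctrl = float("-inf"), 0, 0
    for i in order:
        cum_chip += chip_bins[i]
        cum_ctrl += control_bins[i]
        gap = cum_ctrl / tot_ctrl - cum_chip / tot_chip
        if gap > best_gap:
            best_gap, best_chip, best_ctrl = gap, cum_chip, cum_ctrl
    if best_chip == 0 or best_ctrl == 0:
        raise ValueError("background bins contain no reads; use larger bins")
    return best_chip / best_ctrl

chip = [10, 10, 10, 10, 200, 300]   # hypothetical ChIP counts per bin
control = [20] * 6                  # hypothetical control counts per bin
factor = ses_scale_factor(chip, control)
print(factor)  # 0.5: the scaled control matches the ChIP background of 10 reads per bin
```

Scaling by total reads instead would multiply the control by 540/120 = 4.5, inflating the background and hiding real enrichment.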

Q2: I used spike-in chromatin from Drosophila for my human cell line experiment, but the normalized results look strange. What are common pitfalls? A: Common issues include:

- Inaccurate Quantification: Imperfect initial quantification and mixing of spike-in chromatin and experimental chromatin leads to erroneous scaling factors. Always use a fluorometric assay for precise concentration measurement.

- Chromatin Integrity Mismatch: The sonication or fragmentation efficiency must be identical between spike-in and experimental samples. Differently sized chromatin fragments immunoprecipitate with different efficiencies.

- Cell Count vs. Chromatin Amount: You must spike a constant amount of spike-in chromatin, not a constant ratio. Add the same absolute amount (e.g., 1 ng) to each ChIP reaction, which originated from a constant number of experimental cells. This corrects for differences in cell number and ChIP efficiency.

Q3: When using background-region methods (e.g., SES, MAnorm), how do I choose the right set of bins or regions for normalization? A: The selection is critical. Regions should be:

- Devoid of True Peaks: Use a stringent, consensus peak call across all samples, and exclude these regions. Often, non-peak regions of the genome or regions defined from an input/IgG control sample are used.

- Genomically Extensive: Contain enough bins (e.g., 10,000+) to provide a stable estimate of background signal.

- Excluding Artifacts: Filter out known high-background regions (e.g., ENCODE blacklists) and gaps in assembly.

If chosen poorly, your "background" may still contain differential peaks, skewing the scaling factor.
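A minimal Python sketch of the selection rules above: it drops bins that overlap consensus peaks or blacklisted regions, refuses to proceed with too few bins, and derives per-sample factors from median background counts relative to the first sample. The interval representation, the linear overlap scan, and the median-based factor are illustrative assumptions, not a specific package's method.

```python
from statistics import median

def overlaps(start, end, intervals):
    """True if half-open [start, end) overlaps any (start, end) interval."""
    return any(s < end and start < e for s, e in intervals)

def background_bins(bins, excluded):
    """Indices of bins overlapping neither peaks nor blacklist entries.

    bins: list of (chrom, start, end); excluded: dict chrom -> list of (start, end).
    """
    return [i for i, (c, s, e) in enumerate(bins) if not overlaps(s, e, excluded.get(c, []))]

def background_scale_factors(counts_by_sample, keep, min_bins=10_000):
    """Per-sample factor = median background count / median background count of sample 0."""
    if len(keep) < min_bins:
        raise ValueError(f"only {len(keep)} background bins; estimate may be unstable")
    medians = [median(counts[i] for i in keep) for counts in counts_by_sample]
    return [m / medians[0] for m in medians]

bins = [("chr1", 0, 100), ("chr1", 100, 200), ("chr1", 200, 300), ("chr2", 0, 100)]
excluded = {"chr1": [(150, 160)]}          # e.g. a consensus peak or blacklist entry
keep = background_bins(bins, excluded)     # bin 1 is dropped
counts = [[10, 500, 12, 14], [20, 900, 24, 28]]
print(background_scale_factors(counts, keep, min_bins=3))  # [1.0, 2.0]
```

Dividing each sample's counts by its factor equalizes the background; the 10,000-bin default mirrors the stability guideline above and is lowered here only for the toy example.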

Q4: My replicates are highly consistent with each other, but normalized signal values between different conditions are not comparable. Which method should I consider? A: This indicates a need for between-condition normalization. Consider these approaches based on your experimental design:

- For global changes expected (e.g., transcription factor activation): Use a spike-in normalization protocol.

- For focused changes (most histone marks): Use a background bin method (e.g., in DiffBind: `normalize = DBA_NORM_NATIVE` with `background = TRUE`).

- For complex, non-linear distortions: Explore quantile-based methods or MA normalization (e.g., MAnorm2), which do not assume a constant scaling factor across the dynamic range of signal.

Experimental Protocol: Spike-In ChIP-seq Normalization

Objective: To generate comparable ChIP-seq profiles between samples where global signal changes are anticipated, by normalizing to an exogenous chromatin standard.

Materials: See "Research Reagent Solutions" table.

Protocol:

- Spike-in Chromatin Preparation: Fix and sonicate D. melanogaster S2 cells to achieve a fragment size distribution matching your experimental samples (100-500 bp). Quantify chromatin concentration accurately using a fluorometric assay (e.g., Qubit).

- Experimental Sample Preparation: Harvest a fixed number of human cells (e.g., 1 million) per condition/replicate. Perform cross-linking and sonication as per standard protocol.

- Spike-in Addition: To each constant-volume ChIP reaction, add a precise, constant mass (e.g., 1 ng) of Drosophila spike-in chromatin. Critical: The amount of experimental chromatin will vary, but the amount of spike-in chromatin must be identical across all reactions.

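- Immunoprecipitation: Perform simultaneous IP with your target antibody. The spike-in chromatin must itself be immunoprecipitated: either the target antibody also recognizes the conserved epitope in Drosophila chromatin, or a separate Drosophila-specific spike-in antibody (e.g., against the histone variant H2Av) is added to each reaction. Otherwise, spike-in reads reflect only nonspecific carry-over and cannot report IP efficiency.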

- Library Preparation & Sequencing: Process samples together. Sequence to a sufficient depth, ensuring reads can be uniquely mapped to the respective human (hg38) and Drosophila (dm6) genomes.

- Data Analysis:

- Map reads to a combined reference genome (hg38+dm6).

- Separate alignment files for the experimental and spike-in genomes.

- Call peaks on the experimental genome alignments per sample.

- Calculate Scaling Factor: For each sample, compute the total mapped reads in the spike-in genome ($R_{spike}$). The scaling factor for sample $i$ is $SF_i = \min_j(R_{spike,j}) / R_{spike,i}$, where $\min_j(R_{spike,j})$ is the smallest spike-in count across all samples.

- Apply Normalization: Scale the experimental sample's read coverage or peak scores by $SF_i$. This corrects the experimental signal to what would be observed if ChIP efficiency were constant.

Data Presentation

Table 1: Comparison of ChIP-seq Normalization Methods

| Method | Principle | Best For | Limitations | Key Metric for Scaling Factor |
|---|---|---|---|---|
| Total Read Scaling (RPM/CPM) | Equalizes total mapped read count. | Comparing samples where only sequencing depth differs. | Fails with global biological/technical biases. | Total reads in experimental genome. |
| Background Bin (e.g., SES) | Uses signal in non-peak genomic regions. | Histone marks with focused changes; no spike-in available. | Sensitive to bin selection; fails if background is not invariant. | Median read count in selected background bins. |
| Spike-In (External Control) | Normalizes to signal from an added exogenous chromatin. | Experiments with global signal changes (e.g., TF activation, drug treatment). | Requires careful quantification; the spike-in chromatin must be immunoprecipitated, either by a cross-reactive target antibody or by a separate spike-in antibody. | Total reads mapped to spike-in genome. |
| Peak-Based (e.g., DESeq2) | Uses counts in consensus peak regions. | Differential binding analysis with multiple replicates. | Requires replicate sets; assumes most peaks are not differential. | Median-of-ratios from peak read counts. |
| Non-Linear (e.g., MAnorm2) | Models the relationship between signal intensities of two samples. | Correcting non-linear distortions in signal. | Typically used for pair-wise condition comparison. | Fitted linear relationship after MA transformation. |

Diagrams

Title: ChIP-seq Normalization Method Decision Workflow

Title: Spike-in ChIP-seq Normalization Protocol

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Normalization | Example & Notes |
|---|---|---|
| D. melanogaster S2 Cells | Source of exogenous chromatin for spike-in. | Often used with human/mouse samples. Cultured in Schneider's medium. |
| Spike-in Antibody | Immunoprecipitates the spike-in chromatin when the target antibody does not recognize the spike-in species. | E.g., an antibody against the Drosophila-specific histone variant H2Av; validate that it does not cross-react with the experimental species. |
| Fluorometric DNA Quant Kit | Precisely measures concentration of sheared spike-in chromatin. | Qubit dsDNA HS Assay. Critical for adding identical mass. |
| Crosslinking Reagent | Fixes protein-DNA interactions in both experimental and spike-in cells. | Formaldehyde (1%). Ensure fixation conditions are consistent. |
| Chromatin Shearing Instrument | Fragments chromatin to optimal size for IP. | Covaris sonicator or Bioruptor. Match fragment size distributions. |
| Size Selection Beads | Cleans up libraries and removes primer dimers post-PCR. | SPRI/AMPure beads. Ensures library quality before sequencing. |
| Dual-Indexed Sequencing Adapters | Allows multiplexing of many samples in one sequencing run. | Illumina TruSeq adapters. Reduces batch effects. |
| Blacklist Region File | Defines genomic regions with high artifactual signal to exclude. | ENCODE consensus blacklists for hg38, mm10, etc. Used in background bin selection. |

FAQs & Troubleshooting Guide

Q1: My ChIP-seq shows low read depth across all samples. What could be the cause and how do I fix it? A: Low usable read depth across all samples often stems from insufficient starting material or poor library preparation. Both yield low-complexity libraries in which many reads are duplicates. Use >10 ng of immunoprecipitated DNA for library prep where possible. Check DNA fragment size post-sonication (200-700 bp is ideal). Re-assess QC steps with a Bioanalyzer/Qubit. If you must increase the PCR cycle number during library amplification (e.g., from 12 to 15 cycles), do so cautiously: extra cycles raise the duplicate rate, so monitor for over-amplification.

Q2: How can I accurately calculate IP efficiency, and what value indicates a successful experiment? A: IP efficiency is typically calculated as the percentage of input DNA recovered after immunoprecipitation. Use qPCR on known positive and negative control genomic regions before sequencing. Protocol: After reverse-crosslinking and DNA purification, run qPCR for a 1% Input sample and your IP DNA sample. Calculate: %IP = 2^(Ct(Input) - Ct(IP) - log2(Input Dilution Factor)) * 100%. An efficiency of 0.5-5% is generally acceptable, but this is target and antibody dependent.

Q3: My background signal (noise) is too high. How can I reduce it? A: High background usually indicates antibody nonspecificity or insufficient washing. Troubleshooting Steps:

- Increase wash stringency (e.g., increase salt concentration in wash buffers incrementally).

- Include a pre-clearing step with beads alone.

- Titrate your antibody; too much antibody increases background.

- Verify antibody specificity with a knockout control if available.

- Use a blocking agent like BSA or salmon sperm DNA in your buffers.

Q4: What are the best methods to calculate enrichment, and how do I choose a normalization method for valid comparison? A: Enrichment is the signal over background. Common normalization methods in a research thesis context include:

- Input Subtraction: Subtracts a depth-scaled control (IgG or Input) track from the ChIP track.

- Read Depth Scaling (CPM/RPM): Normalizes by total mapped reads. Poor for differential analysis if IP efficiencies vary.

- Peak-Based (e.g., MACS2): Uses a Poisson model to call enriched regions against a background model estimated from the control.

The choice depends on your thesis hypothesis. For comparing samples with global changes, avoid normalizations that assume most peaks are unchanged. Options include THOR, or DESeq2 (adapted for ChIP-seq) with size factors supplied from an external reference such as spike-in chromatin instead of its default median-of-ratios estimate.

Key Quantitative Data in ChIP-seq Analysis

Table 1: Impact of Read Depth on Peak Calling

| Total Reads (Million) | Detected Peaks (Typical Transcription Factor) | Saturation Level | Recommendation |
|---|---|---|---|
| 10-15 | ~70-80% of total | Low | Insufficient |
| 20-25 | ~90-95% of total | Medium | Minimum |
| 40-50 | ~98-99% of total | High | Optimal |

Table 2: Troubleshooting Guide: Symptoms, Causes, and Solutions

| Symptom | Likely Cause | Recommended Solution |
|---|---|---|
| Low/No Peaks | Poor antibody, low IP efficiency, over-sonication | Validate antibody, check IP % by qPCR, optimize sonication |
| Peaks in IgG Control | High background, bead contamination | Increase wash stringency, use fresh beads, pre-clear |
| Too Many Broad Peaks | Antibody recognizes multiple isoforms/proteins | Use monoclonal antibody, check cell line specificity |
| Inconsistent Replicates | Biological variability, technical handling | Increase N, use cross-linked aliquots, standardize protocol |

Experimental Protocols

Protocol 1: Calculating IP Efficiency via qPCR (Pre-Sequencing QC)

- Dilute Input: Take your purified, reverse-crosslinked Input DNA (saved as 1% of the chromatin, i.e., a 1:100 fraction) and prepare a 10-fold dilution series so that reactions represent 1% and 0.1% of the total input material.

- Prepare IP DNA: Use your purified IP DNA without dilution or at a minimal dilution (e.g., 1:10).

- qPCR Setup: Perform SYBR Green qPCR on known positive control (e.g., promoter of a housekeeping gene) and negative control (e.g., gene desert) genomic regions for all dilutions.

- Calculation: Use the formula:

% Recovery = 2^[Ct(1% Input) - Ct(IP) - Log2(100)] * 100%. A successful IP typically shows >0.5% recovery at the positive locus and minimal signal at the negative locus.
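The percent-recovery formula can be checked with a few lines of code. The sketch below is a minimal version. It assumes about 100% primer efficiency (one doubling per cycle), and the function name and Ct values are hypothetical. The Input Ct is first adjusted by log2 of the dilution factor so that it represents 100% of the chromatin.

```python
import math


def percent_input(ct_input: float, ct_ip: float, input_fraction: float = 0.01) -> float:
    """Percent of the starting chromatin recovered by the IP (percent-input method).

    input_fraction: fraction of chromatin saved as Input (0.01 for a 1% Input),
    so the dilution factor is 1 / input_fraction. Assumes ~100% primer
    efficiency, i.e. one doubling per qPCR cycle.
    """
    dilution_factor = 1.0 / input_fraction
    # Adjust the Input Ct so it represents 100% of the starting chromatin.
    adjusted_input_ct = ct_input - math.log2(dilution_factor)
    return 100.0 * 2 ** (adjusted_input_ct - ct_ip)


# Hypothetical Ct values (same 1% Input measured at both loci).
positive_locus = percent_input(ct_input=25.0, ct_ip=25.0)
negative_locus = percent_input(ct_input=25.0, ct_ip=31.0)
print(f"positive: {positive_locus:.3f}% input, negative: {negative_locus:.4f}% input")
```

When the IP and the 1% Input cross the threshold at the same cycle, recovery is 1%. Every additional IP cycle halves it.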

Protocol 2: Background Subtraction & Normalization Workflow (Bioinformatic)

- Mapping: Align sequenced reads to reference genome using Bowtie2 or BWA.

- Filtering: Remove duplicates and low-quality reads.

- Peak Calling: Call peaks using MACS2 with your IP sample and the appropriate control (Input or IgG):

macs2 callpeak -t IP.bam -c Control.bam -f BAM -g hs -n output --call-summits

- Normalization: For visualizing control-corrected signal, use deepTools to compute read-depth-normalized bigWig files (bamCoverage --normalizeUsing CPM) and then subtract the control track (bigwigCompare --operation subtract). For formal differential analysis, use count-based tools such as DiffBind.

Visualization of ChIP-seq Analysis Concepts

Title: ChIP-seq Experimental & Analysis Workflow

Title: Core Terminology Relationships in ChIP-seq

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Robust ChIP-seq Experiments

| Item | Function & Importance |
|---|---|
| Specific, Validated Antibody | The most critical reagent. Must be ChIP-grade, validated for the target and species. Use knockout controls if possible. |
| Magnetic Protein A/G Beads | For antibody-antigen complex capture. Offer low background and easy handling over agarose beads. |
| UltraPure BSA & Salmon Sperm DNA | Used as blocking agents in IP/wash buffers to reduce nonspecific binding and lower background. |
| Cell Lysis & Sonication Buffers | Must contain protease inhibitors. Sonication efficiency determines fragment size and data resolution. |
| Proteinase K & RNase A | Essential for reversing crosslinks and digesting proteins/RNA to purify DNA post-IP. |
| SPRI Beads (e.g., AMPure) | For consistent post-IP DNA cleanup and library size selection. More reliable than phenol-chloroform. |
| High-Sensitivity DNA Assay Kits (Qubit/Bioanalyzer) | Accurate quantification and sizing of low-yield IP DNA and final libraries are mandatory for QC. |
| Control qPCR Primers (Positive/Negative Loci) | For pre-sequencing IP efficiency calculation and experiment validation. |

A Practical Guide to Major ChIP-seq Normalization Techniques

Technical Support Center

Troubleshooting Guides & FAQs

FAQ 1: Why are my RPM values high in low-input ChIP-seq samples, but the peaks look weak visually?

- Issue: RPM/CPM normalizes only for sequencing depth, not for immunoprecipitation efficiency or total DNA input. In a low-input, low-efficiency library with few mapped reads, each read carries a large weight. RPM values can therefore look high even though they rest on very few reads and are dominated by noise.

- Solution: Use a background or input control. Apply a normalization method such as SES, which scales to the input in background regions, or a count-based framework such as DESeq2, which models variability across replicates. Always visually inspect aligned read pileups in a genome browser alongside quantitative metrics.

FAQ 2: When comparing two conditions, ChIP-seq sample A has 20 million reads and B has 40 million. After RPM normalization, a region shows 10 RPM in both. Can I conclude there is no difference?

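- Issue: Not necessarily. RPM assumes that every library has the same composition, meaning the same fraction of reads in enriched versus background regions. Sequencing depth alone does not change that fraction. However, if Sample B has a larger share of background reads (for example, because of lower IP efficiency), its enriched regions are diluted under RPM, and an equal RPM value can hide a real difference. A single value per condition also gives no estimate of variability.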

- Solution: Perform differential binding analysis using tools like DiffBind (which uses DESeq2 or edgeR) that model counts with statistical distributions and account for library size and background variation.
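The hypothetical illustration below shows why equal RPM does not settle the comparison. Sample B is sequenced twice as deeply but has half the fraction of reads in peaks (FRiP). The locus scores 10 RPM in both samples, yet in B it accounts for twice the share of enriched reads. Neither number is automatically "correct": the second one assumes that total enriched signal is comparable between libraries. The replicate-based statistical tools above exist to resolve exactly this kind of ambiguity. All names and counts are illustrative.

```python
def rpm(locus_reads: int, total_reads: int) -> float:
    """Reads per million mapped reads."""
    return locus_reads * 1_000_000 / total_reads


def share_of_reads_in_peaks(locus_reads: int, reads_in_peaks: int) -> float:
    """Locus reads as a fraction of all reads that fall in called peaks."""
    return locus_reads / reads_in_peaks


# Hypothetical libraries: B is sequenced twice as deeply, but only 5% of its
# reads fall in peaks (FRiP) versus 10% for A, e.g. because of weaker enrichment.
samples = {
    "A": {"total": 20_000_000, "in_peaks": 2_000_000, "locus": 200},
    "B": {"total": 40_000_000, "in_peaks": 2_000_000, "locus": 400},
}
for name, s in samples.items():
    print(name,
          "RPM:", rpm(s["locus"], s["total"]),
          "share of peak reads:", share_of_reads_in_peaks(s["locus"], s["in_peaks"]))
```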

FAQ 3: My spike-in controlled normalization contradicts my RPM-based conclusions. Which should I trust?

- Issue: RPM is an internal normalization that cannot detect changes in global histone occupancy or transcription factor burden. If your experimental manipulation alters the total chromatin occupancy of the target, RPM will produce misleading results. Spike-in chromatin (e.g., from Drosophila) provides an external reference for such global changes.

- Solution: Provided the spike-in was added at a constant ratio and recovered consistently across samples, favor the spike-in normalized results. The discrepancy suggests a change in total target occupancy, which violates a core assumption of RPM. For such conditions (e.g., differential histone modification studies with global changes), spike-in or similar external scaling methods are strongly recommended.

FAQ 4: After RPM normalization, why do I still see a strong correlation between my peak count and my total read count across samples?

- Issue: RPM rescales coverage after alignment but does not change how many peaks a caller detects. Deeper libraries give the peak caller more statistical power, so peak counts track library size. This is common in experiments with widely differing sequencing depths, where depth differences rather than biology drive apparent differences in peak number.

- Solution: Compare samples at matched depth (e.g., by down-sampling to a common read count), or use a normalization method that explicitly models and removes this dependency, as implemented in tools like csaw or MAnorm2.

Table 1: Comparison of Common ChIP-seq Normalization Methods

| Method | Core Principle | Accounts for Sequencing Depth | Accounts for Background/Input | Accounts for Global Shifts (e.g., Total Occupancy) | Recommended Use Case |
|---|---|---|---|---|---|
| RPM/CPM | Simple scaling by total mapped reads | Yes | No | No | Initial visualization; stable, high-input TF ChIP-seq. |
| RPKM/FPKM | RPM scaled by feature length (e.g., genes) | Yes | No | No | Not recommended for ChIP-seq. Misapplied from RNA-seq. |
| SES (Signal Extraction Scaling) | Scales ChIP to Input using the bins with the lowest (background) ChIP signal | Partially | Yes | No | Samples with high background, using an input control. |
| Spike-in (e.g., S. cer) | Scales to externally added chromatin | Yes | Implicitly | Yes | Histone mods or conditions with expected global occupancy changes. |
| DESeq2/edgeR | Statistical modeling based on negative binomial distribution | Yes | Optional (e.g., factors computed on background regions) | Partially | Differential binding analysis between conditions. |
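The idea behind SES can be sketched in a few lines. Bins are ordered by ChIP signal, and the background is taken to end where the cumulative Input fraction leads the cumulative ChIP fraction by the widest margin. The scale factor then matches Input to ChIP over those background bins. This is a simplified illustration, not deepTools' implementation (deepTools samples bins and handles edge cases differently). The function name and counts are hypothetical.

```python
from itertools import accumulate


def ses_scale_factor(chip_bins, input_bins):
    """Simplified Signal Extraction Scaling (SES) sketch.

    chip_bins, input_bins: read counts over the same genomic bins.
    Returns the factor by which Input counts are multiplied so that Input
    matches ChIP in the background (least-enriched) bins.
    """
    if len(chip_bins) != len(input_bins):
        raise ValueError("ChIP and Input must be counted over the same bins")
    # Order bins from lowest to highest ChIP signal.
    order = sorted(range(len(chip_bins)), key=lambda i: chip_bins[i])
    chip_cum = list(accumulate(chip_bins[i] for i in order))
    input_cum = list(accumulate(input_bins[i] for i in order))
    chip_total, input_total = chip_cum[-1], input_cum[-1]
    # Background ends where the cumulative Input fraction leads the
    # cumulative ChIP fraction by the largest margin.
    k = max(range(len(order)),
            key=lambda j: input_cum[j] / input_total - chip_cum[j] / chip_total)
    return chip_cum[k] / input_cum[k]


chip = [5, 5, 5, 5, 100, 120]   # hypothetical ChIP counts per bin
inp = [10, 10, 10, 10, 10, 10]  # hypothetical Input counts per bin
print(ses_scale_factor(chip, inp))
```

Here the four low-signal bins are identified as background, and Input is scaled down to match ChIP in them. Scaling by total reads would instead be distorted by the two enriched bins.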

Table 2: Example Data Illustrating the RPM Limitation in a Global Occupancy Change Scenario. Drug treatment causes a global loss of H3K4me3; two replicates per condition, with spike-in chromatin added.

| Sample | Condition | Total Reads (M) | Spike-in Reads (K) | RPM for Locus X | Spike-in Norm. Signal for Locus X (relative to Sample 1) |
|---|---|---|---|---|---|
| 1 | Control | 30.0 | 15.0 | 20.0 | 1.00 |
| 2 | Control | 32.5 | 16.2 | 18.5 | 0.93 |
| 3 | Treated | 28.0 | 28.0 | 19.6 | 0.49 |
| 4 | Treated | 29.5 | 29.8 | 21.2 | 0.52 |

Conclusion: RPM suggests no change at Locus X. Spike-in normalization reveals the true ~50% loss, consistent with global decrease.
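The table does not state how its last column is computed. The sketch below assumes that it is the raw read count at Locus X per spike-in read, expressed relative to Sample 1, and that RPM was computed from the "Total Reads" column. Under those assumptions the arithmetic can be checked directly.

```python
# Values from Table 2: sample ID, condition, total reads (millions),
# spike-in reads (thousands), RPM at Locus X.
samples = [
    ("1", "Control", 30.0, 15.0, 20.0),
    ("2", "Control", 32.5, 16.2, 18.5),
    ("3", "Treated", 28.0, 28.0, 19.6),
    ("4", "Treated", 29.5, 29.8, 21.2),
]


def locus_reads_per_spike_read(total_m: float, spike_k: float, rpm: float) -> float:
    """Raw reads at the locus divided by spike-in reads in the same library."""
    locus_reads = rpm * total_m            # RPM x millions of mapped reads = raw locus reads
    return locus_reads / (spike_k * 1_000)


reference = locus_reads_per_spike_read(*samples[0][2:])
relative = []
for sample_id, condition, total_m, spike_k, rpm in samples:
    rel = locus_reads_per_spike_read(total_m, spike_k, rpm) / reference
    relative.append(rel)
    print(f"Sample {sample_id} ({condition}): RPM {rpm:.1f}, spike-in normalized {rel:.2f}")
```

The treated samples carry almost twice as many spike-in reads for similar locus counts. That excess of spike-in reads is the signature of a global loss that RPM cannot see.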

Experimental Protocols

Protocol: Performing RPM Normalization for ChIP-seq Data

- Alignment & Filtering: Map reads (e.g., using BWA/Bowtie2) to the reference genome. Remove duplicates and low-quality reads using tools like SAMtools or Picard.

- Calculate Mapped Read Count: Run samtools view -c -F 260 sample.bam to get the total number of mapped, primary reads. Flag 260 excludes unmapped reads (4) and secondary alignments (256).

- Compute Scaling Factor: Scaling Factor = 1,000,000 / Total Mapped Reads.

- Generate Coverage Track: Use bedtools genomecov or bamCoverage from deepTools to create a BedGraph or BigWig file. Apply the scaling factor: bamCoverage --scaleFactor [calculated] -b sample.bam -o sample_rpm.bw.
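Steps 2 and 3 amount to one division and one multiplication per bin. The minimal sketch below uses hypothetical function names and a made-up read count. It applies the factor the same way a --scaleFactor option multiplies coverage, but it does not reproduce any tool's binning or read extension.

```python
def rpm_scale_factor(mapped_primary_reads: int) -> float:
    """Factor that converts raw counts to reads per million mapped reads."""
    if mapped_primary_reads <= 0:
        raise ValueError("mapped read count must be positive")
    return 1_000_000 / mapped_primary_reads


def scale_bins(bin_counts: list[int], factor: float) -> list[float]:
    """Multiply per-bin read counts by the scale factor."""
    return [count * factor for count in bin_counts]


# Hypothetical output of `samtools view -c -F 260 sample.bam`.
mapped = 25_000_000
factor = rpm_scale_factor(mapped)
print(factor, scale_bins([0, 25, 50, 100], factor))
```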

Protocol: Spike-in Normalized ChIP-seq (using D. melanogaster chromatin)

- Spike-in Addition: Add a fixed amount (e.g., 1-10%) of D. melanogaster chromatin (e.g., Active Motif, #61686) to each human ChIP reaction before immunoprecipitation.

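- Sequencing & Alignment: Pool and sequence. Map all reads to a combined human (hg38) + Drosophila (dm6) reference genome. Both assemblies contain sequences named chr4, chrX, chrY, and chrM, so give the Drosophila sequences a distinct prefix (e.g., dm6_chrX) before building the index. Discard low-MAPQ reads, which include reads that align equally well to both genomes.

- Separate Reads: Use the chromosome names in the alignments to separate human (chr1, chr2, ...) and spike-in (chr2L, chr3R, ...) reads.

- Calculate Spike-in Scaling Factor: For each sample, compute SF = (Total spike-in reads in Sample i) / (Average total spike-in reads across all samples).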

- Normalize Human Signal: Create coverage tracks for human reads, scaling by the inverse of SF (1/SF) to correct for global differences. Use this for downstream analysis.
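The separation and scaling steps can be sketched as follows. The sketch assumes that per-chromosome read counts are already available from filtered BAM files and that Drosophila sequences carry a "dm6_" prefix in the combined reference. Both assumptions are illustrative, and the function names are hypothetical.

```python
from statistics import mean

SPIKE_PREFIX = "dm6_"  # assumed prefix for Drosophila sequences in the combined reference


def split_counts(chrom_counts):
    """Return (experimental_reads, spike_in_reads) from per-chromosome read counts."""
    spike = sum(n for chrom, n in chrom_counts.items() if chrom.startswith(SPIKE_PREFIX))
    return sum(chrom_counts.values()) - spike, spike


def spike_in_factors(per_sample):
    """SF_i = spike-in reads in sample i / mean spike-in reads across samples."""
    spikes = {sample: split_counts(counts)[1] for sample, counts in per_sample.items()}
    average = mean(list(spikes.values()))
    return {sample: n / average for sample, n in spikes.items()}


# Hypothetical per-chromosome counts from filtered BAM files.
counts = {
    "control": {"chr1": 900_000, "chrX": 100_000, "dm6_chr2L": 10_000, "dm6_chrX": 5_000},
    "treated": {"chr1": 800_000, "chrX": 90_000, "dm6_chr2L": 20_000, "dm6_chrX": 10_000},
}
for sample, sf in spike_in_factors(counts).items():
    # Human coverage tracks are multiplied by 1/SF.
    print(sample, "SF =", round(sf, 3), "track scale = 1/SF =", round(1 / sf, 3))
```

The prefix also keeps human chrX reads from being counted as spike-in reads, which a bare "chrX" name would allow.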

Diagrams

Title: RPM Workflow and Core Limiting Assumptions

Title: ChIP-seq Normalization Method Decision Guide

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for ChIP-seq Normalization

| Item | Vendor Examples | Function in Context of Normalization |
|---|---|---|
| Spike-in Chromatin | Active Motif (#61686), EpiCypher (#21-1001) | Exogenous chromatin added pre-IP to provide an internal scale for global occupancy changes, enabling correction beyond RPM. |
| Magnetic Protein A/G Beads | Thermo Fisher Scientific, Diagenode | For consistent immunoprecipitation. Variability in bead efficiency is a major confounder that RPM cannot correct. |
| Cell Counter & DNA Quantifier | Bio-Rad (TC20), Invitrogen (Qubit) | Ensures precise starting material amounts, reducing technical variation that simple read scaling ignores. |
| qPCR Kit for Library Quant | KAPA Biosystems, NEB | Accurate library quantification ensures balanced sequencing depth, a prerequisite for any subsequent scaling method. |
| Control (Input) DNA | N/A (Sonicated genomic DNA) | A mandatory control for distinguishing specific signal from background noise, used by advanced methods to improve on RPM. |
| Differential Binding Software | DiffBind, csaw | Statistical packages that implement robust normalization models (e.g., median scaling, loess) to overcome RPM's linearity assumption. |

Technical Support Center: Troubleshooting Guides & FAQs

Thesis Context: This support content is framed within doctoral research investigating the comparative performance of normalization methods for differential binding analysis in ChIP-seq data, specifically evaluating the adaptation of RNA-seq-derived tools DESeq2 and edgeR.

Frequently Asked Questions (FAQs)

Q1: My ChIP-seq data has very high background/noise. Can DESeq2's median-of-ratios normalization handle this?

A1: DESeq2's median-of-ratios method assumes that most features are not differentially bound. ChIP-seq can violate this assumption because signal is concentrated in sparse, focused peaks that may change globally or predominantly in one direction between conditions. This is a core challenge addressed in the thesis. For high-background data, consider csaw with TMM normalization (from edgeR) on window counts, or a tool designed for broad enrichments such as diffReps. The thesis found that normalization using a set of stable, non-differential control regions (e.g., input-based) improves performance.
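To see where the "most regions unchanged" assumption enters, consider the median-of-ratios estimator itself. The minimal re-implementation below is for illustration only; it is not DESeq2's code, and the function name and counts are hypothetical. Each sample's factor is the median ratio of its counts to the per-region geometric mean, so one strongly differential region barely moves it. If most regions shifted, however, the median would shift with them.

```python
import math
from statistics import median


def median_of_ratios(counts):
    """Median-of-ratios size factors (DESeq2-style).

    counts: rows = regions (e.g. consensus peaks), columns = samples.
    Regions with a zero in any sample are skipped because their
    geometric mean is zero.
    """
    n_samples = len(counts[0])
    log_ratios = [[] for _ in range(n_samples)]
    for row in counts:
        if any(c == 0 for c in row):
            continue
        log_geo_mean = sum(math.log(c) for c in row) / n_samples
        for j, c in enumerate(row):
            log_ratios[j].append(math.log(c) - log_geo_mean)
    return [math.exp(median(r)) for r in log_ratios]


counts = [  # hypothetical peak counts; sample 2 is sequenced twice as deeply
    [10, 20],
    [30, 60],
    [5, 10],
    [100, 200],
    [50, 1000],   # one strongly differential region
    [0, 7],       # skipped: contains a zero
]
print(median_of_ratios(counts))
```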

Q2: I get an error in edgeR: "No positive library sizes". What does this mean?

A2: edgeR requires a positive library size for every sample, so first check the column totals of your count matrix (e.g., colSums(counts)). A sample whose counts are all zero will trigger errors of this kind. This can happen, for example, when its BAM file failed or its chromosome names (chr1 vs 1) do not match the peak or bin annotation. Once every sample has counts, filter uninformative regions. A common and effective filter is keep <- rowSums(cpm(y) > 1) >= 2, where y is your DGEList object; it retains only regions with more than 1 count-per-million in at least 2 samples. Filtering rows alone will not rescue an empty sample.
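The two checks can be sketched outside R as follows: first confirm that no sample column sums to zero, then apply the CPM filter row by row. This is a hypothetical Python illustration of the logic, not edgeR code. It ignores edgeR's normalization factors (treating them all as 1), which is how cpm() behaves before calcNormFactors is run.

```python
def library_sizes(counts):
    """Column totals: total reads per sample."""
    return [sum(column) for column in zip(*counts)]


def filter_cpm(counts, min_cpm=1.0, min_samples=2):
    """Keep regions with CPM > min_cpm in at least min_samples samples.

    Mirrors keep <- rowSums(cpm(y) > 1) >= 2, but ignores edgeR
    normalization factors (all treated as 1).
    """
    lib = library_sizes(counts)
    empty = [j for j, size in enumerate(lib) if size <= 0]
    if empty:
        raise ValueError(f"samples with zero total counts (columns): {empty}")
    kept = []
    for row in counts:
        n_pass = sum(c / lib[j] * 1e6 > min_cpm for j, c in enumerate(row))
        if n_pass >= min_samples:
            kept.append(row)
    return kept


# Hypothetical matrix: rows = regions, columns = 3 samples of 1,000,000 reads each.
counts = [[0, 0, 0], [10, 12, 0], [1, 0, 0], [999_989, 999_988, 1_000_000]]
print(filter_cpm(counts))
```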

Q3: Should I use the input sample for normalization in DESeq2/edgeR for ChIP-seq?

A3: Directly including input as a factor in the design matrix is not standard. The prevailing method, supported by the thesis findings, is to use the input to define a set of background regions for normalization. One can calculate a normalization factor (like TMM) from counts in these background regions and apply it to the ChIP samples. Alternatively, tools like ChIPseqSpike (using spike-in chromatin) offer an external control, which the thesis identifies as superior for global normalization changes.
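The background-region approach works as follows: a TMM-style factor is computed only on counts from input-defined background regions and then applied to the ChIP library. The sketch below is a simplified, unweighted trimmed mean of M values. It omits edgeR's precision weights, its A-value trimming, and its automatic reference selection, so it will not reproduce calcNormFactors exactly. Function and variable names are hypothetical.

```python
import math


def simple_tmm_factor(sample, reference, trim=0.3):
    """Simplified trimmed-mean-of-M-values factor on background regions.

    sample, reference: read counts over the same background regions.
    trim: fraction of M values removed in total, split between both tails.
    Unlike edgeR's TMM, there are no precision weights and no A-value trimming.
    """
    n_s, n_r = sum(sample), sum(reference)
    m_values = sorted(
        math.log2((s / n_s) / (r / n_r))
        for s, r in zip(sample, reference)
        if s > 0 and r > 0
    )
    cut = int(len(m_values) * trim / 2)
    kept = m_values[cut:len(m_values) - cut]
    return 2 ** (sum(kept) / len(kept))


reference = [100] * 20                 # hypothetical background-region counts, sample R
sample = [100] * 18 + [1_000, 1_000]   # sample S: two regions carry real signal
factor = simple_tmm_factor(sample, reference)
effective_library = sum(sample) * factor   # edgeR convention: factor scales library size
print(round(factor, 4), round(effective_library))
```

Trimming discards the two enriched regions. The effective library size of S therefore matches R over the truly unchanged background, which is the behavior the answer relies on.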

Q4: How do I choose between DESeq2 and edgeR for my differential binding analysis? A4: The thesis simulation studies indicate:

- edgeR (with glmQLFit): Often more conservative, controlling false discovery rates better in datasets with many low-count peaks. It's generally faster for large datasets.

- DESeq2: Can be more powerful (detect more true positives) in scenarios with strong, consistent replicates but may be sensitive to outliers. Its independent filtering is advantageous.

A summarized performance comparison from the thesis is below (Table 1).

Q5: What is the minimum number of biological replicates required? A5: For any statistically robust conclusion, a minimum of three biological replicates per condition is strongly recommended and is a standard in the field. The thesis power analysis shows that with two replicates, both tools have very high false discovery rates and low reproducibility, regardless of the normalization method used.

Troubleshooting Guides

Issue: Convergence warnings in DESeq2 (betaConv warnings).

Steps:

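- Increase iterations: Run the steps of DESeq() individually and raise the iteration limit: dds <- estimateSizeFactors(dds); dds <- estimateDispersions(dds); dds <- nbinomWaldTest(dds, maxit = 500).

- Adjust fitting options: DESeq(dds, betaPrior=FALSE, minReplicatesForReplace=Inf, fitType="local") disables the beta prior (already the default in current DESeq2 versions) and uses a local dispersion fit, which can help.

- Filter low-count peaks: Ensure you have performed adequate pre-filtering (e.g., rowSums(counts(dds)) >= 10).

- Inspect outliers: Use plotPCA(vst(dds)) to check for sample outliers (plotPCA expects transformed data). Consider removing them if justified.

- Simplify model: If your design is complex, see if a simpler model suffices for your hypothesis.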

Issue: Dispersion estimates in edgeR are near zero or fail to trend. Steps:

- Check filtering: Re-apply the standard filter: keep <- filterByExpr(y), then y <- y[keep, , keep.lib.sizes=FALSE].

- Estimate the trend robustly: Specify a robust dispersion trend using y <- estimateDisp(y, design, robust=TRUE).

- Use glmQLFit: Use the quasi-likelihood pipeline for ChIP-seq: fit <- glmQLFit(y, design, robust=TRUE), then qlf <- glmQLFTest(fit, coef=2).

Data Presentation

Table 1: Thesis Performance Summary of DESeq2 vs. edgeR on Simulated ChIP-seq Data (n=5 reps/group)

| Metric | DESeq2 (with Input Background Norm) | edgeR with TMM (Standard) | edgeR with TMM (Background Norm) |
|---|---|---|---|
| False Discovery Rate (FDR) | 4.8% | 5.1% | 4.9% |
| True Positive Rate (Power) | 92.3% | 89.7% | 91.1% |
| Runtime (minutes) | 22.5 | 11.2 | 11.8 |
| Normalization Stability* | 8.2 | 7.9 | 8.5 |

*Stability score (1-10) measures consistency of results upon replicate subsampling.

Experimental Protocols

Protocol 1: Generating Count Matrix for Peak Regions Using featureCounts

- Align reads: Align ChIP and input FASTQ files to the reference genome (e.g., using Bowtie2 or BWA). Remove duplicates and filter for mapping quality (MAPQ > 10).

- Call peaks: Perform peak calling per sample (e.g., using MACS2). Generate a consensus peak set by concatenating and coordinate-sorting the peak files from all samples, then running bedtools merge.

- Count reads: Use featureCounts (from Rsubread) to count reads falling into each consensus peak for all samples.
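featureCounts reads annotations in GTF or SAF format (SAF is selected with -F SAF), so merged BED peaks need converting. BED starts are 0-based, while SAF uses 1-based inclusive coordinates. The sketch below approximates bedtools merge's default behavior, which joins overlapping and book-ended intervals, and then writes SAF lines. The "peak_N" IDs are hypothetical, and the "+" strand is a placeholder that featureCounts' default unstranded counting ignores.

```python
def merge_intervals(peaks):
    """Merge overlapping or book-ended (chrom, start, end) intervals,
    0-based half-open like BED, approximating bedtools merge defaults."""
    merged = []
    for chrom, start, end in sorted(peaks):
        if merged and merged[-1][0] == chrom and start <= merged[-1][2]:
            merged[-1][2] = max(merged[-1][2], end)
        else:
            merged.append([chrom, start, end])
    return [tuple(interval) for interval in merged]


def to_saf(merged):
    """SAF annotation lines for featureCounts (1-based, inclusive coordinates)."""
    lines = ["GeneID\tChr\tStart\tEnd\tStrand"]
    for i, (chrom, start, end) in enumerate(merged, start=1):
        # BED start is 0-based; SAF is 1-based, so shift the start and keep the end.
        lines.append(f"peak_{i}\t{chrom}\t{start + 1}\t{end}\t+")
    return lines


# Hypothetical peaks pooled from several samples.
peaks = [("chr1", 100, 200), ("chr1", 150, 300), ("chr1", 300, 400),
         ("chr2", 50, 80), ("chr1", 500, 600)]
print("\n".join(to_saf(merge_intervals(peaks))))
```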

Protocol 2: Differential Binding Analysis Workflow with edgeR (Background Normalization)

Load and filter counts in R:

Design, estimate dispersion, and test:

Mandatory Visualizations

ChIP seq Analysis with DESeq2 and edgeR Workflow

Normalization Decision Path in Differential Binding

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for ChIP-seq Differential Analysis

| Item | Function/Benefit |
|---|---|
| High-Fidelity DNA Polymerase (e.g., KAPA HiFi) | Critical for accurate library amplification with minimal bias, essential for quantitative comparisons between samples. |
| Validated Antibody | Target-specific antibody with proven ChIP-grade performance is the single most important factor for successful experiments. |
| Magnetic Protein A/G Beads | Enable efficient pull-down and low-background washes, improving signal-to-noise ratio for cleaner peaks. |
| Commercial Spike-in Chromatin (e.g., S. pombe, Drosophila) | Provides an exogenous reference for normalization, controlling for technical variation (e.g., cell count, IP efficiency). |
| Dual-Indexed Adapter Kits (e.g., Illumina TruSeq) | Allow multiplexing of many samples, reducing batch effects and cost per sample. |
| RNase A & Proteinase K | Essential enzymes for thorough removal of RNA and proteins during reverse crosslinking and DNA purification. |
| Size Selection Beads (SPRI) | Enable clean size selection of library fragments, crucial for consistent sequencing library profiles and peak calling. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: In our ChIP-seq analysis, we observe high background signal even in genomic regions lacking binding sites. Could this be due to insufficient Input Control normalization, and how do we correct it?

A1: Yes, this can be a symptom of inadequate Input Control normalization. The Input Control accounts for non-specific signals from open chromatin, sequencing bias, and genomic amplification artifacts. To correct this, ensure your Input sample is a properly sonicated, non-immunoprecipitated control from the same cell type. Re-process your data using a peak caller like MACS2 (with the --broad flag if analyzing broad histone marks), and explicitly provide the Input BAM file using the -c option. MACS2 uses the Input to estimate a local Poisson background rather than subtracting it. For coverage tracks, a simple depth-normalized subtraction is: Normalized ChIP Signal = (ChIP read count in region / total ChIP reads) - (Input read count in same region / total Input reads).
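The depth-normalized subtraction formula translates directly into code. The minimal sketch below multiplies the result by one million so that it reads as a CPM difference; that unit choice is an assumption, and the function name and counts are hypothetical. Negative values mark regions where the Input fraction exceeds the ChIP fraction.

```python
def depth_normalized_difference(chip_counts, chip_total, input_counts, input_total,
                                per=1_000_000):
    """(ChIP reads / total ChIP reads) - (Input reads / total Input reads) per region,
    multiplied by `per` so the result reads as a CPM difference."""
    if chip_total <= 0 or input_total <= 0:
        raise ValueError("total read counts must be positive")
    return [(c / chip_total - i / input_total) * per
            for c, i in zip(chip_counts, input_counts)]


# Hypothetical regions: one enriched site and one background region.
print(depth_normalized_difference([300, 0], 30_000_000, [50, 40], 25_000_000))
```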

Q2: Our differential binding analysis between treatment and control groups shows erratic results. Could normalization issues between different Input libraries be the cause?

A2: Yes, this is a likely contributor. When comparing multiple ChIP-seq experiments, Input libraries must themselves be normalized to each other. We recommend using a scaling factor based on read counts in non-peak, "background" genomic regions. deepTools bamCompare offers a background-based estimate through --scaleFactorsMethod SES; its readCount option instead scales by total mapped reads. First, create a master list of consensus, invariant background regions. Then, calculate scaling factors to equalize the Input coverage across all samples within these regions before proceeding with ChIP-to-Input comparison for each sample.
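Once each Input library's reads have been summed over the same consensus background regions, the equalizing step is a single ratio per sample. The sketch below uses a hypothetical function name and counts. It scales every Input to the mean background total, which is one arbitrary choice of target; any common target gives the same relative scaling.

```python
from statistics import mean


def background_scale_factors(bg_counts):
    """Factors that equalize Input coverage in shared background regions.

    bg_counts maps sample -> total Input reads in the consensus background regions.
    Multiplying each Input by its factor brings it to the mean background level.
    """
    target = mean(list(bg_counts.values()))
    return {sample: target / n for sample, n in bg_counts.items()}


print(background_scale_factors({"ctrl": 1_200_000, "treat": 800_000}))
```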

Q3: We are working with limited cell numbers and cannot generate a matching Input for every condition. What are the best practices for Input control reuse? A3: Reusing an Input control is permissible only under strict conditions. It is acceptable to use a single Input for biological replicates of the same cell type and genetic background. However, do not reuse an Input across different cell lines, treatments that drastically alter chromatin accessibility (e.g., HDAC inhibitors), or different genetic modifications. If resources are limited, consider generating a deep, high-quality Input library from a pooled sample representing the common genetic background and using it with careful scaling, as described in Q2.

Q4: What is the impact of sequencing depth disparity between ChIP and Input samples on peak calling sensitivity? A4: Insufficient Input depth is a major source of false positives. The ENCODE Consortium standards recommend Input sequencing depth be at least as deep as the corresponding ChIP sample, and ideally 2x deeper for complex genomes. The table below summarizes the effects:

| ChIP Depth | Inadequate Input Depth (Relative to ChIP) | Primary Risk | Recommended Solution |
|---|---|---|---|
| 20 million reads | < 20 million reads | High false positive rate; noise mistaken for signal | Sequence Input to ≥ 30 million reads |
| 40 million reads | ~ 20 million reads (0.5x) | Inability to correct for local biases; unreliable broad peak calling | Down-sample ChIP to match Input depth or deepen Input sequencing |
| 40 million reads | ≥ 40 million reads (1x) | Good for sharp peaks | Proceed with standard analysis |
| 40 million reads | ≥ 80 million reads (2x) | Optimal for broad histone mark analysis | Ideal for publication-quality data |

Q5: How do we validate that our Input Control normalization has been effective? A5: Perform the following quality control checks post-normalization:

- Browser Inspection: Visually inspect signal in IGV at known negative control loci (e.g., gene deserts, silent heterochromatin). The normalized ChIP track should be flat in these regions.

- Cross-Correlation Plot: Generate a strand cross-correlation plot from the ChIP reads. A successful IP shows a clear cross-correlation peak at the fragment length that exceeds the "phantom" peak at the read length. The normalized and relative strand cross-correlation coefficients (NSC and RSC) quantify this.

- FRiP Score Consistency: The Fraction of Reads in Peaks (FRiP) should be reasonable for your target (e.g., >1% for transcription factors, >10% for histone marks). An abnormally high FRiP may indicate over-correction, while a very low FRiP may indicate under-correction.
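FRiP is simply the fraction of reads that fall inside peaks. The minimal sketch below assumes that each read is represented by one 0-based position (e.g., its 5' end) and that peaks are merged and non-overlapping. Counting tools such as featureCounts instead count a read if any of its bases overlaps a peak, so their values will differ slightly. The function name and data are hypothetical.

```python
from bisect import bisect_right


def frip(read_positions, peaks):
    """Fraction of reads whose position lies inside a peak.

    read_positions: iterable of (chrom, pos), 0-based (e.g. 5' ends).
    peaks: merged, non-overlapping (chrom, start, end), 0-based half-open.
    """
    starts, ends = {}, {}
    for chrom, start, end in sorted(peaks):
        starts.setdefault(chrom, []).append(start)
        ends.setdefault(chrom, []).append(end)
    total = in_peaks = 0
    for chrom, pos in read_positions:
        total += 1
        chrom_starts = starts.get(chrom, [])
        i = bisect_right(chrom_starts, pos) - 1   # last peak starting at or before pos
        if i >= 0 and pos < ends[chrom][i]:
            in_peaks += 1
    return in_peaks / total if total else 0.0


peaks = [("chr1", 100, 200), ("chr1", 300, 400)]
reads = [("chr1", 50), ("chr1", 100), ("chr1", 199), ("chr1", 200), ("chr1", 350), ("chr2", 150)]
print(frip(reads, peaks))
```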

Experimental Protocol: Input Control Generation for ChIP-seq

Title: Protocol for Generating a Sequencing-Ready Input Control Library for ChIP-seq Normalization.

Principle: The Input control is a sonicated, non-immunoprecipitated sample that captures the background noise profile of the genome.

Materials:

- Cells for ChIP (≥ 1x10^6)

- Formaldehyde (37%)

- Glycine (2.5M)

- Cell Lysis Buffer

- Nuclear Lysis Buffer

- SDS Lysis Buffer

- Protease Inhibitor Cocktail

- RNase A

- Proteinase K

- Phenol:Chloroform:Isoamyl Alcohol (25:24:1)

- Glycogen

- Ethanol

- TE Buffer

- DynaMag-2 Magnet (or equivalent)

- DNA Clean & Concentrator-5 Kit (Zymo Research)

Method:

- Cross-linking & Harvesting: Cross-link cells with 1% formaldehyde for 10 min at room temperature. Quench with 125mM glycine. Pellet cells.

- Cell Lysis: Resuspend pellet in cold Cell Lysis Buffer. Incubate on ice for 15 min. Pellet nuclei.

- Nuclear Lysis: Resuspend nuclei in Nuclear Lysis Buffer. Incubate on ice for 15 min.

- SDS Lysis & Sonication: Add SDS Lysis Buffer. Sonicate using optimized conditions (e.g., Covaris S220: 140 s, 5% Duty Factor, 140 W Peak Incident Power, 200 cycles per burst) to shear DNA to 100-500 bp fragments. Take 50 µL of sonicated lysate as the "Input" sample. The remainder is used for the immunoprecipitation.

- Reverse Cross-linking (Input Sample): To the 50 µL Input sample, add 100 µL of TE Buffer, 1 µL of RNase A, and 2 µL of 5M NaCl. Incubate at 65°C for 4-6 hours or overnight.

- Protein Digestion: Add 2 µL of Proteinase K. Incubate at 45°C for 2 hours.

- DNA Purification: Purify DNA using a commercial clean-up kit (e.g., Zymo Research DNA Clean & Concentrator-5) following the manufacturer's protocol. Elute in 20 µL of nuclease-free water.

- Library Preparation & Sequencing: Quantify DNA by Qubit. Use 10-50 ng of purified Input DNA for standard Illumina sequencing library preparation (end-repair, A-tailing, adapter ligation, size selection, and PCR amplification). Sequence to the recommended depth (see Table in Q4).

Diagram: Input Control Normalization Workflow

Title: ChIP-seq Input Control Normalization Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Input Control Protocol |
|---|---|
| Formaldehyde (37%) | Cross-links proteins to DNA, freezing in vivo interactions. |
| Covaris S220 Focused-ultrasonicator | Provides consistent, reproducible shearing of chromatin to desired fragment sizes (100-500 bp) with low heat generation. |
| Proteinase K | Digests proteins and histones after reverse cross-linking, freeing DNA for purification. |
| DNA Clean & Concentrator-5 Kit (Zymo) | Efficiently purifies and recovers small amounts of DNA from complex mixtures post reverse cross-linking. |
| Illumina TruSeq ChIP Library Prep Kit | Standardized, high-efficiency kit for preparing sequencing libraries from low-input, sonicated DNA. |
| SPRIselect Beads (Beckman Coulter) | For precise size selection of sequencing libraries, removing adapter dimers and large fragments. |
| Qubit dsDNA HS Assay Kit | Accurate fluorometric quantification of low-concentration, sonicated DNA samples, essential for library prep input. |

Frequently Asked Questions & Troubleshooting Guides

Q1: After switching from read-centric to peak-centric normalization for my comparative ChIP-seq samples, I observe a drastic change in the significance of my differential binding results. Is this expected, and which method should I trust?

A: Yes, this is a common and critical observation. The choice impacts biological interpretation. Read-centric methods (e.g., using all mapped reads) are sensitive to global changes in signal levels, which is ideal for comparing transcription factor (TF) binding under different conditions where the total number of binding sites may change. Peak-centric methods (e.g., counting reads within consensus peaks) focus on changes at predefined, high-confidence sites and are often preferred for histone mark comparisons where the landscape is more stable.

- Troubleshooting: If results flip significance, investigate the overall signal distribution. Generate a metaplot of signal over all called peaks. If the control sample has globally lower read depth, read-centric normalization may over-compensate. For TFs, peak-centric analysis might miss condition-specific peaks. The "trusted" method aligns with your biological question: use peak-centric for focused analysis of known sites and read-centric for genome-wide differential occupancy discovery.

Q2: My spike-in normalized ChIP-seq data shows poor correlation between replicates when I perform peak-centric quantification. What could be the cause?

A: This often points to an inconsistency in the peak calling or peak merging step, which is a prerequisite for peak-centric analysis. Spike-ins control for technical variation in sample preparation, but biological variation in the specific genomic locations bound can still cause replicate discordance if peaks are not called reproducibly.

- Troubleshooting:

- Re-call peaks on each replicate individually and assess overlap using an irreproducible discovery rate (IDR) framework. If few peaks pass a stringent IDR threshold (e.g., IDR < 0.05), replicate concordance at the peak level is poor.

- Ensure your consensus peak set is created from reproducible peaks across all replicates and conditions, not just from merged treatment files. Using a poorly defined consensus set will introduce noise.

- Verify that your spike-in genome is completely excluded from the peak calling process to avoid contamination of your consensus peak set with spike-in sequences.

Q3: When analyzing broad histone marks (e.g., H3K27me3), why does read-centric normalization (like TMM) sometimes fail, and what are the alternatives?

A: Read-centric methods like TMM assume most genomic regions are not differentially bound, which can be violated for broad marks covering large, variable genomic domains. This can lead to over-normalization and false negatives.

- Troubleshooting/Alternative Protocol:

- Implement a hybrid approach: Use a control-centric method like csaw or MAnorm2. csaw counts reads in sliding windows and can derive normalization factors from large background bins, which addresses composition bias. MAnorm2 derives its normalization from regions occupied in the samples being compared (common peaks).

- Protocol: For MAnorm2 on broad marks:
  - Call broad peaks per sample (e.g., with the MACS2 --broad flag).
  - Create a consensus set of all peak regions from all samples.
  - Use MAnorm2 to normalize read counts in these regions; its scaling is estimated from peaks shared between samples. If you prefer background-based factors, count large reference bins (e.g., 10 kb) as csaw does, which accounts for composition bias.

- Consideration: For large, complex datasets, a non-linear normalization method (e.g., quantile normalization on background bins) may be more appropriate than linear scaling.
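Quantile normalization, the non-linear option mentioned above, gives every sample the same value distribution. The sketch below assumes that the columns hold counts (often log-transformed) for the same background bins in each sample. It does not average ties, unlike most production implementations, and the function name is illustrative. Whether and how the resulting mapping is then applied to peak counts is a design choice outside this sketch.

```python
def quantile_normalize(columns):
    """Quantile-normalize samples so that all share one value distribution.

    columns: one list of values per sample, all covering the same bins.
    Ties are ranked by position rather than averaged (a simplification).
    """
    n = len(columns[0])
    if any(len(col) != n for col in columns):
        raise ValueError("all samples must cover the same bins")
    sorted_cols = [sorted(col) for col in columns]
    # Reference distribution: mean of the k-th smallest value across samples.
    reference = [sum(col[k] for col in sorted_cols) / len(columns) for k in range(n)]
    normalized_columns = []
    for col in columns:
        order = sorted(range(n), key=lambda i: col[i])
        normalized = [0.0] * n
        for rank, i in enumerate(order):
            normalized[i] = reference[rank]  # each bin takes the reference value of its rank
        normalized_columns.append(normalized)
    return normalized_columns


print(quantile_normalize([[5, 2, 3, 4], [4, 1, 4, 2]]))
```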

Research Reagent Solutions Toolkit

| Reagent/Material | Function in ChIP-seq Normalization Context |
|---|---|
| Commercial Spike-in Chromatin (e.g., D. melanogaster, S. pombe) | Provides an external standard for cell count normalization. Added in fixed ratio to experimental (H. sapiens) chromatin prior to immunoprecipitation to control for technical variability in steps from cell lysis to library amplification. |
| Spike-in Antibody (Species-Specific) | Antibody recognizing an epitope present in the spike-in chromatin but not in the experimental chromatin (e.g., Drosophila H2Av in commercial spike-in kits). It is added alongside the target antibody so that spike-in recovery can be quantified. |
| Validated ChIP-Grade Antibody | High-specificity, high-affinity antibody is the foundation of any ChIP-seq. Lot-to-lot variability can be a major hidden confounder in comparative studies, affecting both peak-centric and read-centric outcomes. |
| Magnetic Protein A/G Beads | For consistent immunoprecipitation efficiency. Bead amount and incubation time must be rigorously controlled across samples to minimize technical variation that normalization must later correct. |
| PCR-Free or Low-Cycle Library Prep Kit | Minimizes PCR duplication bias and amplification noise, which can skew read depth calculations, a fundamental input for all normalization methods. |
| High-Fidelity DNA Polymerase | Reduces PCR errors during library amplification, ensuring accurate sequencing and read alignment, which is critical for precise read counting in peaks or bins. |
| Size Selection Beads (SPRI) | For reproducible fragment size selection. Inconsistent size selection alters library complexity and insert size distribution, impacting the efficacy of read-centric normalization. |
| Qubit dsDNA HS Assay Kit | Accurate quantification of ChIP DNA and final libraries is crucial for equimolar pooling prior to sequencing, establishing the baseline for between-sample comparisons. |

Table 1: Impact of Normalization Strategy on Differential Binding Analysis Results.

| Analysis Scenario | Optimal Norm. Method | Key Metric Influenced | Typical Artifact if Misapplied |
|---|---|---|---|
| Transcription Factor, Two Conditions | Read-Centric (e.g., TMM on all reads) | Number of condition-specific peaks | Loss of true global changes; false negative rate increases. |
| Histone Mark (Sharp), Multiple Cell Lines | Peak-Centric (e.g., counts in consensus peaks + DESeq2) | Fold change at known regulatory sites | Inflation of false positives at low-abundance sites. |
| Histone Mark (Broad), Disease vs. Control | Control-Centric/Hybrid (e.g., csaw, MAnorm2) | Size and significance of broad domains | Over-normalization, masking of large-scale differential regions. |
| Low-Input/FFPE Samples with Spike-Ins | Spike-In Based (Linear Scaling) | Accuracy of biological signal strength | Under/over-correction for technical yield differences. |

Table 2: Quantitative Comparison of Normalization Methods in a Simulated Dataset.

| Method | Normalization Basis | Sensitivity (Recall) | False Discovery Rate (FDR) Control | Computational Speed |
|---|---|---|---|---|
| Read-Centric (TMM) | Global read count distribution | High | Moderate (can be poor for broad marks) | Fast |
| Peak-Centric (DESeq2/edgeR) | Read counts within consensus peaks | Moderate (for pre-defined peaks) | Excellent | Moderate |
| Spike-In (Linear Scaling) | Exogenous chromatin read count | Variable (depends on spike-in accuracy) | Good | Very Fast |
| Control-Centric (csaw) | Read counts in background bins | High for broad patterns | Excellent | Slow |

Key Experimental Protocols

Protocol 1: Implementing Spike-in Chromatin Normalization for TF ChIP-seq

- Spike-in Addition: Fix cells and isolate chromatin. Add 1-10% (by chromatin mass) of commercially available D. melanogaster or S. pombe chromatin to your human chromatin sample before proceeding to sonication.

- Co-Immunoprecipitation: Perform the ChIP procedure using an antibody that recognizes the target epitope in both species (or a separate, validated spike-in-specific antibody).

- Library Preparation & Sequencing: Prepare sequencing libraries from the immunoprecipitated DNA. Sequence on a platform of choice (e.g., Illumina).

- Sequencing Alignment: Align reads to a concatenated human + spike-in reference genome using bowtie2 or BWA, and flag reads that align to the spike-in genome.

- Normalization Factor Calculation: Calculate the scaling factor SF = (total spike-in reads in Sample A) / (total spike-in reads in Sample B).

- Downstream Analysis: Multiply the human read counts in Sample B by SF before peak calling (for read-centric analyses). Differential count tools such as DESeq2 divide counts by size factors, so there you would instead set each sample's size factor proportional to its spike-in read count, which is the inverse of SF.
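The scaling-factor arithmetic above can be sketched as follows. The dictionary of per-sample spike-in read counts and both function names are hypothetical.

- The first function returns the multiplicative SF defined above.
- The second returns size factors in the convention DESeq2 uses: counts are divided by them, and here they are normalized to a geometric mean of 1.

Such size factors could be assigned with DESeq2's `sizeFactors()` setter instead of letting DESeq2 estimate them.

```python
import math


def spikein_scaling_factors(spike_reads: dict, reference: str) -> dict:
    """Multiplicative factors: spike-in reads in the reference sample divided by
    spike-in reads in each sample. Multiplying a sample's counts by its factor
    puts it on the reference sample's scale."""
    ref = spike_reads[reference]
    return {name: ref / n for name, n in spike_reads.items()}


def spikein_size_factors(spike_reads: dict) -> dict:
    """Divisive size factors proportional to spike-in depth, scaled so their
    geometric mean is 1 (the inverse of the factors above, up to a constant)."""
    logs = {name: math.log(n) for name, n in spike_reads.items()}
    mean_log = sum(logs.values()) / len(logs)
    return {name: math.exp(value - mean_log) for name, value in logs.items()}
```

Take 200,000 spike-in reads in Sample A and 100,000 in Sample B. SF for B is 2.0, so B's human counts are doubled. B's size factor is half of A's, which has the same effect when counts are divided.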

Protocol 2: Peak-Centric Differential Analysis with DESeq2

- Peak Calling: Call peaks on each biological replicate individually using a tool like MACS2.

- Consensus Peak Set: Generate a union set of all peaks called across all samples and replicates using tools like bedtools merge.

- Read Counting: Count reads aligning to each consensus peak region for every sample using featureCounts or HTSeq.

- DESeq2 Analysis: Build a DESeqDataSet from the count matrix with DESeqDataSetFromMatrix(). Run DESeq(), which estimates size factors and fits the model, then extract the results with results().

- Interpretation: Results provide log2 fold changes and adjusted p-values for differential occupancy at each consensus peak.
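DESeq2 estimates size factors by the median-of-ratios method, the Median Ratio Normalization mentioned earlier. Each sample's factor is the median, across regions, of its count divided by that region's geometric mean across samples. The Python sketch below reproduces that calculation on a peaks-by-samples matrix, using only peaks with non-zero counts in every sample. It is illustrative and is not DESeq2's own code; the function name is hypothetical.

```python
import numpy as np


def median_of_ratios(counts):
    """Median-of-ratios size factors for a (peaks x samples) count matrix."""
    counts = np.asarray(counts, dtype=float)
    usable = np.all(counts > 0, axis=1)                     # geometric mean is 0 if any count is 0
    log_counts = np.log(counts[usable])
    log_geo_means = log_counts.mean(axis=1, keepdims=True)  # log geometric mean per peak
    # Median over peaks of log(count / geometric mean), back-transformed.
    return np.exp(np.median(log_counts - log_geo_means, axis=0))
```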

Visualizations

Title: Workflow Comparison: Peak vs. Read Centric Analysis

Title: Spike-In Normalization Workflow

Title: Decision Tree for Choosing a Normalization Strategy

This technical support center is framed within a broader thesis research context investigating the critical impact of normalization methods on differential peak calling in ChIP-seq analysis. The choice and application of tools like MACS2 and DiffBind, particularly their normalization steps, directly influence downstream biological interpretation and drug target identification.

Troubleshooting Guides & FAQs

Q1: During MACS2 callpeak analysis, I encounter the error: "AssertionError: Chromosome ... not found in the genome." What does this mean and how do I resolve it?

A: MACS2 does not keep an internal database of chromosome names. The -g option only sets the effective genome size, and chromosomes are read from the alignment files themselves. A "not found" chromosome error therefore most likely reflects inconsistent naming between the files used in the run, for example 'chr1' in the ChIP BAM vs. '1' in the control BAM. To resolve:

- Check chromosome naming conventions in each BAM header using samtools view -H your_file.bam | grep '^@SQ'.

- Ensure consistency. If one file uses '1' and another uses 'chr1', pre-process the BAM file to rename chromosomes: extract the header with samtools view -H, edit it with a sed/awk command, apply it with samtools reheader, then re-index.
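The header-editing step can also be sketched in Python instead of sed/awk. The hypothetical helper below adds a 'chr' prefix to `SN:` names on `@SQ` lines and maps 'MT' to 'chrM'. Both rules assume an Ensembl-to-UCSC conversion. Unplaced contigs (e.g., GL-prefixed scaffolds) would need their own mapping.

```python
def add_chr_prefix(header_text: str) -> str:
    """Rewrite SN: names on @SQ lines from Ensembl style ('1', 'MT') to
    UCSC style ('chr1', 'chrM'); all other header lines pass through unchanged."""
    out = []
    for line in header_text.splitlines():
        if line.startswith("@SQ"):
            fields = line.split("\t")
            for i, field in enumerate(fields):
                if field.startswith("SN:"):
                    name = field[3:]
                    if not name.startswith("chr"):           # leave UCSC names alone
                        name = "chrM" if name == "MT" else "chr" + name
                    fields[i] = "SN:" + name
            line = "\t".join(fields)
        out.append(line)
    return "\n".join(out) + "\n"
```

To use it:

1. Feed it the output of `samtools view -H in.bam` and write the result to a file.
2. Apply that file with `samtools reheader new_header.sam in.bam > renamed.bam`.
3. Index `renamed.bam`.

BAM records refer to reference sequences by their position in the header. Renaming `@SQ` entries without reordering them therefore renames the chromosome for every read.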

Q2: When running DiffBind's dba.analyze() function, I get the error: "Error in .normReads ... number of rows of matrices must match." How can I fix this?

A: This error typically arises from peak set inconsistency. Peaks must have the same genomic coordinates across all samples after the counting step. Troubleshoot as follows:

- Re-run dba.count() with bUseSummarizeOverlaps=TRUE to ensure consistent counting.

- Verify that all your BAM files are aligned to the same reference genome build.

- Check that the peak caller output (e.g., from MACS2) for each sample was generated with identical parameters, especially the --keep-dup and -q/-p value thresholds.
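A quick way to check the reference build is to compare the `@SQ` name and length pairs across BAM headers. Chromosome lengths differ between builds: chr1 is 249,250,621 bp in hg19 and 248,956,422 bp in hg38. The helpers below are a hypothetical sketch. They take header text for each sample, such as the output of `samtools view -H`, keyed by sample name.

```python
def sq_signature(header_text: str) -> dict:
    """Map each reference sequence name (SN) to its length (LN) from @SQ lines."""
    signature = {}
    for line in header_text.splitlines():
        if line.startswith("@SQ"):
            tags = dict(field.split(":", 1) for field in line.split("\t")[1:])
            signature[tags["SN"]] = int(tags["LN"])
    return signature


def mismatched_builds(headers: dict) -> list:
    """Return the samples whose @SQ name/length pairs differ from the first sample's."""
    names = list(headers)
    reference = sq_signature(headers[names[0]])
    return [name for name in names[1:] if sq_signature(headers[name]) != reference]
```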

Q3: My DiffBind results show an unusually high number of differentially bound sites (DBS), often in the tens of thousands. Is this biologically plausible, and what normalization step should I examine?

A: While possible, such high numbers often signal inadequate normalization. Within the thesis context of normalization method research, this highlights the sensitivity of results to background correction.

- Action: Re-examine the dba.normalize step. The default is lib.method=DBA_LIBSIZE_BACKGROUND for background-aware library size normalization. Consider experimenting with other methods like DBA_LIBSIZE_FULL or DBA_LIBSIZE_PEAKREADS to assess their impact on the result set size, as this is a core thesis investigation. Always correlate the number of DBS with the sequencing depth and IP efficiency of your samples.
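A common proxy for IP efficiency is the fraction of reads in peaks (FRiP). Tabulating it next to depth makes it easier to relate the DBS count to both quantities. The sketch below builds that table per sample from read counts you have already computed, for example with featureCounts on the consensus peaks. The helper names and input layout are hypothetical.

```python
def frip(reads_in_peaks: int, total_mapped_reads: int) -> float:
    """Fraction of mapped reads that fall inside peaks."""
    return reads_in_peaks / total_mapped_reads


def qc_table(samples: dict) -> list:
    """samples: name -> (reads_in_peaks, total_mapped_reads).
    Returns (name, depth, FRiP) rows sorted by FRiP, lowest first."""
    rows = [(name, total, frip(in_peaks, total)) for name, (in_peaks, total) in samples.items()]
    return sorted(rows, key=lambda row: row[2])
```

A sample whose FRiP is markedly lower than the rest of its group is a candidate for the kind of technical imbalance that can inflate DBS counts under a given normalization.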

Q4: In MACS2, what is the practical difference between the -q (FDR) and -p (p-value) cutoffs, and which should I use for publication-quality analysis?

A: The -q cutoff is the False Discovery Rate (FDR) based on the Benjamini-Hochberg procedure. The -p cutoff uses raw p-values. For publication, FDR (-q) is strongly preferred as it corrects for multiple testing. A common threshold is -q 0.05. Using -p (e.g., -p 1e-5) can yield many false positives in genome-wide studies. The thesis research underscores that normalization preceding peak calling influences the p-value distribution, thereby affecting both -p and -q based results.
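The Benjamini-Hochberg adjustment behind an FDR cutoff can be sketched in a few lines. Sort the p-values, scale each by n/rank, and enforce monotonicity starting from the largest. This illustrates the procedure only. MACS2 computes its q-values internally from its own scores, and the function below is not its code.

```python
def benjamini_hochberg(pvalues):
    """Return Benjamini-Hochberg adjusted p-values (q-values) in the input order."""
    n = len(pvalues)
    order = sorted(range(n), key=lambda i: pvalues[i])  # indices from smallest to largest p
    adjusted = [0.0] * n
    running_min = 1.0                                   # q-values are capped at 1
    # Walk from the largest p-value down so that q-values never increase with rank.
    for rank in range(n, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * n / rank)
        adjusted[i] = running_min
    return adjusted
```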

Q5: DiffBind offers multiple normalization methods in dba.normalize. How do I choose between 'lib.methods' like DBA_LIBSIZE_FULL and DBA_LIBSIZE_BACKGROUND?

A: This choice is central to our thesis research. The table below summarizes key differences:

| Normalization Method (lib.method) | What it Normalizes By | Best Use Case | Consideration for Thesis Research |
|---|---|---|---|
| DBA_LIBSIZE_FULL | Total reads in the BAM file. | When global chromatin & IP efficiency are highly consistent across all samples. | Simple but can be biased by non-specific background signals. |
| DBA_LIBSIZE_BACKGROUND (Default) | Reads in neutral genomic regions (background). | Most scenarios; accounts for background noise differences. | The definition of "background" is critical and can vary. |
| DBA_LIBSIZE_PEAKREADS | Reads only within the consensus peak set. | Focusing on relative changes within identified binding sites. | Risks circularity; may miss global shifts in binding. |
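Conceptually, the three options differ in which read total stands in for library size. The sketch below turns each definition into relative scaling factors so their disagreement is visible. It divides each sample's library size by the mean across samples, a convention chosen here for illustration. The per-sample totals are hypothetical inputs you would compute from your BAM files and peak sets. The code does not call DiffBind.

```python
def library_size_factors(libsizes: dict) -> dict:
    """Relative scaling factors: each sample's library size / the mean library size."""
    mean = sum(libsizes.values()) / len(libsizes)
    return {sample: size / mean for sample, size in libsizes.items()}


def compare_methods(lib_defs: dict) -> dict:
    """lib_defs: method name -> {sample: library size under that definition}."""
    return {method: library_size_factors(sizes) for method, sizes in lib_defs.items()}
```

Suppose sample A has 30 M total reads and 3 M in peaks, while sample B has 20 M total and 4 M in peaks. The full-library factors favor A (1.2 vs. 0.8), while the peak-read factors favor B (about 0.86 vs. 1.14). This reversal is why the choice can change the DBS list.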

Protocol: Comparative Evaluation of Normalization Methods in a DiffBind Workflow

Objective: To systematically evaluate the impact of different dba.normalize library size methods on the final list of differentially bound regions.

Methodology:

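- Peak Calling & Sample Sheet: Run MACS2 (macs2 callpeak -t ChIP.bam -c Input.bam -f BAM -g hs -q 0.05 -n sample) for all samples. Create a DiffBind sample sheet (samples.csv).

- DiffBind Consensus Peak Set: Load the sample sheet with dba(sampleSheet="samples.csv") and count reads in the consensus peak set with dba.count().

- Normalization & Differential Analysis Arms: Apply three different normalization methods in parallel (DBA_LIBSIZE_FULL, DBA_LIBSIZE_BACKGROUND, DBA_LIBSIZE_PEAKREADS), each followed by dba.contrast() and dba.analyze().

- Data Extraction: For each result (res_full, res_bg, res_peak), extract the report of differentially bound sites with dba.report(..., th=1). Here th is the FDR threshold, and th=1 returns every site, so apply your chosen cutoff (e.g., 0.05) when counting DBS.

- Quantitative Comparison: Create a summary table comparing the number of DBS, their fold-change distributions, and overlap (Venn diagram) between the three methods.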

Expected Outcome: The thesis research posits that DBA_LIBSIZE_BACKGROUND will provide the most robust and conservative list of DBS, while DBA_LIBSIZE_FULL may be influenced by experimental artifacts, and DBA_LIBSIZE_PEAKREADS may be overly specific.
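The overlap comparison in the final protocol step can be summarized numerically alongside the Venn diagram. The sketch below assumes that each method's significant sites have been turned into a set of identifiers such as "chr1:100-300", a hypothetical convention. For each pair of methods, it reports the number of shared sites and their Jaccard index.

```python
from itertools import combinations


def overlap_summary(results: dict) -> dict:
    """results: method -> set of DBS identifiers.
    Returns {(method_a, method_b): (shared_count, jaccard_index)}."""
    summary = {}
    for a, b in combinations(sorted(results), 2):
        shared = results[a] & results[b]
        union = results[a] | results[b]
        summary[(a, b)] = (len(shared), len(shared) / len(union) if union else 0.0)
    return summary
```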

Visualizations

Diagram 1: ChIP-seq Differential Analysis Workflow: MACS2 to DiffBind

Diagram 2: DiffBind Normalization Method Decision Logic

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in ChIP-seq / Differential Analysis |
|---|---|
| High-Quality Antibodies | For specific immunoprecipitation (IP) of the target protein. Specificity is paramount for clean signal. |
| Magnetic Protein A/G Beads | Used to capture antibody-protein-DNA complexes during the ChIP protocol. |
| Cell Fixative (e.g., Formaldehyde) | Crosslinks proteins to DNA to preserve in vivo binding interactions. |
| Sonication System (Covaris) | Shears crosslinked chromatin to optimal fragment sizes (200-600 bp) for sequencing. |
| Library Prep Kit (e.g., NEBNext) | Prepares the immunoprecipitated DNA for high-throughput sequencing. |
| Size Selection Beads (SPRI) | For clean purification and size selection of DNA fragments during library prep. |
| High-Sensitivity DNA Assay (Bioanalyzer) | Accurately quantifies DNA libraries and checks their fragment size distribution before sequencing. |
| Alignment Software (Bowtie2/BWA) | Maps sequenced reads to the reference genome to create BAM files. |
| Peak Caller (MACS2) | Identifies genomic regions with significant enrichment of mapped reads. |
| Differential Analysis Tool (DiffBind) | Statistically compares read counts in peaks across conditions to find differential binding. |

Troubleshooting ChIP-seq Normalization: Solving Common Pitfalls

Troubleshooting Guides & FAQs

FAQ 1: After normalizing my ChIP-seq data, I still see a global shift in the signal between my treatment and control samples when visualized in a genome browser. What could be the cause?

Answer: This is a classic sign of inadequate background normalization. Simple library-size methods such as Reads Per Million (RPM) do not account for differences in background noise and non-specific pull-down efficiency. The shift you see most likely reflects systematic technical bias rather than biological signal. Within the broader thesis on ChIP-seq normalization, this underscores the need for methods that separate true signal from background. Examples include SES (Signal Extraction Scaling), spike-in-based scaling, and non-linear normalization.
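The core idea of SES (Diaz et al., 2012) can be sketched on binned counts in four steps:

1. Order the bins by ChIP signal.
2. Find the point where the cumulative input fraction most exceeds the cumulative ChIP fraction.
3. Treat the bins up to that point as background.
4. Scale input to ChIP using read totals in that background only.

This is a simplified illustration with hypothetical function and argument names. It is not the implementation used by any particular tool.

```python
import numpy as np


def ses_scale_factor(chip_bins, input_bins):
    """Signal Extraction Scaling sketch: returns the factor by which to multiply
    input counts so that input matches ChIP in the inferred background bins."""
    chip = np.asarray(chip_bins, dtype=float)
    inp = np.asarray(input_bins, dtype=float)
    order = np.argsort(chip)                          # bins from lowest to highest ChIP signal
    chip_cum = np.cumsum(chip[order]) / chip.sum()    # cumulative fraction of ChIP reads
    input_cum = np.cumsum(inp[order]) / inp.sum()     # cumulative fraction of input reads
    k = int(np.argmax(input_cum - chip_cum))          # last bin treated as background
    background = order[: k + 1]
    return chip[background].sum() / inp[background].sum()
```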