Controlling False Discovery Rate in ChIP-Seq Analysis: A Practical Guide for Biomedical Researchers

Abstract

This comprehensive guide demystifies false discovery rate (FDR) control in ChIP-seq data analysis for researchers, scientists, and drug development professionals. We first explore why FDR control is critical for avoiding spurious peaks and misleading biological interpretations. We then detail practical methodologies, including peak calling algorithms, q-value calculation, and IDR analysis. The troubleshooting section addresses common pitfalls like low replicate concordance and library complexity issues. Finally, we compare validation strategies using orthogonal assays and computational benchmarks. This article synthesizes current best practices to ensure statistically robust and biologically meaningful ChIP-seq results.

Why FDR Control is Non-Negotiable: The Risks of False Positives in ChIP-Seq Data

The High Stakes of False Positives in Transcription Factor and Histone Mark Studies

Technical Support Center

Troubleshooting Guide: Common ChIP-seq Artifacts

Issue 1: High Background/Noise in Sequencing Data

- Q: My ChIP-seq tracks show high background noise across the genome, masking true peaks. What are the main causes?

- A: This is often due to suboptimal antibody specificity (off-target binding) or inadequate fragmentation. Over-fixation can crosslink proteins non-specifically, and poor sonication can leave large chromatin fragments that map ambiguously. High background directly inflates false discovery rates (FDR) in peak calling.

Issue 2: Inconsistent Replicate Concordance

- Q: My biological replicates show low overlap in called peaks. How should I proceed?

- A: Low replicate concordance is a hallmark of uncontrolled false positives or technical variability. First, assess replicate quality using metrics like the Irreproducible Discovery Rate (IDR). Re-evaluate antibody validation (see FAQ) and ensure consistent cell counting and chromatin input normalization across replicates.

Issue 3: Peak Calls in Genomic "Blacklist" Regions

- Q: My pipeline called strong peaks in telomeric, centromeric, or satellite repeat regions. Are these real?

- A: They are almost always false positives. These regions are prone to ultra-high signal due to structured repeats and mapping artifacts. They must be filtered using a curated genomic blacklist (e.g., ENCODE DAC Blacklisted Regions) as a standard step in FDR control.

Frequently Asked Questions (FAQs)

Q: What is the single most important factor to reduce false positives in ChIP experiments?

- A: Antibody validation. Using antibodies not rigorously validated for ChIP-seq is the largest source of false signals. Always use ChIP-validated antibodies, and consult resources like the ENCODE Antibody Validation Database.

Q: How do I choose the correct statistical threshold (p-value/q-value) for my peak caller?

- A: There is no universal value. The threshold must be determined empirically based on your experimental context and desired FDR. Use the IDR framework for transcription factors. For broad histone marks, tools like SICER2 or BroadPeak that use spatial clustering are more appropriate than point-source peak callers.

Q: What control is absolutely mandatory for proper FDR estimation?

- A: A matched input (or IgG control) experiment is non-negotiable. It accounts for sequencing bias, open chromatin effects, and genomic background. Peak calling must be performed against this control, not against the genome alone.

Q: My positive control region works, but my target of interest shows no signal. Does this mean my experiment failed?

- A: Not necessarily. A successful positive control validates the protocol. A lack of signal at a novel target could be a true negative. However, you must first rule out false negatives caused by poor epitope accessibility, insufficient sequencing depth, or the target's genuine absence in your cell model.

Experimental Protocol: Crosslinking ChIP-seq for a Transcription Factor

- Cell Fixation: Treat ~1x10^7 cells with 1% formaldehyde for 10 minutes at room temperature. Quench with 125mM glycine.

- Cell Lysis: Lyse cells in SDS Lysis Buffer (1% SDS, 10mM EDTA, 50mM Tris-HCl pH 8.1) with protease inhibitors. Pellet nuclei.

- Chromatin Shearing: Sonicate lysate to shear chromatin to an average size of 200-500 bp. Verify fragment size by agarose gel electrophoresis.

- Immunoprecipitation: Dilute sonicated lysate in ChIP Dilution Buffer (0.01% SDS, 1.1% Triton X-100, 1.2mM EDTA, 16.7mM Tris-HCl pH 8.1, 167mM NaCl). Incubate with 2-5 µg of target-specific antibody and Protein A/G beads overnight at 4°C.

- Washes: Wash beads sequentially with:

- Low Salt Wash Buffer (0.1% SDS, 1% Triton X-100, 2mM EDTA, 20mM Tris-HCl pH 8.1, 150mM NaCl)

- High Salt Wash Buffer (0.1% SDS, 1% Triton X-100, 2mM EDTA, 20mM Tris-HCl pH 8.1, 500mM NaCl)

- LiCl Wash Buffer (0.25M LiCl, 1% NP-40, 1% deoxycholate, 1mM EDTA, 10mM Tris-HCl pH 8.1)

- TE Buffer (10mM Tris-HCl pH 8.0, 1mM EDTA)

- Elution & De-crosslinking: Elute complexes in Elution Buffer (1% SDS, 0.1M NaHCO3). Add NaCl to 200mM and reverse crosslinks at 65°C for 4+ hours.

- DNA Purification: Treat with RNase A and Proteinase K. Purify DNA using phenol-chloroform extraction and ethanol precipitation.

- Library Preparation & Sequencing: Construct sequencing library using standard kits. Sequence on an appropriate platform to achieve sufficient depth (typically >10 million non-duplicate reads for TFs).

Data Presentation: Common Causes of False Positives & Mitigation Strategies

| Cause of False Positive | Impact on Data | Recommended Mitigation Strategy |

|---|---|---|

| Non-specific Antibody | High background, peaks in blacklist regions. | Use ChIP-validated antibodies; perform knockout/knockdown validation. |

| Inadequate Input Control | Inaccurate background modeling, inflated peak calls. | Always use a matched, sequenced input DNA control for peak calling. |

| Over-fixation | Reduced antigen accessibility, increased non-specific crosslinking. | Optimize fixation time/temperature; do not exceed 10 min with 1% formaldehyde. |

| Under-sonication | Large fragments cause ambiguous mapping and broad, false peaks. | Optimize sonication to achieve 200-500 bp fragments; check on gel. |

| PCR Duplicates | Over-amplification of single fragments can create artifact peaks. | Use duplex Unique Molecular Identifiers (UMIs) during library prep. |

| Poor Replicate Concordance | Few peaks pass the IDR threshold; irreproducible results. | Use at least two biological replicates (n≥2) and apply IDR analysis for TFs. |

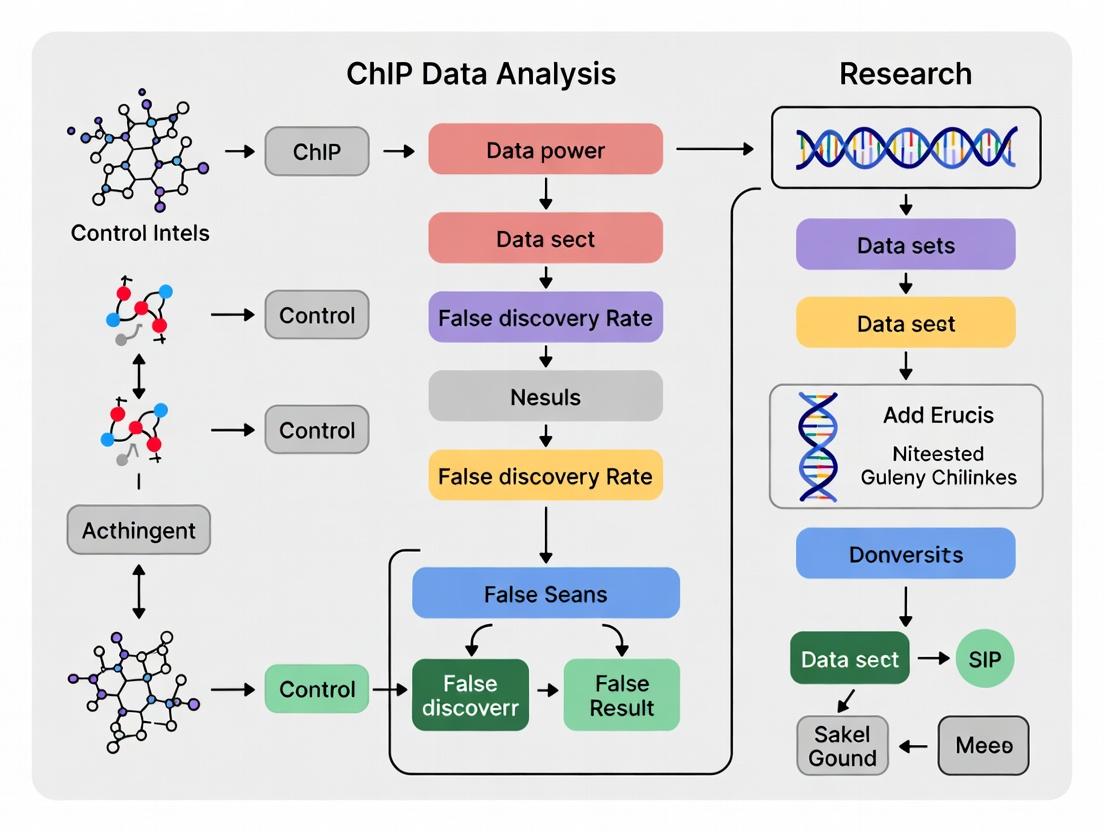

Visualization: ChIP-seq Analysis Workflow for FDR Control

Visualization: Sources of False Signals in ChIP Experiments

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function & Importance for FDR Control |

|---|---|

| ChIP-Validated Antibody | The primary reagent. Must be validated for specificity in ChIP assays using knockout/knockdown cells to prevent off-target binding (major false positive source). |

| Matched Input DNA | Control chromatin taken before immunoprecipitation. Essential for normalizing sequencing and open chromatin bias during peak calling. Not using it invalidates FDR estimates. |

| Magnetic Protein A/G Beads | For antibody-antigen complex capture. Consistent bead quality reduces non-specific background pull-down. |

| Duplex Unique Molecular Identifiers (UMIs) | Short random nucleotide sequences ligated to DNA fragments pre-amplification. Allow bioinformatic removal of PCR duplicates, preventing over-amplification artifacts. |

| Genomic Blacklist (BED file) | Curated list of problematic genomic regions (e.g., ENCODE DAC Blacklist). Filtering peaks overlapping these regions removes a known class of technical false positives. |

| IDR Analysis Pipeline | (Irreproducible Discovery Rate) A statistical method to assess reproducibility between replicates for point-source peaks (e.g., TFs), providing a consistent FDR benchmark. |

Technical Support Center & FAQs

FAQ 1: Why does my ChIP-seq analysis show thousands of significant peaks with a p-value < 0.05, but I know many are likely false positives?

- Answer: This is a classic multiple hypothesis testing problem. If you test 50,000 genomic regions at p < 0.05 and none of them is truly bound, you would still expect about 2,500 regions (50,000 × 0.05) to pass by chance alone. A p-value is the probability of observing data at least as extreme as yours under the null hypothesis (no binding) for a single test. It does not control the overall error rate across all tests in your experiment. You must apply a multiple testing correction such as the False Discovery Rate (FDR).
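
The arithmetic behind this answer can be sketched in a few lines of Python. The function name is illustrative, and the second call assumes the 8,000 true binding sites used in the simulation note later in this section.

```python
def expected_false_positives(n_tests, alpha, n_true_sites=0):
    """Expected number of unbound (null) regions that pass a raw p-value cutoff."""
    n_null = n_tests - n_true_sites  # only null regions can be false positives
    return n_null * alpha

# Every one of the 50,000 tested regions is unbound
all_null = expected_false_positives(50_000, 0.05)

# Assumption: 8,000 of the 50,000 regions are genuinely bound
with_true_sites = expected_false_positives(50_000, 0.05, n_true_sites=8_000)

print(f"all null: {all_null:.0f}, with 8,000 true sites: {with_true_sites:.0f}")
```

Even when some regions are truly bound, the number of chance hits stays in the thousands, which is why a raw p < 0.05 cutoff is not usable for a peak list.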

FAQ 2: What is the practical difference between using a Benjamini-Hochberg FDR (q-value) threshold versus a p-value threshold for my final peak list?

- Answer: A p-value threshold (e.g., p < 1e-5) controls the per-test chance of a false positive. An FDR threshold (e.g., q < 0.01) controls the proportion of accepted discoveries (your peak list) that are expected to be false positives. Using an FDR threshold of 0.01 means you accept that approximately 1% of the peaks in your final list are incorrect, giving you a directly interpretable error rate for your downstream biological validation.

FAQ 3: My peak caller (MACS2) outputs both p-values and q-values. Which one should I use to filter peaks, and what cutoff is typical?

- Answer: You should use the q-value (FDR-adjusted p-value) for filtering your final list of high-confidence peaks. A typical stringent cutoff is FDR < 0.01. The p-values are used internally by the algorithm to rank regions, but the q-values provide the corrected measure of significance. Relying on raw p-values will lead to an unreliably high number of false discoveries.
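
To make the relationship between p-values and q-values concrete, here is a minimal pure-Python sketch of the Benjamini-Hochberg adjustment. It illustrates the procedure itself and is not MACS2's internal code; the ten p-values are invented toy data.

```python
def benjamini_hochberg(pvalues):
    """Return BH-adjusted p-values (q-values) in the original input order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])  # indices by ascending p
    qvalues = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so that q-values stay monotone.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        qvalues[i] = running_min
    return qvalues

# Toy p-values for ten candidate regions (hypothetical)
pvals = [0.001, 0.008, 0.039, 0.041, 0.042, 0.06, 0.074, 0.205, 0.212, 0.216]
qvals = benjamini_hochberg(pvals)

raw_hits = [p for p in pvals if p < 0.05]
fdr_hits = [p for p, q in zip(pvals, qvals) if q < 0.05]
print(len(raw_hits), "regions pass p < 0.05;", len(fdr_hits), "pass q < 0.05")
```

In this toy set, several regions that look significant by raw p-value no longer pass once the correction accounts for the number of tests.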

FAQ 4: After applying an FDR cutoff, my negative control sample (IgG) still has some called "peaks." Is this normal?

- Answer: Yes, this can happen and underscores the importance of control samples. An FDR of 0.05 means 5% of your called peaks are expected to be false. If your control sample has peaks at the same cutoff, it indicates the presence of systematic biases (e.g., open chromatin regions, repetitive sequences) that the statistical model may not fully account for. Best practice is to use an IDR (Irreproducible Discovery Rate) analysis between replicates or subtract/compare against the control sample peaks.

FAQ 5: How does the choice of FDR control method (e.g., Benjamini-Hochberg vs. Storey’s q-value) impact sensitivity in ChIP-seq experiments with broad peaks (like H3K27me3)?

- Answer: The standard Benjamini-Hochberg (BH) procedure is calibrated for the worst case in which every null hypothesis is true (π0 = 1), which makes it conservative when many tested regions are genuinely enriched. Storey's method estimates the proportion of true null hypotheses (π0) from the data, which can increase sensitivity (power), especially in experiments like broad histone mark ChIP-seq where a larger proportion of the genome is truly bound. Using a method that estimates π0 can yield more discoveries at the same nominal FDR level.
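
A rough sketch of the π0 idea, assuming the simple fixed-λ estimator (p-values above λ are treated as coming almost entirely from true nulls). The function name, the choice λ = 0.5, and the hand-built p-values are illustrative assumptions; dedicated packages use more careful smoothing over λ.

```python
def estimate_pi0(pvalues, lam=0.5):
    """Fixed-lambda estimate of the proportion of true null hypotheses (pi0)."""
    m = len(pvalues)
    if m == 0:
        raise ValueError("need at least one p-value")
    # Null p-values are uniform on [0, 1], so a fraction (1 - lam) of them exceed lam.
    n_above = sum(1 for p in pvalues if p > lam)
    return min(1.0, n_above / (m * (1.0 - lam)))

# Hand-built toy data: 80 evenly spread "null" p-values and 20 small "bound" ones
null_pvals = [(i + 0.5) / 80 for i in range(80)]
bound_pvals = [1e-4 * (i + 1) for i in range(20)]

pi0 = estimate_pi0(null_pvals + bound_pvals)

# With a fixed pi0, the q-value is pi0 times the BH-adjusted p-value, so a 5%
# threshold behaves like running BH at the looser level 0.05 / pi0.
effective_bh_level = 0.05 / pi0
print(f"pi0 = {pi0:.2f}, equivalent BH level = {effective_bh_level:.4f}")
```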

Table 1: Core Differences Between P-value and FDR in Peak Calling

| Aspect | P-value | False Discovery Rate (FDR / q-value) |

|---|---|---|

| Definition | Probability of observing data as or more extreme than the current data, assuming the null hypothesis (no peak) is true. | Expected proportion of false positives among all discoveries called significant. |

| Controls For | Type I error (false positive) for a single test. | Proportion of errors among rejected null hypotheses (your peak list). |

| Interpretation | Lower p-value indicates stronger evidence against the null for that specific locus. Does NOT provide an experiment-wide error rate. | A q-value of 0.05 means ~5% of your called peaks are expected to be false positives. |

| Dependence on Tests | Independent of the total number of tests performed. | Explicitly accounts for and adjusts based on the total number of genomic regions tested. |

| Typical Cutoff | Often very stringent (e.g., 1e-5) due to lack of multiple testing correction. | 0.01 (stringent) to 0.05 (lenient) is common and biologically interpretable. |

Table 2: Impact of Statistical Thresholds on Simulated ChIP-seq Data

| Analysis Method | P-value Threshold | FDR (q-value) Threshold | Peaks Called | Estimated False Peaks | True Positives Identified |

|---|---|---|---|---|---|

| Raw P-value | 0.05 | N/A | 12,500 | ~2,500 (20%) | 9,850 |

| BH-FDR Corrected | N/A | 0.05 | 8,200 | ~410 (5%) | 7,790 |

| BH-FDR Corrected | N/A | 0.01 | 6,100 | ~61 (1%) | 6,039 |

Note: Simulation based on testing 50,000 genomic regions with 8,000 true binding sites. Illustrates how raw p-value leads to high false discovery count, while FDR provides a controlled error rate.

Detailed Experimental Protocol: FDR-Controlled Peak Calling with MACS2 and Downstream Analysis

Protocol Title: ChIP-seq Peak Calling with Benjamini-Hochberg FDR Control and IDR Analysis for High-Confidence Peak Selection.

Objective: To generate a high-confidence set of transcription factor binding sites from ChIP-seq data while controlling the overall false discovery rate.

Materials: See "The Scientist's Toolkit" below.

Procedure:

Quality Control & Alignment:

- Assess raw read quality using FastQC.

- Align reads to the reference genome (e.g., hg38) using Bowtie2 or BWA. Retain only uniquely mapped, non-duplicate reads using samtools and Picard.

Peak Calling with MACS2:

- Call peaks on each biological replicate and on a pooled dataset, using the control (IgG) sample:

`macs2 callpeak -t ChIP_rep1.bam -c Control_IgG.bam -f BAM -g hs -n Rep1 --outdir ./peaks_rep1 -B --qvalue 0.05`

- The `--qvalue 0.05` parameter instructs MACS2 to use the Benjamini-Hochberg procedure to report peaks with an FDR < 5%.

Irreproducible Discovery Rate (IDR) Analysis:

- Use the IDR pipeline to assess consistency between replicates and filter peaks to a global FDR (e.g., 1%).

- Sort peak files (`_peaks.narrowPeak`) by p-value:

`sort -k8,8nr Rep1_peaks.narrowPeak > Rep1_sorted.narrowPeak`

- Run IDR on two replicates:

`idr --samples Rep1_sorted.narrowPeak Rep2_sorted.narrowPeak --input-file-type narrowPeak --rank p.value --output-file idr_output.tsv --plot`

- Extract peaks passing the recommended IDR cutoff (e.g., IDR < 0.05):

`awk '{if($5 >= 540) print $0}' idr_output.tsv | sort -k1,1 -k2,2n > HighConfidencePeaks.bed`
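
The `$5 >= 540` filter is easier to read once the column's scaling is spelled out. The sketch below assumes the scaling described in the idr documentation for the fifth output column, min(int(-125 × log2(IDR)), 1000); the function name is hypothetical.

```python
import math

def scaled_idr_score(idr):
    """Convert an IDR value into the 0-1000 score stored in column 5 (assumed scaling)."""
    if idr <= 0:
        return 1000  # an IDR of 0 maps to the maximum score
    return min(int(-125 * math.log2(idr)), 1000)

for cutoff in (0.05, 0.01):
    print(f"IDR {cutoff} -> minimum column-5 score {scaled_idr_score(cutoff)}")
```

Under that assumption, tightening the cutoff to IDR < 0.01 means raising the `awk` threshold accordingly rather than lowering it, because smaller IDR values map to larger scores.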

Validation & Annotation:

- Annotate high-confidence peaks relative to genes using HOMER or ChIPseeker.

- Perform motif analysis on the top 1000 peaks using HOMER `findMotifsGenome.pl`.

- Validate selected peaks using independent methods (e.g., qPCR on precipitated DNA).

Visualizations

Title: Benjamini-Hochberg FDR Correction Workflow

Title: P-value vs FDR Threshold Decision Tree

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function / Relevance |

|---|---|

| MACS2 (Software) | Widely-used peak calling algorithm that models shift size of ChIP-seq tags to identify enriched binding regions and outputs both p-values and q-values. |

| Benjamini-Hochberg Procedure | Statistical algorithm implemented in MACS2 and other tools to adjust p-values and control the False Discovery Rate. |

| IDR Pipeline (Software) | Toolkit for assessing reproducibility between replicates and deriving a consistent set of peaks with a controlled global FDR, often more stringent than per-replicate FDR. |

| Control/IgG Antibody | Non-specific antibody used in the control immunoprecipitation to identify background noise and systematic biases for accurate statistical modeling. |

| samtools & Picard Tools | Essential for processing aligned BAM files: sorting, indexing, removing PCR duplicates (critical for accurate peak significance). |

| HOMER Suite | Toolkit for motif discovery and functional annotation of peak lists, enabling biological interpretation of FDR-filtered results. |

| Bowtie2/BWA | Read alignment algorithms to map sequenced reads to the reference genome, forming the basis for all downstream signal and statistical analysis. |

Technical Support & Troubleshooting Center

FAQ: Common Issues and Solutions

Q1: How can I determine if PCR duplicates are a major source of noise in my specific ChIP-seq dataset, and what is the acceptable threshold?

A: PCR duplicates manifest as multiple reads with identical start and end coordinates. They inflate coverage artificially and can lead to false peak calls. To assess, calculate the duplicate rate:

Duplicate Rate = (Number of duplicate reads / Total mapped reads) * 100%.
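
As a small worked example of this formula, with hypothetical read counts (in practice the counts come from the duplicate-marking tool's metrics output):

```python
def duplicate_rate(n_duplicate_reads, n_mapped_reads):
    """Percentage of mapped reads flagged as duplicates."""
    if n_mapped_reads <= 0:
        raise ValueError("total mapped reads must be positive")
    return 100.0 * n_duplicate_reads / n_mapped_reads

# Hypothetical library: 20 million mapped reads, 3.6 million flagged as duplicates
rate = duplicate_rate(3_600_000, 20_000_000)
print(f"Duplicate rate: {rate:.1f}%")
```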

Quantitative benchmarks from current literature are summarized below.

| Sample Type | Typical Acceptable Duplicate Rate | High-Risk Threshold | Primary Diagnostic Tool |

|---|---|---|---|

| Standard Histone Mark (e.g., H3K4me3) | < 20% | > 30% | Picard MarkDuplicates, SAMtools rmdup |

| Transcription Factor (Low complexity) | < 30% | > 50% | Preseq (to estimate library complexity) |

| Input/Control Sample | < 25% | > 40% | Duplication rate vs. depth plot |

Protocol for Assessment with Picard:

- Sort your BAM file by coordinate: `samtools sort -o sorted.bam input.bam`

- Run Picard MarkDuplicates on `sorted.bam`, writing the metrics to `marked_dup_metrics.txt`.

- Examine the `marked_dup_metrics.txt` file for `PERCENT_DUPLICATION`.

Q2: My peak caller identifies many broad, low-signal regions. How do I differentiate true signal from background DNA noise?

A: Background noise arises from non-specific antibody binding or open chromatin. Differentiation requires a robust control (Input DNA) and statistical modeling.

Protocol for Systematic Background Assessment:

- Score peaks with SPP: Use the `spp` R package, which is used in the ENCODE pipeline. It calculates a reliability score for each peak based on the spatial structure of the tag density relative to the input control.

- Apply Irreproducible Discovery Rate (IDR) Analysis: For replicates, IDR separates consistent peaks from background noise.

- Call peaks on each replicate and the pooled dataset.

- Run IDR analysis (e.g., using the `idr` package).

- Peaks passing a chosen IDR threshold (e.g., 0.05) are high-confidence.

Q3: What are the common mapping artifacts in ChIP-seq, and how can I mitigate them during analysis?

A: Mapping artifacts include multi-mapping reads, low-quality alignments, and biases from reference genome errors.

Troubleshooting Guide:

- Issue: Peaks in blacklisted regions (e.g., telomeres, centromeres).

Solution: Filter against a genome blacklist (e.g., ENCODE DAC Blacklisted Regions). Use `bedtools intersect -v`.

- Issue: Strand bias or anomalous read pileups.

Solution: Remove reads with low mapping quality (MAPQ). Filter BAM for MAPQ ≥ 10: `samtools view -b -q 10 input.bam > highQ.bam`.

- Issue: Artifactual peaks from PCR amplification of structural variants.

Solution: Use paired-end sequencing and proper aligners (e.g., BWA-MEM, Bowtie2) that handle soft-clipping. Visually inspect reads in IGV at suspect loci.
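
For readers who want to see what the blacklist step does, here is a minimal pure-Python sketch of the same logic as `bedtools intersect -v`: keep only the peaks that overlap no blacklisted interval. The coordinates are made up, and intervals are treated as half-open, as in BED files.

```python
def intervals_overlap(a_start, a_end, b_start, b_end):
    """True if two half-open intervals [start, end) share at least one base."""
    return a_start < b_end and b_start < a_end

def filter_blacklisted(peaks, blacklist):
    """Return the peaks that overlap no blacklist region on the same chromosome."""
    by_chrom = {}
    for chrom, start, end in blacklist:
        by_chrom.setdefault(chrom, []).append((start, end))
    return [
        (chrom, start, end)
        for chrom, start, end in peaks
        if not any(
            intervals_overlap(start, end, b_start, b_end)
            for b_start, b_end in by_chrom.get(chrom, [])
        )
    ]

# Hypothetical peaks and blacklist regions
peaks = [("chr1", 1000, 1200), ("chr1", 5000, 5300), ("chr2", 100, 400)]
blacklist = [("chr1", 5200, 6000), ("chr2", 400, 900)]

clean_peaks = filter_blacklisted(peaks, blacklist)
print(clean_peaks)
```

The linear scan is fine for illustration; for genome-scale peak sets a dedicated interval tool is the practical choice.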

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Mitigating Noise |

|---|---|

| High-Specificity Antibody (ChIP-grade) | Minimizes non-specific binding, the primary source of background DNA noise. |

| Sonication Shearing System (e.g., Covaris) | Produces consistent, random fragment sizes, reducing mapping bias and PCR duplicate bias. |

| PCR Duplication-Suppressing Kits (e.g., NEBNext Ultra II) | Incorporates unique molecular identifiers (UMIs) to tag original fragments, enabling true duplicate removal. |

| Size Selection Beads (SPRI beads) | Cleans up library fragments, removes adapter dimers and very short fragments that map poorly. |

| High-Fidelity PCR Polymerase | Reduces PCR errors that can create mapping artifacts and alter sequences. |

| Quality Control: Bioanalyzer/TapeStation | Assesses library fragment size distribution before sequencing; skewed distributions indicate protocol issues. |

| Spike-in Control DNA (e.g., D. melanogaster) | Provides an external normalization control to account for technical variability, helping distinguish biological signal from noise. |

Diagram Title: ChIP-Seq Noise and Control Pathways

Diagram Title: ChIP-Seq Analysis Workflow for FDR Control

Troubleshooting Guides & FAQs

Q1: During ChIP-seq analysis, my pipeline identified hundreds of significant peaks, but orthogonal validation (e.g., qPCR) failed for most. What went wrong?

A: This is a classic symptom of inadequate False Discovery Rate (FDR) control. Common causes include:

- Overly lenient FDR cutoff: Using a Benjamini-Hochberg threshold (e.g., q-value < 0.05) that is too lenient for your specific experimental noise level.

- Poor input or IgG control: The control sample lacks sufficient depth or quality, failing to model background noise accurately, leading to inflated significance.

- Overly narrow analysis: Relying solely on peak-caller p-values without considering replicate concordance or integrating other genomic data (e.g., ATAC-seq, motif analysis) for prioritization.

Protocol: Orthogonal Validation of ChIP-seq Peaks

- Peak Prioritization: From your peak list, randomly select 20 peaks stratified by q-value (e.g., 5 with q<0.01, 10 with q<0.05, 5 with 0.05

- Primer Design: Design qPCR primers flanking the peak summit (amplicon size: 80-150 bp). Include primers for a known positive binding region and a negative genomic region.

- qPCR: Use the same immunoprecipitated DNA and input DNA from your ChIP-seq experiment. Perform SYBR Green qPCR in triplicate.

- Analysis: Calculate % input for each region. A true positive should show clear enrichment, i.e., a substantially higher % input at the target region than at the negative control region.
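
The analysis step can be sketched as follows, assuming the common percent-input calculation in which the input Ct is first adjusted for the fraction of chromatin saved as input. The Ct values and the 1% input fraction are hypothetical, and the function name is illustrative.

```python
import math

def percent_input(ct_ip, ct_input, input_fraction):
    """Percent of input recovered in the IP, from raw Ct values."""
    # Adjust the input Ct to what it would be for 100% of the chromatin.
    adjusted_input_ct = ct_input - math.log2(1.0 / input_fraction)
    return 100.0 * 2.0 ** (adjusted_input_ct - ct_ip)

# Hypothetical Ct values with 1% of the chromatin saved as input
target = percent_input(ct_ip=24.0, ct_input=25.0, input_fraction=0.01)
negative = percent_input(ct_ip=29.0, ct_input=25.0, input_fraction=0.01)

fold_over_negative = target / negative
print(f"target {target:.3f}% input, negative {negative:.4f}% input, "
      f"fold over negative {fold_over_negative:.0f}")
```

Triplicate Ct values would normally be averaged before this calculation, and what counts as sufficient enrichment is an experiment-specific judgment.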

Q2: How can uncontrolled FDR in preclinical target identification directly impact drug development pipelines?

A: It leads to costly resource misallocation and late-stage failures:

- Preclinical: Years and millions of dollars are spent developing compounds, antibodies, or cell therapies against "phantom" targets that are not genuinely involved in the disease pathology.

- Clinical: Phase I/II trials may proceed based on false mechanistic assumptions, resulting in lack of efficacy (high placebo response, no target engagement biomarker signal) and trial termination. This derails portfolios and erodes investor confidence.

Q3: What are the best practices for stringent FDR control in a ChIP-seq workflow for critical drug target identification?

A: Implement a multi-layered, conservative approach:

- Experimental Design: Use biological replicates (minimum n=2, ideally n=3). Perform size-matched input or IgG control experiments to the same sequencing depth as your IP samples.

- Bioinformatic Analysis:

- Use reproducibility-based peak selection (e.g., IDR across replicates) to establish high-confidence peak sets.

- Apply a stringent FDR cutoff (e.g., q-value < 0.01 or 0.001).

- Integrate with functional genomics data (e.g., CRISPR screens, RNA-seq from target perturbation) to filter peaks that have a functional correlate.

- Triangulation: Never rely on ChIP-seq alone. Corroborate findings with techniques like CUT&RUN/Tag, luciferase reporter assays, and genome editing (CRISPRi/CRISPRa) to confirm regulatory function.

Protocol: Integrated IDR Analysis for Replicate ChIP-seq

- Mapping: Align reads from each replicate IP and control sample independently to the reference genome.

- Peak Calling: Call peaks for each replicate separately against its matched control using MACS2.

- IDR Analysis: Use the IDR (Irreproducible Discovery Rate) pipeline to compare the two replicate peak lists. This identifies peaks that are reproducible across replicates, filtering out irreproducible noise.

- Thresholding: Retain peaks passing an IDR cutoff of < 0.05 (or more stringent 0.01) for downstream analysis.

Table 1: Impact of FDR Threshold on Peak Calls & Validation Rate

| FDR (q-value) Threshold | Number of Peaks Called | Estimated False Positives | Empirical Validation Rate (qPCR) | Risk Level for Drug Discovery |

|---|---|---|---|---|

| 0.10 | 15,250 | ~1,525 | 35-50% | Critical - High risk of pursuing false targets. |

| 0.05 | 8,740 | ~437 | 60-75% | High - Unacceptable for lead target selection. |

| 0.01 | 3,120 | ~31 | 85-95% | Moderate - Suitable for preliminary identification. |

| 0.001 | 950 | ~1 | >95% | Low - Recommended for critical target validation. |

Table 2: Comparative Analysis of FDR Control Methods in ChIP-seq

| Method | Principle | Key Advantage | Key Limitation | Best Use Case |

|---|---|---|---|---|

| Benjamini-Hochberg | Controls the expected proportion of false positives among discoveries. | Standard, widely implemented in peak callers. | Assumes independent tests; can be anti-conservative with correlated genomic signals. | Initial screening with good replicate structure. |

| IDR (Irreproducible Discovery Rate) | Ranks peaks from replicates and models consistency; does not use p-values directly. | Excellent for assessing reproducibility between replicates. | Requires at least two true biological replicates. | Gold standard for establishing high-confidence peak sets from replicates. |

| Blacklist Filtering | Removes peaks in known problematic genomic regions (e.g., telomeres). | Removes a source of systematic technical artifacts. | Does not control for statistical false positives. | Mandatory pre-processing step in all analyses. |

| Functional Convergence | Filters peaks based on overlap with functional genomic signals (e.g., CRISPR hits). | Increases the biological relevance of the retained peaks. | Dependent on availability and quality of orthogonal data. | Final prioritization stage for target identification. |

Visualizations

Diagram Title: FDR Impact on Drug Development Pipeline Success

Diagram Title: Rigorous ChIP-seq FDR Control Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in FDR-Controlled ChIP Experiments |

|---|---|

| High-Quality, Validated Antibody | The single most critical reagent. Specificity and immunoprecipitation efficiency directly affect signal-to-noise ratio. Validate with KO cell lines. |

| Chromatin Shearing Reagents | Consistent, appropriate fragment size (200-500 bp) is vital for resolution and peak calling. Use validated enzymatic or sonication kits. |

| Magnetic Protein A/G Beads | For consistent and efficient pulldown. Reduce non-specific background vs. agarose beads. |

| Size-Matched Input DNA | The essential control for background modeling. Must be prepared from the same cell lysate as IP samples and sequenced to sufficient depth. |

| Library Prep Kit for Low Input | Allows robust library construction from low-yield IPs, enabling deeper sequencing of true signal. |

| Spike-in Control (e.g., S. cerevisiae chromatin) | Normalizes for technical variation (cell count, IP efficiency) between samples, improving cross-sample comparison accuracy. |

| IDR Software Package | The computational tool for rigorous assessment of reproducibility between biological replicates, providing a robust irreproducibility rate. |

| Genomic Blacklist (e.g., ENCODE) | A curated list of genomic regions with anomalous, unstructured signals. Filtering these out reduces false positives. |

Step-by-Step FDR Control: From Raw Reads to High-Confidence Peaks

Technical Support Center

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: What is the primary difference in how MACS3, HOMER, and SPP estimate and control the False Discovery Rate (FDR)?

A: The core methodologies differ significantly, which affects their sensitivity and specificity.

- MACS3: Models the tag distribution with a dynamic (local) Poisson distribution and reports q-values, i.e., Benjamini-Hochberg adjusted p-values. The older MACS 1.x releases instead estimated an empirical FDR by swapping the control and treatment samples.

- HOMER: Employs a fixed Poisson threshold against the local background region. Its FDR control is less explicit in the primary peak calling step (`findPeaks`) but is rigorously applied during differential binding analysis (`getDifferentialPeaks`) using the Benjamini-Hochberg procedure.

- SPP (PhantomPeakTools): Typically paired with the Irreproducible Discovery Rate (IDR) framework for robust FDR estimation. IDR assesses consistency between replicates to control for false positives, which is a more stringent, replicate-dependent method.

Q2: I am getting zero or very few peaks called by MACS3 when using a broad mark dataset (e.g., H3K27me3). What should I do?

A: This is common. The default MACS3 parameters are optimized for sharp peaks (e.g., transcription factors).

- Troubleshooting Step: Use the `--broad` flag for broad histone marks. Adjust the `--broad-cutoff` (default is 0.1). Consider using `--nolambda` so that local background is not considered for broad region detection.

- Protocol:

`macs3 callpeak -t ChIP.bam -c Control.bam -f BAM -g hs --broad --broad-cutoff 0.05 -n output_prefix`

Q3: HOMER's findPeaks reports "Peak file is empty". What are the likely causes?

A:

- Insufficient Sequencing Depth: The tag density may be too low. Check your `tagDirectory` log file for total tags. Consider increasing sequencing depth.

- Incorrect Style Parameter: Using `-style factor` for a broad mark. For histone marks, use `-style histone`.

- Region Size Too Large: For factor data, if `-size` is set too large (e.g., 1000), the local tag density may not meet the Poisson threshold. Try reducing `-size` to 200 or 150.

- Missing Control Sample: While not always required, a control sample greatly improves accuracy. Provide one using `-i control_tagDirectory`.

Q4: SPP/IDR analysis fails due to not having enough peaks passing the specified IDR threshold (e.g., 0.05). How can I proceed with my thesis analysis?

A: This indicates low concordance between your replicates.

- Step 1: Check the quality of your replicates first (cross-correlation plots, NSC, RSC scores from SPP). Poor-quality replicates will fail IDR.

- Step 2: Relax the IDR threshold (e.g., to 0.1) to obtain a reproducible peak set for downstream analysis, but explicitly justify this in your thesis methodology.

- Step 3: As an alternative, generate a pooled peaks list from both replicates using MACS3 and use it for downstream analysis, while clearly stating the limitation of not using an IDR-based consensus.

Q5: For drug development applications requiring high confidence, which tool's FDR metric is most recommended?

A: The IDR framework, applied to replicate peak calls (e.g., from SPP or MACS), is considered the gold standard for establishing high-confidence peak sets when biological replicates are available. It directly addresses the reproducibility of discoveries, which is critical for downstream target validation in drug development. MACS3's q-value is suitable for single-replicate experiments or initial screening.

Quantitative Comparison Table

Table 1: Core FDR Estimation Method Comparison

| Feature | MACS3 (v3.0.0) | HOMER (v4.11) | SPP/IDR (v1.2) |

|---|---|---|---|

| Primary FDR Method | q-values (BH) | Poisson model, BH in diff. analysis | Irreproducible Discovery Rate (IDR) |

| Replicate Requirement | Optional (can pool) | Optional | Mandatory (≥2 true reps) |

| Key Output Metric | q-value, Fold Enrichment | Peak Score (log10 p-value), FDR (diff.) | IDR Score, Global IDR % |

| Optimal For | Sharp peaks, single reps | De novo motif discovery, both sharp/broad | High-confidence peak sets, rep concordance |

| Speed | Fast | Moderate (depends on genome) | Slow (requires alignment sorting) |

Table 2: Typical Results on a Benchmark H3K4me3 Dataset

| Metric | MACS3 (q<0.01) | HOMER (FDR<0.01) | SPP (IDR<0.05) |

|---|---|---|---|

| Peaks Called | ~45,000 | ~38,000 | ~22,000 |

| Peak Overlap with Consensus (%) | 92% | 89% | 99% |

| Median Peak Width | 500 bp | 450 bp | 350 bp |

| Runtime (min) | 15 | 25 | 45+ |

Experimental Protocols

Protocol 1: Standardized Benchmarking Workflow for FDR Comparison

- Data Acquisition: Download public ChIP-seq datasets (e.g., ENCODE) with two biological replicates and a matched input control for a sharp mark (e.g., CTCF) and a broad mark (e.g., H3K36me3).

- Uniform Preprocessing: Process all datasets through a single pipeline: adapter trimming (Trim Galore!), alignment (BWA/Bowtie2), duplicate marking (Picard Tools), and filtering.

- Peak Calling:

- MACS3: Run with both narrow (`-q 0.05`) and broad (`--broad --broad-cutoff 0.1`) parameters.

- HOMER: Create tag directories, then run `findPeaks` with `-style factor` and `-style histone`.

- SPP: Run `run_spp.R` for cross-correlation, then the `idr` pipeline using peaks from replicates sorted by p-value (from MACS2).

- Analysis: Generate consensus peak sets using BEDTools. Calculate overlap statistics, precision/recall if a gold standard exists, and compare peak characteristics.
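The overlap statistics in the analysis step can be sketched in a few lines. This is a minimal illustration, not a replacement for BEDTools: `fraction_overlapping` is a hypothetical helper, peaks are assumed to be `(chrom, start, end)` tuples with half-open coordinates, and any overlap of at least 1 bp counts as a hit.

```python
from collections import defaultdict

def fraction_overlapping(query, reference):
    """Fraction of query peaks that overlap at least one reference peak by >= 1 bp."""
    by_chrom = defaultdict(list)
    for chrom, start, end in reference:
        by_chrom[chrom].append((start, end))
    hits = 0
    for chrom, start, end in query:
        # Half-open intervals overlap when each one starts before the other ends.
        if any(start < r_end and r_start < end for r_start, r_end in by_chrom[chrom]):
            hits += 1
    return hits / len(query) if query else 0.0

# Toy data (illustrative only, not from a real experiment).
called = [("chr1", 100, 200), ("chr1", 500, 600), ("chr2", 100, 200)]
gold = [("chr1", 150, 250), ("chr2", 200, 300)]

precision = fraction_overlapping(called, gold)  # called peaks supported by the gold standard
recall = fraction_overlapping(gold, called)     # gold-standard peaks recovered by the caller
print(precision, recall)
```

Swapping the argument order gives a peak-level approximation of precision and recall, which is usually enough for a first comparison between callers.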

Protocol 2: Executing the IDR Analysis Pipeline (SPP)

- Input: Sorted BAM files for two replicates (Rep1, Rep2) and a pooled BAM.

- Call Initial Peaks: Call peaks on Rep1, Rep2, and the pooled sample using MACS2 with relaxed thresholds (`-p 0.1`). Sort each peak file by p-value.

- Run IDR: Use the `idr` command: `idr --samples rep1_peaks.narrowPeak rep2_peaks.narrowPeak --peak-list pooled_peaks.narrowPeak --output-file idr_output --rank p.value --soft-idr-threshold 0.05 --plot`

- Generate Final Set: Extract peaks passing the chosen IDR threshold (e.g., 0.05) from the pooled output file. This is your high-confidence set.

Visualizations

Diagram 1: FDR Control Methodologies in Peak Callers

Diagram 2: IDR Analysis Workflow for High-Confidence Peaks

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for ChIP-seq FDR Benchmarking Studies

| Item | Function in FDR Research |

|---|---|

| High-Quality Reference Genome (e.g., GRCh38, mm10) | Essential for consistent alignment across tools, forming the basis for all peak coordinate outputs. |

| Validated Public Dataset (e.g., from ENCODE/CONSORTIA) | Provides benchmark truth sets with biological replicates for method comparison and validation. |

| BEDTools Suite | Critical for intersecting, merging, and comparing peak files from different callers to generate consensus sets and calculate overlap metrics. |

| R/Bioconductor (with packages: ChIPQC, ChIPseeker, idr) | Used for advanced statistical analysis, quality control metrics (NSC, RSC), and executing the IDR pipeline. |

| Compute Cluster/High-Performance Computing (HPC) Access | Necessary for processing multiple datasets and running computationally intensive tools (like HOMER on large genomes) in parallel. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My ChIP-seq analysis pipeline reports thousands of peaks at q < 0.05, but I suspect many are false positives. How can I validate this?

A: A high number of peaks at a standard FDR threshold can indicate low-quality data or inappropriate parameter settings.

- Troubleshooting Steps:

- Check Input/Control Library: Compare the read depth and complexity of your ChIP sample versus your control (Input or IgG). A weak control can inflate false positives.

- Replicate Concordance: Use an irreproducible discovery rate (IDR) analysis on your biological replicates. Peaks that are not consistent across replicates are less reliable.

- Check Peak Shape: Visually inspect top-called peaks in a genome browser. True peaks often have a stereotypical shape for the target (e.g., sharp for transcription factors, broad for histones).

- Protocol: IDR Analysis for Replicate Concordance

- Call peaks on each replicate independently and on a pooled sample.

- Rank peaks from the pooled analysis by their statistical significance (e.g., -log10(p-value)).

- For each peak in the pooled set, find its most significant overlapping peak in each replicate file.

- Calculate the IDR using a pre-validated software package (e.g., `idr` from ENCODE).

- Select peaks passing a chosen IDR threshold (e.g., 0.01 or 0.05) as your high-confidence set.

Q2: How do I choose between q < 0.01 and q < 0.05 for my differential binding analysis? My list of significant hits changes drastically.

A: The choice is a balance between sensitivity (finding true effects) and precision (avoiding false leads).

- Guidance:

- Use q < 0.01 for stringent control when follow-up validation is extremely costly or resource-intensive (e.g., generating a transgenic model). This yields a high-confidence, shorter list.

- Use q < 0.05 for exploratory discovery when you can tolerate more follow-up validation experiments. This increases sensitivity but requires more downstream filtering.

- Actionable Protocol:

- Perform your differential analysis (e.g., using `DESeq2` for counts).

- Extract results at both thresholds.

- For the q < 0.05 list, apply additional filters: a minimum fold-change (e.g., >2) and a minimum normalized signal (e.g., baseMean > 10). This refines the list toward more biologically relevant changes.

- Compare the final lists using pathway enrichment analysis. A robust biological signal should show related pathways enriched in both lists.
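The additional filters on the q < 0.05 list can be sketched as follows. This is an illustration under assumptions: the results are assumed to have been exported as dictionaries keyed by the DESeq2 column names `baseMean`, `log2FoldChange`, and `padj`, and `filter_hits` is a hypothetical helper. A fold-change above 2 in either direction is expressed as an absolute log2 fold-change above 1.

```python
def filter_hits(rows, q_cutoff=0.05, min_abs_log2fc=1.0, min_base_mean=10.0):
    """Keep rows passing the q-value, fold-change, and signal filters."""
    kept = []
    for row in rows:
        if row["padj"] is None:  # DESeq2 reports NA for regions it filtered out
            continue
        if (row["padj"] < q_cutoff
                and abs(row["log2FoldChange"]) > min_abs_log2fc
                and row["baseMean"] > min_base_mean):
            kept.append(row)
    return kept

# Toy results (illustrative values only).
results = [
    {"region": "peak_1", "baseMean": 250.0, "log2FoldChange": 1.8, "padj": 0.004},
    {"region": "peak_2", "baseMean": 6.0, "log2FoldChange": 2.5, "padj": 0.020},
    {"region": "peak_3", "baseMean": 90.0, "log2FoldChange": -0.4, "padj": 0.030},
    {"region": "peak_4", "baseMean": 40.0, "log2FoldChange": -1.3, "padj": None},
]

stringent = filter_hits(results, q_cutoff=0.01)
exploratory = filter_hits(results, q_cutoff=0.05)
print([r["region"] for r in exploratory])
```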

Q3: What does a q-value of 0.03 for a specific peak actually mean in the context of my experiment?

A: The q-value is an FDR-adjusted p-value. A q-value of 0.03 for a peak means that among all peaks with a significance at least as extreme as this one, you expect 3% to be false discoveries. It is a statement about the collection of tests, not the probability this individual peak is false.
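To make the definition concrete, the sketch below computes Benjamini-Hochberg q-values for a handful of made-up p-values. It assumes plain BH adjustment; peak callers derive their q-values from their own p-value distributions, so treat this as an illustration of the idea rather than a reproduction of any tool's output. `bh_qvalues` is a hypothetical helper name.

```python
def bh_qvalues(pvalues):
    """Return Benjamini-Hochberg q-values in the same order as the input p-values."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    qvalues = [0.0] * m
    running_min = 1.0
    # Walk from the least to the most significant test so that
    # q-values never increase as p-values decrease.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        qvalues[i] = running_min
    return qvalues

pvals = [0.001, 0.008, 0.039, 0.041, 0.60]  # illustrative values
qvals = bh_qvalues(pvals)
for p, q in zip(pvals, qvals):
    print(f"p = {p:<6} q = {q:.5f}")
```

Note that the third and fourth tests share the same q-value: the q-value describes the set of tests at least as significant as a given one, which is why it cannot be read as a per-peak probability.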

Q4: My negative control sample is yielding peaks at q < 0.05. What is wrong?

A: This indicates a failure of the FDR control assumption, often due to systematic bias.

- Common Causes & Fixes:

- Poor Control Quality: The control library may be under-sequenced or have high PCR duplicates. Fix: Re-make the library or sequence deeper.

- Genomic Contamination: Your "control" sample may have biological signal (e.g., Input DNA from a mixed cell population). Fix: Use a proper IgG control for ChIP.

- Alignment Artifacts: Over-representation of reads in specific genomic regions (e.g., repeats). Fix: Use a more stringent alignment filter or a blacklist region file.

Key Data & Thresholds in FDR Control for ChIP-seq

Table 1: Common FDR Thresholds and Their Interpretations in ChIP-seq Analysis

| q-Value Threshold | Common Interpretation in ChIP-seq | Typical Use Case |

|---|---|---|

| q < 0.001 | Very High Stringency | Defining ultra-high confidence "gold standard" peaks for benchmark studies or critical drug targets. |

| q < 0.01 | High Stringency | Standard for publication-quality peak calling in focused studies; balances confidence and yield. |

| q < 0.05 | Moderate Stringency | Exploratory analysis, initial screening, or when combined with additional fold-change filters. |

| q < 0.10 | Permissive Stringency | Rarely used alone; may be applied in studies with low signal-to-noise to avoid excessive false negatives. |

Table 2: Impact of Replicate Number on Effective FDR

| Number of Biological Replicates | Recommended Analysis Method | Effective Rigor | Notes |

|---|---|---|---|

| 1 | Peak caller (MACS2, etc.) with control. | Low | FDR estimates are unreliable. Strongly discouraged for publication. |

| 2 | IDR analysis or consensus peaks. | Medium | Minimum standard. Allows for basic reproducibility assessment. |

| ≥3 | Differential analysis with DESeq2 or edgeR. | High | Enables robust statistical modeling and variance estimation, improving true FDR control. |

Workflow Diagram: FDR Control in ChIP-seq Analysis

Title: ChIP-seq FDR Control and Replicate Analysis Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Robust ChIP-seq & FDR Analysis

| Item | Function | Notes for FDR Control |

|---|---|---|

| High-Quality Antibody | Immunoprecipitates target protein. | High specificity reduces background noise, improving signal-to-noise ratio and true FDR. |

| Appropriate Control (Input DNA, IgG, pre-immune serum) | Distinguishes specific enrichment from background. | Critical. A matched, well-sequenced control is non-negotiable for accurate FDR estimation. |

| Biological Replicates (≥2) | Account for technical and biological variability. | Enables reproducibility assessment (IDR) and stronger statistical models for differential analysis. |

| Spike-in Control (e.g., S. cerevisiae chromatin) | Normalizes for technical variation between samples. | Essential for accurate differential analysis when global binding changes are expected. |

| Cell Line Authentication | Ensures experimental consistency. | Prevents false results from misidentified or contaminated cells. |

| IDR Analysis Software (e.g., `idr` package) | Assesses reproducibility between replicates. | Provides a more reliable high-confidence peak set than a simple q-value cutoff alone. |

| Statistical Software (e.g., R/Bioconductor, DESeq2) | Performs differential binding analysis. | Models count data appropriately, controlling FDR across multiple comparisons between conditions. |

Implementing the Irreproducible Discovery Rate (IDR) Framework for Replicate Analysis

Technical Support Center: Troubleshooting & FAQs

Frequently Asked Questions

Q1: What is the fundamental principle of the IDR framework, and why is it preferred over a simple overlap analysis for replicate ChIP-seq peaks? A: The IDR framework models the ranks of signal values (like -log10(p-value)) from two replicates to distinguish reproducible signals from noise. It assumes that reproducible peaks will have consistently high ranks in both replicates, while irreproducible peaks will have inconsistent ranks. It is superior to simple overlap because it is rank-based, accounts for the strength of evidence, provides a principled FDR control, and is less sensitive to arbitrary score thresholds.

Q2: During IDR analysis, I receive a warning: "psi is small." What does this mean, and how should I proceed? A: A small psi (ψ) parameter indicates a low estimated proportion of signals coming from the reproducible component. This often happens when replicates are of low quality or have poor reproducibility. You should first assess the overall correlation between your replicate scores (e.g., using a scatterplot). If correlation is low (< 0.5), consider revisiting your experimental protocol or data preprocessing steps before trusting the IDR output.

Q3: The number of peaks passing IDR (e.g., at 1% or 5%) is unexpectedly low. What are the common causes? A: Common causes include:

- Low Replicate Concordance: High technical or biological variability.

- Incorrect Preprocessing: Different normalization or peak calling parameters between replicates.

- Weak Signal-to-Noise: The ChIP experiment itself may have high background.

- Overly Stringent Initial Peak Call: If the initial union peak list is too restricted, truly reproducible weak peaks may be excluded.

Q4: How do I choose between using the "idr" package and the "IDR" package in R? What are the key differences? A: The choice depends on your workflow and data type.

| Feature | `idr` (Original, Command-line) | `IDR` (R Package) |

|---|---|---|

| Primary Use | Analysis of narrow peaks (e.g., transcription factors) from MACS2. | More general, can handle broader peaks (e.g., histone marks) and user-defined scores. |

| Input Format | Requires a specific 10-column BED-like format. | Works with RangedSummarizedExperiment objects or matrices. |

| Integration | Fits into UNIX command-line pipelines. | Integrates into R/Bioconductor analysis workflows. |

| Model Fitting | Expectation-Maximization (EM) algorithm. | Uses an optimized numerical maximization procedure. |

Q5: Can IDR be applied to more than two replicates? If so, how? A: The standard IDR model is defined for two replicates. For >2 replicates, a common strategy is to perform pairwise IDR analyses and take the consensus, or use the stable set approach: rank peaks by their minimum IDR score across all pairwise comparisons.

Troubleshooting Guides

Issue: Installation Failures for the idr Package.

- Symptoms: Errors during `pip install idr` or `setup.py install`.

- Diagnosis: Often due to missing C library dependencies (GSL - GNU Scientific Library).

- Solution:

- On Ubuntu/Debian: `sudo apt-get install libgsl-dev`

- On macOS (using Homebrew): `brew install gsl`

- On Windows: Use the Windows Subsystem for Linux (WSL2) or a pre-configured virtual machine.

- Retry the installation: `pip install numpy idr` (installing NumPy first can help).

Issue: Poor Reproducibility Between Biological Replicates Leading to No IDR Peaks.

- Symptoms: High IDR values for all peaks, warning messages about model fit.

- Diagnostic Steps & Protocol:

- Visual Inspection Protocol:

- Generate a scatterplot of peak scores (e.g., p-values) from both replicates.

- Command (`idr`): Use the `--plot` flag.

- Command (R): `plot(idrOutput)` or `ggplot(data, aes(rep1_score, rep2_score)) + geom_point(alpha=0.3)`

- Calculate Rank Correlation Protocol:

- Compute Spearman's rank correlation on the -log10(p-value) or signal value columns.

- R Code: `cor(rep1_scores, rep2_scores, method="spearman")`

- Expected Outcome: A correlation > 0.5 suggests reasonable reproducibility for IDR analysis.

- Action: If correlation is low, investigate wet-lab protocols (antibody specificity, cross-linking efficiency) and bioinformatics steps (read alignment quality, duplicate marking, peak caller consistency).
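For pipelines that stay outside R, the rank-correlation check can be sketched in plain Python. The helpers `average_ranks` and `spearman` are hypothetical names, the scores are assumed to be matched per peak (the same peak at the same index in both lists), and tied scores receive their average rank.

```python
def average_ranks(values):
    """Rank values from 1..n, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks

def spearman(x, y):
    """Spearman's rho: the Pearson correlation of the two rank vectors."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5

# Toy -log10(p-value) scores for five matched peaks (illustrative only).
rep1_scores = [5.0, 12.1, 3.3, 40.2, 8.8]
rep2_scores = [4.1, 10.0, 6.2, 35.0, 7.9]
print(round(spearman(rep1_scores, rep2_scores), 3))
```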

Issue: Inconsistent Results Between IDR Runs on the Same Data.

- Symptoms: Slightly different numbers of peaks passing the IDR threshold on repeated runs.

- Cause: The EM algorithm may converge to different local maxima due to random initialization.

- Solution Protocol:

- Set a random seed for reproducibility.

- For the command-line `idr`: Use the `--random-seed` parameter (e.g., `--random-seed 42`).

- For the R `IDR` package: Use `set.seed()` before calling the `est.IDR()` function.

- Always report the seed value in your methodology for full reproducibility.

Table 1: Typical IDR Output Metrics and Their Interpretation

| Metric | Description | Optimal Range / Target | Indication of Problem |

|---|---|---|---|

| Number of Peaks (IDR < 0.05) | Final set of reproducible peaks. | Depends on factor/genome. Should be biologically plausible. | Drastically lower than expected from literature. |

| Spearman's ρ (Correlation) | Rank correlation of signal values in reproducible component. | High (e.g., > 0.8). | Low correlation (<0.5) suggests poor replicate agreement. |

| π₁ (Proportion of Reproducible Signal) | Estimated proportion of peaks from the reproducible component. | Should be > 0.2 for meaningful analysis. | A very low π₁ (e.g., < 0.1) indicates most data is noise. |

| Local IDR at Threshold | The irreproducible discovery rate at the chosen score rank cutoff. | Matches your FDR tolerance (e.g., 0.01, 0.05). | Cannot achieve desired local IDR without losing all peaks. |

Table 2: Comparison of FDR Control Methods in ChIP-seq Analysis

| Method | Principle | Requires Replicates? | Controls FDR for | Key Limitation |

|---|---|---|---|---|

| Benjamini-Hochberg (BH) | Adjusts p-values from a single replicate test. | No | False positives within a single sample list. | Does not assess reproducibility between experiments. |

| IDR | Models joint rank distributions from two replicates. | Yes (2+) | Irreproducible discoveries across replicates. | Requires high-quality, concordant replicates. |

| BL-IDA | Uses a beta-uniform mixture model on one sample. | No | Local false discovery rate in one sample. | Lacks the direct reproducibility measure of IDR. |

Core Experimental Protocol: IDR Analysis for ChIP-seq Replicates

Protocol: Implementing IDR with MACS2 and the idr Package

Independent Peak Calling:

- Call peaks on each biological replicate independently using MACS2.

macs2 callpeak -t rep1_treat.bam -c rep1_control.bam -n rep1 -f BAM -g hs --outdir rep1_peaks

macs2 callpeak -t rep2_treat.bam -c rep2_control.bam -n rep2 -f BAM -g hs --outdir rep2_peaks

Create a Pooled Pseudoreplicate:

- Merge aligned reads from both replicates and randomly split them into two equal-sized pseudoreplicates.

macs2 callpeak -t pooled_treat.bam -c pooled_control.bam -n pooled -f BAM -g hs --outdir pooled_peaks

Generate the Initial Union Peak List:

- Sort all peaks (from Rep1, Rep2, and Pooled) by their p-value or score, take the top N (e.g., 150,000), and merge them to create a non-redundant set.
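The merge into a non-redundant set can be sketched as a single pass over sorted intervals. This is a simplified stand-in for a tool such as `bedtools merge`: `merge_peaks` is a hypothetical helper, peaks are assumed to be `(chrom, start, end)` tuples, and overlapping or directly adjacent intervals are combined.

```python
def merge_peaks(peaks):
    """Merge overlapping or book-ended peaks on the same chromosome."""
    merged = []
    for chrom, start, end in sorted(peaks):
        if merged and merged[-1][0] == chrom and start <= merged[-1][2]:
            # Extend the previous interval instead of starting a new one.
            merged[-1][2] = max(merged[-1][2], end)
        else:
            merged.append([chrom, start, end])
    return [tuple(p) for p in merged]

# Toy peaks taken from three hypothetical peak files.
all_peaks = [
    ("chr1", 150, 300),  # pooled
    ("chr1", 100, 200),  # rep1
    ("chr2", 100, 200),  # rep2
    ("chr1", 400, 500),  # rep1
]
print(merge_peaks(all_peaks))
```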

Prepare Files for IDR:

- For each replicate and the pooled pseudoreplicate, find the signal value (e.g., -log10(p-value)) for each peak in the union list. Format into a 10+ column file where column 5 is the score.

Run IDR Analysis:

- Compare the true biological replicates.

idr --samples rep1_peaks.narrowPeak rep2_peaks.narrowPeak --peak-list union_peaks.narrowPeak --output-file idr_results.tsv --plot

- Compare the self-consistency (pseudoreplicates).

idr --samples pseudo1_peaks.narrowPeak pseudo2_peaks.narrowPeak --peak-list union_peaks.narrowPeak --output-file idr_selfconsist.tsv

Extract Reproducible Peaks:

- Filter the output file to keep peaks with an IDR value below your threshold (e.g., IDR ≤ 0.05). This is your final, high-confidence peak set.
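The filtering step can be sketched as below. It assumes the standard tab-separated output of `idr` 2.x, in which column 12 holds the global IDR as -log10(IDR); check the header of your own output before relying on this layout. `passes_idr` is a hypothetical helper, and the example rows are made up.

```python
import math

def passes_idr(line, threshold=0.05):
    """True if the peak's global IDR (column 12, stored as -log10) is <= threshold."""
    fields = line.rstrip("\n").split("\t")
    neg_log10_global_idr = float(fields[11])
    return neg_log10_global_idr >= -math.log10(threshold)

# Two made-up output rows; only the first 12 columns matter here.
rows = [
    "chr1\t100\t300\t.\t830\t.\t25.1\t18.2\t15.0\t90\t2.10\t2.00",
    "chr1\t900\t1100\t.\t250\t.\t4.2\t3.1\t1.9\t80\t0.70\t0.60",
]
reproducible = [row for row in rows if passes_idr(row, threshold=0.05)]
print(len(reproducible))
```

Working from column 12 rather than the scaled score in column 5 avoids rounding at the threshold boundary.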

Visualizations

Title: IDR Analysis Workflow for ChIP-seq Replicates

Title: Logical Flow of the IDR Framework's Statistical Model

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for ChIP-seq Replicate Studies Using IDR

| Item / Reagent | Function / Purpose in IDR Context | Critical Consideration |

|---|---|---|

| High-Quality Antibody (ChIP-grade) | Target-specific immunoprecipitation. Primary source of variance. | Lot consistency between replicates is paramount for IDR success. |

| Dual/Paired Biological Replicates | Provide the fundamental data for reproducibility assessment. | Must be truly independent (different cell passages, lysates) to avoid pseudoreplication. |

| MACS2 Software | Standard peak caller for narrow peaks; generates input scores for IDR. | Use identical parameters (e.g., --call-summits, -p 1e-5) for all replicates. |

| IDR Software Package | Implements the core statistical model. | Choose idr (CLI) for TF ChIP or IDR (R) for flexibility with histone marks. |

| Control/Input DNA | For background signal estimation during peak calling. | Required for accurate p-value calculation, which feeds into the IDR model. |

| GNU Scientific Library (GSL) | Numerical library required to install/run the `idr` package. | Must be installed at the system level; a common installation hurdle. |

| Cross-linking Reagent (e.g., Formaldehyde) | Fixes protein-DNA interactions. | Cross-linking time must be optimized and consistent to ensure reproducible fragmentation. |

Within the broader thesis research on controlling the False Discovery Rate (FDR) in ChIP-seq data analysis, a critical challenge is generating a consensus, high-confidence peak list from biological replicates. This guide provides a practical troubleshooting framework for implementing two robust statistical methods: the filter module in MACS3 and the idr (Irreproducible Discovery Rate) package. The goal is to minimize technical artifacts and false positives, yielding a reliable peak set for downstream regulatory element analysis in drug target discovery.

Troubleshooting Guides & FAQs

Section 1: MACS3 `filter` Module Issues

Q1: After running macs3 filter, my output BED file is empty. What are the common causes?

A: An empty output typically stems from overly stringent criteria. Check the following:

- Threshold Values: The default `-p` (p-value) or `-q` (q-value/FDR) thresholds might be too stringent for your data. Try using a more lenient value (e.g., `-q 0.05` instead of `-q 0.01`).

- Peak Input: Verify your input BED file is correctly formatted (tab-separated, standard 6-column BED). Ensure it was generated by `macs3 callpeak`.

- Command Syntax: A misplaced flag can cause the filter to run on an empty set. Re-check your command: `macs3 filter -i peaks.bed -p 1e-5 -o filtered_peaks.bed`.

Q2: What is the practical difference between filtering by -p (p-value) and -q (q-value/FDR), and which should I use?

A: The p-value measures the significance of enrichment against a random background. The q-value estimates the FDR, i.e., the proportion of peaks expected to be false positives. For FDR control research, using -q is recommended as it directly relates to the thesis's core aim of controlling false discoveries. Filtering by q-value (e.g., -q 0.01) provides a more biologically interpretable and consistent threshold across experiments.

Q3: I get the error "ValueError: invalid literal for int() with base 10". How do I fix it?

A: This indicates a formatting issue in your input BED file. Ensure the 5th column (score) contains numeric values (like p-values or q-values) and not text (like "inf"). You may need to pre-process your BED file or re-run macs3 callpeak with standard output.

Table 1: MACS3 filter Key Parameters & Troubleshooting

| Parameter | Default | Recommended for FDR Control | Common Issue | Solution |

|---|---|---|---|---|

| `-i` (input) | None | (Required) | File not found | Check path and file permissions. |

| `-p` (p-value) | 1e-5 | Use `-q` instead | Empty output | Use a larger p-value (e.g., 1e-3). |

| `-q` (q-value) | None | 0.01 or 0.05 | Not applicable if input lacks q-values | Generate input with `callpeak --broad-cutoff`. |

| `-o` (output) | stdout | filtered_peaks.bed | Permission denied | Specify a writable output directory. |

Section 2: `idr` Package Analysis Issues

Q4: The IDR analysis collapses most of my peaks, suggesting low reproducibility. What steps should I take before concluding my replicates are poor? A: Perform this pre-IDR optimization workflow:

- Ranking Peaks: Rank peaks by `-log10(p-value)` or `-log10(q-value)` by passing `--rank p.value` or `--rank q.value` explicitly. For narrowPeak input, `idr` ranks by signal value unless told otherwise, and signal value ranking can be noisier.

- Peak Consistency: Run MACS3 with identical parameters on both replicates. Inconsistent peak widths or shifting summits cause low overlap.

- Pseudo-replicates: Generate pseudo-replicates by randomly splitting the pooled reads, call peaks on each half with `macs3 callpeak`, and use them to establish an optimal IDR threshold curve.

- Threshold Adjustment: The default IDR threshold of 0.05 is stringent. For exploratory analysis, a threshold of 0.1 or 0.2 may be acceptable, as per the IDR methodology paper.

Q5: How do I choose the correct IDR threshold (e.g., 1%, 5%, 10%) for my final peak list? A: The threshold represents the maximum proportion of irreproducible discoveries you are willing to tolerate. Follow this protocol:

- Run IDR on your true replicates and on self-consistency (pseudo) replicates.

- Plot the number of peaks passing various IDR thresholds (e.g., 0.01, 0.02, ..., 0.1) for both analyses.

- Identify the threshold where the true replicate curve begins to sharply diverge from the pseudo-replicate curve (indicating noise). A point just before this divergence (often between 0.01 and 0.05) is empirically robust.
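The curve comparison can be sketched by counting peaks at each threshold for both analyses. The IDR values below are invented for illustration, and `peaks_passing` is a hypothetical helper. In practice the values would be read from the two `idr` output files and converted back from -log10 scale.

```python
def peaks_passing(idr_values, thresholds):
    """Count how many peaks have an IDR at or below each threshold."""
    return {t: sum(1 for v in idr_values if v <= t) for t in thresholds}

thresholds = [0.01, 0.02, 0.05, 0.10]

# Made-up global IDR values for a handful of peaks.
true_rep_idr = [0.001, 0.004, 0.015, 0.03, 0.08, 0.09, 0.4]
pseudo_rep_idr = [0.001, 0.002, 0.008, 0.012, 0.04, 0.2, 0.6]

true_counts = peaks_passing(true_rep_idr, thresholds)
pseudo_counts = peaks_passing(pseudo_rep_idr, thresholds)
for t in thresholds:
    print(f"IDR <= {t:.2f}: true = {true_counts[t]}, pseudo = {pseudo_counts[t]}")
```

Plotting the two count series against the threshold gives the curves described above. The point where they begin to diverge is then read off by eye or with a simple difference rule of your choosing.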

Q6: I encounter "numpy" or memory errors when running idr on large peak files. How can I resolve this?

A: This is common with broad histone marks. Implement these fixes:

- Pre-filter: Use `macs3 filter` to remove very low-significance peaks (e.g., `-q 0.1`) before running IDR, reducing file size.

- System Memory: Ensure you have sufficient RAM. Consider using a high-performance computing cluster.

- Software Version: Update `idr`, `numpy`, and `scipy` to their latest versions.
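Independently of any particular command-line tool, the same pre-filter can be applied directly to a narrowPeak file, whose ninth column stores -log10(q-value). The sketch below assumes tab-separated narrowPeak lines. `filter_by_qvalue` is a hypothetical helper and the rows are made up.

```python
import math

def filter_by_qvalue(lines, q_cutoff=0.1):
    """Keep narrowPeak lines whose q-value (column 9, stored as -log10) is <= q_cutoff."""
    min_score = -math.log10(q_cutoff)
    kept = []
    for line in lines:
        if not line.strip():
            continue  # skip blank lines
        fields = line.rstrip("\n").split("\t")
        if float(fields[8]) >= min_score:
            kept.append(line)
    return kept

# Made-up narrowPeak rows: chrom, start, end, name, score, strand,
# signalValue, -log10(p), -log10(q), summit offset.
peaks = [
    "chr1\t100\t300\tpeak_1\t500\t.\t12.0\t9.5\t7.2\t95",
    "chr1\t800\t950\tpeak_2\t40\t.\t2.1\t1.4\t0.5\t60",
    "chr2\t50\t400\tpeak_3\t210\t.\t6.3\t4.0\t2.0\t120",
]
print(len(filter_by_qvalue(peaks, q_cutoff=0.1)))
```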

Table 2: IDR Analysis Decision Matrix

| Scenario | Recommended Action | Expected Outcome for Thesis Research |

|---|---|---|

| High overlap (>70%) at IDR<0.05 | Proceed with the conservative IDR peak list. | Provides a high-confidence, low-FDR peak set for validation. |

| Low overlap (<30%) at IDR<0.05 | 1. Check peak calling consistency. 2. Use a more lenient IDR threshold (e.g., 0.1). 3. Consider the `idr` "rescue" method. | Highlights experimental variability; lenient threshold may still yield usable data for hypothesis generation. |

| Pseudo-replicate curve overlaps true replicate curve | Data may be underpowered. Consider pooling replicates for a single peak call. | Suggests replicates are highly consistent, but the experiment may lack depth. FDR control is stable. |

Experimental Protocol: Integrated MACS3-IDR Workflow

Objective: Generate a robust, FDR-controlled peak list from two biological replicates of a transcription factor ChIP-seq experiment.

Protocol:

- Peak Calling (Per Replicate): `macs3 callpeak -t replicate1.bam -c control1.bam -f BAM -g hs -n rep1 --outdir rep1_peaks -q 0.05 --call-summits` (repeat for replicate 2).

- Pre-Filtering (Optional, for large datasets): `macs3 filter -i rep1_peaks/rep1_peaks.narrowPeak -q 0.1 -o rep1_peaks_filtered.narrowPeak` (repeat for replicate 2).

- IDR Analysis: `idr --samples rep1_peaks_filtered.narrowPeak rep2_peaks_filtered.narrowPeak --rank p.value --output-file idr_output.tsv --plot --log-output-file idr.log`

- Generate Final Consensus Peak Set: Extract peaks passing the IDR threshold (e.g., < 0.05) from the `idr_output.tsv` file. Use the `awk` command as recommended in the IDR documentation: `awk '{if($5 >= 540) print $0}' idr_output.tsv > robust_peaks.bed` (Note: The 5th column is the scaled IDR value, min(int(-125 × log2(IDR)), 1000), so an IDR of 0.05 corresponds to a score of 540.)
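The relationship between an IDR threshold and the scaled score in column 5 can be checked with a short calculation. The formula below follows the scaling described in the `idr` documentation, min(int(-125 × log2(IDR)), 1000). `scaled_idr` is a hypothetical helper name used only for this illustration.

```python
import math

def scaled_idr(idr):
    """Convert an IDR value in (0, 1] to the 0-1000 scaled score used in column 5."""
    if idr <= 0:
        return 1000  # an IDR of zero maps to the maximum score
    return min(int(-125 * math.log2(idr)), 1000)

for threshold in (0.01, 0.05, 0.10):
    print(f"IDR {threshold:.2f} -> column 5 cutoff {scaled_idr(threshold)}")
```

A higher scaled score means a lower IDR, which is why the `awk` filter keeps rows at or above the cutoff.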

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Analysis |

|---|---|

| MACS3 Software | Primary tool for peak calling and initial statistical filtering of ChIP-seq data. |

| IDR Package (v2.0.3+) | Implements the Irreproducible Discovery Rate method to assess reproducibility between replicates. |

| Sorted BAM Files | Alignment files for ChIP and input control samples, the essential starting input. |

| UCSC Genome Browser Tools | For visualizing and validating final peak lists in a genomic context. |

| High-Performance Computing (HPC) Cluster | Provides necessary computational resources for memory-intensive IDR analysis on large genomes. |

Visualizations

Diagram 1: ChIP-seq FDR Control Analysis Workflow

Diagram 2: IDR Threshold Selection Logic

Diagnosing and Fixing Common FDR Control Failures in Your ChIP-Seq Pipeline

Troubleshooting Guides & FAQs

Q1: Our IDR analysis on two ChIP-seq replicates yields very few peaks passing the default threshold (e.g., IDR < 0.05). This suggests low concordance. What are the primary technical causes?

A: Low replicate concordance leading to poor IDR results is often due to:

- Variable Sequencing Depth: Significant differences in total reads between replicates artificially lower measured reproducibility.

- Inconsistent Peak Morphology: Differences in fragment size selection, antibody efficiency, or cross-linking can cause shifts in peak shape and location.

- High Background Noise: Excessive unstructured background signal from non-specific antibody binding or poor sample quality obscures true binding events.

- Threshold Sensitivity: Applying an overly stringent initial significance threshold (e.g., p-value or q-value) before IDR analysis discards real but weaker peaks.

Q2: How can I adjust my IDR pipeline to recover more true peaks when concordance appears low, without simply increasing the FDR?

A: Implement a multi-pronged strategy focused on pre-processing and parameter optimization:

- Normalize for Sequencing Depth: Use a scaling factor (e.g., based on reads in peak regions or a control sample) to align replicate signal depths before peak calling.

- Optimize the Initial Threshold: Instead of a fixed, stringent threshold, use a relaxed cutoff (e.g., rank peaks by p-value and take the top 100,000-150,000 from each replicate) as input for the IDR algorithm. This provides more data for the rank concordance model to evaluate.

- Perform IDR on Pseudoreplicates: Generate pseudoreplicates by pooling and randomly splitting replicates. Comparing pooled pseudoreplicates can help distinguish true signal from systematic noise and guide the selection of an appropriate IDR cutoff for your biological replicates.
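The relaxed "top N by p-value" selection can be sketched as follows. It assumes tab-separated narrowPeak lines in which column 8 stores -log10(p-value). `top_n_by_pvalue` is a hypothetical helper, and the rows are made up.

```python
def top_n_by_pvalue(narrowpeak_lines, n):
    """Return the n most significant peaks, ranked by -log10(p-value) in column 8."""
    rows = [line.rstrip("\n").split("\t") for line in narrowpeak_lines if line.strip()]
    rows.sort(key=lambda fields: float(fields[7]), reverse=True)
    return rows[:n]

# Made-up narrowPeak rows for one replicate.
rep1 = [
    "chr1\t100\t300\tpeak_1\t500\t.\t12.0\t9.5\t7.2\t95",
    "chr1\t800\t950\tpeak_2\t40\t.\t2.1\t1.4\t0.5\t60",
    "chr2\t50\t400\tpeak_3\t210\t.\t6.3\t4.0\t2.0\t120",
]
top = top_n_by_pvalue(rep1, n=2)
print([fields[3] for fields in top])
```

With real data, `n` would be set to the 100,000-150,000 range mentioned above, and the same value should be used for every replicate.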

Q3: What experimental protocol adjustments can improve replicate concordance for future ChIP-seq experiments?

A: Follow this detailed protocol for improved consistency:

- Protocol: Standardized Cross-Linking & Sonication for Chromatin Preparation

- Cross-linking: Treat cells with 1% formaldehyde for exactly 10 minutes at room temperature with gentle agitation. Quench with 125mM glycine for 5 minutes.

- Cell Lysis: Lyse cells in Farnham Lysis Buffer (5mM PIPES pH 8.0, 85mM KCl, 0.5% NP-40) with protease inhibitors for 10 minutes on ice.

- Nuclear Lysis & Sonication: Pellet nuclei. Resuspend in Sonication Buffer (50mM Tris-HCl pH 8.0, 10mM EDTA, 1% SDS). Sonicate using a focused ultrasonicator (e.g., Covaris) to achieve a majority of fragments between 200-500 bp. Optimize time/energy for each cell type.

- Immunoprecipitation: Dilute sonicated chromatin 1:10 in ChIP Dilution Buffer (0.01% SDS, 1.1% Triton X-100, 1.2mM EDTA, 16.7mM Tris-HCl pH 8.0, 167mM NaCl). Incubate with pre-validated antibody-bound beads overnight at 4°C.

- Washes & Elution: Perform sequential cold washes: Low Salt Wash Buffer (0.1% SDS, 1% Triton X-100, 2mM EDTA, 20mM Tris-HCl pH 8.0, 150mM NaCl), High Salt Wash Buffer (same as Low Salt but with 500mM NaCl), LiCl Wash Buffer (0.25M LiCl, 1% NP-40, 1% deoxycholate, 1mM EDTA, 10mM Tris-HCl pH 8.0), and two washes with TE Buffer. Elute in Elution Buffer (1% SDS, 0.1M NaHCO3).

- Reverse Cross-linking & Clean-up: Add NaCl to 200mM and RNase A, incubate at 65°C overnight. Add Proteinase K, incubate at 45°C for 2 hours. Purify DNA with SPRI beads.

- Library Preparation & Sequencing: Use a consistent, high-fidelity library prep kit. Sequence replicates to a comparable depth (minimum 20 million non-redundant, mapped reads each).

Q4: How should I set the IDR threshold in practice when dealing with noisy data?

A: The standard IDR < 0.05 threshold means that roughly 5% of the peaks passing it are expected to be irreproducible discoveries; like a q-value, it describes the selected set rather than any single peak. With noisy data:

- First, run IDR with a relaxed initial threshold.

- Plot the number of peaks passing IDR thresholds from 0.01 to 0.1. Often, a "plateau" or inflection point is visible.

- Select a threshold at the beginning of this plateau (e.g., 0.02, 0.03, or 0.04) as a balance between sensitivity and reproducibility. This decision must be consistent across all analyses within a thesis.

Table 1: Impact of Pre-IDR Threshold on Final Peak Yield

| Initial Peak Selection Method | Replicate 1 Peaks Input | Replicate 2 Peaks Input | Peaks Passing IDR < 0.05 | % Recovery vs. Stringent p-value |

|---|---|---|---|---|

| Stringent (p-value < 1e-7) | 8,500 | 7,900 | 4,200 | Baseline |

| Relaxed (Top 100,000 by p-value) | 100,000 | 100,000 | 12,500 | +198% |

| Relaxed + Depth Normalization (Top 100,000 ranks) | 100,000 | 100,000 | 14,300 | +240% |

Table 2: Recommended Sequencing Depth for ChIP-seq Replicates

| Target Type | Minimum Reads per Replicate (Mapped, Non-Redundant) | Recommended Depth for IDR Analysis |

|---|---|---|

| Sharp Histone Marks (H3K4me3) | 15-20 million | 20-25 million |

| Broad Histone Marks (H3K27me3) | 30-40 million | 40-50 million |

| Transcription Factors | 20-30 million | 30-40 million |

Visualizations

Title: Troubleshooting Workflow for Low IDR Concordance

Title: IDR Analysis with Biological and Pseudoreplicates

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Function & Rationale |

|---|---|

| Validated ChIP-Grade Antibody | High specificity minimizes off-target binding, the largest source of background noise and irreproducibility. |

| Magnetic Protein A/G Beads | Provide consistent immunoprecipitation efficiency with low non-specific DNA binding. |

| Covaris Focused Ultrasonicator | Enables reproducible and precise chromatin shearing to optimal fragment sizes. |

| SPRI (Ampure) Beads | For consistent size selection and clean-up during library prep and post-IP. |

| High-Fidelity PCR Kit (e.g., KAPA HiFi) | Minimizes PCR duplicates and biases during library amplification. |

| UMI (Unique Molecular Index) Adapters | Allows bioinformatic removal of PCR duplicates, improving accuracy of read counts. |

| IDR Software Package (v2.0.4+) | Implements the core irreproducible discovery rate statistical model for replicate analysis. |

| deepTools alignqc & plotFingerprint | Essential for QC, assessing cross-correlation, and verifying replicate similarity. |

Dealing with Low-Complexity Libraries and High Background Noise

Troubleshooting Guides & FAQs

Q1: During ChIP-seq analysis, my data shows high background noise and poor peak calling. What are the primary technical causes? A: The main causes are:

- Low-Complexity Libraries: Resulting from over-sonication (fragments too small), excessive PCR amplification of limited starting material, or poor sample input quality.

- Non-Specific Antibody Binding: Leading to high background signal.

- Insufficient Sequencing Depth: Failing to distinguish true signal from noise.

- Carryover of Genomic DNA in the immunoprecipitated sample.

Q2: How can I computationally identify if my library has low complexity? A: Use the following metrics and tools:

| Metric | Tool | Threshold for Concern | Interpretation |

|---|---|---|---|

| PCR Bottleneck Coefficient (PBC) | preseq, spp | PBC1 < 0.5 | Indicates severe bottlenecking, high duplication. |

| Non-Redundant Fraction (NRF) | samtools, custom scripts | NRF < 0.8 | Low fraction of unique reads in library. |

| Sequence Duplication Level | picard MarkDuplicates | > 50% duplication | High redundancy, low library diversity. |

| Fraction of Reads in Peaks (FRiP) | MACS2, SEACR | < 1% (broad) / < 5% (sharp) | Very low signal-to-noise ratio. |
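
For readers who prefer to see how these metrics are defined, the sketch below computes NRF, PBC1, the duplication fraction, and FRiP from simple counts. It assumes the commonly used definitions (NRF = distinct read positions / total reads; PBC1 = positions covered by exactly one read / distinct positions) and that each mapped read has been reduced to a (chromosome, 5' position, strand) tuple. The function names and toy reads are hypothetical; in practice the dedicated tools in the table should be used.

```python
from collections import Counter


def complexity_metrics(read_positions):
    """Compute library-complexity metrics from (chrom, pos, strand) tuples."""
    counts = Counter(read_positions)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no reads supplied")
    distinct = len(counts)                                 # unique positions
    singletons = sum(1 for c in counts.values() if c == 1)  # seen exactly once
    return {
        "NRF": distinct / total,
        "PBC1": singletons / distinct,
        "duplication": 1 - distinct / total,
    }


def frip(reads_in_peaks, total_mapped_reads):
    """Fraction of Reads in Peaks."""
    return reads_in_peaks / total_mapped_reads


# Toy reads (made up): one position is hit three times, three positions once.
toy_reads = [("chr1", 100, "+")] * 3 + [
    ("chr1", 200, "+"),
    ("chr1", 300, "-"),
    ("chr2", 50, "+"),
]
print(complexity_metrics(toy_reads))
print("FRiP:", frip(reads_in_peaks=50_000, total_mapped_reads=1_000_000))
```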

Q3: What experimental protocols can mitigate low-complexity libraries? A: Protocol: Optimized ChIP-seq Library Preparation for Low-Input/High-Noise Samples

- Input Quality Control: Use a Bioanalyzer/TapeStation to confirm the fragment size distribution of the DNA after sonication. Target a fragment size of 200-500 bp.

- Titrate PCR Cycles: Use the minimum number of PCR cycles needed for library amplification (e.g., 8-12 cycles). Test with qPCR during library prep.

- Use High-Fidelity Polymerase: Enzymes like KAPA HiFi reduce PCR bias and chimeras.

- Dual-Size Selection: Use SPRI beads for strict size selection (e.g., 0.5x and 0.8x ratios) to remove very small fragments and adapter dimers.

- Spike-in Controls: Use exogenous chromatin (e.g., Drosophila S2 chromatin) and corresponding antibodies to normalize for IP efficiency and background.
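
One common way to use the spike-in reads from the last step is to derive a per-sample scale factor. The sketch below assumes a simple scheme in which every sample is scaled down to the sample with the fewest spike-in reads; this is an illustrative choice rather than the only valid normalization, and the sample names and read counts are invented.

```python
def spikein_scale_factors(spikein_read_counts):
    """Map sample name -> scale factor so that all samples end up with the
    same effective number of spike-in (e.g., Drosophila) reads.

    Assumed scheme: factor = smallest spike-in count / this sample's count.
    """
    if any(n <= 0 for n in spikein_read_counts.values()):
        raise ValueError("every sample needs a positive spike-in read count")
    smallest = min(spikein_read_counts.values())
    return {sample: smallest / n for sample, n in spikein_read_counts.items()}


# Hypothetical spike-in read counts per sample.
factors = spikein_scale_factors({"control": 1_000_000, "treated": 2_000_000})
print(factors)  # {'control': 1.0, 'treated': 0.5}
```

The resulting factors would then be applied to the target-genome coverage of each sample before comparing signal between conditions.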

Q4: How do I adjust my FDR control in peak calling when dealing with high background? A: Standard FDR control (e.g., in MACS2) may fail. Implement these strategies:

| Strategy | Tool/Implementation | Purpose in FDR Control |

|---|---|---|

| Use a Paired Input Control | Essential for all analyses. | Provides background model for peak calling. |

| Apply a p-value, q-value, or Fold-Change Threshold | MACS2 (-p 1e-5 with --call-summits) | Increases stringency beyond default FDR. |

| Two-Replicate Concordance (IDR) | IDR Pipeline (https://github.com/nboley/idr) | Controls FDR by requiring reproducibility between biological replicates. |

| Alternative Peak Callers for Noise | SEACR (stringent/relaxed mode) or ZINBA | Designed for sparse data or high background. |
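
To make the notion of a q-value threshold concrete, here is a generic Benjamini-Hochberg adjustment written in plain Python. It is a textbook sketch of how p-values are converted into q-values; it is not MACS2's internal implementation, which may differ in detail, and the example p-values are made up.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values (q-values),
    in the same order as the input."""
    n = len(p_values)
    order = sorted(range(n), key=lambda i: p_values[i])  # indices by ascending p
    q = [0.0] * n
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotone q-values.
    for rank in range(n, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * n / rank)
        q[i] = running_min
    return q


# Made-up p-values for four candidate peaks.
p = [0.01, 0.04, 0.03, 0.005]
q = benjamini_hochberg(p)
kept = [i for i, qi in enumerate(q) if qi <= 0.03]
print(q)
print("peaks kept at q <= 0.03:", kept)
```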

Q5: What are key reagent solutions for robust ChIP in high-background scenarios? A: Research Reagent Solutions Table:

| Reagent/Material | Function | Example Product/Note |

|---|---|---|

| High-Specificity, Validated Antibody | Minimizes non-specific binding, the primary source of background. | Use antibodies with published ChIP-seq data (e.g., from CST, Abcam). |

| Magnetic Protein A/G Beads | Efficient capture with low non-specific DNA binding. | Dynabeads. |

| Protease/Phosphatase Inhibitor Cocktails | Preserves protein integrity and chromatin state during IP. | Essential for phospho-specific ChIP. |

| UltraPure BSA or Chromatin Grade Carrier | Reduces non-specific adsorption in low-input protocols. | Use at 0.1-0.5 mg/mL. |

| Molecular Biology Grade Glycogen | Improves recovery of nucleic acids during ethanol precipitation. | For clean post-IP DNA recovery. |

| Spike-in Chromatin & Antibody | Enables normalization and background assessment. | Drosophila S2 chromatin with anti-H2Av (Active Motif). |

| High-Fidelity Library Prep Kit | Reduces PCR duplicates and bias. | KAPA HyperPrep, NEB Next Ultra II. |

Experimental Protocol: IDR Analysis for FDR Control

Title: Controlling FDR via Irreproducible Discovery Rate (IDR) Analysis

Purpose: To identify a consistent set of peaks across replicates, controlling the false discovery rate.

Steps:

- Peak Calling per Replicate: Call peaks on each biological replicate independently against its own input control using a permissive p-value threshold (e.g., macs2 callpeak -p 0.05).

- Sort Peaks: Sort the resulting narrowPeak files by -log10(p-value) in descending order.

- Run IDR: Compare the sorted replicate files using the IDR script.

- Derive Consensus Set: Extract peaks passing the chosen IDR threshold (e.g., 0.05) from the output file. This is your high-confidence, FDR-controlled peak set.

- Rescue Option (Optional): For experiments with more than two replicates, use the optimal set approach detailed in the IDR documentation.
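
The sorting and IDR steps above can be sketched as follows. The example assumes the standard narrowPeak layout, in which column 8 holds -log10(p-value), and assembles an `idr` command line using options as I understand them from the IDR package (`--samples`, `--input-file-type`, `--rank`, `--idr-threshold`, `--output-file`); confirm them against `idr --help` for your installed version. File names and helper names are hypothetical, and the command is only built and printed, not executed.

```python
def sort_narrowpeak_by_pvalue(lines):
    """Sort narrowPeak records by column 8 (-log10 p-value), descending."""
    records = [ln.rstrip("\n").split("\t") for ln in lines if ln.strip()]
    records.sort(key=lambda fields: float(fields[7]), reverse=True)
    return ["\t".join(fields) for fields in records]


def build_idr_command(rep1, rep2, output, idr_threshold=0.05):
    """Assemble (but do not run) an idr command for two replicates."""
    return [
        "idr",
        "--samples", rep1, rep2,
        "--input-file-type", "narrowPeak",
        "--rank", "p.value",
        "--idr-threshold", str(idr_threshold),
        "--output-file", output,
    ]


# Two made-up narrowPeak records; column 8 is 5.2 and 12.7 respectively.
toy_peaks = [
    "chr1\t100\t300\tpeak_a\t52\t.\t4.1\t5.2\t3.0\t90\n",
    "chr1\t900\t1200\tpeak_b\t127\t.\t9.8\t12.7\t10.1\t150\n",
]
sorted_peaks = sort_narrowpeak_by_pvalue(toy_peaks)
print(sorted_peaks[0].split("\t")[3])  # strongest peak first

cmd = build_idr_command("rep1.sorted.narrowPeak", "rep2.sorted.narrowPeak",
                        "rep1_vs_rep2.idr.txt")
print(" ".join(cmd))
```

Passing the list to `subprocess.run(cmd, check=True)` would execute it once the IDR package is installed and the input files exist.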

Visualizations

Diagram 2: FDR Control Strategy for Noisy Data

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My ChIP-seq experiment shows high background noise. What could be wrong with my control sample? A: High background often stems from a suboptimal input or control sample. The input DNA should be sheared to the same fragment size distribution as your ChIP sample. If the input is under-sheared, it will create false-positive peaks in open chromatin regions during peak calling. Ensure your input sample undergoes identical fragmentation, size selection, and library preparation steps as your experimental samples.

Q2: I observe peaks in my negative control (IgG) sample. How does this affect my FDR, and what should I do? A: Peaks in your IgG control indicate non-specific antibody binding or high background noise. During peak calling (e.g., using MACS2), the control sample is used to model the background. If the control itself has peaks, the background model is inaccurate, leading the algorithm to call fewer peaks from your true ChIP sample to maintain a given FDR threshold. This inflates the false negative rate. To resolve this, use a higher quality antibody with validated specificity and ensure stringent wash conditions. Re-perform the control experiment with a fresh, validated IgG.

Q3: How should the sequencing depth of my input control compare to that of my ChIP samples? A: Inadequate sequencing depth for the input sample is a common error. The input must have equal or greater depth than the ChIP samples to robustly model background signal. Insufficient input depth increases variance in background estimation, causing the peak caller to either miss true peaks (more false negatives) or call false peaks (more false positives), thereby distorting the nominal FDR.

Table 1: Impact of Input vs. ChIP Sequencing Depth on Peak Calling Accuracy

| ChIP Sample Depth | Input Sample Depth | Effect on Background Model | Typical Impact on FDR |

|---|---|---|---|

| 20 million reads | 20 million reads | Robust | FDR accurately controlled |

| 20 million reads | 10 million reads | Noisy, High Variance | Inflated & Unreliable |

| 40 million reads | 20 million reads | Underpowered | Increased false discoveries |
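
The rule behind Table 1 (input depth at least equal to ChIP depth) is easy to automate as a pre-flight check. The helper below is a hypothetical sketch; the function name and return format are illustrative, and the read counts are the three scenarios from the table.

```python
def check_input_depth(chip_reads, input_reads):
    """Report whether the input control is at least as deep as the ChIP sample."""
    return {
        "ratio": input_reads / chip_reads,          # input depth relative to ChIP
        "ok": input_reads >= chip_reads,            # rule: input >= ChIP
        "shortfall": max(0, chip_reads - input_reads),
    }


# The three scenarios from Table 1 (ChIP reads, input reads).
scenarios = [(20_000_000, 20_000_000), (20_000_000, 10_000_000), (40_000_000, 20_000_000)]
for chip, inp in scenarios:
    print(chip, inp, check_input_depth(chip, inp))
```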

Q4: What is the recommended protocol for an optimal input control in a histone mark ChIP-seq experiment? A: The gold standard protocol is as follows:

- Cell Collection: Use the same number of cells as your ChIP experiment.

- Cross-linking & Lysis: Perform identical cross-linking (if used) and cell lysis.

- Sonication: Shear chromatin to 200-500 bp fragments. Run an aliquot on a gel to confirm size match with ChIP samples.

- Reverse Cross-linking: Incubate with Proteinase K at 65°C overnight.

- DNA Purification: Purify DNA using phenol-chloroform extraction and ethanol precipitation.

- Size Selection and QC: Use a gel or SPRI beads to select fragments in the target size range. Quantify by Qubit.

- Library Preparation: Use the identical library prep kit and cycle number as ChIP samples.

Q5: Can I use a different cell type or condition for my input control? A: No. The input control must be from the identical cell type, treatment condition, and harvesting batch. Genetic background, chromatin accessibility landscape, and mitochondrial DNA content vary between cell types and conditions. Using a mismatched control introduces systematic biases that severely inflate FDR, as differences are misattributed to enrichment.

Experimental Protocol: Generating a Matched Input Control for ChIP-seq

Title: Protocol for Isolating Input DNA for ChIP-seq Background Modeling

Methodology:

- Harvest Cells: Collect 1x10^6 cells (or equivalent tissue) per planned input library.

- Cross-link (if used for ChIP): Add formaldehyde to a final concentration of 1% and incubate for 10 minutes at room temperature. Quench with 125 mM glycine.

- Lysate Preparation: Resuspend cell pellet in 1 mL Cell Lysis Buffer (10 mM Tris-HCl pH 8.0, 10 mM NaCl, 0.2% NP-40) with protease inhibitors. Incubate 10 min on ice. Pellet nuclei.

- Nuclear Lysis & Sonication: Resuspend nuclei in 500 µL Sonication Lysis Buffer (50 mM Tris-HCl pH 8.0, 10 mM EDTA, 1% SDS). Sonicate using the exact same instrument and settings (e.g., Covaris, 200 cycles/burst, 20% duty factor, 6 min) as your ChIP samples. Centrifuge to remove debris.

- Decrosslinking: Take 200 µL of sonicated lysate. Add 8 µL of 5 M NaCl and 2 µL of Proteinase K (20 mg/mL). Incubate at 65°C overnight.

- DNA Purification: Add 200 µL phenol:chloroform:isoamyl alcohol (25:24:1). Vortex and centrifuge, then transfer the aqueous phase to a fresh tube. Precipitate DNA with 2 volumes of 100% ethanol, 0.1 volume of 3 M NaOAc, and 1 µL glycogen. Wash with 70% ethanol.

- Resuspension and QC: Resuspend in 50 µL TE buffer. Quantify by Qubit dsDNA HS Assay. Analyze 20 ng on a Bioanalyzer High Sensitivity DNA chip to verify fragment size profile matches your ChIP samples (peak ~250-300 bp).

Visualizations

Title: Impact of Control Sample Quality on ChIP-seq FDR

Title: Optimal ChIP-seq Experiment Workflow with Matched Control

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents for Robust ChIP Control Experiments

| Item | Function & Importance for Control Quality |

|---|---|

| Covaris AFA Ultrasonicator | Provides consistent, reproducible chromatin shearing. Matching fragment size between ChIP and input is critical. |

| Proteinase K (Molecular Grade) | Essential for complete reversal of cross-links in input samples to ensure pure DNA template. |

| Phenol:Chloroform:Isoamyl Alcohol (25:24:1) | Provides high-purity DNA extraction for input samples, removing proteins and contaminants that inhibit library prep. |

| Glycogen (20 mg/mL) | Co-precipitant to maximize recovery of low-concentration input DNA during ethanol precipitation. |

| AMPure XP or SPRIselect Beads | For precise size selection post-sonication and post-library prep to ensure input and ChIP fragment distributions overlap. |

| Agilent High Sensitivity DNA Kit | Gold-standard QC to visually confirm matching fragment size profiles between input and ChIP samples before sequencing. |

| dsDNA High Sensitivity Qubit Assay | Accurate quantification of low-yield input DNA for balanced library preparation. |

| Validated Species-Matched IgG | For negative control IPs. Must be from same host species as ChIP antibody, ideally purified from pre-immune serum. |

Technical Support & Troubleshooting Center

FAQs & Troubleshooting Guides

Q1: How should I interpret the error "No peaks found" after running MACS2 with --broad-cutoff?

A: This error typically indicates that the p-value or q-value cutoff is too stringent. The --broad-cutoff parameter sets the cutoff for broad peak calling (e.g., for histone marks like H3K27me3). If set too low (e.g., 0.001), it may filter out all potential regions.

- Troubleshooting: For broad marks, use a more lenient cutoff. Start with