Beyond the Data Deluge: Navigating the Top 5 Bioinformatics Challenges in Large-Scale NGS Analysis

The exponential growth of Next-Generation Sequencing (NGS) data presents unprecedented opportunities and formidable challenges for biomedical research and drug discovery.


Abstract

The exponential growth of Next-Generation Sequencing (NGS) data presents unprecedented opportunities and formidable challenges for biomedical research and drug discovery. This article provides a comprehensive guide for researchers and bioinformaticians tackling large-scale NGS projects. We first explore the foundational challenges of data volume, storage, and computational scaling. We then delve into methodological approaches for alignment, variant calling, and multi-omics integration. Practical troubleshooting and optimization strategies for pipeline performance and cost management are addressed. Finally, we discuss critical validation frameworks and comparative analyses to ensure robust, reproducible results. This roadmap aims to equip professionals with the knowledge to transform vast genomic datasets into actionable biological insights.

The Data Tsunami: Understanding the Scale and Core Challenges of Modern NGS

Technical Support Center

Welcome to the technical support hub for managing large-scale NGS data workflows. This guide provides troubleshooting and FAQs for researchers grappling with the challenges of petabyte-scale genomic analysis.

Frequently Asked Questions (FAQs)

Q1: My alignment job (using BWA-MEM/Sentieon) on a 5 TB whole-genome sequencing dataset failed with an "Out of Memory" error. What are the most efficient memory optimization strategies?

A: For datasets in the 5-100 TB range, the reference index is a fixed cost: build it once with `bwa index -a bwtsw` and reuse it. Control per-job memory by splitting the input FASTQ into chunks that are aligned in parallel. For a 30x human genome (~90 GB FASTQ), use a compute instance with at least 64 GB RAM. Implement a workflow manager (Nextflow/Snakemake) to control batch size and monitor memory usage via integrated profiling.
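To make the chunking step concrete, here is a minimal Python sketch that groups FASTQ lines into fixed-size batches of reads. The function name `fastq_chunks` and the chunk size are illustrative assumptions, not part of any aligner. For paired-end data, both mate files would need the same `reads_per_chunk` so that pairs stay in sync.

```python
from itertools import islice
from typing import Iterable, Iterator, List


def fastq_chunks(lines: Iterable[str], reads_per_chunk: int) -> Iterator[List[str]]:
    """Yield lists of FASTQ lines, each holding at most reads_per_chunk records.

    A FASTQ record is assumed to be exactly four lines (header, sequence,
    separator, qualities); multi-line records are not handled.
    """
    if reads_per_chunk < 1:
        raise ValueError("reads_per_chunk must be at least 1")
    it = iter(lines)
    while True:
        chunk = list(islice(it, 4 * reads_per_chunk))
        if not chunk:
            return
        if len(chunk) % 4 != 0:
            raise ValueError("truncated FASTQ record at end of input")
        yield chunk


# Hypothetical demo: five tiny reads split into chunks of two reads each.
demo_lines = []
for i in range(5):
    demo_lines += [f"@read{i}", "ACGT", "+", "IIII"]

chunks = list(fastq_chunks(demo_lines, reads_per_chunk=2))
reads_in_each = [len(c) // 4 for c in chunks]
print(reads_in_each)
```

Each chunk can then be written to its own file and submitted as a separate alignment task by the workflow manager.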

Q2: During joint variant calling (GATK GenomicsDBImport) of 10,000 samples, the process is extremely slow and I/O intensive. How can I improve performance?

A: At this scale (approximately 1 PB of intermediate GVCFs), I/O is the primary bottleneck. Use a high-performance parallel file system (e.g., Lustre, BeeGFS). Configure GenomicsDBImport with the `--batch-size 100` and `--consolidate` flags. Store data in an optimized directory structure that balances files per directory. For a 10,000-sample cohort, expect the genomics database to require ~200 TB of high-speed SSD storage for optimal performance.

Q3: My bulk RNA-Seq differential expression analysis (DESeq2) on 1,000 samples (20 TB) is failing due to matrix size limitations in R. What is the solution?

A: DESeq2 holds the entire count matrix in memory, along with several intermediate matrices of the same dimensions. For 1,000 samples with 60,000 features, the raw counts alone are only about 0.5 GB as 8-byte values, but peak usage during model fitting can be many times higher and may exceed what a standard node offers. Solutions: 1) Use tximport to summarize transcript-to-gene counts on a high-memory node (≥128 GB RAM). 2) Implement a chunked approach using DESeqDataSetFromMatrix with subsetted gene groups. 3) For petabyte-scale studies, consider cloud-based solutions like Terra/AnVIL which offer managed Spark implementations of key steps.
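As a quick sanity check on matrix size, the arithmetic below estimates the footprint of a dense matrix. It is a back-of-the-envelope sketch: `dense_matrix_gb` is a hypothetical helper, and 8 bytes per value assumes double-precision storage.

```python
def dense_matrix_gb(n_features: int, n_samples: int, bytes_per_value: int = 8) -> float:
    """Return the size of a dense n_features x n_samples matrix in decimal GB."""
    return n_features * n_samples * bytes_per_value / 1e9


# Figures from the question above: 60,000 features by 1,000 samples.
counts_as_double_gb = dense_matrix_gb(60_000, 1_000)
counts_as_int32_gb = dense_matrix_gb(60_000, 1_000, bytes_per_value=4)

print(f"double precision: {counts_as_double_gb:.2f} GB")
print(f"32-bit integers:  {counts_as_int32_gb:.2f} GB")
```

By this estimate the raw matrix is well under 1 GB, so memory pressure in practice is more likely to come from additional same-sized matrices and temporary copies created during import and model fitting than from the counts alone.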

Q4: Storage costs for our raw genomic data are escalating (now ~3 PB). What are the best practices for cost-effective, tiered data lifecycle management?

A: Implement a data lifecycle policy. Use the following tiered storage strategy:

| Data Tier | Access Speed | Cost (est. $/GB/Year) | Typical Content | Retention Policy |

|---|---|---|---|---|

| Hot (SSD/NVMe) | Milliseconds | $0.10 - $0.20 | Active analysis files, databases | Short-term (≤3 months) |

| Cool (HDD/Parallel FS) | Seconds | $0.01 - $0.04 | Processed BAMs, VCFs for cohort | Medium-term (1-2 years) |

| Cold (Object/Tape) | Minutes-Hours | $0.001 - $0.01 | Archival raw FASTQs, final results | Long-term (indefinite) |

Workflow: Automate data movement from Hot to Cold based on file access patterns using tools like iRODS or cloud lifecycle rules. Compress FASTQs with gzip (compression ratio ~3) and store alignments as CRAM instead of BAM (compression ratio ~2). For 3 PB of raw data, applying compression and a tiered policy can reduce annual costs by >60%.
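The effect of tiering can be estimated with simple arithmetic. The sketch below uses the midpoints of the price ranges in the table above; the tier fractions, the all-Cool baseline, and the blended compression ratio of 2 are assumptions chosen for illustration, not measured values.

```python
# Midpoints of the $/GB/year ranges from the tier table.
TIER_PRICE = {"hot": 0.15, "cool": 0.025, "cold": 0.0055}


def annual_cost(total_gb: float, tier_fractions: dict, compression_ratio: float = 1.0) -> float:
    """Annual storage cost in dollars for data spread across tiers."""
    if abs(sum(tier_fractions.values()) - 1.0) > 1e-9:
        raise ValueError("tier fractions must sum to 1")
    stored_gb = total_gb / compression_ratio
    return sum(stored_gb * frac * TIER_PRICE[tier] for tier, frac in tier_fractions.items())


RAW_GB = 3_000_000  # 3 PB expressed in decimal GB

# Assumed baseline: everything kept uncompressed on the Cool tier.
baseline = annual_cost(RAW_GB, {"cool": 1.0})

# Assumed policy: 5% hot, 25% cool, 70% cold, with a blended compression ratio of 2.
tiered = annual_cost(RAW_GB, {"hot": 0.05, "cool": 0.25, "cold": 0.70}, compression_ratio=2.0)

saving = 1 - tiered / baseline
print(f"baseline ${baseline:,.0f}/yr, tiered ${tiered:,.0f}/yr, saving {saving:.0%}")
```

Under these assumed fractions the saving comes out at roughly 65%; changing the baseline or the share of data that must stay hot moves the result substantially, so the inputs should be replaced with your own access statistics.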

Troubleshooting Guides

Issue: Pipeline Failure Due to Corrupted or Incomplete Files in Distributed Storage.

Symptoms: Jobs fail with cryptic I/O errors, checksum mismatches, or truncated file warnings.

Diagnosis & Resolution:

- Pre-flight Check: Implement a checksum verification step at the start of your workflow. Compare the `md5sum` of each transferred file against the source manifest.

- Network & Hardware: For transfers exceeding 50 TB, use dedicated, reliable tools (`aspera`, `rclone` with retries) and avoid unstable network connections.

- File System Check: On parallel file systems, run `fsck` or an equivalent consistency check if corruption is suspected. Use storage with built-in data integrity features (e.g., ZFS).

- Procedure: Create a validation checkpoint in your workflow manager, for example a dedicated Nextflow process that verifies checksums before any alignment task starts.
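The checksum comparison itself is straightforward to script. The following is a minimal Python sketch (not Nextflow) of a validation step, assuming the manifest uses the two-column layout that `md5sum` writes; the helper names `md5_of` and `verify_manifest` are hypothetical.

```python
import hashlib
import tempfile
from pathlib import Path


def md5_of(path, block_size=1 << 20):
    """Compute the MD5 hex digest of a file, reading it in 1 MiB blocks."""
    digest = hashlib.md5()
    with open(path, "rb") as fh:
        for block in iter(lambda: fh.read(block_size), b""):
            digest.update(block)
    return digest.hexdigest()


def verify_manifest(manifest_path, data_dir):
    """Return names of files that are missing or whose MD5 differs from the manifest."""
    failures = []
    for line in Path(manifest_path).read_text().splitlines():
        if not line.strip():
            continue
        expected, name = line.split(maxsplit=1)
        name = name.lstrip("*")  # md5sum prefixes the name with '*' in binary mode
        target = Path(data_dir) / name
        if not target.is_file() or md5_of(target) != expected:
            failures.append(name)
    return failures


# Demo with two tiny stand-in files; the second manifest entry is deliberately wrong.
with tempfile.TemporaryDirectory() as tmp:
    good = Path(tmp) / "a.fastq.gz"
    good.write_bytes(b"ACGT")
    bad = Path(tmp) / "b.fastq.gz"
    bad.write_bytes(b"TTTT")
    manifest = Path(tmp) / "source_manifest.md5"
    manifest.write_text(f"{md5_of(good)}  a.fastq.gz\n{'0' * 32}  b.fastq.gz\n")
    failed = verify_manifest(manifest, tmp)

print(failed)
```

A workflow step can call `verify_manifest` first and abort when the returned list is non-empty, so that corrupted inputs never reach alignment.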

Issue: Severe Performance Degradation in Population-Scale VCF Querying.

Symptoms: Queries on a multi-sample VCF (e.g., "extract all variants in gene X") take hours.

Resolution Protocol:

- Reformat Data: Convert large VCFs (>1,000 samples) to a query-optimized format.

  - Method: Use `bcftools` to create a bgzip-compressed, tabix-indexed VCF.

- Leverage Indexing: Ensure the `tabix` index is on the same high-I/O storage as the data file. Query by genomic region so that only the indexed blocks for that interval are read.
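A region query can be assembled programmatically before being handed to a job runner. The sketch below only builds the command lines and does not execute them; the file name `cohort.vcf.gz` and the coordinates are placeholders, and the input is assumed to be bgzip-compressed. It relies on `bcftools index --tbi` and `bcftools view --regions`.

```python
import shlex


def region(chrom: str, start: int, end: int) -> str:
    """Format a 1-based inclusive region string such as chr1:1000-2000."""
    if start < 1 or end < start:
        raise ValueError("expected 1 <= start <= end")
    return f"{chrom}:{start}-{end}"


def index_cmd(vcf_gz: str) -> list:
    """Command that writes a tabix (.tbi) index next to a bgzipped VCF."""
    return ["bcftools", "index", "--tbi", vcf_gz]


def query_cmd(vcf_gz: str, reg: str) -> list:
    """Command that extracts one region, using the index to skip the rest of the file."""
    return ["bcftools", "view", "--regions", reg, "--output-type", "z", vcf_gz]


# Placeholder file and coordinates, for illustration only.
reg = region("chr1", 1_000_000, 2_000_000)
commands = [index_cmd("cohort.vcf.gz"), query_cmd("cohort.vcf.gz", reg)]
for cmd in commands:
    print(shlex.join(cmd))
```

Keeping the commands as argument lists makes them safe to pass to `subprocess.run` without shell quoting issues.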

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Large-Scale NGS Analysis |

|---|---|

| High-Throughput Library Prep Kits (e.g., Illumina Nextera Flex) | Enables standardized, automated preparation of thousands of libraries simultaneously, reducing batch effects and handling time for cohort studies. |

| Unique Dual Indexes (UDIs) | Critical for multiplexing thousands of samples in a single sequencing run. Allows reads affected by index hopping (misassignment) to be identified and discarded, which matters greatly at petabyte scale. |

| PCR-Free Library Prep Reagents | Essential for whole-genome sequencing at scale to avoid amplification bias and duplicate reads, ensuring higher data quality for downstream analysis. |

| Liquid Handling Robots | Automates reagent dispensing and plate setup, enabling reproducible processing of 10,000+ samples with minimal manual error. |

| Benchmark Genomes & Control Spikes (e.g., NIST GIAB, ERCC RNA Spike-Ins) | Provides gold-standard reference data for validating pipeline accuracy and performance across different data volumes and platforms. |

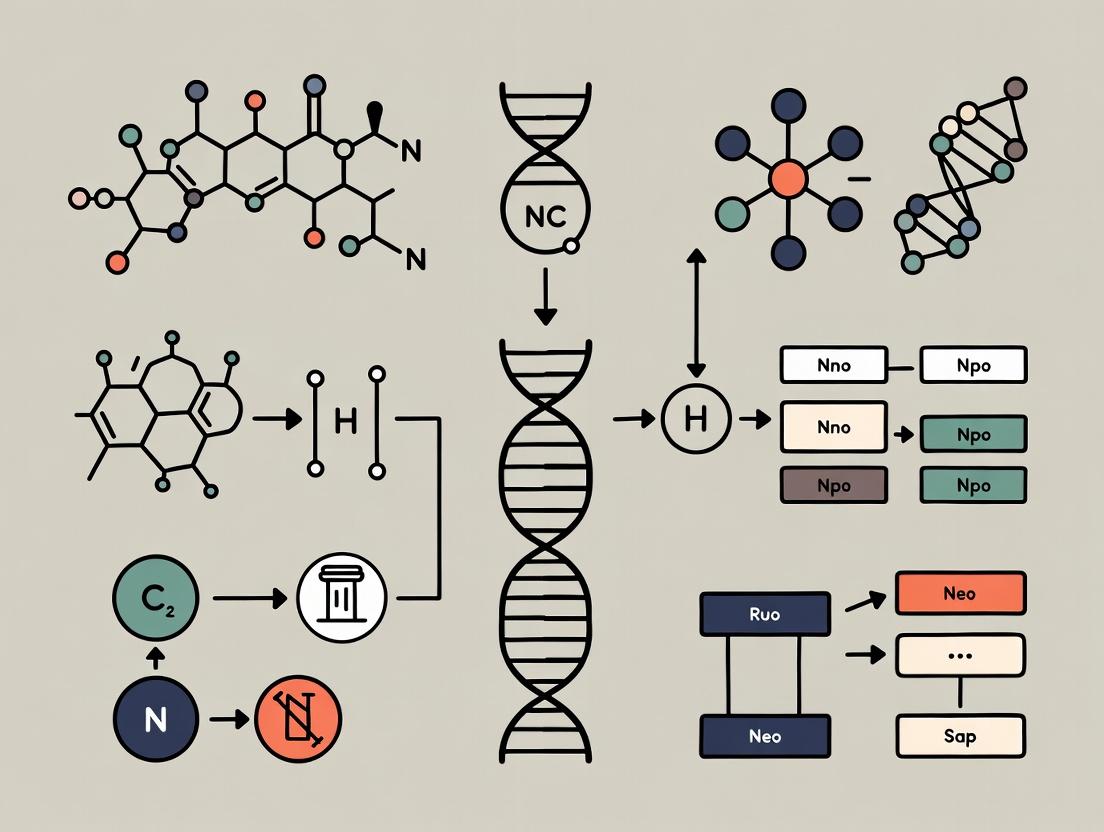

Visualizations

Diagram 1: Large-Scale NGS Data Analysis Workflow

Diagram 2: Data Lifecycle Management Strategy

Troubleshooting Guides & FAQs

Q1: Our bioinformatics pipeline fails with "No space left on device" during the alignment of large NGS datasets. What are the primary causes and solutions?

A: This error directly relates to Data Storage bottlenecks. NGS analysis generates massive intermediate files (e.g., unmapped/mapped BAMs). The primary cause is insufficient capacity (or, less often, exhausted inodes) on the partition the job writes to, typically local scratch on the compute node.

Troubleshooting Guide:

- Check Disk Usage: Use `df -h` on the node to confirm partition capacity.

- Identify Large Files: Use `du -sh *` in the job directory to find the largest intermediates.

- Solutions:

- Implement a Cleanup Script: Modify your pipeline (e.g., Nextflow, Snakemake) to delete intermediate files (like unsorted BAMs) immediately after a dependent step (like sorted BAM generation) completes.

- Use Node with Local NVMe SSD: For alignment (BWA-MEM, STAR) and sorting (samtools), request compute nodes with fast local storage to handle high I/O.

- Leverage Tiered Storage: Store raw FASTQs and final results on a central high-capacity system (e.g., Ceph, Isilon), but process on nodes with fast local scratch space.
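A pre-flight check can stop a job before it fills the disk. This is a minimal sketch using Python's standard library; the `expansion_factor` of 3 (intermediates taking about three times the input size) is an assumed rule of thumb that should be replaced with a value measured on your own pipeline.

```python
import shutil


def free_gb(path: str) -> float:
    """Free space, in decimal GB, on the filesystem that holds path."""
    return shutil.disk_usage(path).free / 1e9


def enough_scratch(path: str, input_gb: float, expansion_factor: float = 3.0) -> bool:
    """True if the filesystem has room for the expected intermediate files."""
    needed_gb = input_gb * expansion_factor
    return free_gb(path) >= needed_gb


# Check the current working directory for a hypothetical 90 GB input.
ok = enough_scratch(".", input_gb=90)
print("sufficient scratch space" if ok else "not enough scratch space, aborting")
```

Running this at the start of an alignment or sorting task turns a late "No space left on device" failure into an immediate, clearly reported one.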

Q2: Data transfer from the sequencing core to our HPC cluster is prohibitively slow, delaying analysis. What can be done?

A: This is a Data Transfer bottleneck, often due to network constraints or inefficient transfer tools.

Troubleshooting Guide:

- Diagnose Network Speed: Use `iperf3` to test bandwidth between source and destination.

- Verify File Count: A million small FASTQ files will transfer slower than a few large tar archives due to filesystem overhead.

- Solutions:

  - Use Parallelized Transfer Tools: Replace `scp` or `rsync` with `aspera`, `bbcp`, or `parallel-rsync`. These tools use multiple concurrent streams.

  - Compress Before Transfer: Use `tar -czf` to bundle and compress run directories. For already compressed FASTQ.gz, recompression gains little, so bundle with plain `tar -cf`; bundling still reduces per-file overhead.

  - Schedule Transfers: Automate transfers for off-peak hours using cron jobs.

Q3: Our genome-wide association study (GWAS) job using PLINK is killed for exceeding memory limits. How can we optimize computational resource usage?

A: This is a Computational Infrastructure bottleneck related to RAM (memory) allocation.

Troubleshooting Guide:

- Profile Memory: Run a subset of data with `/usr/bin/time -v` to measure peak memory usage.

- Check Data Format: Uncompressed text files (e.g., PED) use more memory than binary formats (e.g., BED).

- Solutions:

  - Convert to Binary: Use `plink --file mydata --make-bed` to create BED/BIM/FAM files.

  - Use Specific Memory-Efficient Flags: In PLINK, use `--memory` to specify buffer size and `--threads` for parallelization.

  - Employ Data Chunking: For large matrices, use tools that support chunk-based processing (e.g., SAIGE, REGENIE) instead of loading the entire dataset into RAM.
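To judge whether a genotype matrix could fit in RAM at all, its binary size can be estimated up front. The sketch below assumes the PLINK 1 `.bed` layout: a 3-byte header followed by variant-major blocks that pack four genotypes per byte. The helper name `bed_bytes` and the cohort dimensions are hypothetical.

```python
import math


def bed_bytes(n_samples: int, n_variants: int) -> int:
    """Size in bytes of a variant-major PLINK 1 .bed file.

    Each genotype takes 2 bits, so one variant needs ceil(n_samples / 4) bytes;
    the file starts with a 3-byte header.
    """
    return 3 + n_variants * math.ceil(n_samples / 4)


# Hypothetical biobank-scale cohort.
size = bed_bytes(n_samples=500_000, n_variants=1_000_000)
print(f"{size / 1e9:.0f} GB")
```

For this example the estimate is about 125 GB, which illustrates why chunk-based tools that stream variants are preferred over loading the full matrix.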

Q4: Our RNA-Seq differential expression workflow (using Nextflow) is queueing for days in the HPC scheduler. How can we improve throughput?

A: This bottleneck involves Computational Infrastructure scheduling and pipeline efficiency.

Troubleshooting Guide:

- Analyze Queue Logs: Use `squeue` or `qstat` to see resource requests. Over-requesting CPUs/memory per job leads to long queue times.

- Review Pipeline Configuration: Check the Nextflow `process` directives in your configuration file.

- Solutions:

  - Right-Size Resources: Profile a single sample run to determine actual CPU/memory/time needs. Adjust the `cpus`, `memory`, and `time` directives in the HPC profile of `nextflow.config`.

  - Use Cluster-Aware Execution: Ensure your pipeline uses `executor = 'slurm'` or `executor = 'pbs'` to submit individual tasks as separate jobs, maximizing cluster utilization.

  - Implement Checkpointing: Use Nextflow's `resume` functionality (`-resume`) to avoid re-computing successful steps after a failure.

Key Experimental Protocols

Protocol 1: Optimized NGS Data Transfer and Integrity Verification

Purpose: Reliably and rapidly transfer large sequencing datasets.

Methodology:

- At the source (sequencer PC), create a checksum manifest: `md5sum *.fastq.gz > source_manifest.md5`.

- Bundle files into a tar archive if they are numerous small files: `tar -cf run_archive.tar *.fastq.gz`. The FASTQs are already gzip-compressed, so a second compression pass adds little.

- Transfer the archive and the manifest using a parallel tool, giving the source first and the destination second: `bbcp -P 5 -w 4M -s 16 /local/path/run_archive.tar user@dest:/path/`.

- At the destination, untar if needed: `tar -xf run_archive.tar`.

- Verify integrity: `md5sum -c source_manifest.md5`.

Protocol 2: Profiling Pipeline Resource Usage for HPC Configuration

Purpose: Accurately determine CPU, memory, and storage needs for a single sample.

Methodology:

- Launch an interactive job on a representative node with extra resources: `salloc --cpus-per-task=4 --mem=16G --time=6:00:00`.

- Run the core pipeline step (e.g., alignment) with the profiling wrapper, sending the alignment to a file and the timing report to a log: `/usr/bin/time -v -o profile.log bwa mem -t 4 ref.fa sample_1.fq sample_2.fq > sample.sam`.

- Extract key metrics from `profile.log`: `Maximum resident set size (kbytes)`, `Percent of CPU this job got`, `Elapsed (wall clock) time`.

- Use these values to set the `memory` (with a 20% buffer), `cpus`, and `time` directives in your workflow manager's configuration file.
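Turning the profiling report into resource requests can be automated. The sketch below parses the `key: value` lines that GNU `time -v` prints and applies the 20% memory buffer described above; the sample report is made up for illustration, and the helper names are hypothetical.

```python
import math

# Made-up excerpt in the format written by `/usr/bin/time -v`.
SAMPLE_REPORT = """\
\tCommand being timed: "bwa mem -t 4 ref.fa sample_1.fq sample_2.fq"
\tPercent of CPU this job got: 380%
\tElapsed (wall clock) time (h:mm:ss or m:ss): 1:23:45
\tMaximum resident set size (kbytes): 12582912
"""


def parse_time_v(text: str) -> dict:
    """Map each metric name to its raw string value."""
    metrics = {}
    for line in text.splitlines():
        # Split on the last ': ' so that keys containing colons stay intact.
        key, sep, value = line.strip().rpartition(": ")
        if sep:
            metrics[key] = value
    return metrics


def recommended_memory_gb(metrics: dict, buffer: float = 0.20) -> int:
    """Peak resident memory plus a safety buffer, rounded up to whole GiB."""
    peak_kb = int(metrics["Maximum resident set size (kbytes)"])
    return math.ceil(peak_kb * (1 + buffer) / 1024**2)


def recommended_cpus(metrics: dict) -> int:
    """Number of cores actually kept busy, rounded up."""
    percent = int(metrics["Percent of CPU this job got"].rstrip("%"))
    return math.ceil(percent / 100)


metrics = parse_time_v(SAMPLE_REPORT)
mem_gb = recommended_memory_gb(metrics)
cpus = recommended_cpus(metrics)
print(f"memory = '{mem_gb} GB', cpus = {cpus}")
```

For the sample report, a 12 GiB peak with a 20% buffer rounds up to 15 GB, and 380% CPU utilization suggests requesting 4 cores.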

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in NGS Analysis |

|---|---|

| High-Throughput Object Store (e.g., Amazon S3, Google Cloud Storage) | Cloud-based, scalable storage for archiving raw FASTQ files and analysis results. Accessed via APIs for computational workflows. |

| Parallel File System (e.g., Lustre, BeeGFS) | Provides high-speed, shared storage for HPC clusters, essential for multi-node parallel processing of genomic data. |

| In-Memory Database (e.g., Redis, Memcached) | Caches frequently accessed reference data (e.g., genome indices, variant databases) to reduce I/O latency during analysis. |

| Container Technology (e.g., Docker, Singularity/Apptainer) | Packages software, dependencies, and environment into a portable unit, ensuring reproducibility and simplifying deployment on HPC/cloud. |

| Workflow Management System (e.g., Nextflow, Snakemake) | Orchestrates complex, multi-step pipelines across distributed compute infrastructure, managing dependencies, failures, and restarts. |

Table 1: Comparative Data Transfer Performance for 1 TB Dataset

| Tool/Protocol | Avg. Transfer Time | Avg. Bandwidth Utilized | Key Requirement |
|---|---|---|---|
| Standard `scp` | ~8.5 hours | ~300 Mbps | Basic SSH access |
| `rsync` (single) | ~8 hours | ~320 Mbps | Basic SSH access |
| `aspera` | ~1.2 hours | ~1.8 Gbps | Licensed server/client |
| `bbcp` | ~1.5 hours | ~1.5 Gbps | Open ports on firewall |
| `tar` + `scp` | ~7 hours | ~350 Mbps | Reduces file count overhead |
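For planning, a lower bound on transfer time follows directly from dataset size and sustained bandwidth. The helper below is a simple sketch that ignores protocol, encryption, and filesystem overhead, so real transfers will take longer than it predicts; `transfer_hours` is a hypothetical name.

```python
def transfer_hours(size_tb: float, bandwidth_mbps: float) -> float:
    """Ideal transfer time in hours for size_tb decimal terabytes at bandwidth_mbps."""
    if bandwidth_mbps <= 0:
        raise ValueError("bandwidth must be positive")
    bits = size_tb * 1e12 * 8
    seconds = bits / (bandwidth_mbps * 1e6)
    return seconds / 3600


# Ideal times for a 1 TB dataset at two of the bandwidths listed in the table.
ideal_300 = transfer_hours(1, 300)
ideal_1800 = transfer_hours(1, 1800)
print(f"300 Mbps: {ideal_300:.1f} h, 1.8 Gbps: {ideal_1800:.1f} h")
```

At 300 Mbps the ideal time for 1 TB is roughly 7.4 hours, and at 1.8 Gbps it is roughly 1.2 hours; any gap between these bounds and observed times points to per-file or protocol overhead worth investigating.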

Table 2: Computational Resource Profile for Common NGS Tasks (per sample)

| Analysis Step | Typical Tool | Recommended CPUs | Peak Memory (GB) | Local Storage I/O Need |

|---|---|---|---|---|

| Alignment (DNA-seq) | BWA-MEM | 8 | 8-16 | High (Read/Ref I/O) |

| Alignment (RNA-seq) | STAR | 8-12 | 30-40 | Very High (Genome Load) |

| Variant Calling | GATK HaplotypeCaller | 4-8 | 12-20 | Medium |

| RNA-seq Quantification | Salmon | 8-16 | 4-8 | Low (in-memory index) |

| Differential Expression | DESeq2 (R) | 1-4 | 8-12 (scales with samples) | Low |

Workflow & Relationship Diagrams

Diagram 1: NGS Data Analysis Pipeline Bottlenecks

Diagram 2: Optimized HPC Storage Architecture for NGS

Technical Support Center

Troubleshooting Guides & FAQs

Q1: We are integrating bulk RNA-seq and proteomics data. Our joint pathway analysis shows inconsistent signaling pathways between the transcript and protein levels. What are the primary technical and biological causes, and how can we validate them?

A: This is a common multi-omics integration challenge. Technical causes include differences in platform sensitivity, dynamic range, and coverage between the two assays. Biologically, it often reflects genuine regulatory decoupling, such as post-transcriptional regulation and differing protein turnover rates.

Validation Protocol:

- Temporal Alignment: Design a time-series experiment to capture the lag between mRNA expression and protein abundance.

- Targeted Assay: For key discrepant pathways, perform Western Blot (protein) and RT-qPCR (mRNA) on the same samples.

- Phospho-Proteomics: If pathway activity is suspected to be driven by phosphorylation, not total protein abundance, run a targeted phospho-proteomic assay.

- Data Re-analysis: Apply tools like Mateo or Multi-Omics Factor Analysis (MOFA+) which model technical variance and shared/unique factors across omics layers.

Q2: During long-read (PacBio or Oxford Nanopore) transcriptome assembly, we get excessively high rates of novel isoforms. How do we distinguish real biological novelty from technical artifacts like reverse transcriptase errors or chimeric reads?

A: High novel isoform rates require stringent filtration.

Validation Protocol:

- Sequencing Replicates: Require isoform detection in at least 2 biological replicates.

- Multi-Platform Support: Use short-read RNA-seq data (Illumina) to validate splice junctions of the novel isoform with tools like SQANTI3.

- ORF & Protein Conservation: Check if the novel isoform contains a credible Open Reading Frame (ORF) and if the predicted protein sequence has conserved domains (using Pfam).

- Experimental Validation: Design primers spanning novel exon-exon junctions for PCR and Sanger sequencing.
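The replicate requirement in the first step is easy to apply computationally. Below is a minimal sketch, assuming each replicate's isoform calls are available as a collection of identifiers; the function name, replicate labels, and isoform IDs are all hypothetical.

```python
from collections import Counter


def isoforms_in_min_replicates(replicate_calls: dict, min_replicates: int = 2) -> set:
    """Keep isoforms detected in at least min_replicates biological replicates."""
    counts = Counter()
    for isoforms in replicate_calls.values():
        counts.update(set(isoforms))  # count each isoform once per replicate
    return {iso for iso, n in counts.items() if n >= min_replicates}


# Hypothetical calls from three biological replicates.
calls = {
    "rep1": ["iso_A", "iso_B", "iso_C"],
    "rep2": ["iso_A", "iso_C", "iso_C"],
    "rep3": ["iso_A", "iso_D"],
}
kept = isoforms_in_min_replicates(calls, min_replicates=2)
print(sorted(kept))
```

Isoforms that pass this filter would then move on to the junction, ORF, and experimental checks listed above.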

Q3: Our spatial transcriptomics (10x Visium) data shows weak gene expression signals and low correlation with matched bulk RNA-seq from the same tissue. What steps can improve signal-to-noise?

A: This often stems from suboptimal tissue preparation and RNA quality.

Troubleshooting Checklist:

- Tissue Optimization: Ensure optimal OCT embedding or fresh freezing without RNase contamination. Complete H&E staining and imaging promptly, before permeabilization, to limit RNA degradation.

- Probe/Protocol Validation: For platforms like Visium, always run a positive control tissue (e.g., mouse brain) to benchmark performance.

- Permeabilization Optimization: Test different permeabilization times (as per 10x's optimization slide) to balance RNA capture efficiency and spot spatial resolution.

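- Background Correction: Filter out spots that fall outside the tissue using the histology image, then apply computational tools such as SpatialDE (spatially variable gene detection) or SPOTlight (spot deconvolution) to separate structured signal from noise.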

Q4: When aligning long reads to a reference genome for structural variant (SV) calling, compute time and memory usage are prohibitive. What are the key alignment parameters to optimize, and what hardware is recommended?

A: Long-read aligners (minimap2, ngmlr) are resource-intensive. Key parameters:

| Parameter (minimap2) | Typical Setting | Optimization for Speed/Memory | Rationale |
|---|---|---|---|
| Preset | `-ax map-ont` / `-ax map-pb` | Do not change. | Optimized preset for accuracy. |
| Secondary Alignments | `-N` | Use `-N 0` to reduce output. | Limits number of secondary alignments reported. |
| Threads (`-t`) | e.g., `-t 32` | Maximize based on CPU cores. | Parallelizes alignment. |
| Memory (implicit) | High | Use `--split-prefix` for parallel jobs. | For ultra-large genomes, split the reference. |

Hardware Recommendations:

- CPU: High core count (≥ 32 cores).

- RAM: Minimum 1GB RAM per 1M long reads. For a 50M read dataset, plan for ≥ 64GB RAM.

- Storage: Use high-speed NVMe drives for intermediate files.

Key Experimental Protocols Cited

Protocol 1: Cross-Platform Validation of Novel Long-Read Isoforms

Objective: To experimentally validate a novel transcript isoform predicted by long-read sequencing.

Steps:

- Primer Design: Design forward and reverse primers that specifically span the novel splice junction unique to the candidate isoform.

- RT-PCR: Perform reverse transcription (RT) on total RNA using a gene-specific reverse primer or oligo-dT, followed by PCR with the junction-spanning primers.

- Gel Electrophoresis: Run the PCR product on an agarose gel. A single band of the expected size is a positive indicator.

- Sanger Sequencing: Purify the gel band and perform Sanger sequencing with the same primers to confirm the exact nucleotide sequence across the novel junction.

- Quantification (Optional): Use digital PCR (dPCR) or quantitative RT-PCR with TaqMan probes designed for the novel junction to quantify isoform abundance across samples.

Protocol 2: Spatial Transcriptomics Tissue Optimization

Objective: To optimize tissue preparation for 10x Visium to maximize RNA capture efficiency.

Steps:

- Fresh Freezing: Embed tissue in Optimal Cutting Temperature (OCT) compound on a dry ice/ethanol bath or liquid nitrogen. Store at -80°C.

- Cryosectioning: Cut tissue sections at the recommended thickness (10µm for Visium). Use a cryostat blade cleaned with RNase decontaminant.

- Mounting: Thaw-mount the section directly onto the center of the Visium slide's capture area. Immediately refreeze the slide on dry ice.

- Fixation & Staining: Fix the tissue in pre-chilled methanol on the slide for 30 minutes at -20°C. Proceed to H&E or immunofluorescence staining using RNase-free reagents.

- Imaging: Image the stained tissue at high resolution (20x objective recommended) using a slide scanner.

- Permeabilization & cDNA Synthesis: Follow the Visium protocol, but include the Permeabilization Optimization Slide. Compare different permeabilization times (e.g., 12, 18, 24 minutes) to determine the optimal condition for your tissue type.

Visualizations

Diagram 1: Multi-Omics Data Integration & Validation Workflow

Diagram 2: Long-Read Isoform Discovery & Filtration Logic

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Multi-Omics/Spatial Context |

|---|---|

| RNase Inhibitor (e.g., Recombinant RNasin) | Critical for long-read and spatial protocols. Protects intact RNA during tissue handling, cDNA synthesis, and library preparation. |

| OCT Compound (Optimal Cutting Temperature) | For spatial transcriptomics. Embeds tissue for cryosectioning while preserving RNA integrity. Must be RNase-free. |

| Methanol (Molecular Biology Grade), -20°C | Preferred fixative for spatial transcriptomics (vs. formalin). Preserves tissue morphology while maintaining RNA accessibility for capture. |

| High-Sensitivity DNA/RNA Assay Kits (e.g., Qubit, Bioanalyzer) | Accurate quantification and quality assessment of low-input and degraded material common in micro-dissected or spatially captured samples. |

| Multiplex PCR Master Mix (e.g., for dPCR) | Enables precise, absolute quantification of specific novel isoforms or low-abundance targets identified in multi-omics studies. |

| Phosphatase & Protease Inhibitor Cocktails | Essential for proteomics and phospho-proteomics sample preparation to preserve the native protein and phosphorylation state. |

| Ultra-Pure BSA (Bovine Serum Albumin) | Used as a blocking agent and carrier protein in library preparations for low-input samples to reduce surface adsorption and improve yields. |

Technical Support Center

Troubleshooting Guides & FAQs

FAQ 1: Findability Issues

Q: My NGS dataset has been deposited in a public repository, but other researchers report they cannot find it using standard keyword searches. What might be the problem?

A: This is a common findability (F) issue. Ensure you have used a persistent identifier (e.g., a DOI or accession number). Check that your metadata includes rich, structured keywords aligned with community standards (e.g., EDAM ontology for bioscientific data). Incomplete or non-standard sample attribute descriptions are a primary cause of failed searches.

FAQ 2: Accessibility & Download Errors

Q: I am trying to download a controlled-access dataset from dbGaP via the command line using prefetch from the SRA Toolkit, but I keep getting "accession not found" or permission errors. How do I resolve this?

A: This is an accessibility (A) problem. Follow this protocol:

- Authorization: Ensure you have been granted access through dbGaP and your eRA Commons account is linked.

- Configure Toolkit: Run `vdb-config --interactive`. Navigate to the "Remote Access" tab and set "NCBI Data Access" to "Use Remote Access". Enter your credentials.

- Use the Correct Tool: For controlled-access data, always use the dbGaP-provided download script or `prefetch` with the project-specific key file (`.ngc`). The command should resemble: `prefetch --ngc <path_to_.ngc_file> SRR1234567`.

- Cache Issues: Clear the local SRA cache (the toolkit ships a `cache-mgr` utility for this) and retry.

FAQ 3: Interoperability in Metadata

Q: My lab's metadata spreadsheets are constantly rejected by repository submission portals due to "formatting errors." How can we create interoperable metadata efficiently?

A: This is an interoperability (I) challenge. Adopt a metadata standard and template from the start.

- Protocol: Use established standards like the MINSEQE for sequencing experiments or ISA-Tab format. Utilize tools like ISAcreator to structure your metadata into Investigation, Study, and Assay sheets. This ensures machine-actionability and prevents formatting mismatches during submission.

- Validation: Many repositories provide validation tools (e.g., ENA's metadata validation suite). Run your files through these before submission.

FAQ 4: Reusability & Missing Information

Q: A published paper cites data "available upon request," but the authors are not responding. How can I ensure my data is reusable without such barriers?

A: This defeats reusability (R). Proactively provide comprehensive documentation.

- Solution: Attach a data descriptor or README file with your deposition. This file must include: detailed experimental protocols (wet-lab and computational), software versions with exact parameters/command lines, definitions of all column headers in data files, and a clear license (e.g., CC0, CC-BY). Depositing in a trusted, public repository with a clear usage license is mandatory, not "upon request."

FAQ 5: File Format for Long-Term Reuse

Q: We store aligned sequencing data in BAM files. Is this the most interoperable and reusable format for long-term archival?

A: For analysis, BAM is standard. For long-term archival and maximum interoperability, consider the CRAM format. It offers significant compression (often ~50% smaller than BAM) while maintaining lossless encoding of all essential data, provided a stable reference genome is specified. This enhances accessibility by reducing storage and transfer burdens.

Table 1: Comparison of Common Genomic Data Repository FAIR Compliance (Generalized Metrics)

| Repository | Persistent Identifier | Standard Metadata | Open Access | Standard File Formats | Citation Required |

|---|---|---|---|---|---|

| ENA / SRA | Accession Number (ERS/DRX) | MINSEQE, ENA checklist | Mixed (Controlled & Open) | FASTQ, BAM, CRAM, VCF | Yes |

| dbGaP | Accession Number (phs) | dbGaP schema | Controlled (Authorized Access) | Varies by submitter | Yes |

| Zenodo | Digital Object Identifier (DOI) | Dublin Core, Custom Fields | Open (Embargo optional) | Any | Yes (via DOI) |

| EGA | Accession Number (EGAD) | Custom Standards | Controlled (DAC Managed) | Encrypted formats common | Yes |

Table 2: Impact of FAIR Implementation on Data Retrieval Efficiency (Hypothetical Study)

| Scenario | Average Time to Locate Dataset | Success Rate of Automated Processing | User-Reported Clarity for Reuse |

|---|---|---|---|

| Non-FAIR Adherent | 2-4 hours | < 30% | Low (Extensive contact needed) |

| Basic FAIR (PID, License) | 30 minutes | ~50% | Moderate |

| Full FAIR (Rich Metadata, Standards) | < 5 minutes | > 90% | High (Fully documented) |

Experimental Protocols

Protocol 1: Submitting RNA-Seq Data to ENA/SRA with FAIR-Compliant Metadata

- Prepare Sequence Files: Demultiplex raw reads. Store as compressed FASTQ files (`.fq.gz` or `.fastq.gz`). Verify read quality with FastQC.

- Generate Metadata Spreadsheet: Download the latest ENA metadata template. Populate fields for:

  - Study (Title, Description, Abstract).

  - Sample (Sample alias, Title, Scientific Name, Tissue, Cell Type, using NCBI Taxonomy ID).

  - Experiment (Library Strategy: RNA-Seq, Layout: PAIRED, Platform: ILLUMINA).

  - Run (linking to FASTQ file names).

- Validate and Submit: Use the ENA Webin CLI validation tool (`webin-cli -validate`) on your metadata and files. Correct any errors. Submit via the Webin portal or CLI.

- Post-Submission: Upon receipt of accession numbers (ERX..., ERS...), include them in your manuscript's data availability statement.

Protocol 2: Creating a Reproducible Computational Workflow for NGS Analysis

- Containerization: Create a Docker or Singularity container image with all necessary software (e.g., Trim Galore!, HISAT2, featureCounts, DESeq2) at specific versions.

- Workflow Scripting: Encode the analysis pipeline using a workflow management system like Nextflow or Snakemake. This script should define rules/processes for each step (quality control, alignment, quantification, differential expression).

- Parameterization: Use a configuration file (e.g., `nextflow.config`, `config.yaml`) to define all input file paths, reference genomes, and critical software parameters. Do not hard-code these values.

- Execution and Logging: Run the workflow, ensuring it generates a detailed log file and a `results/` folder with all output, including the final count matrix and differential expression table.

Mandatory Visualizations

Diagram 1: FAIR Data Submission and Retrieval Workflow

Diagram 2: Core FAIR Principles Logical Relationships

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Toolkit for FAIR NGS Data Management

| Item | Category | Function & Relevance to FAIR |

|---|---|---|

| ISAcreator Software | Metadata Tool | Assists in creating structured, machine-readable metadata in ISA-Tab format, directly supporting Interoperability (I) and Reusability (R). |

| SRA Toolkit / ENA CLI | Data Transfer Tool | Standardized command-line utilities for uploading to and downloading from major sequence repositories, ensuring Accessible (A) data flows. |

| Docker / Singularity | Containerization | Packages complete software environments, enabling reproducible re-analysis and fulfilling Reusable (R) computational workflows. |

| Nextflow / Snakemake | Workflow Manager | Encodes complex NGS analysis pipelines, capturing all steps and parameters critical for Reusability (R) and reproducibility. |

| RO-Crate Specification | Packaging Standard | A method for packaging research data with their metadata and context into a single, FAIR distribution format, enhancing all four principles. |

| FAIRsharing.org Registry | Standards Resource | A curated directory of metadata standards, databases, and policies, guiding researchers to community Interoperability (I) standards. |

Building Robust Pipelines: Best Practices for Alignment, Variant Calling, and Interpretation

Troubleshooting Guides & FAQs

Q1: During alignment of a large human whole-genome sequencing dataset, my aligner (e.g., BWA-MEM2, Bowtie2) is extremely slow and consumes more than 500GB of RAM. What are the primary strategies to improve efficiency?

A: This is a core challenge in large-scale NGS analysis. The primary strategies involve a combination of algorithmic optimization, hardware utilization, and workflow design.

- Algorithmic/Software: Use ultra-fast, memory-efficient aligners designed for scale, such as Minimap2 (for long reads/spliced alignment) or SNAP. For traditional aligners, ensure you are using the latest version (e.g., BWA-MEM2 offers AVX-512 optimizations over BWA-MEM). Always pre-index the reference genome.

- Computational: Implement parallel processing. Split large FASTQ files into chunks and align in parallel on a high-performance computing (HPC) cluster or multi-core server. Use tools like `GNU parallel` or workflow managers (Nextflow, Snakemake).

- Workflow: Consider a two-pass alignment strategy for variant calling. First, perform a fast alignment to generate candidate regions, then realign those regions with higher precision.

- Memory: For BWA, the `-K` flag sets the number of input bases processed per batch, which bounds the memory held for each batch of reads. For Bowtie2, the `--offrate` parameter can reduce memory footprint at the cost of speed.

Q2: I am getting a very low overall alignment rate (<50%) for my RNA-Seq data against the human reference genome. What could be the causes and solutions?

A: Low alignment rates in RNA-Seq often stem from reference mismatch or data quality.

- Aligner Choice: Ensure you are using a splice-aware aligner (STAR, HISAT2) or providing a splice junction database (SJDB). A standard DNA aligner will fail to map reads spanning introns.

- Reference Mismatch: Verify you are using the correct genome assembly (e.g., GRCh38 vs. GRCh37) and that it matches the source of your samples.

- Adapter Contamination: Screen for and trim sequencing adapters from your reads using tools like fastp, Trim Galore!, or Cutadapt before alignment.

- Sequence Quality: Check the raw read quality with FastQC. Apply quality trimming if Phred scores are low.

- Biological Cause: High rates of non-alignment could indicate sample contamination or the presence of novel sequences (e.g., pathogens, fusion genes) not in the reference.

Q3: When running a splice-aware aligner like STAR, the job fails with an error: "EXITING because of FATAL ERROR: could not create output file." What does this mean?

A: This is typically a file system permission or disk space issue. STAR writes large temporary files and final output.

- Solution 1: Check the available disk space on the output directory (`df -h`) and the parent drive. STAR can require tens of GB of temporary space for large genomes.

- Solution 2: Verify you have write permissions in the output directory.

- Solution 3: Explicitly set the temporary directory using STAR's `--outTmpDir` parameter to a location with sufficient space and permissions (e.g., a local scratch disk on an HPC node).
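The first two checks can be automated before a job is submitted. The sketch below uses only the Python standard library; the function name `check_output_dir` and the free-space threshold are illustrative assumptions, not STAR requirements.

```python
import os
import shutil


def check_output_dir(path, min_free_gb):
    """Report whether a directory is writable and has at least min_free_gb free."""
    usage = shutil.disk_usage(path)          # total, used, free in bytes
    free_gb = usage.free / 1024 ** 3
    return {
        # Write and traverse permission are both needed to create files.
        "writable": os.access(path, os.W_OK | os.X_OK),
        "free_gb": free_gb,
        "enough_space": free_gb >= min_free_gb,
    }
```

Running this against both the output directory and the intended temporary directory before launching the aligner catches the two most common causes of the error without waiting for the job to fail.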

Q4: How do I choose between BWA-MEM/BWA-MEM2 and Bowtie2 for my DNA re-sequencing project?

A: Both aligners index the reference with the Burrows-Wheeler Transform (FM-index); Bowtie2 is not hash-table-based. The choice involves trade-offs between speed, memory, and sensitivity.

| Feature | BWA-MEM/BWA-MEM2 | Bowtie2 |
|---|---|---|
| Indexing Speed | Slower | Faster |
| Index Size | Smaller (~5.3GB for human) | Larger (~7.5GB for human) |
| Alignment Speed | Generally faster for ungapped alignment; BWA-MEM2 is highly optimized. | Very fast, especially for shorter reads (<50bp). |
| Memory Usage | Lower during alignment. | Moderate, depends on index options. |
| Sensitivity | High for gapped alignment (indels) and longer reads. | Configurable via `--sensitive` presets; may require tuning for optimal indel detection. |
| Best For | Whole-genome sequencing (WGS), long-read mapping, variant discovery. | ChIP-seq, ATAC-seq, metagenomics, where speed is critical. |

Q5: What are the critical steps to validate the success and accuracy of a large-scale alignment run before proceeding to downstream analysis (e.g., variant calling, differential expression)?

A: Implement a robust QC pipeline post-alignment.

- Alignment Metrics: Use tools like `samtools flagstat` and `samtools stats` to calculate key metrics. Summarize these in a table.

| Metric | Tool/Command | Target Range (Human WGS Example) |
|---|---|---|
| Total Reads | `samtools flagstat` | N/A |
| Overall Alignment Rate | `samtools flagstat` | >95% (DNA), >70-90% (RNA) |
| Properly Paired Rate | `samtools flagstat` | >90% (for paired-end) |
| Duplication Rate | `picard MarkDuplicates` | Varies; <20% typical for WGS. |
| Insert Size Mean/SD | `picard CollectInsertSizeMetrics` | Check matches library prep. |
| On-Target Rate (Targeted) | `picard CollectHsMetrics` | >60% (exome capture) |

- Visual Inspection: Use a genome browser (IGV) to visually inspect alignments in a few genomic regions for proper pairing, splicing, and absence of systematic errors.

- Compare to Baseline: If available, compare metrics to a known good sample processed with the same pipeline.
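As a rough illustration of the metrics step, the sketch below parses the text that `samtools flagstat` prints and derives the alignment and properly-paired rates. The parser assumes the usual `N + M label (...)` line layout; the function names are hypothetical, and any thresholds applied to the result would come from the table above.

```python
import re

# Matches lines such as "1940 + 0 mapped (97.00% : N/A)".
FLAGSTAT_LINE = re.compile(r"(\d+) \+ (\d+) (.+?)(?: \(.*)?$")


def parse_flagstat(text):
    """Return {label: QC-passed count} for each recognised flagstat line."""
    counts = {}
    for line in text.splitlines():
        match = FLAGSTAT_LINE.match(line.strip())
        if match:
            counts[match.group(3)] = int(match.group(1))
    return counts


def alignment_rates(counts):
    """Compute percentage mapped and percentage properly paired."""
    total = counts["in total"]
    paired = counts.get("paired in sequencing", 0)
    return {
        "mapped_pct": 100.0 * counts["mapped"] / total,
        "properly_paired_pct": (
            100.0 * counts["properly paired"] / paired if paired else None
        ),
    }
```

Collecting these dictionaries across samples gives a quick cohort-level table in which outliers stand out before any downstream analysis starts.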

Experimental Protocols

Protocol 1: Optimized Large-Scale DNA Read Alignment using BWA-MEM2 on an HPC Cluster

This protocol details a chunking strategy for parallel alignment of billions of short reads.

1. Software & Environment:

- BWA-MEM2 (v2.x)

- SAMtools (v1.x)

- GNU Parallel

- HPC cluster with SLURM scheduler

2. Methodology:

- A. Reference Indexing: `bwa-mem2 index GRCh38.fa`

- B. Input Preparation: Split large FASTQ files into chunks of 10-20 million reads using `split` or `seqtk`.

- C. Parallel Alignment Script (SLURM): Align each chunk as an independent task and write one coordinate-sorted BAM per chunk.

- D. Merge & Deduplicate: Merge sorted BAM chunks: `samtools merge final.bam *.bam`. Mark duplicates: `gatk MarkDuplicates -I final.bam -O final.dedup.bam -M metrics.txt`.
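The per-chunk alignment task can be illustrated by generating one command line per chunk, which a scheduler array job or GNU Parallel could then execute. This is a sketch under assumptions: the file names are placeholders, the helper `alignment_command` is hypothetical, and it is not the original SLURM script.

```python
import shlex


def alignment_command(reference, r1, r2, out_bam, threads=8):
    """Build a shell pipeline: bwa-mem2 alignment piped into samtools sort."""
    align = ["bwa-mem2", "mem", "-t", str(threads), reference, r1, r2]
    # "-" tells samtools sort to read the alignment stream from stdin.
    sort = ["samtools", "sort", "-@", str(threads), "-o", out_bam, "-"]
    quote = lambda args: " ".join(shlex.quote(a) for a in args)
    return quote(align) + " | " + quote(sort)


def commands_for_chunks(reference, chunk_pairs, threads=8):
    """chunk_pairs is a list of (r1_path, r2_path, out_bam) tuples."""
    return [
        alignment_command(reference, r1, r2, out_bam, threads)
        for r1, r2, out_bam in chunk_pairs
    ]
```

Writing the returned strings to a file, one per line, gives a task list in which each line is independent, so a failed chunk can be rerun without touching the others.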

Protocol 2: Spliced Alignment of Bulk RNA-Seq Data using STAR for Differential Expression

This protocol outlines a standard two-pass alignment for sensitive novel junction detection.

1. Software:

- STAR (v2.7.x)

- RSEM or featureCounts for quantification

2. Methodology:

- A. Generate Genome Index: `STAR --runThreadN 20 --runMode genomeGenerate --genomeDir /path/to/GRCh38_index --genomeFastaFiles GRCh38.fa --sjdbGTFfile gencode.v44.annotation.gtf --sjdbOverhang 99`

- B. First Pass Alignment (Per Sample): Align reads to generate a list of novel junctions for each sample. Because the inputs are gzip-compressed, include `--readFilesCommand zcat`: `STAR --genomeDir /path/to/GRCh38_index --readFilesIn sample_R1.fastq.gz sample_R2.fastq.gz --readFilesCommand zcat --runThreadN 12 --outSAMtype BAM Unsorted --outFileNamePrefix sample1_ --outFilterMultimapNmax 20 --alignSJoverhangMin 8`

- C. Compile Novel Junctions: Combine `SJ.out.tab` files from all samples, filtering for high-confidence junctions.

- D. Re-index Genome with New Junctions: Re-run `genomeGenerate` with the `--sjdbFileChrStartEnd` option including the combined junction file.

- E. Second Pass Alignment: Align each sample again using the new, more comprehensive index. Output can be directed to a quantifier like RSEM (`--quantMode TranscriptomeSAM`) or sorted for use with featureCounts.
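The junction-compilation step can be sketched as follows. The code assumes the standard nine-column `SJ.out.tab` layout (chromosome, intron start, intron end, strand code, motif, annotated flag, unique reads, multi-mapped reads, max overhang). The thresholds `min_unique_reads` and `min_samples` are illustrative assumptions, not values from the protocol.

```python
from collections import Counter

# STAR encodes strand as 0 (undefined), 1 (+), 2 (-).
STRAND = {"0": ".", "1": "+", "2": "-"}


def high_confidence_junctions(sj_tables, min_unique_reads=3, min_samples=2):
    """Keep unannotated junctions supported in at least min_samples samples.

    sj_tables is a list of SJ.out.tab contents, one string per sample.
    """
    support = Counter()
    for table in sj_tables:
        seen = set()
        for line in table.splitlines():
            fields = line.split("\t")
            if len(fields) < 9:
                continue
            chrom, start, end, strand, _motif, annotated, unique = fields[:7]
            if annotated == "0" and int(unique) >= min_unique_reads:
                seen.add((chrom, int(start), int(end), STRAND[strand]))
        support.update(seen)  # each sample counts once per junction
    return sorted(j for j, n in support.items() if n >= min_samples)
```

Each returned tuple can be written as a tab-separated line (chromosome, start, end, strand) to produce the combined junction file used for re-indexing.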

Visualizations

Diagram 1: Scalable Alignment Decision Workflow

Diagram 2: Two-Pass RNA-Seq Alignment Strategy

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Scalable Alignment |

|---|---|

| High-Fidelity DNA Polymerase (e.g., Q5, KAPA HiFi) | Ensures accurate library amplification with minimal bias, reducing artifacts that complicate alignment. |

| Dual-Indexed UMI Adapters | Enables precise detection and removal of PCR duplicates post-alignment, improving variant calling accuracy in ultra-deep sequencing. |

| Ribo-depletion Kits (e.g., Ribo-Zero) | For RNA-Seq, removes abundant ribosomal RNA, increasing the fraction of informative, alignable mRNA reads. |

| Exome Capture Panels (e.g., IDT xGen, Twist) | Targets specific genomic regions, drastically reducing the required sequencing depth and alignment burden for variant discovery. |

| Methylated Spike-in Controls | Helps assess alignment performance in bisulfite-seq experiments where reads are chemically modified (C->U). |

| Physical Gels or TapeStation | For accurate library fragment size selection, ensuring insert size distribution matches aligner assumptions. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: Our cohort's SNV callset has an unusually high Ti/Tv ratio (>3.0) compared to the expected ~2.1 for whole exome/genome data. What could be causing this, and how do we troubleshoot?

A: First confirm which expectation applies: ~2.0-2.1 is typical for whole-genome callsets, whereas exome callsets usually sit near 3.0, so a ratio above 3.0 is anomalous mainly for WGS. A Transition/Transversion (Ti/Tv) ratio well above expectation suggests a systematic bias towards transitions (Ti), often from sequencing artifacts or alignment errors. Purely random errors would instead pull the ratio down towards ~0.5.

- Primary Troubleshooting Steps:

- Verify Base Quality Score Recalibration (BQSR): Ensure BQSR was correctly applied during preprocessing. Rerun BQSR using a known set of variants (e.g., dbSNP) appropriate for your cohort's ancestry.

- Check for Contamination: Use tools like `VerifyBamID` to estimate cross-sample contamination. Contamination > 3% can skew metrics.

- Inspect PCR Duplicates: High duplicate rates can create artifactual consensus reads. Confirm the mark-duplicates step was executed.

- Region-Specific Analysis: Calculate Ti/Tv ratio in genomic regions with different mappability. A uniform elevation points to pipeline issues; elevation only in low-complexity regions suggests alignment problems.
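For the region-specific comparison, the ratio itself is simple to compute from reference/alternate base pairs. The sketch below is a minimal illustration; the function names are hypothetical, and non-SNV records are skipped rather than reported.

```python
PURINES = {"A", "G"}
PYRIMIDINES = {"C", "T"}
BASES = PURINES | PYRIMIDINES


def is_transition(ref, alt):
    """A transition stays within purines (A<->G) or pyrimidines (C<->T)."""
    return {ref, alt} <= PURINES or {ref, alt} <= PYRIMIDINES


def titv_ratio(snvs):
    """Compute Ti/Tv from an iterable of (ref, alt) single-base pairs."""
    ti = tv = 0
    for ref, alt in snvs:
        ref, alt = ref.upper(), alt.upper()
        if ref not in BASES or alt not in BASES or ref == alt:
            continue  # skip indels, ambiguous bases, and non-variants
        if is_transition(ref, alt):
            ti += 1
        else:
            tv += 1
    return ti / tv if tv else float("inf")
```

Calling `titv_ratio` separately on variants inside and outside low-mappability regions gives the two numbers the last troubleshooting step compares.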

Q2: When performing CNV analysis from whole-genome sequencing (WGS) data using read-depth methods, we observe excessive noise and poor concordance with validation assays. How can we improve signal-to-noise ratio?

A: This is common in samples with variable library quality or in regions with extreme GC content.

- Protocol for Optimized Read-Depth CNV Calling:

- Input: Coordinate-sorted, duplicate-marked BAM files for all cohort samples.

- GC Correction: Calculate read counts in fixed bins (e.g., 20 kb) with a tool like `GATK CollectReadCounts`. Fit a LOESS regression between bin counts and GC content, and adjust counts accordingly.

- Inter-Sample Normalization: Apply median normalization or use a reference set of samples to create a coverage profile.

- Segmentation: Use a circular binary segmentation (CBS) algorithm (e.g., in the `DNAcopy` R package) on the normalized log2 ratio profile to define copy number segments.

- Filtering: Filter segments based on:

- Number of supporting bins (min=10).

- Distance from log2 ratio of 0 (e.g., |log2 ratio| > 0.2).

- Population frequency (exclude common CNVs from DGV).
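The normalization and filtering steps can be sketched as below. This illustration covers only median normalization against a reference profile and the two numeric segment filters; it does not implement LOESS GC correction or CBS segmentation. The segment dictionary keys (`n_bins`, `mean_log2`) are hypothetical names chosen for this example.

```python
import math
from statistics import median


def log2_ratios(sample_counts, reference_counts):
    """Median-normalize both profiles, then return per-bin log2 ratios.

    Bins with a zero count in either profile yield None.
    """
    s_med = median(sample_counts)
    r_med = median(reference_counts)
    ratios = []
    for s, r in zip(sample_counts, reference_counts):
        if s <= 0 or r <= 0:
            ratios.append(None)
        else:
            ratios.append(math.log2((s / s_med) / (r / r_med)))
    return ratios


def filter_segments(segments, min_bins=10, min_abs_log2=0.2):
    """Keep segments with enough bins and a clear departure from log2 = 0."""
    return [
        seg for seg in segments
        if seg["n_bins"] >= min_bins and abs(seg["mean_log2"]) > min_abs_log2
    ]
```

The population-frequency filter would be applied afterwards by intersecting the surviving segments with a catalogue of common CNVs.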

Q3: Our structural variant (SV) calling from paired-end WGS data yields many calls that fail validation by PCR. What are the most common sources of false positives?

A: False positives often arise from mis-assembly of repetitive regions or improper handling of paired-end read signatures.

- Troubleshooting Guide:

| SV Type | Common False Positive Source | Diagnostic Check | Solution |

|---|---|---|---|

| Deletions | Incorrect insert size estimation or poor alignment in low-mappability regions. | Plot actual insert size distribution. Check alignment quality (MAPQ) around breakpoints. | Use multiple SV callers (e.g., Manta, Delly) and take consensus. Require split-read support. |

| Tandem Duplications | Polymerase slippage during PCR or optical duplicates. | Check if putative duplications are enriched in high-GC regions. Verify with read-depth signal. | Apply stricter duplicate marking. Filter SVs where both breakpoints fall in segmental duplications. |

| Inversions | Mis-oriented reads due to paralogous sequence variants. | Check for SNP density shift across breakpoint. | Require supporting evidence from both split-read and read-pair signals. |

| Translocations | Misalignment of chimeric reads from homologous regions. | Examine soft-clipped sequences for microhomology. Check if reads also map to other genomic locations. | Use long-read or linked-read data for validation in critical regions. |

Q4: How do we effectively integrate SNV, CNV, and SV calls from a 1000-sample cohort for a unified association analysis?

A: Integration requires a standardized, quality-filtered variant representation.

- Detailed Methodology for Variant Integration:

- Variant Normalization: Use `bcftools norm` on all VCFs to left-align and trim alleles, ensuring consistent representation of indels and multi-allelic variants.

- Joint Genotyping (for SNVs/Indels): For GATK-based calls, perform joint genotyping across all samples to refine allele frequencies and genotype qualities.

- Variant Quality Score Recalibration (VQSR): Apply VQSR separately for SNVs and Indels using known resources (HapMap, Omni, etc.) to choose a sensitivity vs. specificity threshold.

- CNV/SV Cohort Merging: Use a tool like `SURVIVOR` to merge calls from multiple samples/algorithms within a defined distance (e.g., 1000 bp), requiring support from multiple callers for increased precision.

- Creation of a Unified Cohort Matrix: Generate a sample x variant matrix where entries are genotypes (0/1/2) for SNVs/Indels, copy number states for CNVs, and presence/absence or genotypes for SVs.
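To make the distance-based merging idea concrete, the sketch below clusters SV calls whose breakpoints lie within a fixed distance and keeps clusters seen by several callers. It is a simplified stand-in written for illustration, not SURVIVOR's algorithm; the record keys (`chrom`, `svtype`, `start`, `end`, `caller`) are hypothetical.

```python
def merge_sv_calls(calls, max_dist=1000, min_callers=2):
    """Greedy merge of SV calls of the same type with nearby breakpoints."""
    clusters = []
    ordered = sorted(
        calls, key=lambda c: (c["chrom"], c["svtype"], c["start"], c["end"])
    )
    for call in ordered:
        for cluster in clusters:
            anchor = cluster[0]  # first call defines the cluster position
            if (anchor["chrom"] == call["chrom"]
                    and anchor["svtype"] == call["svtype"]
                    and abs(anchor["start"] - call["start"]) <= max_dist
                    and abs(anchor["end"] - call["end"]) <= max_dist):
                cluster.append(call)
                break
        else:
            clusters.append([call])
    merged = []
    for cluster in clusters:
        callers = sorted({c["caller"] for c in cluster})
        if len(callers) >= min_callers:
            anchor = cluster[0]
            merged.append({
                "chrom": anchor["chrom"], "svtype": anchor["svtype"],
                "start": anchor["start"], "end": anchor["end"],
                "callers": callers,
            })
    return merged
```

The quadratic scan is fine for a demonstration; a cohort-scale implementation would index calls by chromosome and position.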

Visualizations

Title: High-Throughput Variant Analysis Core Workflow

Title: Bioinformatics Thesis: NGS Cohort Variant Analysis Pipeline

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in High-Throughput Variant Analysis |

|---|---|

| Reference Genome (GRCh38/hg38) | Standardized coordinate system for aligning sequencing reads and calling variants. Crucial for reproducibility. |

| Curated Variant Databases (gnomAD, dbSNP, DGV) | Provide population allele frequencies used to filter common polymorphisms and prioritize rare, likely pathogenic variants. |

| Targeted Capture Probes (for Exome) | Solution-based hybridization probes (e.g., IDT xGen, Twist Bioscience) to enrich exonic regions prior to sequencing. |

| PCR-Free Library Prep Kits | Minimize duplicate reads and amplification bias, essential for accurate CNV and SV detection in WGS. |

| Molecular Barcodes (UMIs) | Unique Molecular Identifiers added during library prep to accurately identify and collapse PCR duplicates. |

| Positive Control DNA (e.g., NA12878) | Well-characterized reference sample (e.g., from Coriell Institute) for benchmarking pipeline accuracy and precision. |

| Variant Annotation Resources (ClinVar, COSMIC) | Databases linking variants to known clinical phenotypes or cancer associations for biological interpretation. |

| High-Performance Computing (HPC) Cluster | Essential infrastructure for parallel processing of large cohort data through alignment and variant calling steps. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During the alignment of WGS and RNA-seq data from the same sample, I encounter low concordance rates between genomic variants called from WGS and those from RNA-seq. What are the primary causes and solutions?

A: Low concordance is a common challenge. Primary causes include:

- Alignment Artifacts in RNA-seq: Spliced aligners may misalign reads across exon-intron boundaries, especially for novel or poorly annotated splice variants. This can lead to false positive variant calls near splice sites.

- Allelic Expression Bias: RNA-seq reflects expressed alleles. If one allele is transcriptionally silenced (e.g., via imprinting, X-inactivation), it will be absent in RNA-seq data, leading to false homozygous calls from RNA-seq.

- RNA Editing: True biological discrepancies where the RNA sequence differs from the DNA template (e.g., A-to-I editing) will appear as discordant calls.

- Technical Noise: Lower coverage in RNA-seq, sequencing errors, and biases in cDNA synthesis can affect call accuracy.

Protocol: Concordance Validation Workflow

- Filtering: Isolate variants in expressed genomic regions (FPKM > 1). Apply stringent quality filters by excluding variants that match a GATK hard-filter expression such as QD < 2.0 || FS > 60.0 || MQ < 40.0.

- Context Analysis: Categorize discordant variants by genomic context (exonic, intronic, splice site +/- 10bp). High discordance in splice regions suggests alignment issues.

- Visual Inspection: Load BAM files for discordant loci in IGV. Check for alignment patterns, strand bias, and read quality.

- Validation: Use orthogonal methods (e.g., Sanger sequencing from genomic DNA and cDNA) for a subset of high-impact discordant variants.
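The filtering and context-analysis steps of this workflow can be sketched in plain Python. The thresholds mirror the expression quoted in the protocol; the function names, the `info` dictionary layout, and the exon coordinate tuples are assumptions made for this illustration.

```python
def fails_hard_filter(info, qd_min=2.0, fs_max=60.0, mq_min=40.0):
    """True if a variant matches QD < 2.0 || FS > 60.0 || MQ < 40.0."""
    return info["QD"] < qd_min or info["FS"] > fs_max or info["MQ"] < mq_min


def classify_context(pos, exons, splice_window=10):
    """Label a position within a gene as splice_site, exonic, or intronic.

    exons is a list of (start, end) tuples for one gene; the position is
    assumed to fall inside that gene's span.
    """
    for start, end in exons:
        if abs(pos - start) <= splice_window or abs(pos - end) <= splice_window:
            return "splice_site"
    for start, end in exons:
        if start <= pos <= end:
            return "exonic"
    return "intronic"
```

Tallying discordant variants by the returned label makes it easy to see whether discordance concentrates within 10 bp of exon boundaries, which is the pattern the protocol associates with alignment issues.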

Q2: When integrating DNA methylation (e.g., from bisulfite sequencing) with gene expression data, how do I distinguish true inverse correlations (hypermethylation / low expression) from spurious associations?

A: Spurious correlations arise from confounding factors like cell type heterogeneity or batch effects.

- Solution 1: Deconvolution. Use a reference-based (e.g., CIBERSORTx) or reference-free (e.g., Factor Analysis) method to estimate cell type proportions in your bulk tissue sample. Include these proportions as covariates in your correlation model (e.g., linear regression: Expression ~ Methylation + CellType1 + ... + CellTypeN + Batch).

- Solution 2: Prioritize Functional Regions. Focus analysis on CpG sites in putative regulatory regions (promoters, enhancers) defined by chromatin accessibility (ATAC-seq) or histone marks (ChIP-seq). An association is more robust if the CpG site is within an active regulatory element for that cell type.

- Solution 3: Temporal/Longitudinal Analysis. For dynamic processes, perform paired sampling. A true regulatory relationship is supported if the change in methylation precedes the change in expression.

Protocol: Methylation-Expression Integration with Covariate Adjustment

- Data Preparation: Generate matrices of promoter-average beta values (methylation) and log2(TPM+1) values (expression) for paired samples.

- Covariate Collection: Compose a covariate matrix including batch, age, sex, and estimated cell type proportions (from tools like EpiDISH for methylation data).

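- Modeling: For each gene, fit a linear model (ordinary least squares via `lm`): `lm(gene_expression ~ promoter_methylation + ., data = covariate_matrix)`, where `covariate_matrix` also holds that gene's expression and promoter methylation columns.

- Significance: Apply FDR correction to p-values of the methylation coefficient. Report only FDR < 0.05 associations.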
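The FDR step is commonly done with the Benjamini-Hochberg procedure. The sketch below assumes that method (the protocol does not name one) and takes the per-gene p-values of the methylation coefficient as a plain list.

```python
def benjamini_hochberg(pvalues):
    """Return Benjamini-Hochberg adjusted p-values in the input order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, pvalues[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted


def significant_genes(gene_pvalues, alpha=0.05):
    """gene_pvalues maps gene name -> p-value; returns genes with FDR < alpha."""
    genes = list(gene_pvalues)
    adjusted = benjamini_hochberg([gene_pvalues[g] for g in genes])
    return [g for g, q in zip(genes, adjusted) if q < alpha]
```

Because the adjustment depends on the number of tests, it should be run once over all genes tested rather than per chromosome or per batch.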

Q3: In a multi-omics cohort study, how should I handle missing data for different omics layers (e.g., some samples have WGS and RNA-seq, but not epigenomics)?

A: The strategy depends on the analysis goal and scale of missingness.

- For Association Studies: Use pairwise complete-case analysis. While inefficient, it avoids imputation artifacts. State sample size for each analysis clearly.

- For Predictive Modeling (e.g., clinical outcome): Employ multi-omics aware imputation.

- Option A (Recommended for <30% missing): Use a multi-omics method such as MOGONET, or proprietary tools (e.g., from Amazon Omics or Google Genomics), that leverages correlations between omics layers to impute missing data.

- Option B: Perform within-omics imputation first (e.g., using missForest for methylome data), then proceed with analysis.

- Critical Step: Always perform a Missing Completely at Random (MCAR) test (e.g., Little's test) to assess bias. If data is not MCAR, results may be unreliable.

Key Performance Metrics & Benchmarks

Table 1: Typical Concordance Rates and Data Requirements for Multi-Omics Integration

| Integration Type | Typical Concordance/Accuracy Range | Minimum Recommended Coverage/Depth | Key Quality Metric |

|---|---|---|---|

| WGS vs RNA-seq (SNVs) | 70-85% (in expressed exons) | WGS: 30x; RNA-seq: 50M paired-end reads | Ti/Tv ratio ~2.1 (WGS) & ~2.8 (RNA-seq) |

| Methylation (BSseq) vs RNA-seq | Significant (FDR<0.05) inverse correlation in 5-15% of gene-promoter pairs | BSseq: 20x; RNA-seq: 30M reads | Bisulfite conversion efficiency >99% |

| ChIP-seq (H3K27ac) vs ATAC-seq | Peak overlap (Jaccard index) of 0.4-0.7 | ChIP-seq: 20M reads; ATAC-seq: 50M reads | FRiP score >1% (ChIP), TSS enrichment >5 (ATAC) |

| Multi-omics Clustering (e.g., MOFA+) | Cluster stability (Silhouette score) > 0.7 | Varies by modality; see above. | Total variance explained by factors > 30% |

Essential Methodologies

Protocol: Cross-Modality Integration Using MOFA+

This protocol integrates multiple omics matrices to identify latent factors driving variation.

- Input Data Preparation: Create a separate matrix for each omics modality (e.g., Mutation burden matrix, Transcript TPM matrix, Methylation M-value matrix) and collect them in a named list (`data_list`). Ensure rows are features and columns are matched samples.

- MOFA Object Creation: `MOFAobject <- create_mofa(data_list)`

- Model Options: `ModelOptions <- get_default_model_options(MOFAobject); ModelOptions$likelihoods <- c("poisson", "gaussian", "gaussian")` for count, continuous, and continuous data, respectively.

- Training Options: `TrainOptions <- get_default_training_options(MOFAobject); TrainOptions$convergence_mode <- "slow"; TrainOptions$seed <- 42`

- Model Training: `MOFAobject.trained <- prepare_mofa(MOFAobject, model_options = ModelOptions, training_options = TrainOptions) %>% run_mofa`

- Downstream Analysis: Use the functions `plot_variance_explained()`, `plot_factors()`, and `get_weights()` to interpret factors.

Protocol: Identifying Driver Regulatory Events (Enhancer-Gene Links)

- Define Active Enhancers: Intersect H3K27ac ChIP-seq peaks with ATAC-seq peaks outside promoter regions (e.g., +/- 2kb from TSS).

- Chromatin Interaction Data: Overlap active enhancers with Hi-C or HiChIP-derived chromatin loops. Assign enhancers to gene promoters within the same topologically associating domain (TAD).

- Correlation Analysis: For each enhancer-gene pair, calculate correlation between enhancer accessibility (ATAC-seq signal) and gene expression (RNA-seq) across samples.

- Triangulation with Methylation: Superimpose CpG methylation within the enhancer. A strong candidate driver link is supported by: a) Physical contact (Hi-C), b) Significant accessibility-expression correlation (FDR < 0.01), and c) Significant negative correlation between enhancer methylation and expression.

Visualizations

Multi-Omics Analysis Core Workflow

Epigenetic Silencing Signaling Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents & Kits for Multi-Omics Studies

| Item | Function | Example Vendor/Kit |

|---|---|---|

| Poly(A) Magnetic Beads | Isolation of polyadenylated mRNA from total RNA for RNA-seq library prep. | Thermo Fisher Dynabeads mRNA DIRECT Purification Kit |

| Tn5 Transposase | Simultaneously fragments DNA and adds sequencing adapters for ATAC-seq and other tagmentation-based assays. | Illumina Tagment DNA TDE1 Enzyme |

| Proteinase K | Essential for digesting proteins and nucleases during DNA/RNA extraction, especially from FFPE or complex tissues. | Qiagen Proteinase K |

| Bisulfite Conversion Reagent | Chemical treatment that converts unmethylated cytosine to uracil, allowing methylation detection via sequencing. | Zymo Research EZ DNA Methylation-Lightning Kit |

| Methylation-Sensitive Restriction Enzymes (e.g., HpaII) | Used in alternative methylation assays (e.g., MRE-seq) to digest unmethylated CpG sites. | NEB HpaII |

| SPRI Beads | Size-selective magnetic beads for clean-up, size selection, and PCR purification in NGS library prep. | Beckman Coulter AMPure XP Beads |

| Unique Dual Index (UDI) Oligos | Multiplexing oligonucleotides with unique dual barcodes to minimize index hopping in pooled sequencing. | Illumina IDT for Illumina UD Indexes |

| RNase Inhibitor | Protects RNA samples from degradation during cDNA synthesis and other enzymatic reactions. | Takara Bio Recombinant RNase Inhibitor |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My Next-Generation Sequencing (NGS) alignment job on a Kubernetes cluster is failing with a "Pod Evicted due to Memory Pressure" error. What are the primary troubleshooting steps?

A: This indicates the node ran low on memory and the kubelet evicted your alignment pod (e.g., running BWA-MEM or STAR), typically because the pod used more memory than it requested or the node was overcommitted.

- Check Resource Requests/Limits: Examine your pod specification. Ensure `resources.requests.memory` is set to the typical requirement and `resources.limits.memory` is set to the maximum peak usage for your dataset. For example, BWA-MEM for a 30x human WGS may need ~30GB.

- Profile Tool Memory: Run the alignment tool on a single sample with a smaller dataset locally or on a dedicated node using `/usr/bin/time -v` to measure "Maximum resident set size".

- Review Node Capacity: Use `kubectl describe nodes` to see allocatable memory and existing allocations. Consider scaling your node pool with larger memory-optimized instances.

- Spread Pods Across Nodes: If the issue is due to too many pods on one node, set memory requests close to real usage so the scheduler does not overpack nodes, and consider pod anti-affinity or topology spread constraints. Note that the Horizontal Pod Autoscaler (HPA) changes replica counts; it does not rebalance pods across nodes.
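The profiling step feeds directly into the resource request. The sketch below parses the "Maximum resident set size" line from GNU `time -v` output (reported in kbytes) and turns it into a Kubernetes memory quantity. The 20% default headroom and the function name are assumptions chosen for illustration.

```python
import math
import re

RSS_LINE = re.compile(r"Maximum resident set size \(kbytes\):\s*(\d+)")


def memory_request_from_time_output(text, headroom=1.2):
    """Convert peak RSS from `/usr/bin/time -v` output to a string like '1229Mi'."""
    match = RSS_LINE.search(text)
    if match is None:
        raise ValueError("no 'Maximum resident set size' line found")
    peak_mib = int(match.group(1)) / 1024        # kbytes -> MiB
    return f"{math.ceil(peak_mib * headroom)}Mi"
```

The returned string can be placed in the memory limit field of the pod spec. Profiling on a representative sample matters more than the exact headroom value.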

Q2: When using a preemptible cloud HPC instance (e.g., AWS Spot or Azure Low-Priority VMs) for a large-scale variant calling workflow (GATK), the instance is terminated unexpectedly, causing the entire job to fail. How can I design for fault tolerance?

A: Design for checkpointing and workflow resilience.

- Use a Workflow Manager: Implement pipelines with Nextflow, Snakemake, or Cromwell. They inherently provide crash recovery and can resume from the last successful step.

- Leverage Persistent Storage: All intermediate files must be written to persistent, network-attached storage (e.g., Amazon EFS, Google Filestore, Azure NetApp Files). Do not use local instance storage.

- Configure Checkpointing: For monolithic tools without native checkpointing, structure your workflow into smaller, atomic tasks (e.g., per-chromosome variant calling).

- Spot Interruption Notice: Configure your instances to listen for termination notices (e.g., AWS Spot Instance Termination Notice, 2 minutes warning) and trigger a graceful shutdown, saving state.
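The resume behaviour that workflow managers provide can be illustrated with a minimal marker-file scheme: each atomic task writes a `.done` file on success, and a restarted run skips tasks whose marker exists. This is a sketch of the idea only; the function name and marker naming are hypothetical, and the marker directory is assumed to live on persistent storage.

```python
from pathlib import Path


def run_with_checkpoints(tasks, work_dir, run_task):
    """Run each named task once, skipping those already marked as done.

    run_task is a callable taking the task name; if it raises, no marker
    is written and the task is retried on the next invocation.
    """
    work_dir = Path(work_dir)
    work_dir.mkdir(parents=True, exist_ok=True)
    executed = []
    for name in tasks:
        marker = work_dir / f"{name}.done"
        if marker.exists():
            continue                     # finished in an earlier run
        run_task(name)
        marker.touch()                   # record success only after completion
        executed.append(name)
    return executed
```

With per-chromosome tasks, an interruption while one chromosome is running costs only the rerun of that chromosome rather than the whole genome.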

Q3: Data transfer from my on-premises HPC cluster to the cloud object storage (e.g., S3, GCS) for analysis is the bottleneck. How can I accelerate this?

A: Optimize transfer strategy and leverage specialized services.

- Parallelize Transfer: Use tools like `rclone`, `gsutil -m` (multi-threaded), or `aws s3 sync` with parallel processing settings. Avoid single-threaded `scp`.

- Use Accelerated Services: For large, initial datasets (>10TB), consider physical data transport (AWS Snowball, Azure Data Box, Google Cloud Transfer Appliance) or a dedicated high-speed network link (e.g., AWS Direct Connect).

- Compress Before Transfer: Use efficient compression (e.g., `pbzip2` for FASTQ, `bgzip` for VCF/BCF) to reduce data volume.

- Transfer Incrementally: Only sync new or modified files. Structure your project directories clearly.

Q4: My elastic cloud cluster scales up correctly, but the parallel genomic analysis jobs (e.g., multi-sample joint genotyping) show sub-linear scaling performance. What are common causes?

A: Look for shared resource contention and I/O bottlenecks.

- Shared Filesystem Contention: When hundreds of pods read/write to the same persistent volume (PV), I/O latency spikes. Solution: Shard data where possible or use a high-throughput parallel file system (e.g., Lustre, WekaIO) designed for HPC.

- Centralized Database Bottlenecks: If your pipeline frequently queries a central genomic database (e.g., for annotation), it can become a single point of contention. Cache results locally per pod or use a replicated database instance.

- Check Final Gathering Step: The final step that merges all parallel results (e.g., gathering VCFs) is inherently serial and can be slow. Ensure this step is allocated sufficient CPU/memory.

Experimental Protocol: Benchmarking Elastic Scaling for RNA-Seq Analysis

- Objective: Measure the cost-efficiency and time savings of elastic cloud-HPC for a bursty RNA-Seq workload.

- Workflow: Bulk RNA-Seq alignment & quantification (STAR → RSEM).

- Dataset: 1000 samples (paired-end, ~50M reads each) from a public cohort (e.g., TCGA).

- Control Environment: Fixed on-premises HPC cluster (50 nodes, 32 cores/256GB RAM each).

- Test Environment: Kubernetes cluster on Google Kubernetes Engine (GKE) with Cluster Autoscaler enabled. Initial pool: 5 `n2-standard-32` nodes. Node pool can scale to 50 nodes.

- Method:

- Containerize the STAR and RSEM tools using Docker.

- Implement the pipeline in Nextflow with a Kubernetes executor.

- Configure Nextflow to process each sample as an independent pod.

- Deploy the workflow simultaneously in both environments.

- Record: Total wall-clock time, total compute cost (cloud only), and average job queue time.

- Metrics: Compute Cost per Sample, Total Analysis Duration, Resource Utilization Rate.

Quantitative Data Summary

| Metric | On-Premises HPC (Fixed 50 Nodes) | Cloud-HPC (Elastic, 5-50 Nodes) |

|---|---|---|

| Total Wall-Clock Time | 48 hours | 18 hours |

| Average Queue Time per Job | 45 minutes | < 1 minute |

| Total vCPU-Hours Consumed | ~76,800 hours | ~28,800 hours |

| Estimated Compute Cost | N/A (Capital Expenditure) | ~$1,440* |

| Peak Nodes Utilized | 50 | 47 |

| Cost per Sample | N/A | ~$1.44 |

*Estimated using list price of $0.05 per vCPU-hour for n2-standard VMs. Sustained use discounts would apply.
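The arithmetic behind the table can be reproduced directly from the stated inputs. The sketch below only recomputes the reported figures (50 nodes x 32 cores x 48 hours on-premises; 28,800 vCPU-hours at $0.05 in the cloud; 1000 samples) and introduces no new data.

```python
def vcpu_hours(nodes, vcpus_per_node, hours):
    """Total vCPU-hours for a fixed-size allocation."""
    return nodes * vcpus_per_node * hours


def cloud_cost(total_vcpu_hours, price_per_vcpu_hour):
    """Compute cost at a flat per-vCPU-hour price."""
    return total_vcpu_hours * price_per_vcpu_hour


ON_PREM_VCPU_HOURS = vcpu_hours(50, 32, 48)   # fixed 50-node cluster, 48 h
CLOUD_COST = cloud_cost(28_800, 0.05)         # reported cloud vCPU-hours
COST_PER_SAMPLE = CLOUD_COST / 1000           # 1000 samples in the cohort
AVG_CLOUD_NODES = 28_800 / (32 * 18)          # implied average node count

print(f"on-prem vCPU-hours: {ON_PREM_VCPU_HOURS}")
print(f"cloud cost: ${CLOUD_COST:.2f}, per sample: ${COST_PER_SAMPLE:.2f}")
print(f"implied average cloud nodes: {AVG_CLOUD_NODES:.1f}")
```

One caveat falls out of the numbers: 28,800 vCPU-hours over 18 hours implies an average of 50 32-vCPU nodes, slightly above the reported peak of 47, so the cloud figures are best read as approximations.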

Visualization: Cloud-Native NGS Analysis Workflow

Title: Elastic NGS Analysis on Kubernetes

The Scientist's Toolkit: Research Reagent Solutions for Cloud-Native Bioinformatics

| Item | Function & Relevance to Cloud/HPC |

|---|---|

| Workflow Manager (Nextflow/Snakemake) | Defines, executes, and monitors portable, scalable, and reproducible analysis pipelines across compute environments. Essential for leveraging elastic clouds. |

| Container Images (Docker/Singularity) | Package software, dependencies, and environment into a single, portable unit. Enables seamless execution from laptop to cloud HPC. |

| Object Storage Client (gsutil/awscli) | Command-line tools to efficiently transfer, manage, and access large genomic datasets stored in cost-effective cloud object storage (S3, GCS). |

| Kubernetes Job Spec YAML | Configuration file defining compute resources (CPU, RAM), storage mounts, and secrets for a single analysis task pod. The "recipe" for elastic execution. |

| Persistent Volume Claim (PVC) | A request for dynamic storage (e.g., fast SSD block storage) by a cloud pod. Allows temporary scratch space for high-I/O tasks like alignment. |

| Cluster Autoscaler | Automatically adjusts the number of nodes in a Kubernetes cluster based on pending pod resource requests. Key to handling bursty workloads cost-effectively. |

| Monitoring Stack (Prometheus/Grafana) | Collects and visualizes metrics on cluster resource utilization, job progress, and costs. Critical for performance tuning and budget management. |

Optimizing Performance and Cost: Practical Fixes for Slow Pipelines and Budget Overruns

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My alignment job (using BWA-MEM) is taking over 48 hours for a 30x human WGS sample. The CPU usage reported by the cluster scheduler is low (<30%). What is the likely bottleneck and how can I confirm it?

A: This pattern strongly suggests an I/O (Input/Output) bottleneck. The CPU is idle waiting for data to be read from storage or for intermediate files to be written. Low CPU utilization despite long runtimes is a classic symptom.

- Diagnostic Protocol:

- Monitor I/O Wait: On the compute node running the job, use the `iostat -x 5` command. Focus on the `%util` and `await` columns (shown as `r_await`/`w_await` in newer versions) for your storage device. A `%util` consistently above 70-80% and high `await` times indicate saturation.

- Check File System Type & Location: Are input FASTQ files on a parallel file system (e.g., Lustre, GPFS) or a slower network-attached storage (NAS)? Is the temporary directory used by downstream steps (e.g., `-T` in `samtools sort`) set to a local SSD or the slow shared storage?

- Profile with `strace`/`dtrace`: A lightweight trace can reveal if the process is blocked on read/write system calls: `strace -c -e trace=read,write bwa mem ...`.

Q2: During variant calling (GATK HaplotypeCaller), my job fails with an OutOfMemoryError or is killed by the cluster's OOM killer. How can I estimate the correct memory allocation?

A: Memory peaks during the "active region" processing phase, which varies by genome complexity and sequencing depth.

- Diagnostic & Mitigation Protocol:

- Baseline Profiling: Run HaplotypeCaller on a single, representative chromosome (e.g., chr21) using the `-L chr21` argument. Monitor peak memory usage with `/usr/bin/time -v` (look for "Maximum resident set size").

- Calculate Scaling: Memory need does not scale linearly with input size, so base the estimate on the peak measured during baseline profiling rather than on file size. As a rule of thumb, provide ~4-6 GB per CPU core as a starting point for WGS.

- Key Tuning Parameters: Use `--java-options "-Xmx8G -Xms4G"` to set JVM heap, and request somewhat more memory from the scheduler than `-Xmx`, since the JVM also uses off-heap memory. Consider using `--intervals` to process the genome in chunks, reducing per-task memory overhead.

Q3: My multi-sample joint genotyping pipeline has high CPU utilization but makes slow progress. The top command shows mostly sys (kernel) time, not user (application) time. What does this mean?

A: High system (sys) CPU time often indicates excessive context switching due to too many parallel threads competing for limited memory bandwidth or I/O resources, or excessive inter-process communication.

- Diagnostic Protocol:

- Check Thread Count: Are you using an excessively high number of threads (e.g., `-t 32` on a node with only 16 physical cores)? This can lead to thrashing.

- Profile System Calls: Use `perf` to analyze kernel activity: `perf stat -e context-switches,cpu-migrations,sched:sched_switch <your_command>`. High counts confirm the issue.

- Optimization: Limit tools to the number of physical cores available. For memory-bandwidth-bound tasks (e.g., compression, some BAM operations), using fewer than the total cores can sometimes improve performance.
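A small guard in the pipeline wrapper can prevent over-subscription. The sketch below caps the requested thread count at the CPUs allocated by SLURM (via the `SLURM_CPUS_PER_TASK` environment variable) or, failing that, at `os.cpu_count()`. Note that `os.cpu_count()` reports logical CPUs, so on hyper-threaded nodes the physical core count may be half that value. The function name is hypothetical.

```python
import os


def allowed_threads(requested, environ=os.environ):
    """Cap a requested thread count at the CPUs actually available to the job."""
    slurm_cpus = environ.get("SLURM_CPUS_PER_TASK")
    if slurm_cpus:
        available = int(slurm_cpus)          # scheduler allocation wins
    else:
        available = os.cpu_count() or 1      # logical CPUs on this host
    return max(1, min(requested, available))
```

Passing the result to the tool's `-t`/`--threads` option keeps thread counts aligned with the allocation even when the same pipeline configuration is reused across node types.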

Q4: My pipeline stage (e.g., MarkDuplicates) runs fast on a small test dataset but slows dramatically on the full dataset. CPU and memory metrics seem fine. What could be wrong?

A: This is often a sign of inefficient algorithmic scaling or hidden I/O on larger data structures.

- Diagnostic Protocol:

- Algorithm Complexity: Understand the tool's scaling behavior. MarkDuplicates has an O(n log n) sorting step, which becomes disproportionately expensive as n (read count) grows.

- Disk I/O for Sorting: Large sorts can spill to temporary disk. Use iostat during the slow phase to check for writes to a temporary directory. If the temp directory is on slow storage, it becomes a bottleneck.

- Solution: Allocate more memory to the sorting process (e.g., -Xmx in Picard) to minimize disk spilling, and ensure the JVM temp directory (-Djava.io.tmpdir) points to fast local storage.
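To separate the two explanations above, it helps to estimate how much slowdown the O(n log n) sort alone would predict. This is a back-of-the-envelope sketch; the read counts are hypothetical and the model ignores constant factors and I/O.

```python
import math


def nlogn_cost_ratio(n_small: int, n_large: int) -> float:
    """Ratio of n*log(n) work between a large and a small input."""
    return (n_large * math.log2(n_large)) / (n_small * math.log2(n_small))


# Hypothetical sizes: a 1-million-read test set vs. a 1-billion-read full run.
test_reads = 1_000_000
full_reads = 1_000_000_000

ratio = nlogn_cost_ratio(test_reads, full_reads)
linear = full_reads / test_reads
print(f"linear scaling would predict {linear:.0f}x the runtime")
print(f"n log n scaling predicts about {ratio:.0f}x the runtime")
```

If the observed slowdown is far larger than this estimate, the sort itself is unlikely to be the whole story, and spilling to a slow temporary directory becomes the prime suspect.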

Quantitative Bottleneck Analysis Table

| Bottleneck Type | Primary Symptom | Key Diagnostic Tool / Metric | Common Culprit in NGS Pipelines | Potential Mitigation |

|---|---|---|---|---|

| I/O | Low CPU%, long wall time, high await in iostat | iostat -x, iotop, strace -e trace=file | Reading/writing FASTQ/BAM from/to slow network storage; many small files. | Use local SSD for temp files; stage data to fast parallel file system; merge small files. |

| Memory | Job fails with OutOfMemoryError or is OOM-killed. | /usr/bin/time -v, cluster job logs (OOM kill flag). | GATK HaplotypeCaller, large-reference aligners, high-depth regions. | Increase JVM heap (-Xmx); split workload by genomic intervals; use more, smaller nodes. |

| Compute (CPU) | High user CPU%, but progress is slow per core. | top (user% vs sys%), perf stat (instructions per cycle). | Complex variant calling, de novo assembly, high-parameter model training. | Optimize thread count; use optimized builds (e.g., samtools/htslib compiled with libdeflate); leverage accelerators (GPU). |

| Compute (Context Switches) | High sys CPU%, low overall throughput. | perf stat -e context-switches, pidstat -w. | Over-subscription of threads (> physical cores), memory thrashing. | Reduce -t/--threads to match physical cores; increase memory-per-core ratio. |
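The symptom column of the table can be expressed as a simple first-pass triage rule. The sketch below is one possible encoding; the StageMetrics fields and all percentage thresholds are assumptions chosen for illustration and should be tuned to your own cluster.

```python
from dataclasses import dataclass


@dataclass
class StageMetrics:
    user_cpu_pct: float   # average user CPU% during the stage
    sys_cpu_pct: float    # average system (kernel) CPU%
    iowait_pct: float     # average CPU time spent waiting on I/O
    oom_killed: bool = False


def classify_bottleneck(m: StageMetrics, busy_pct: float = 60.0,
                        idle_pct: float = 30.0, iowait_high_pct: float = 20.0) -> str:
    """Map coarse metrics to a bottleneck type from the table (thresholds assumed)."""
    if m.oom_killed:
        return "Memory"
    if m.sys_cpu_pct >= m.user_cpu_pct and m.sys_cpu_pct >= idle_pct:
        return "Compute (Context Switches)"
    if m.user_cpu_pct + m.sys_cpu_pct < idle_pct and m.iowait_pct >= iowait_high_pct:
        return "I/O"
    if m.user_cpu_pct >= busy_pct:
        return "Compute (CPU)"
    return "Unclear"


print(classify_bottleneck(StageMetrics(user_cpu_pct=10, sys_cpu_pct=5, iowait_pct=40)))
print(classify_bottleneck(StageMetrics(user_cpu_pct=20, sys_cpu_pct=70, iowait_pct=2)))
```

A result of "Unclear" is a prompt to collect finer-grained data with the diagnostic tools in the third column rather than a diagnosis in itself.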

Experimental Protocol: Integrated Pipeline Profiling

Objective: To systematically identify compute, memory, and I/O bottlenecks in a standard NGS variant calling workflow.

Workflow: FASTQ → Alignment (BWA-MEM) → Sort & MarkDuplicates (samtools, Picard) → Base Recalibration & Variant Calling (GATK).

Protocol:

- Instrumentation: Run the pipeline on a representative chromosome (e.g., chr21) using a controlled environment.

- Data Collection:

- Compute: Use perf stat -d to capture CPU cycles, instructions, cache misses, and branch misses for each major command.

- Memory: Use /usr/bin/time -v to record maximum resident set size (RSS) and minor/major page faults.

- I/O: Use iostat -xmtz 5 in a background process, logging data for the relevant storage volume during the entire run.

- Analysis: Correlate low CPU utilization phases with high I/O wait times. Identify stages where memory RSS peaks. Correlate high system time with thread count and context switch metrics.

- Iteration: Apply mitigations (e.g., change temp directory, adjust threads/memory) and repeat profiling to measure improvement.
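When perf or GNU time is not available on a node, a rough per-stage profile can still be collected from Python. The sketch below wraps a command and reports wall time, user/system CPU time, and peak RSS using the standard resource module (Unix only); profile_command is a hypothetical helper, and the trivial command at the end stands in for a real pipeline stage.

```python
import resource
import subprocess
import sys
import time


def profile_command(argv: list) -> dict:
    """Run a command and return coarse CPU, memory, and wall-time figures."""
    before = resource.getrusage(resource.RUSAGE_CHILDREN)
    start = time.perf_counter()
    completed = subprocess.run(argv, check=False)
    wall = time.perf_counter() - start
    after = resource.getrusage(resource.RUSAGE_CHILDREN)
    return {
        "returncode": completed.returncode,
        "wall_seconds": wall,
        "user_seconds": after.ru_utime - before.ru_utime,
        "sys_seconds": after.ru_stime - before.ru_stime,
        # Largest RSS among all children waited for so far
        # (kilobytes on Linux, bytes on macOS).
        "max_rss": after.ru_maxrss,
    }


# Stand-in for a real stage such as an alignment or MarkDuplicates command.
report = profile_command([sys.executable, "-c", "pass"])
for key, value in report.items():
    print(f"{key}: {value}")
```

A stage whose wall time is much larger than user plus system time is spending most of its time waiting, which is the signature to correlate with the iostat log.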

Workflow Diagram: NGS Bottleneck Diagnosis Decision Tree

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item / Tool | Category | Function in NGS Profiling |

|---|---|---|

| perf (Linux) | System Profiler | Low-overhead performance counter tool. Measures CPU cycles, cache misses, instructions per cycle (IPC), and system calls to pinpoint compute inefficiencies. |

| iostat / iotop | I/O Monitor | Reports storage device utilization (%util), wait times (await), and throughput. Identifies I/O saturation and slow storage links. |

| time -v (GNU time) | Resource Monitor | Provides detailed runtime data including maximum memory footprint (RSS), page faults, and context switches. Critical for memory sizing. |

| strace / dtrace | System Call Tracer | Traces file, network, and process system calls. Reveals if an application is blocked on specific I/O operations. |

| htop / pidstat | Process Viewer | Interactive view of CPU, memory, and thread usage per process. Helps identify runaway processes or thread imbalances. |

| Node-Local SSD Storage | Hardware | Provides high-throughput, low-latency storage for temporary files, preventing I/O bottlenecks from slowing shared network storage. |

| Java Virtual Machine (JVM) | Runtime Environment | Used by many tools (GATK, Picard). Requires careful tuning of heap size (-Xmx, -Xms) to balance memory use and garbage collection overhead. |

| Scheduler Profiling (Slurm, LSF) | Cluster Manager | Job scheduler accounting data (CPU efficiency, memory usage) provides historical trends for pipeline optimization at scale. |

Troubleshooting Guides & FAQs

Q1: Our Next-Generation Sequencing (NGS) alignment pipeline (using BWA-MEM) is taking too long and exceeding our compute budget on the cloud. What are the most cost-effective instance types for this CPU-intensive workload?

A: For CPU-bound NGS tasks like read alignment and variant calling, the optimal choice balances vCPU count, memory ratio, and hourly cost. General Purpose (M) or Compute Optimized (C) instances are typically best. Avoid memory-optimized families unless your specific tool (e.g., some genome assemblers) requires exceptionally high RAM. Consider the following comparison, which uses AWS instance names (cost figures are illustrative; check the provider's current pricing):

| Instance Family | Example Type | vCPUs | Memory (GiB) | Relative Cost per Hour (Indexed) | Best For |

|---|---|---|---|---|---|

| General Purpose | m5.xlarge | 4 | 16 | 1.00 | Balanced BWA/GATK workflows, moderate RAM needs |

| Compute Optimized | c5a.2xlarge | 8 | 16 | 1.15 | High-throughput alignment, CPU-heavy preprocessing |

| Memory Optimized | r6i.xlarge | 4 | 32 | 1.40 | De novo assembly, large-reference alignment |

| Spot Instance (Compute) | c5a.2xlarge (Spot) | 8 | 16 | ~0.35 | Fault-tolerant, interruptible batch alignment jobs |

Protocol for Selection Experiment:

- Identify Benchmark: Select a representative subset of your data (e.g., 10 million read pairs).

- Instance Testing: Run your alignment pipeline on 2-3 candidate instance types (e.g., m5.xlarge, c5a.2xlarge).

- Metric Collection: Record wall-clock time to completion and total compute cost (instance price * hours).

- Analysis: Calculate cost per genome/equivalent. The instance with the lowest cost per unit processed, while meeting your time constraints, is optimal.
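The analysis step of this protocol reduces to a small calculation. The sketch below assumes each benchmark run has been recorded with its hourly price, wall-clock hours, and the number of units processed; the candidate names, prices, and timings are invented for illustration and are not real cloud prices.

```python
from dataclasses import dataclass


@dataclass
class BenchmarkRun:
    instance_type: str
    price_per_hour: float   # hypothetical price in your billing currency
    wall_hours: float       # measured wall-clock time to completion
    units_processed: float  # e.g., genomes or benchmark subsets

    @property
    def cost_per_unit(self) -> float:
        return self.price_per_hour * self.wall_hours / self.units_processed


def pick_instance(runs: list, max_hours: float) -> BenchmarkRun:
    """Cheapest run per unit among those that meet the time constraint."""
    eligible = [r for r in runs if r.wall_hours <= max_hours]
    if not eligible:
        raise ValueError("no candidate meets the time constraint")
    return min(eligible, key=lambda r: r.cost_per_unit)


# Invented benchmark results for three candidates.
RUNS = [
    BenchmarkRun("candidate-a", price_per_hour=0.20, wall_hours=5.0, units_processed=1),
    BenchmarkRun("candidate-b", price_per_hour=0.30, wall_hours=3.0, units_processed=1),
    BenchmarkRun("candidate-c", price_per_hour=0.10, wall_hours=8.0, units_processed=1),
]

best = pick_instance(RUNS, max_hours=4.0)
print(f"{best.instance_type}: {best.cost_per_unit:.2f} per unit")
```

With a relaxed deadline the slower, cheaper candidate wins instead, which is why the time constraint belongs in the selection rule rather than being applied afterwards.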

Q2: We have petabytes of archived BAM/FASTQ data that we access rarely, but regulatory requirements demand we keep it for 10 years. Which cloud storage tier is most appropriate?

A: For long-term archival of NGS data with infrequent retrieval, use a cold or archival tier, such as Coldline or Archive on Google Cloud Storage or S3 Glacier Deep Archive on AWS. The cost savings versus Standard storage are substantial, but retrieval fees and times are higher.

| Storage Tier | Ideal Access Pattern | Minimum Storage Duration | Relative Storage Cost (per GB/Month) | Retrieval Cost & Time |

|---|---|---|---|---|

| Standard | Frequent, immediate access | None | 1.00 (Baseline) | Low, milliseconds |

| Nearline | Accessed <1x per month | 30 days | ~0.25 | Moderate, milliseconds |

| Coldline | Accessed <1x per quarter | 90 days | ~0.10 | Higher, milliseconds |

| Archive/Deep Archive | Accessed <1x per year | 180-365 days, depending on provider | ~0.05 | Highest; milliseconds to 12-48 hours, depending on provider |

Protocol for Implementing a Tiered Storage Strategy:

- Define Lifecycle Policy: Create rules based on file age and format. Example: Move FASTQ files to Coldline after 90 days of no access. Move finalized project BAMs to Archive after 1 year.

- Use Object Tags: Tag data with metadata like project-id, date, and sample-type to automate tiering policies.

- Retrieval Planning: For data in archival tiers, plan batch retrievals in advance to minimize costs and wait times.
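Before committing a lifecycle policy to the storage provider, it can be useful to dry-run the rules against an inventory of files. The sketch below encodes the two example rules from the protocol; the function name, its parameters, and the tier labels are assumptions, and a real policy would be written in the provider's own lifecycle configuration format.

```python
def target_tier(file_format: str, days_since_access: int, age_days: int,
                finalized: bool = False) -> str:
    """Apply the example rules: FASTQ idle 90+ days -> coldline,
    finalized BAM older than 1 year -> archive, otherwise standard."""
    fmt = file_format.lower()
    if fmt == "bam" and finalized and age_days >= 365:
        return "archive"
    if fmt == "fastq" and days_since_access >= 90:
        return "coldline"
    return "standard"


# Hypothetical inventory rows: (name, format, days since access, age, finalized).
INVENTORY = [
    ("sample1.fastq.gz", "fastq", 120, 200, False),
    ("sample2.fastq.gz", "fastq", 30, 200, False),
    ("project_x.final.bam", "bam", 10, 400, True),
    ("project_y.wip.bam", "bam", 10, 400, False),
]

for name, fmt, idle, age, done in INVENTORY:
    print(f"{name}: {target_tier(fmt, idle, age, done)}")
```

Counting how many objects fall into each tier gives an early estimate of the savings and of how much data would incur retrieval fees if the project were reopened.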

Q3: We want to use Spot Instances for our scalable somatic variant calling workflow (using Nextflow on Kubernetes), but we are worried about jobs failing due to instance termination. How can we design a resilient pipeline?

A: Spot instances offer 60-90% cost savings but can be reclaimed at short notice (a 2-minute warning on AWS; some providers give as little as 30 seconds). The key is to use checkpointing and fault-tolerant orchestration.

FAQs on Spot Instance Troubleshooting:

- Q: My job keeps failing and restarting from scratch, wasting time. What should I do?

A: Ensure your workflow engine (Nextflow, Snakemake) is configured to reuse completed work. In Nextflow, launching with the -resume flag reuses cached results, so a run interrupted by Spot termination skips tasks that already finished and re-executes only the unfinished ones (the interrupted task itself restarts from its beginning). Adding a retry policy (e.g., errorStrategy 'retry' with maxRetries in Nextflow) resubmits preempted tasks automatically.

- Q: How do I handle persistent disk data when a Spot node vanishes?

A: Never use local instance storage for critical intermediate data. Always use persistent, network-attached storage (e.g., AWS EBS, Google Persistent Disk) that detaches and reattaches to a new node if the original is reclaimed.

- Q: Can I specify a mix of instance types?

A: Yes. Use Flexible Instance Policies or Instance Groups that specify multiple instance families/sizes (e.g., both c5.large and c5a.large). This increases the pool of available Spot capacity and reduces the likelihood of mass terminations.
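The idea behind resuming after a Spot interruption can be illustrated without any workflow engine. The sketch below is a simplified stand-in, not how Nextflow or Snakemake is implemented: each finished task leaves a marker file on persistent storage, and a rerun skips every task whose marker exists. The task names, the Preempted exception, and run_with_resume are all hypothetical.

```python
import tempfile
from pathlib import Path

TASKS = ["align", "mark_duplicates", "call_variants"]


class Preempted(Exception):
    """Stands in for a task killed by Spot instance termination."""


def run_with_resume(tasks, state_dir: Path, run_task) -> list:
    """Run tasks in order, skipping any that already left a .done marker."""
    executed = []
    for name in tasks:
        marker = state_dir / f"{name}.done"
        if marker.exists():
            continue                  # finished in an earlier attempt
        run_task(name)                # may raise Preempted
        marker.touch()                # record completion on persistent storage
        executed.append(name)
    return executed


def demo():
    interrupted = set()

    def flaky(name):
        # Simulate one preemption during variant calling.
        if name == "call_variants" and name not in interrupted:
            interrupted.add(name)
            raise Preempted(name)

    with tempfile.TemporaryDirectory() as tmp:
        state = Path(tmp)
        try:
            run_with_resume(TASKS, state, flaky)
        except Preempted:
            pass
        done_before_retry = sorted(p.stem for p in state.glob("*.done"))
        rerun = run_with_resume(TASKS, state, flaky)
    return done_before_retry, rerun


done_before_retry, rerun = demo()
print("completed before interruption:", done_before_retry)
print("executed on resume:", rerun)
```

The sketch only works because the markers outlive the failed attempt, which is the same reason intermediate data must sit on storage that survives the node.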

Protocol for Deploying a Resilient Spot-Based Cluster:

- Configure Orchestrator: Set up a Kubernetes cluster with a managed node group (e.g., AWS EKS Managed Node Groups, GKE Node Pools) configured for 100% Spot instances.

- Define Instance Diversity: In the node group configuration, specify 4-5 different instance types with similar CPU/Memory profiles.

- Configure Workflow Engine: Launch your Nextflow pipeline with the Kubernetes executor. Ensure the pipeline process directives define reasonable memory and CPU limits to allow scheduling on various nodes.

- Persistent Storage: Create a ReadWriteMany (RWX) Persistent Volume Claim for your shared working directory.

Q4: Our multi-step NGS workflow has different compute requirements for each step (e.g., QC, alignment, post-processing). How can we optimize costs without manually managing each step?

A: Implement an auto-scaling, heterogeneous compute environment using a workflow orchestrator. This allows each pipeline stage to request the most cost-effective instance type for its needs.

Dynamic Instance Selection in NGS Workflow
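The selection logic an orchestrator applies per stage can be sketched as "cheapest instance that satisfies the stage's resource request". In the example below the catalog reuses the illustrative vCPU, memory, and relative-cost figures from the instance comparison earlier in this guide, while the per-stage CPU and memory requests are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InstanceType:
    name: str
    vcpus: int
    memory_gib: int
    relative_cost: float  # indexed, illustrative


@dataclass(frozen=True)
class Stage:
    name: str
    cpus: int
    memory_gib: int


def cheapest_fit(stage: Stage, catalog: list) -> InstanceType:
    """Return the lowest-cost instance that meets the stage's CPU and memory needs."""
    fits = [i for i in catalog
            if i.vcpus >= stage.cpus and i.memory_gib >= stage.memory_gib]
    if not fits:
        raise ValueError(f"no instance type fits stage {stage.name!r}")
    return min(fits, key=lambda i: i.relative_cost)


CATALOG = [
    InstanceType("general-purpose", vcpus=4, memory_gib=16, relative_cost=1.00),
    InstanceType("compute-optimized", vcpus=8, memory_gib=16, relative_cost=1.15),
    InstanceType("memory-optimized", vcpus=4, memory_gib=32, relative_cost=1.40),
]

# Hypothetical resource requests for the three stages named in the question.
STAGES = [
    Stage("qc", cpus=2, memory_gib=4),
    Stage("alignment", cpus=8, memory_gib=16),
    Stage("post-processing", cpus=4, memory_gib=24),
]

for stage in STAGES:
    print(f"{stage.name}: {cheapest_fit(stage, CATALOG).name}")
```

In practice the resource requests come from each process's CPU and memory directives, and the cluster autoscaler performs the matching; the sketch only makes the rule explicit.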

The Scientist's Toolkit: Research Reagent & Cloud Solutions

| Item / Solution | Function in Bioinformatics Research |

|---|---|

| Workflow Orchestrator (Nextflow, Snakemake) | Defines, manages, and executes complex, reproducible data analysis pipelines across heterogeneous cloud compute. |

| Container Technology (Docker, Singularity) | Packages software, dependencies, and environment into a portable unit, ensuring consistent analysis across any cloud instance. |

| Persistent Block Storage (EBS, Persistent Disk) | Provides durable, network-attached storage for reference genomes, intermediate files, and results, surviving instance termination. |

| Object Storage w/ Lifecycle Policies (S3, Cloud Storage) | Scalable, durable storage for raw data (FASTQ) and final results, with automated tiering to Cold/Archive for cost savings. |

| Managed Kubernetes Service (EKS, GKE, AKS) | Provides a resilient cluster for running containerized pipelines, with automatic scaling and support for Spot instance nodes. |

| Spot Instance / Preemptible VM | Drastically reduces compute cost for fault-tolerant batch processing jobs like read alignment and variant calling. |

| Cost Management & Budgeting Tools | Sets alerts and tracks spending across projects, storage, and compute services to prevent budget overruns. |

Technical Support Center: Troubleshooting & FAQs

FAQ 1: Why does my Nextflow/Snakemake pipeline fail when I move it to a high-performance computing (HPC) cluster, even with a container?

- Answer: This is a common issue due to environment and filesystem differences. The primary culprits are:

- Singularity & Bind Paths: Singularity on HPCs often has restricted bind mounts. Your pipeline may reference paths (/home/user, /project) that are not automatically available inside the container. Use the Singularity --bind flag or configure it within your Nextflow/Snakemake profile (singularity.runOptions = "--bind /path/to/data").

- Resource Manager: Your